Training a language model is memory-intensive, not only because the model itself is large but also because training batches often contain long sequences. Training a model with limited memory is challenging. In this article, you will learn techniques that enable model training in memory-constrained environments. In particular, you will learn about:

- Low-precision floating-point numbers and mixed-precision training
- Using gradient checkpointing

Let's get started!

Training a Model with Limited Memory using Mixed Precision and Gradient Checkpointing. Photo by Meduana. Some rights reserved.

Overview

This article is divided into three parts; they are:

- Floating-Point Numbers
- Automatic Mixed Precision Training
- Gradient Checkpointing

Floating-Point Numbers

The default data type in PyTorch is the IEEE 754 32-bit floating-point format, also known as single precision. It is not the only floating-point type you can use. For example, most CPUs support 64-bit double-precision floating-point, and GPUs often support half-precision floating-point as well. The table below lists some floating-point types:

| Data Type | PyTorch Type | Total Bits | Sign Bit | Exponent Bits | Mantissa Bits | Min Value | Max Value | eps |
|---|---|---|---|---|---|---|---|---|
| IEEE 754 double precision | torch.float64 | 64 | 1 | 11 | 52 | -1.79769e+308 | 1.79769e+308 | 2.22045e-16 |
| IEEE 754 single precision | torch.float32 | 32 | 1 | 8 | 23 | -3.40282e+38 | 3.40282e+38 | 1.19209e-07 |
| IEEE 754 half precision | torch.float16 | 16 | 1 | 5 | 10 | -65504 | 65504 | 0.000976562 |
| bf16 | torch.bfloat16 | 16 | 1 | 8 | 7 | -3.38953e+38 | 3.38953e+38 | 0.0078125 |
| fp8 (e4m3) | torch.float8_e4m3fn | 8 | 1 | 4 | 3 | -448 | 448 | 0.125 |
| fp8 (e5m2) | torch.float8_e5m2 | 8 | 1 | 5 | 2 | -57344 | 57344 | 0.25 |
| fp8 (e8m0, unsigned) | torch.float8_e8m0fnu | 8 | 0 | 8 | 0 | 5.87747e-39 | 1.70141e+38 | 1.0 |
| fp6 (e3m2) | | 6 | 1 | 3 | 2 | -28 | 28 | 0.25 |
| fp6 (e2m3) | | 6 | 1 | 2 | 3 | -7.5 | 7.5 | 0.125 |
| fp4 (e2m1) | | 4 | 1 | 2 | 1 | -6 | 6 | |

Floating-point numbers are binary representations of real numbers. Each consists of a sign bit, several bits for the exponent, and several bits for the mantissa.
They are laid out as shown in the figure below. When sorted by their binary representation, positive floating-point numbers retain their order by real-number value.

Floating-point number representation. Figure from Wikimedia.

Different floating-point types have different ranges and precisions. Not all types are supported by all hardware. For example, fp4 is only supported in Nvidia's Blackwell architecture. PyTorch supports only a few data types. You can run the following code to print information about various floating-point types:

    import torch
    from tabulate import tabulate

    # float types:
    float_types = [
        torch.float64,
        torch.float32,
        torch.float16,
        torch.bfloat16,
        torch.float8_e4m3fn,
        torch.float8_e5m2,
        torch.float8_e8m0fnu,
    ]

    # collect finfo for each type
    table = []
    for dtype in float_types:
        info = torch.finfo(dtype)
        try:
            typename = info.dtype
        except Exception:
            # fall back to the dtype's own name if finfo does not report one
            typename = str(dtype)
        table.append([typename, info.max, info.min, info.smallest_normal, info.eps])

    headers = ['data type', 'max', 'min', 'smallest normal', 'eps']
    print(tabulate(table, headers=headers))

Pay attention to the min and max values for each type, as well as the eps value. The min and max values indicate the range a type can support (the dynamic range). If you train a model with such a type and a value falls outside this range, you get overflow or underflow, which usually causes the model to output NaN or Inf.
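The range limits are easy to see in a small experiment. The sketch below is only an illustration and uses Python's standard library rather than PyTorch: the `struct` format `e` round-trips a value through IEEE 754 half precision, and bfloat16 is emulated by rounding a float32 bit pattern to its upper 16 bits. The helper names `to_float16` and `to_bfloat16` are made up for this example and are not part of any library.

```python
import math
import struct


def to_float16(x: float) -> float:
    """Round-trip x through IEEE 754 half precision (struct format 'e')."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except OverflowError:
        # struct refuses values beyond the float16 range; a tensor library
        # would typically store +/-inf in this case instead
        return math.copysign(math.inf, x)


def to_bfloat16(x: float) -> float:
    """Emulate bfloat16 by keeping the upper 16 bits of the float32 pattern.

    The discarded bits are rounded to nearest, ties to even. This sketch
    assumes x is finite and within the float32 range.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    bits = (bits + 0x7FFF + ((bits >> 16) & 1)) & 0xFFFF0000
    return struct.unpack("<f", struct.pack("<I", bits))[0]


for value in (70000.0, 1e-8):
    print(value, to_float16(value), to_bfloat16(value))
```

With these helpers, 70000 is beyond the float16 maximum of 65504 and becomes `inf`, while the bfloat16 emulation keeps it as the nearby representable value 70144. Likewise, 1e-8 underflows to 0 in float16 but survives, with reduced precision, in bfloat16.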
The eps value is the smallest positive number such that the type can differentiate between 1+eps and 1. This is a metric for precision. If a gradient update is smaller than eps relative to the weight it modifies, the update is rounded away, and you will likely observe symptoms similar to the vanishing gradient problem.

Therefore, float32 is a good default choice for deep learning: it has a wide dynamic range and high precision. However, each float32 number requires 4 bytes of memory. As a compromise, you can use float16 to save memory, but you are likely to encounter overflow or underflow issues since the dynamic range is much smaller.

The Google Brain team identified this problem and proposed bfloat16, a 16-bit floating-point format with the same dynamic range as float32. As a trade-off, the precision is roughly an order of magnitude worse than float16 (compare the eps values in the table). It turns out that dynamic range is more important than precision for deep learning, making bfloat16 highly useful.

When you create a tensor in PyTorch, you can specify the data type. For example:

    x = torch.tensor([1.0, 2.0, 3.0], dtype=torch.float16)
    print(x)

There is a straightforward way to change the default to a different type, such as bfloat16. This is handy for model training. All you need to do is set the following line before you create any model or optimizer:

    # set default dtype to bfloat16
    torch.set_default_dtype(torch.bfloat16)

Just by doing this, you force all your model weights and gradients to be bfloat16 type. This halves the memory they need compared with float32. In the previous article, you were advised to set the batch size to 8 to fit a GPU with only 12GB of VRAM. With bfloat16, you should be able to set the batch size to 16.

Note that using 8-bit float or lower-precision types may not work. This is because you need both the hardware and PyTorch to support the corresponding mathematical operations.
You can try the following code (requires a CUDA device) and find that you will need extra effort to operate on 8-bit float:

    dtype = torch.float8_e4m3fn

    # Creating a random tensor directly in float8 fails with
    # NotImplementedError: "normal_kernel_cuda" not implemented for 'Float8_e4m3fn'
    x = torch.randn(16, 16, dtype=dtype, device="cuda")

    # Creating the tensor in float32 and converting it to float8 works
    x = torch.randn(16, 16, device="cuda").to(dtype)

    # But matmul is not supported. You will see
    # NotImplementedError: "addmm_cuda" not implemented for 'Float8_e4m3fn'
    y = x @ x.T

    # The correct way to run matrix multiplication on 8-bit

Can Public Wi-Fi At Railway Stations Expose Your Online Search? Here’s How To Protect Your Sensitive Data | Technology News

Public Wi-Fi Safety: You're at your favourite cafe or a railway station, sipping coffee and logging onto the free public Wi-Fi. It feels convenient, fast, and easy. But have you ever wondered who might be watching your online activity? Every click, search, and login could leave a digital trail. Public Wi-Fi networks are like open windows into your online life, and while they promise connection, they might also invite unseen eyes. Can the owner of that cafe or any public Wi-Fi provider actually see what you are doing online? In this article, we will uncover the truth behind these invisible spectators.

Public Wi-Fi Networks: ISPs Monitor All Unencrypted Traffic

Public Wi-Fi is everywhere these days, from metro stations to airports, because our devices always need an internet connection. However, internet service providers (ISPs) can see all unencrypted activity on their networks, meaning every click, search, and login can be logged. This does not mean they are constantly watching, but the possibility is real, which is why browsing personal content on company Wi-Fi can be risky. Where you connect also matters: airports and railway stations may have extra security to detect unusual activity, yet their high traffic can make these networks less safe than smaller public Wi-Fi spots.

Are Public Wi-Fi Connections Safe?

Public Wi-Fi is not as safe as private networks because it often has no password and weak encryption. This makes it an easy target for "man-in-the-middle" attacks, where hackers can intercept your internet data. On unencrypted connections, they might capture sensitive information like credit card numbers and passwords. When a site uses HTTPS, they can typically still see which websites you visit, even though they cannot see exactly what you do on those sites. On top of that, some cybercriminals create "fake hotspots" that look real. If you connect to them, they can quietly monitor your activity and steal your data without you even realizing it.

Public Wi-Fi Safety: How To Protect Your Sensitive Data

- Always use a trusted VPN like Norton or Surfshark on public Wi-Fi to keep your data encrypted and safe from snoopers.
- Turn off auto-connect and file or printer sharing to avoid unauthorized access.
- Always confirm the network name with staff to avoid fake hotspots.
- Keep your device firewall on and enable two-factor authentication for extra protection.
- Avoid sensitive activities like online banking or shopping, log out after use, and clear your browser cache to remove any traces of your activity.

These steps help you stay secure on open networks.

5 Python Libraries for Advanced Time Series Forecasting

Introduction

Predicting the future has always been the holy grail of analytics. Whether it is optimizing supply chain logistics, managing energy grid loads, or anticipating financial market volatility, time series forecasting is often the engine driving critical decision-making. However, while the concept is simple (using historical data to predict future values), the execution is notoriously difficult. Real-world data rarely adheres to the clean, linear trends found in introductory textbooks.

Fortunately, Python's ecosystem has evolved to meet this demand. The landscape has shifted from purely statistical packages to a rich array of libraries that integrate deep learning, machine learning pipelines, and classical econometrics. But with so many options, choosing the right framework can be overwhelming.

This article cuts through the noise to focus on 5 powerhouse Python libraries designed specifically for advanced time series forecasting. We move beyond the basics to explore tools capable of handling high-dimensional data, complex seasonality, and exogenous variables. For each library, we provide a high-level overview of its standout features and a concise "Hello World" code snippet to help you get started immediately.

1. Statsmodels

statsmodels provides best-in-class models for non-stationary and multivariate time series forecasting, primarily based on methods from statistics and econometrics. It also offers explicit control over seasonality, exogenous variables, and trend components.
This example shows how to import and use the library's SARIMAX model (Seasonal AutoRegressive Integrated Moving Average with eXogenous regressors):

    from statsmodels.tsa.statespace.sarimax import SARIMAX

    model = SARIMAX(y, exog=X, order=(1,1,1), seasonal_order=(1,1,1,12))
    res = model.fit()
    forecast = res.forecast(steps=12, exog=X_future)

2. Sktime

Fan of scikit-learn? Good news! sktime mimics the popular machine learning library's style framework-wise, and it is suited for advanced forecasting tasks, enabling panel and multivariate forecasting through machine-learning model reduction and pipeline composition.

For instance, the make_reduction() function takes a machine-learning model as a base component and applies recursion to perform predictions multiple steps ahead. Note that fh is the "forecasting horizon," allowing prediction of n steps, and X_future is meant to contain future values for exogenous attributes, should the model utilize them.

    from sktime.forecasting.compose import make_reduction
    from sklearn.ensemble import RandomForestRegressor

    forecaster = make_reduction(RandomForestRegressor(), strategy="recursive")
    forecaster.fit(y_train, X_train)
    y_pred = forecaster.predict(fh=[1,2,3], X=X_future)

3. Darts

The Darts library stands out for its simplicity compared to other frameworks. Its high-level API combines classical and deep learning models to address probabilistic and multivariate forecasting problems. It also captures past and future covariates effectively.
This example shows how to use Darts' implementation of the N-BEATS model (Neural Basis Expansion Analysis for Interpretable Time Series Forecasting), an accurate choice to handle complex temporal patterns.

    from darts.models import NBEATSModel

    model = NBEATSModel(input_chunk_length=24, output_chunk_length=12, n_epochs=10)
    model.fit(series, verbose=True)
    forecast = model.predict(n=12)

5 Python Libraries for Advanced Time Series Forecasting: A Simple Comparison. Image by Editor.

4. PyTorch Forecasting

For high-dimensional and large-scale forecasting problems with massive data, PyTorch Forecasting is a solid choice that incorporates state-of-the-art forecasting models like Temporal Fusion Transformers (TFT), as well as tools for model interpretability.

The following code snippet illustrates, in a simplified fashion, the use of a TFT model. Although not explicitly shown, models in this library are typically instantiated from a TimeSeriesDataSet (in the example, dataset would play that role). The model is a Lightning module, so it is trained through a Lightning Trainer rather than a fit() method of its own.

    # The Lightning import path depends on the installed version;
    # older setups use `import pytorch_lightning as pl` instead.
    import lightning.pytorch as pl
    from pytorch_forecasting import TemporalFusionTransformer

    tft = TemporalFusionTransformer.from_dataset(dataset)
    trainer = pl.Trainer(max_epochs=10)
    trainer.fit(tft, train_dataloaders=train_dataloader, val_dataloaders=val_dataloader)
    pred = tft.predict(val_dataloader)

5. GluonTS

Lastly, GluonTS is a deep learning-based library that specializes in probabilistic forecasting, making it ideal for handling uncertainty in large time series datasets, including those with non-stationary characteristics.
We wrap up with an example that shows how to import GluonTS modules and classes and train a Deep Autoregressive model (DeepAR) for probabilistic time series forecasting, which predicts a distribution of possible future values rather than a single point forecast:

    # Import paths vary across GluonTS releases; newer versions keep the
    # MXNet-based estimator under gluonts.mx.model.deepar.
    from gluonts.model.deepar import DeepAREstimator
    from gluonts.mx.trainer import Trainer

    estimator = DeepAREstimator(freq="D", prediction_length=14, trainer=Trainer(epochs=5))
    predictor = estimator.train(train_data)

Wrapping Up

Choosing the right tool from this arsenal depends on your specific trade-offs between interpretability, training speed, and the scale of your data. While classical libraries like Statsmodels offer statistical rigor, modern frameworks like Darts and GluonTS are pushing the boundaries of what deep learning can achieve with temporal data.

There is rarely a "one-size-fits-all" solution in advanced forecasting, so we encourage you to use these snippets as a launchpad for benchmarking multiple approaches against one another. Experiment with different architectures and exogenous variables to see which library best captures the nuances of your signals.

The tools are available; now it's time to turn that historical noise into actionable future insights.
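The benchmarking suggested above can be sketched without any of the five libraries. The example below is a minimal illustration, not a recommended evaluation protocol: it compares two simple baselines with rolling-origin (expanding-window) evaluation and mean absolute error on a synthetic series. All names here (`naive_forecast`, `seasonal_naive_forecast`, `rolling_origin_mae`) are hypothetical helpers written for this sketch.

```python
from statistics import mean


def naive_forecast(history: list[float], horizon: int) -> list[float]:
    """Repeat the last observed value for every future step."""
    return [history[-1]] * horizon


def seasonal_naive_forecast(history: list[float], horizon: int, period: int = 12) -> list[float]:
    """Repeat the value observed one season (period steps) earlier."""
    return [history[-period + (h % period)] for h in range(horizon)]


def mae(actual: list[float], predicted: list[float]) -> float:
    """Mean absolute error between two equally long sequences."""
    return mean(abs(a - p) for a, p in zip(actual, predicted))


def rolling_origin_mae(series: list[float], forecaster, horizon: int = 3, min_train: int = 24) -> float:
    """Average the MAE over expanding training windows."""
    errors = []
    for cutoff in range(min_train, len(series) - horizon + 1):
        train, test = series[:cutoff], series[cutoff:cutoff + horizon]
        errors.append(mae(test, forecaster(train, horizon)))
    return mean(errors)


# Synthetic monthly series: linear trend plus a repeating 12-step pattern
pattern = [0, 2, 5, 9, 12, 14, 15, 13, 10, 6, 3, 1]
series = [0.1 * t + pattern[t % 12] for t in range(60)]

scores = {
    "naive": rolling_origin_mae(series, naive_forecast),
    "seasonal naive": rolling_origin_mae(series, seasonal_naive_forecast),
}
for name, score in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{name}: MAE = {score:.3f}")
```

To benchmark a real library, one option is to wrap its fit-and-predict calls in a function with the same `(history, horizon)` signature and add it to `scores`. On this synthetic series the seasonal baseline wins because its only error is the trend accumulated over one season.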

Viksit Bharat Young Leaders 2026: Why NSA Ajit Doval Avoids Mobile Phones And Internet; Know About His Career | Technology News

Viksit Bharat Young Leaders 2026: In a world where mobile phones and the internet are an important part of daily life, National Security Advisor Ajit Doval shared a surprising detail about his personal habits. Speaking at the inaugural session of the Viksit Bharat Young Leaders Dialogue 2026 on Saturday, he addressed the youth and spoke about the high price India paid for its independence, with many generations facing hardship and loss.

During a question-and-answer session, the former Indian intelligence and law enforcement officer revealed that he still does not use a mobile phone or the internet. This statement quickly caught attention online and left many people curious about how a top security expert works in today's digital world.

Why India's Top Security Chief Avoids Mobile Phones And Internet

During the Q&A session at Bharat Mandapam, NSA Ajit Doval was asked if he really avoids using mobile phones and the internet. He smiled and confirmed that it was true. Doval explained that phones and the internet are not the only ways to communicate and that there are other methods most people are not aware of. He added that he only uses phones or the internet in special situations, such as talking to family or connecting with people abroad. Ajit Doval also shared an important lesson: patience is essential, and messages should be communicated honestly without using propaganda.

Who Is Ajit Doval and His Achievements

Ajit Kumar Doval is the fifth and current National Security Advisor (NSA) to the Prime Minister of India. He is a retired Indian Police Service (IPS) officer of the Kerala cadre and has worked in Indian intelligence and law enforcement. He was born in Uttarakhand in 1945 and became the youngest police officer in India to receive the Kirti Chakra, a bravery award for his service.
Doval played an important role in India's September 2016 surgical strikes and the February 2019 Balakot airstrikes in Pakistan. He also helped resolve the Doklam standoff and took strong steps to fight insurgency in Northeast India.

Ajit Doval's Career

Ajit Doval began his police career in 1968 as an IPS officer. He worked actively in fighting insurgency in Mizoram and Punjab. In 1999, he was one of three negotiators who helped release passengers from the hijacked IC-814 plane in Kandahar. Between 1971 and 1999, he successfully handled at least 15 hijacking cases of Indian Airlines aircraft. Ajit Doval is said to have spent seven years working undercover in Pakistan, gathering important information on active militant groups. After one year as a secret agent, he worked at the Indian High Commission in Islamabad for six years.

Ajit Doval's Powerful Message To Youth

Addressing the gathering, Ajit Doval urged young people to learn from history and work towards building a strong and great India based on its values, rights, and beliefs. Looking back at India's past, he said the country once had a highly advanced civilization.

How To Delete OpenAI’s ChatGPT History From Android, iOS Devices And Laptops: Step-By-Step Guide | Technology News

OpenAI's ChatGPT History Delete: Are you one of the millions of people using OpenAI's ChatGPT every day? AI chatbots have now become a regular part of daily life, helping users save time and complete tasks more easily. From writing a quick email to creating a full article, generating ideas on a topic, or exploring different angles for a story, ChatGPT can do it all. However, as more people rely on this powerful tool, an important question comes up: where does your data go?

By default, ChatGPT saves your chat history, which may include personal and work-related information. One question naturally comes to mind: is there a way to delete the data stored in ChatGPT? The answer is yes, and the process is quite simple. With just a few steps, you can clear your chat history and take control of your privacy.

But there's another side to the story. Experts warn that relying too heavily on AI tools can have drawbacks. Over time, constant dependence on AI may reduce your own thinking ability, creativity, and problem-solving skills, making it important to use these tools wisely rather than endlessly.

How To Delete Your OpenAI ChatGPT Account From An Android Or iOS Device

Step 1: Open the ChatGPT app on your Android or iOS device and log in using your email ID.
Step 2: Tap the two horizontal lines in the top-left corner to open the menu.
Step 3: Select your profile to access ChatGPT settings.
Step 4: Scroll down and tap on Data Controls.
Step 5: Choose Delete OpenAI Account and follow the instructions to permanently delete your account.

How To Delete Your OpenAI ChatGPT Account From Your PC Or Laptop

Step 1: Open ChatGPT on your laptop using any web browser and log in with your email account.
Step 2: Look at the bottom-left corner of the screen and click on your profile icon.
Step 3: From the menu, select Settings.
Step 4: In the Settings window, click on the Account option.
Step 5: Scroll down and select Delete Account, then follow the on-screen instructions to confirm.

It is important to note that deleting your ChatGPT account is permanent and cannot be reversed, so make sure you are certain before going ahead. If you have an active subscription through the Google Play Store, you will need to cancel it separately, as deleting your account will not cancel the subscription. Once the account is deleted, you won't be able to sign up again using the same email address or phone number. All your data across OpenAI apps will be erased. After you click on "Delete," it may take up to 30 days for all your data to be fully removed.

D2M Broadcast Technology: Watch Movies, Live Streaming On Your Phone Without Internet After THIS Tech-Upgrade – EXPLAINED | Technology News

Direct-to-Mobile (D2M) broadcast technology is a new technology under development that will allow television and multimedia content to be sent directly to mobile phones without using mobile data or cellular networks. Instead of relying on 4G or 5G, D2M will work like traditional TV broadcasting, enabling users to watch live channels even without an internet connection. The technology is expected to be useful during emergencies, reduce network congestion, and expand access to free-to-air public broadcasts.

What Is D2M Technology?

D2M technology works in a similar way to FM radio and direct-to-home (DTH) television services. Just as FM radio stations send signals that are received by radios on specific frequencies, D2M will broadcast signals that can be received directly by smartphones. And just as DTH sends satellite signals to a dish antenna and on to a set-top box, D2M will send content straight to mobile devices. The technology will use terrestrial transmission infrastructure along with a dedicated spectrum to deliver signals directly to smartphones, without needing an internet connection.

Spectrum Allocation for D2M

The government plans to reserve the 470–582 MHz frequency band for D2M services. This spectrum will be specifically set aside to support the rollout of this new broadcast technology across the country, according to Information and Broadcasting Secretary Apurva Chandra, quoted by the Times of India.

Live TV Without Internet

One of the key benefits of D2M is that users will be able to watch live TV content, such as sports matches and news, without using mobile data. This could be especially useful in areas with poor internet connectivity or during situations where networks are overloaded.
Government Push

In June last year, IIT Kanpur released a white paper on D2M broadcasting in collaboration with Prasar Bharati and the Telecommunications Development Society. Later, in August 2023, the Ministry of Communications listed several use cases for D2M, including content delivery, education, and sharing important information during emergencies and disasters.

Benefits for Users and Networks

The government believes D2M can shift around 25–30% of video traffic away from mobile networks, helping reduce pressure on 5G services. According to reports, with nearly 80 crore smartphones in India and 69% of consumed content being video, the technology could play a major role in easing network congestion.

Train Your Large Model on Multiple GPUs with Pipeline Parallelism

    import dataclasses
    import os

    import datasets
    import tokenizers
    import torch
    import torch.distributed as dist
    import torch.nn as nn
    import torch.nn.functional as F
    import torch.optim.lr_scheduler as lr_scheduler
    import tqdm
    from torch import Tensor
    from torch.distributed.checkpoint import load, save
    from torch.distributed.checkpoint.state_dict import StateDictOptions, get_state_dict, set_state_dict
    from torch.distributed.pipelining import PipelineStage, ScheduleGPipe


    # Build the model
    @dataclasses.dataclass
    class LlamaConfig:
        """Define Llama model hyperparameters."""
        vocab_size: int = 50000  # Size of the tokenizer vocabulary
        max_position_embeddings: int = 2048  # Maximum sequence length
        hidden_size: int = 768  # Dimension of hidden layers
        intermediate_size: int = 4*768  # Dimension of MLP's hidden layer
        num_hidden_layers: int = 12  # Number of transformer layers
        num_attention_heads: int = 12  # Number of attention heads
        num_key_value_heads: int = 3  # Number of key-value heads for GQA


    class RotaryPositionEncoding(nn.Module):
        """Rotary position encoding."""

        def __init__(self, dim: int, max_position_embeddings: int) -> None:
            """Initialize the RotaryPositionEncoding module.

            Args:
                dim: The hidden dimension of the input tensor to which RoPE is applied
                max_position_embeddings: The maximum sequence length of the input tensor
            """
            super().__init__()
            self.dim = dim
            self.max_position_embeddings = max_position_embeddings
            # compute a matrix of n\theta_i
            N = 10_000.0
            inv_freq = 1.0 / (N ** (torch.arange(0, dim, 2) / dim))
            inv_freq = torch.cat((inv_freq, inv_freq), dim=-1)
            position = torch.arange(max_position_embeddings)
            sinusoid_inp = torch.outer(position, inv_freq)
            # save cosine and sine matrices as buffers, not parameters
            self.register_buffer("cos", sinusoid_inp.cos())
            self.register_buffer("sin", sinusoid_inp.sin())

        def forward(self, x: Tensor) -> Tensor:
            """Apply RoPE to tensor x.

            Args:
                x: Input tensor of shape (batch_size, seq_length, num_heads, head_dim)

            Returns:
                Output tensor of shape (batch_size, seq_length, num_heads, head_dim)
            """
            batch_size, seq_len, num_heads, head_dim = x.shape
            dtype = x.dtype
            # transform the cosine and sine matrices to 4D tensor and the same dtype as x
            cos = self.cos.to(dtype)[:seq_len].view(1, seq_len, 1, -1)
            sin = self.sin.to(dtype)[:seq_len].view(1, seq_len, 1, -1)
            # apply RoPE to x
            x1, x2 = x.chunk(2, dim=-1)
            rotated = torch.cat((-x2, x1), dim=-1)
            output = (x * cos) + (rotated * sin)
            return output


    class LlamaAttention(nn.Module):
        """Grouped-query attention with rotary embeddings."""

        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.hidden_size = config.hidden_size
            self.num_heads = config.num_attention_heads
            self.head_dim = self.hidden_size // self.num_heads
            self.num_kv_heads = config.num_key_value_heads  # GQA: H_kv < H_q

            # hidden_size must be divisible by num_heads
            assert (self.head_dim * self.num_heads) == self.hidden_size

            # Linear layers for Q, K, V projections
            self.q_proj = nn.Linear(self.hidden_size, self.num_heads * self.head_dim, bias=False)
            self.k_proj = nn.Linear(self.hidden_size, self.num_kv_heads * self.head_dim, bias=False)
            self.v_proj = nn.Linear(self.hidden_size, self.num_kv_heads * self.head_dim, bias=False)
            self.o_proj = nn.Linear(self.num_heads * self.head_dim, self.hidden_size, bias=False)

        def forward(self, hidden_states: Tensor, rope: RotaryPositionEncoding) -> Tensor:
            bs, seq_len, dim = hidden_states.size()

            # Project inputs to Q, K, V
            query_states = self.q_proj(hidden_states).view(bs, seq_len, self.num_heads, self.head_dim)
            key_states = self.k_proj(hidden_states).view(bs, seq_len, self.num_kv_heads, self.head_dim)
            value_states = self.v_proj(hidden_states).view(bs, seq_len, self.num_kv_heads, self.head_dim)

            # Apply rotary position embeddings
            query_states = rope(query_states)
            key_states = rope(key_states)

            # Transpose tensors from BSHD to BHSD dimension for scaled_dot_product_attention
            query_states = query_states.transpose(1, 2)
            key_states = key_states.transpose(1, 2)
            value_states = value_states.transpose(1, 2)

            # Use PyTorch's optimized attention implementation
            # setting is_causal=True is incompatible with setting explicit attention mask
            attn_output = F.scaled_dot_product_attention(
                query_states,
                key_states,
                value_states,
                is_causal=True,
                dropout_p=0.0,
                enable_gqa=True,
            )

            # Transpose output tensor from BHSD to BSHD dimension, reshape to 3D, and then project output
            attn_output = attn_output.transpose(1, 2).reshape(bs, seq_len, self.hidden_size)
            attn_output = self.o_proj(attn_output)
            return attn_output


    class LlamaMLP(nn.Module):
        """Feed-forward network with SwiGLU activation."""

        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            # Two parallel projections for SwiGLU
            self.gate_proj = nn.Linear(config.hidden_size, config.intermediate_size, bias=False)
            self.up_proj = nn.Linear(config.hidden_size, config.intermediate_size, bias=False)
            self.act_fn = F.silu  # SwiGLU activation function
            # Project back to hidden size
            self.down_proj = nn.Linear(config.intermediate_size, config.hidden_size, bias=False)

        def forward(self, x: Tensor) -> Tensor:
            # SwiGLU activation: multiply gate and up-projected inputs
            gate = self.act_fn(self.gate_proj(x))
            up = self.up_proj(x)
            return self.down_proj(gate * up)


    class LlamaDecoderLayer(nn.Module):
        """Single transformer layer for a Llama model."""

        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.input_layernorm = nn.RMSNorm(config.hidden_size, eps=1e-5)
            self.self_attn = LlamaAttention(config)
            self.post_attention_layernorm = nn.RMSNorm(config.hidden_size, eps=1e-5)
            self.mlp = LlamaMLP(config)

        def forward(self, hidden_states: Tensor, rope: RotaryPositionEncoding) -> Tensor:
            # First residual block: Self-attention
            residual = hidden_states
            hidden_states = self.input_layernorm(hidden_states)
            attn_outputs = self.self_attn(hidden_states, rope=rope)
            hidden_states = attn_outputs + residual

            # Second residual block: MLP
            residual = hidden_states
            hidden_states = self.post_attention_layernorm(hidden_states)
            hidden_states = self.mlp(hidden_states) + residual
            return hidden_states


    class LlamaModel(nn.Module):
        """The full Llama model without any pretraining heads."""

        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.rope = RotaryPositionEncoding(
                config.hidden_size // config.num_attention_heads,
                config.max_position_embeddings,
            )

            self.embed_tokens = nn.Embedding(config.vocab_size, config.hidden_size)
            self.layers = nn.ModuleDict({
                str(i): LlamaDecoderLayer(config) for i in range(config.num_hidden_layers)
            })
            self.norm = nn.RMSNorm(config.hidden_size, eps=1e-5)

        def forward(self, input_ids: Tensor) -> Tensor:
            # Convert input token IDs to embeddings
            if self.embed_tokens is not None:
                hidden_states = self.embed_tokens(input_ids)
            else:
                hidden_states = input_ids
            # Process through all transformer layers, then the final norm layer
            for n in range(len(self.layers)):
                if self.layers[str(n)] is not None:
                    hidden_states = self.layers[str(n)](hidden_states, self.rope)
            if self.norm is not None:
                hidden_states = self.norm(hidden_states)
            # Return the final hidden states
            return hidden_states


    class LlamaForPretraining(nn.Module):
        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.base_model = LlamaModel(config)
            self.lm_head = nn.Linear(config.hidden_size, config.vocab_size, bias=False)

        def forward(self, input_ids: Tensor) -> Tensor:
            hidden_states = self.base_model(input_ids)
            if self.lm_head is not None:
                hidden_states = self.lm_head(hidden_states)
            return hidden_states


    # Dataset that produces padded sequences of fixed length
    class PretrainingDataset(torch.utils.data.Dataset):
        def __init__(self, dataset: datasets.Dataset, tokenizer: tokenizers.Tokenizer,
                     seq_length: int, device: torch.device = None):
            self.dataset = dataset
            self.tokenizer = tokenizer
            self.device = device
            self.seq_length = seq_length
            self.bot = tokenizer.token_to_id("[BOT]")
            self.eot = tokenizer.token_to_id("[EOT]")
            self.pad = tokenizer.token_to_id("[PAD]")

        def __len__(self):
            return len(self.dataset)

        def __getitem__(self, index):
            """Get a sequence of token ids from the dataset. [BOT] and [EOT] tokens
            are added. Clipped and padded to the sequence length.
            """
            seq = self.dataset[index]["text"]
            tokens: list[int] = [self.bot] + self.tokenizer.encode(seq).ids + [self.eot]
            # pad to target sequence length
            toklen = len(tokens)
            if toklen < self.seq_length+1:
                pad_length = self.seq_length+1 - toklen
                tokens += [self.pad] * pad_length
            # return the sequence
            x = torch.tensor(tokens[:self.seq_length], dtype=torch.int64, device=self.device)
            y = torch.tensor(tokens[1:self.seq_length+1], dtype=torch.int64, device=self.device)
            return x, y


    def load_checkpoint(model: nn.Module, optimizer: torch.optim.Optimizer) -> None:
        dist.barrier()
        model_state, optimizer_state = get_state_dict(
            model, optimizer, options=StateDictOptions(full_state_dict=True),
        )
        load(
            {"model": model_state, "optimizer":

WhatsApp Adds Member Tags And Event Reminders To Fix Group Chat Chaos – New Features Explained

WhatsApp New Features: WhatsApp has rolled out several new updates to make group chats more organised and expressive. Since many users depend on group chats for planning events, running communities, and staying in touch, the app has now refined how people communicate in shared conversations.

The biggest update is the introduction of member tags. This feature lets users add custom labels to their names within a group. For example, someone can appear as “Anna’s Dad” in a school group, “Secretary” in a society group, or “Striker” in a football chat. These labels are visible only inside that group. Users can choose different tags for different groups. WhatsApp said the feature will be available gradually to users worldwide.

WhatsApp is also adding new ways to express yourself. With text stickers, users can now turn any word or phrase into a sticker by typing it into the sticker search bar. These stickers can be saved directly to the sticker pack without sending them first. This makes it quicker to reuse favourite expressions without depending only on emojis or GIFs.

Group planning is also getting simpler. WhatsApp now allows custom event reminders inside group chats. Users can set early alerts while creating events. This helps reduce missed calls, late arrivals, or forgotten plans. The reminders work for both physical meetups and online events.

These updates add to WhatsApp’s existing group features. Users can already share files up to 2GB, send HD photos and videos, share screens during calls, and start voice chats without making a formal call. WhatsApp says these changes are part of its effort to improve the group chat experience across devices. As group conversations play a bigger role in daily life, the app is clearly aiming to make chats more organised, clearer, and more user-friendly.

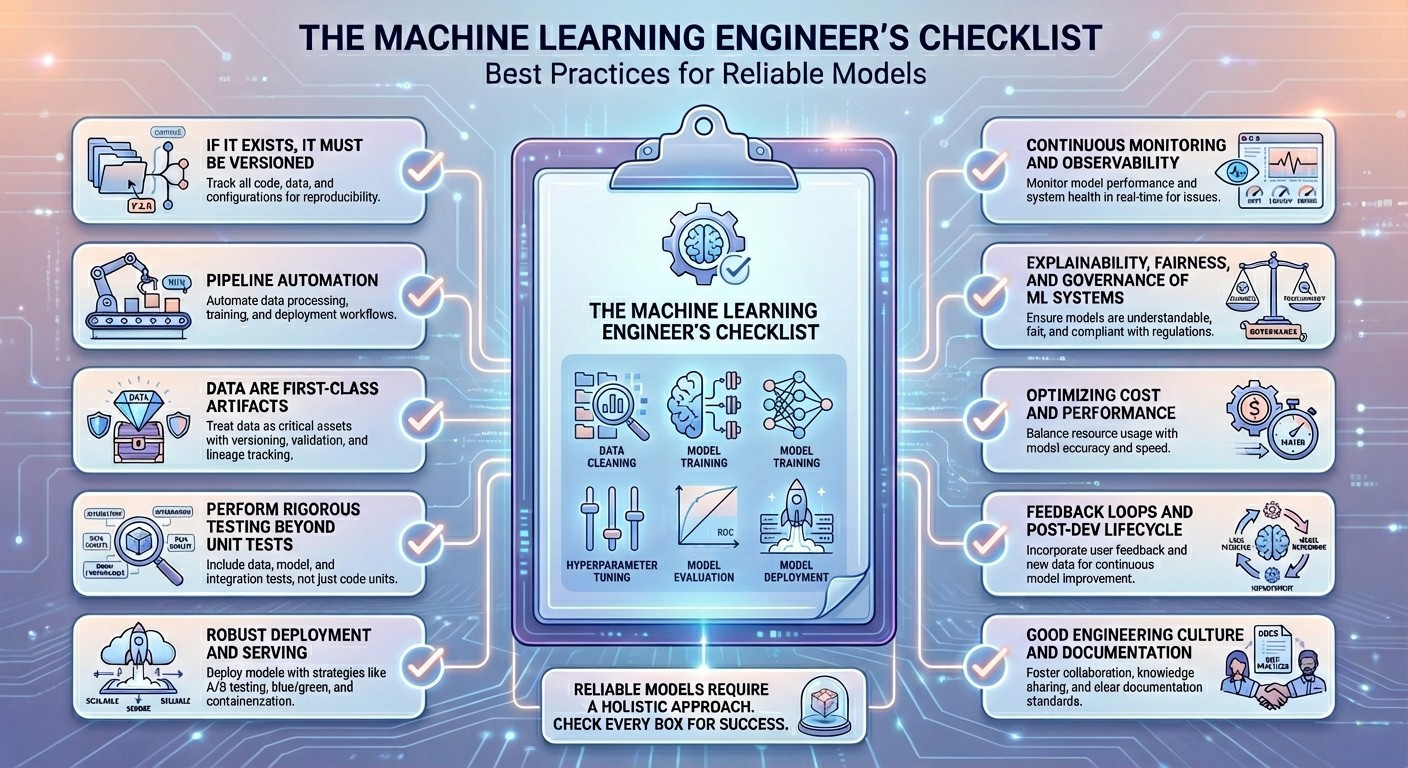

The Machine Learning Engineer’s Checklist: Best Practices for Reliable Models

Image by Editor

Introduction

Building a machine learning model that works is a relatively straightforward endeavor, thanks to mature frameworks and accessible computing power. However, the real challenge in the production lifecycle of a model begins after the first successful training run. Once deployed, a model operates in a dynamic, unpredictable environment where its performance can degrade rapidly, turning a successful proof-of-concept into a costly liability.

Practitioners often encounter issues like data drift, where the characteristics of the production data change over time; concept drift, where the underlying relationship between input and output variables evolves; or subtle feedback loops that bias future training data. These pitfalls, which range from catastrophic model failures to slow, insidious performance decay, are often the result of lacking the right operational rigor and monitoring systems.

Building reliable models that keep performing well in the long run is a different story, one that requires discipline, a robust MLOps pipeline, and, of course, skill. This article focuses on exactly that. By providing a systematic approach to tackle these challenges, this research-backed checklist outlines essential best practices, core skills, and a few tools not to be missed that every machine learning engineer should be familiar with.

By adopting the principles outlined in this guide, you will be equipped to transform your initial models into maintainable, high-quality production systems, ensuring they remain accurate, unbiased, and resilient to the inevitable shifts and challenges of the real world.

Without further ado, here is the list of 10 machine learning engineer best practices I curated for you and your upcoming models to shine at their best in terms of long-term reliability.

The Checklist

1. If It Exists, It Must Be Versioned

Data snapshots, code for training models, hyperparameters used, and model artifacts: everything matters, and everything is subject to variations across your model lifecycle. Therefore, everything surrounding a machine learning model should be properly versioned. Just imagine, for instance, that your image classification model’s performance, which used to be great, starts to drop after a specific bug fix. With versioning, you will be able to reproduce the old model settings and isolate the root cause of the problem more safely.

There is no rocket science here: versioning is well established across the engineering community. Core skills include managing Git workflows, data lineage, and experiment tracking; specific tools include DVC, Git/GitHub, MLflow, and Delta Lake.

2. Pipeline Automation

As part of continuous integration and continuous delivery (CI/CD) principles, repeatable processes that run from data preprocessing through training, validation, and deployment should be encapsulated in pipelines that are run and tested automatically.

Consider a pipeline scheduled nightly that fetches new data (e.g. images captured by a sensor), runs validation tests, retrains the model if needed (because of data drift, for example), re-evaluates business key performance indicators (KPIs), and pushes the updated model(s) to staging. This is a common example of pipeline automation, and it takes skills like workflow orchestration, fundamentals of technologies like Docker and Kubernetes, and test automation knowledge. Commonly useful tools here include Airflow, GitLab CI, Kubeflow, Flyte, and GitHub Actions.

3. Data Are First-Class Artifacts

The rigor with which software tests are applied in any software engineering project must also be applied to enforcing data quality and constraints.
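As a rough illustration of what enforcing data quality and constraints can look like, the sketch below validates a batch of records in plain Python before it reaches training. The `ColumnRule` class, the `validate_rows` function, and the sample columns are hypothetical names made up for this example; they are not part of any tool mentioned in this article.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ColumnRule:
    """Hypothetical constraint on one column: expected type plus a value check."""
    name: str
    dtype: type
    check: Callable[[Any], bool]


def validate_rows(rows: list[dict], rules: list[ColumnRule]) -> list[str]:
    """Return human-readable violations; an empty list means the batch passed."""
    errors: list[str] = []
    first_seen: dict[str, int] = {}
    for i, row in enumerate(rows):
        # Flag exact duplicates of an earlier row and skip further checks on them
        key = repr(sorted(row.items(), key=lambda kv: kv[0]))
        if key in first_seen:
            errors.append(f"row {i}: duplicate of row {first_seen[key]}")
            continue
        first_seen[key] = i
        for rule in rules:
            value = row.get(rule.name)
            if value is None:
                errors.append(f"row {i}: missing value for '{rule.name}'")
            elif not isinstance(value, rule.dtype):
                errors.append(
                    f"row {i}: '{rule.name}' is {type(value).__name__}, "
                    f"expected {rule.dtype.__name__}"
                )
            elif not rule.check(value):
                errors.append(f"row {i}: '{rule.name}' failed its constraint ({value!r})")
    return errors


# Made-up sample rules and batch, for illustration only
rules = [
    ColumnRule("age", int, lambda v: 0 <= v <= 120),
    ColumnRule("income", float, lambda v: v >= 0),
]
rows = [
    {"age": 34, "income": 52000.0},
    {"age": -3, "income": 41000.0},  # out-of-range value
    {"age": 34, "income": 52000.0},  # duplicate of row 0
    {"age": 29, "income": None},     # missing value
]
errors = validate_rows(rows, rules)
for message in errors:
    print(message)
```

Dedicated data quality tools cover far more cases than this, but the idea is the same: a batch that violates its constraints is rejected in the same way a failing unit test blocks a merge.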
Data is the essential nourishment of machine learning models from inception to serving in production; hence, the quality of whatever data they ingest must be optimal. A solid understanding of data types, schema designs, and data quality issues like anomalies, outliers, duplicates, and noise is vital to treat data as first-class assets. Tools like Evidently, dbt tests, and Deequ are designed to help with this.

4. Perform Rigorous Testing Beyond Unit Tests

Testing machine learning systems involves specific tests for aspects like pipeline integration, feature logic, and statistical consistency of inputs and outputs. If a refactored feature engineering script subtly changes a feature’s original distribution, your system may still pass basic unit tests, whereas a distribution test can catch the issue in time.

Test-driven development (TDD) and knowledge of statistical hypothesis tests are strong allies for putting this best practice into practice, with essential tools and techniques including the pytest library, custom data drift tests, and mocking in unit tests.

5. Robust Deployment and Serving

Robust deployment and serving in production means the model is packaged, reproducible, scalable to large settings, and can be rolled back safely if needed. The so-called blue-green strategy, based on deploying into two “identical” production environments, is a way to ensure incoming data traffic can be shifted back quickly in the event of latency spikes. Cloud architectures together with containerization help achieve this, with specific tools like Docker, Kubernetes, FastAPI, and BentoML.

6. Continuous Monitoring and Observability

This is probably already in your checklist of best practices, but as an essential of machine learning engineering, it is worth pointing out. Continuous monitoring and observability of the deployed model involves monitoring data drift, model decay, latency, cost, and other domain-specific business metrics beyond just accuracy or error. For example, if the recall metric of a fraud detection model drops upon the emergence of new fraud patterns, properly set drift alerts may trigger the need for retraining the model with fresh transaction data. Monitoring and dashboarding tools like Prometheus and Grafana can help a lot here.

7. Explainability, Fairness, and Governance of ML Systems

Another essential for machine learning engineers, this best practice aims at ensuring the delivery of models with transparent, compliant, and responsible behavior, understanding and adhering to existing national or regional regulations, for instance the European Union AI Act. An example of the application of these principles could be a loan classification model that triggers fairness checks before being deployed to guarantee no protected groups are unreasonably rejected. For interpretability and governance, tools like SHAP, LIME, model registries, and Fairlearn are highly recommended.

8. Optimizing Cost and Performance

Samsung Reports $13.8 Billion Operating Profit In Oct-Dec Quarter

Seoul: Samsung Electronics on Thursday reported a record-breaking operating profit for the fourth quarter, touching the 20 trillion-won ($13.8 billion) mark for the first time, driven by a supercycle in the chip industry. The fourth-quarter operating profit marked a more than 200 percent rise from a year earlier, the company said in a preliminary earnings report. If confirmed, it would mark the first time for the company’s quarterly earnings to reach the 20 trillion-won level, reports Yonhap news agency.

Sales increased 22.7 percent to 93 trillion won. It was also the first time for the quarterly sales to surpass the 90 trillion-won mark. The data for net profit was not available. The operating profit was 1.8 percent higher than the average estimate, according to a survey by Yonhap Infomax, the financial data firm of Yonhap News Agency. Samsung Electronics did not disclose a detailed earnings breakdown for its individual business divisions. The company will release its final earnings report later this month.

Analysts said the increased earnings apparently came amid improved profitability at the Device Solutions (DS) division, which covers the company’s core semiconductor business. According to Korea Investment & Securities Co., global prices of dynamic random-access memory (DRAM) and NAND flash jumped about 40 percent in the fourth quarter from the previous three-month period. Market observers estimate the operating profit of the DS division at around 16 trillion to 17 trillion won. The projection represents a sharp rise from just 7 trillion won posted in the third quarter. Analysts said Samsung’s non-memory business is also likely to have narrowed its operating losses, leading to an overall improvement in the division’s performance.

Samsung’s mobile business is estimated to have posted an operating profit in the 2 trillion-won range, while the home appliance business likely suffered an operating loss of 100 billion won, according to market watchers.

For the entire year of 2025, Samsung Electronics estimated its annual operating earnings at 43.53 trillion won, up 33 percent from a year earlier. Annual sales increased 10.6 percent to 332.77 trillion won. Data for annual net profit was also not available.

For 2026, analysts said Samsung Electronics is anticipated to maintain its robust performance, backed by its expanded high bandwidth memory (HBM) capacity. “This year, Samsung Electronics is expected to post an annual operating profit of 123 trillion won, driven by a sharp rise in DRAM prices and increased HBM shipments,” said Kim Dong-won, a researcher at KB Securities Co.