```python
# research_agent.py
# Purpose: A research agent with full AgentOps instrumentation.
# Every session is logged, replayed, and cost-tracked in the AgentOps dashboard.
#
# Prerequisites:
#   pip install agentops anthropic python-dotenv
#
# Environment variables required (in .env):
#   AGENTOPS_API_KEY: from https://app.agentops.ai
#   ANTHROPIC_API_KEY: from https://console.anthropic.com
#
# How to run:
#   python research_agent.py

import os
import json
import time

from dotenv import load_dotenv
import anthropic
import agentops
from agentops.sdk.decorators import record_function

load_dotenv()

# ── Initialize AgentOps ────────────────────────────────────────────────
# This must be called before any agent code runs.
# Tags let you filter and group sessions in the dashboard.
# The SDK automatically intercepts LLM calls once initialized.
agentops.init(
    api_key=os.environ["AGENTOPS_API_KEY"],
    tags=["research-agent", "production", "v1.0"],
    auto_start_session=True,  # Automatically starts a session on init
)

# Initialize the Anthropic client after AgentOps: the SDK wraps LLM clients
# to automatically capture every call's input, output, tokens, and cost.
client = anthropic.Anthropic(api_key=os.environ["ANTHROPIC_API_KEY"])
MODEL = "claude-sonnet-4-20250514"

# ── System prompt ──────────────────────────────────────────────────────
# Stored as a constant, not inline, so it is version-controllable and testable.
SYSTEM_PROMPT = """You are a research assistant. When given a topic:
1. Use the available tools to gather information systematically
2. Call search_topic to get an overview of the subject
3. Call get_key_facts to extract the most important points
4. Call format_summary to structure the final output

Be thorough but concise. Always call format_summary as your final step."""

# ── Tool definitions ───────────────────────────────────────────────────
# These are the tools the agent can call. In a real system, search_topic
# would call a real search API (Tavily, SerpAPI, etc.). Here they are stubs
# that return realistic data so you can run the example without external APIs.
TOOLS = [
    {
        "name": "search_topic",
        "description": (
            "Search for comprehensive information about a topic. "
            "Returns an overview with key themes and context. "
            "Use this as the first step for any research task."
        ),
        "input_schema": {
            "type": "object",
            "properties": {
                "topic": {
                    "type": "string",
                    "description": "The topic to research. Be specific."
                },
                "depth": {
                    "type": "string",
                    "enum": ["overview", "detailed"],
                    "description": "How deep to search. Use 'overview' first."
                }
            },
            "required": ["topic"]
        }
    },
    {
        "name": "get_key_facts",
        "description": (
            "Extract the most important facts about a topic from search results. "
            "Use after search_topic to identify the 5-7 most significant points."
        ),
        "input_schema": {
            "type": "object",
            "properties": {
                "topic": {
                    "type": "string",
                    "description": "The topic to extract facts about"
                },
                "focus": {
                    "type": "string",
                    "description": "Optional: specific angle to focus on (e.g., 'recent developments', 'key players')"
                }
            },
            "required": ["topic"]
        }
    },
    {
        "name": "format_summary",
        "description": (
            "Format research findings into a clean structured summary. "
            "Always call this as the final step before returning to the user."
        ),
        "input_schema": {
            "type": "object",
            "properties": {
                "title": {
                    "type": "string",
                    "description": "Title for the summary"
                },
                "key_points": {
                    "type": "array",
                    "items": {"type": "string"},
                    "description": "List of key findings (5-7 items)"
                },
                "conclusion": {
                    "type": "string",
                    "description": "A 2-3 sentence synthesis of the research"
                }
            },
            "required": ["title", "key_points", "conclusion"]
        }
    }
]

# ── Tool implementations ───────────────────────────────────────────────
# @record_function decorates each tool so AgentOps captures:
#   - The function name
#   - Input arguments
#   - Return value
#   - Execution time
#   - Any exceptions
# These appear as labeled spans in the session replay timeline.

@record_function("search_topic")
def search_topic(topic: str, depth: str = "overview") -> dict:
    """
    Search for information about a topic.
    In production: replace this stub with a real search API call.
    """
    # Simulate search latency (remove in production)
    time.sleep(0.3)

    # Stub response; replace with: tavily_client.search(query=topic)
    return {
        "topic": topic,
        "depth": depth,
        "results": f"Comprehensive overview of {topic}: This is a rapidly evolving field "
                   f"with significant developments in 2025-2026. Key themes include "
                   f"technical innovation, adoption patterns, and organizational impact. "
                   f"Multiple research groups and companies are actively advancing the field.",
        "source_count": 12,
        "timestamp": "2026-05-26"
    }


@record_function("get_key_facts")
def get_key_facts(topic: str, focus: str = None) -> dict:
    """
    Extract key facts about a topic.
    In production: this would process real search results.
    """
    time.sleep(0.2)

    focus_note = f" (focus: {focus})" if focus else ""
    return {
        "topic": topic,
        "focus": focus_note,
        "facts": [
            f"{topic} has seen 42% year-over-year growth in adoption",
            f"Leading organizations report 3-5x productivity improvements",
            f"Key technical challenges include reliability, cost, and governance",
            f"The market is projected to reach $4.9B by 2028",
            f"Open-source tooling has matured significantly in the past 18 months",
        ],
        "confidence": "high"
    }


@record_function("format_summary")
def format_summary(title: str, key_points: list, conclusion: str) -> dict:
    """
    Format research into a structured summary.
    This is always the final step in the research workflow.
    """
    return {
        "title": title,
        "key_points": key_points,
        "conclusion": conclusion,
        "format": "structured_summary",
        "generated_at": "2026-05-26"
    }


def execute_tool(tool_name: str, tool_input: dict) -> str:
    """
    Route tool calls to the correct implementation.
    Returns the result as a JSON string for the model to read.
    """
    if tool_name == "search_topic":
        result = search_topic(**tool_input)
    elif tool_name == "get_key_facts":
        result = get_key_facts(**tool_input)
    elif tool_name == "format_summary":
        result = format_summary(**tool_input)
    else:
        result = {"error": f"Unknown tool: {tool_name}"}

    return json.dumps(result)


# ── The agent loop ─────────────────────────────────────────────────────
def run_research_agent(topic: str) -> dict:
    """
    Run the research agent on a given topic.

    The loop:
    1. Send the goal to Claude with the available tools
    2. If Claude wants to call a tool, execute it and return the result
    3. Continue until Claude signals it is done (stop_reason == 'end_turn')
    4. Return the final structured summary

    AgentOps captures every iteration automatically because:
    - The LLM client is wrapped after agentops.init()
    - Each tool is decorated with @record_function
    - The session spans the full lifecycle from init to end_session()
    """
    print(f"\nStarting research agent for topic: '{topic}'")
    print("Session will be visible at https://app.agentops.ai\n")

    messages = [
        {"role": "user", "content": f"Research this topic and produce a structured summary: {topic}"}
    ]

    final_summary = None
    iteration = 0
    max_iterations = 10  # Safety limit: prevents runaway loops

    while iteration < max_iterations:
```
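The tool-implementation comments list what `@record_function` captures for each call: name, inputs, return value, execution time, and exceptions. As a rough sketch of how any decorator can collect that information, consider the following. This is not AgentOps' implementation; the decorator name `record_span` and the in-memory `SPANS` list are hypothetical stand-ins for a real tracing backend.

```python
import functools
import time

SPANS = []  # hypothetical in-memory sink; a real tracer would export spans instead


def record_span(name):
    """Hypothetical decorator: records name, arguments, result, duration, errors."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            span = {"name": name, "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                span["result"] = fn(*args, **kwargs)
                return span["result"]
            except Exception as exc:
                span["error"] = repr(exc)  # record the failure...
                raise                      # ...but let the caller still see it
            finally:
                span["duration_s"] = time.perf_counter() - start
                SPANS.append(span)
        return wrapper
    return decorator


@record_span("search_topic")
def stub_search(topic, depth="overview"):
    return {"topic": topic, "depth": depth}


@record_span("broken_tool")
def broken_tool():
    raise ValueError("upstream API down")


stub_search("vector databases")
try:
    broken_tool()
except ValueError:
    pass
```

The `finally` clause is what guarantees a span is recorded whether the tool returns or raises, which is why failed tool calls still show up in a replay timeline.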

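The `run_research_agent` docstring describes a four-step loop: call the model, run any requested tools, send the results back, and repeat until the model is done. Below is a minimal, self-contained sketch of that cycle, not the author's loop body. It assumes Anthropic-style responses that carry a `stop_reason` and a list of content blocks, and it returns tool output as `tool_result` blocks that reference the `tool_use` block's `id`. The `Block`, `Response`, and `run_loop` names and the scripted fake model are hypothetical, so the sketch runs without API keys.

```python
import json
from dataclasses import dataclass, field


# Hypothetical stand-ins for the SDK's response objects; only the
# attributes the loop reads are modelled.
@dataclass
class Block:
    type: str                                   # "text" or "tool_use"
    text: str = ""
    id: str = ""
    name: str = ""
    input: dict = field(default_factory=dict)


@dataclass
class Response:
    stop_reason: str                            # e.g. "tool_use" or "end_turn"
    content: list


def run_loop(call_model, execute_tool, goal, max_iterations=10):
    """Call the model, run requested tools, feed results back, repeat."""
    messages = [{"role": "user", "content": goal}]
    for _ in range(max_iterations):
        response = call_model(messages)
        # Keep the assistant turn (including its tool_use blocks) in the history
        messages.append({"role": "assistant", "content": response.content})
        if response.stop_reason != "tool_use":
            return messages                     # model signalled it is done
        # Answer every tool_use block in a single user turn of tool_result blocks
        results = [
            {"type": "tool_result", "tool_use_id": block.id,
             "content": execute_tool(block.name, block.input)}
            for block in response.content
            if block.type == "tool_use"
        ]
        messages.append({"role": "user", "content": results})
    raise RuntimeError("max_iterations reached before the model finished")


# Scripted fake model: one tool call, then a final answer
script = iter([
    Response("tool_use", [Block("tool_use", id="t1", name="search_topic",
                                input={"topic": "AI agents"})]),
    Response("end_turn", [Block("text", text="Here is the summary.")]),
])


def fake_model(messages):
    return next(script)


def fake_execute_tool(name, tool_input):
    # Mirrors execute_tool(): returns a JSON string for the model to read
    return json.dumps({"tool": name, "args": tool_input})


history = run_loop(fake_model, fake_execute_tool, "Research this topic: AI agents")
```

Stopping on any `stop_reason` other than `"tool_use"`, rather than only on `"end_turn"`, also ends the loop cleanly if the model stops for another reason such as hitting its token limit.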

Building Semantic Search with Transformers.js and Sentence Embeddings

In this article, you will learn how sentence embeddings work and how to build a fully client-side semantic search engine using Transformers.js, with no server, no API key, and no backend infrastructure required.

Topics we will cover include:

- How sentence embeddings and cosine similarity form the foundation of semantic search.
- How to generate and cache embeddings using the Transformers.js feature-extraction pipeline, including batching and Web Worker offloading.
- How to build a complete, reusable SemanticSearch class and persist its index across page loads.

Introduction

You've probably shipped this bug before: a user types "affordable laptop" into your search bar and gets zero results. But you know the database has dozens of laptop articles. They're just all titled "budget notebook." The words are different. The meaning is identical. Keyword search treats both as unrelated strings.

This isn't an edge case. It's the core limitation of keyword matching: it compares characters, not concepts. It doesn't know that "cancel" and "return" describe related actions, that "broken" and "defective" mean the same thing, or that "I can't log in" and "account access issue" are the same problem phrased two different ways.

Semantic search fixes this by comparing meaning. And with Transformers.js, you can build it entirely in the browser with no server, no API key, and no backend infrastructure. This tutorial walks through the full pipeline: how sentence embeddings work, how to generate them, how cosine similarity scores relevance, and how to wire it all into a working knowledge base search application.

What Sentence Embeddings Actually Are

A transformer model cannot process raw text. Before any computation happens, a sentence needs to become numbers. Embeddings are the result of that conversion: a sentence represented as a list of floating-point values called a vector.
The key property isn't just that sentences become numbers. It's that sentences with similar meaning become vectors that are geometrically close to each other in the same vector space.

The model used throughout this tutorial, sentence-transformers/all-MiniLM-L6-v2, maps every sentence to a point in a 384-dimensional vector space. The model was fine-tuned on over 1 billion sentence pairs specifically to learn this geometric property. "I need to cancel my order" and "How do I return a product?" end up close together. "The weather is beautiful today" ends up far from both.

The 384 dimensions aren't human-readable. You can't look at dimension 47 and say what it encodes. What matters for search is not any individual dimension but the distance between two vectors. Short distance means similar meaning. Large distance means unrelated.

[Figure: 3D scatter plot illustrating how semantically similar sentences cluster together in vector space]

Pooling and Normalization

The raw transformer model outputs one vector per token; every word and subword in a sentence gets its own vector. For semantic search, you need one vector per sentence. Mean pooling handles this by averaging all token vectors, weighted by the attention mask, so padding tokens don't contribute. Normalization then scales the result to unit length (magnitude = 1), which simplifies the similarity calculation covered in the next section.

In Transformers.js, both happen automatically when you pass `{ pooling: 'mean', normalize: true }` to the pipeline call. Without these options, you get token-level embeddings, which are useful for tasks like named entity recognition, but not for sentence-level search.

The Feature-Extraction Pipeline

The feature-extraction task is different from every other Transformers.js pipeline. Tasks like text-classification or question-answering return human-readable outputs: labels, scores, strings.
`feature-extraction` returns the raw vector representations that the model computed internally. You're working one level lower, getting the numbers that all higher-level tasks are built on top of.

```javascript
import { pipeline } from 'https://cdn.jsdelivr.net/npm/@huggingface/transformers@3.0.2';

// Load the feature-extraction pipeline.
// Xenova/all-MiniLM-L6-v2 is the ONNX-converted version of
// sentence-transformers/all-MiniLM-L6-v2: same model weights, browser-compatible format
const extractor = await pipeline(
  'feature-extraction',
  'Xenova/all-MiniLM-L6-v2',
  { dtype: 'q8' } // 8-bit quantization: smaller download (~23 MB), good accuracy
);

// Embed a single sentence
// pooling: 'mean' averages all token vectors into one sentence vector
// normalize: true scales the result to unit length (so cosine similarity reduces to a dot product)
const output = await extractor('I need help with my order', {
  pooling: 'mean',
  normalize: true
});

console.log(output);
// Tensor {
//   dims: [1, 384],         // 1 sentence, 384 dimensions
//   type: 'float32',
//   data: Float32Array(384) // the actual numbers
// }

// Convert to a plain JavaScript array for use in your own code
const vector = output.tolist()[0];
// [0.045, 0.073, -0.012, …] (384 numbers)
console.log(`Vector length: ${vector.length}`); // 384
```

What this code does:

- `pipeline()` downloads and initializes the model on first run. The browser caches it after that, so subsequent page loads skip the download.
- You then call the extractor with a string and the two options that give you a single, normalized sentence vector.
- The result is a `Tensor` object; calling `.tolist()[0]` converts it to a plain JavaScript array of 384 numbers.
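The `pooling: 'mean'` and `normalize: true` options perform the two steps described under Pooling and Normalization. The sketch below illustrates the idea, not Transformers.js internals. To keep the new examples in one language it uses Python/NumPy, and it uses made-up 4-dimensional token vectors instead of the model's 384. It shows mask-weighted mean pooling followed by L2 normalization, and why unit-length vectors make cosine similarity a plain dot product.

```python
import numpy as np


def mean_pool(token_embeddings, attention_mask):
    """Average token vectors per sentence, ignoring padded positions (mask == 0)."""
    mask = attention_mask[..., np.newaxis].astype(token_embeddings.dtype)  # (batch, seq, 1)
    summed = (token_embeddings * mask).sum(axis=1)                          # (batch, dim)
    counts = np.clip(mask.sum(axis=1), 1e-9, None)                          # real tokens per sentence
    return summed / counts


def l2_normalize(vectors):
    """Scale each row to unit length (magnitude = 1)."""
    norms = np.linalg.norm(vectors, axis=1, keepdims=True)
    return vectors / np.clip(norms, 1e-12, None)


# Toy batch: 2 sentences x 3 token slots x 4 dims (the real model uses 384 dims)
token_embeddings = np.array([
    [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [9.0, 9.0, 9.0, 9.0]],  # 3rd slot is padding
    [[0.0, 0.0, 1.0, 0.0], [0.0, 0.0, 1.0, 0.0], [0.0, 0.0, 1.0, 0.0]],
])
attention_mask = np.array([[1, 1, 0], [1, 1, 1]])

sentence_vecs = l2_normalize(mean_pool(token_embeddings, attention_mask))
# For unit-length vectors, the dot product is the cosine similarity
similarity = float(sentence_vecs[0] @ sentence_vecs[1])
```

Without the mask, the padding row of 9s would dominate the first sentence's average. With it, the first sentence becomes the unit vector halfway between its two real tokens.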


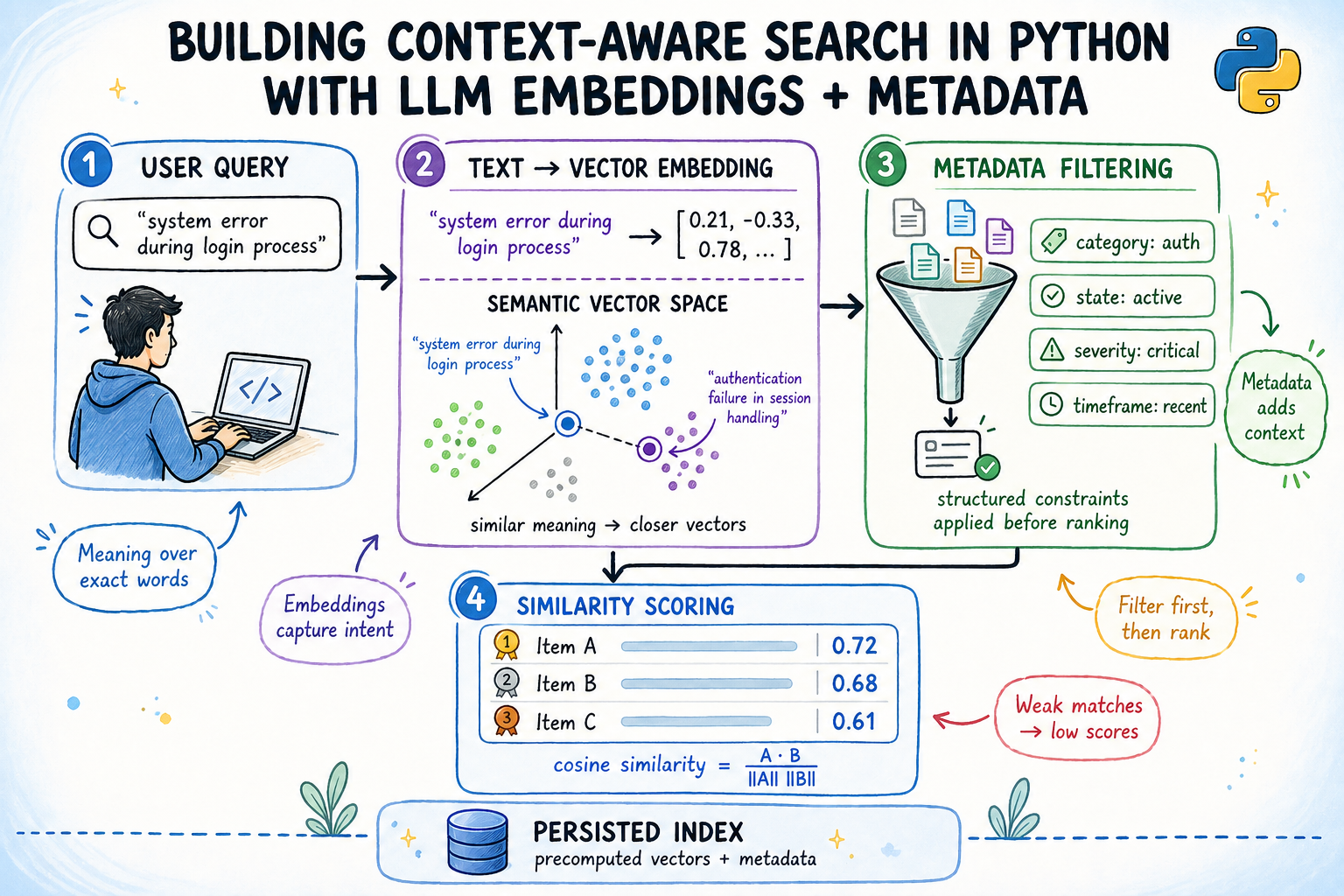

Building Context-Aware Search in Python with LLM Embeddings + Metadata

In this article, you will learn how to build a context-aware semantic search engine in Python that combines embedding-based similarity with structured metadata filtering.

Topics we will cover include:

- How sentence embeddings and cosine similarity work together to find semantically relevant documents.
- How to build a metadata-aware search index that filters by team, status, priority, and date before scoring candidates.
- How to persist the index to disk so embeddings are computed only once and reloaded efficiently on subsequent runs.

Introduction

Keyword search breaks the moment a user types something a document doesn't literally say. A support engineer searching for "login keeps failing" won't find a ticket titled "OAuth2 token refresh race condition", even though that's exactly what they need. This is the core problem that context-aware semantic search aims to solve.

Semantic search addresses it by converting text into dense vector representations called embeddings, where meaning, not exact word overlap, determines proximity. Layer structured metadata filters on top (date, status, team, priority) and you get a system that understands what someone is asking while also respecting contextual constraints.

This article walks through building that system end-to-end: embeddings from a local pretrained model, a metadata-aware index, cosine similarity ranking, and an index that persists across restarts without re-encoding. You can get the code on GitHub.

What You Will Build

A simple context-aware search engine over a corpus of engineering support tickets.
By the end you will have:

- 384-dimensional embeddings generated locally from a pretrained model, no API key required
- A search index that filters by team, status, priority, and date before scoring
- Cosine similarity ranking over the filtered candidate pool
- A persisted index that reloads without re-encoding

Prerequisites: Python 3.8+, basic familiarity with NumPy and working with lists of dictionaries.

Install dependencies:

```bash
pip install sentence-transformers numpy
```

Understanding How Semantic Search Works

A sentence embedding model takes a string and returns a fixed-length vector of floating-point numbers. The model is trained so that sentences with similar meanings produce vectors pointing in similar directions in high-dimensional space.

Cosine similarity measures the angle between two vectors:

\[\text{cosine similarity}(A, B) = \frac{A \cdot B}{\|A\| \, \|B\|}\]

When vectors are unit-normalized, meaning their length equals 1.0, this simplifies to the dot product \(A \cdot B\). Scores range from -1 (opposite) to 1 (identical). In practice (the exact ranges depend on the model), unrelated documents score around 0.1–0.25, and strong matches score above 0.6.

So why does metadata filtering matter? Embedding models encode semantic content. They do not encode who wrote a document, what team owns it, or when it was created. These attributes live outside the text and must be handled separately. Combining both signals, semantic score and metadata constraints, is what makes search useful in real systems.

Setting Up the Dataset

We'll work with 20 engineering support tickets across three teams (infrastructure, backend, and frontend), with four priority levels, two statuses, and a two-month date window. Each ticket is a plain dictionary. The `text` field is what gets embedded; everything else is metadata for filtering.

To keep things concise, a truncated list is shown here instead of the full code block. The complete set of tickets is available in this GitHub gist.
```python
from datetime import date

tickets = [
    {"id": "T-101", "team": "infrastructure", "status": "open", "priority": "high",
     "created": date(2025, 11, 3),
     "text": "Kubernetes pod keeps crashing with OOMKilled — memory limits on the ML inference container are set too low for the model it loads at runtime."},
    {"id": "T-102", "team": "infrastructure", "status": "open", "priority": "high",
     "created": date(2025, 11, 8),
     "text": "Nginx ingress returning 502 after rotating TLS certificate. Chain is valid per openssl verify but the backend handshake fails immediately."},
    {"id": "T-103", "team": "infrastructure", "status": "resolved", "priority": "medium",
     "created": date(2025, 10, 14),
     "text": "Terraform state file locked in S3 — a team member force-applied a plan without releasing the DynamoDB lock first."},
    # …
    {"id": "T-401", "team": "infrastructure", "status": "open", "priority": "medium",
     "created": date(2025, 11, 11),
     "text": "CI pipeline fails on ARM64 runners — base Docker image has no ARM variant, exec format error at build stage."},
    {"id": "T-402", "team": "infrastructure", "status": "resolved", "priority": "high",
     "created": date(2025, 10, 9),
     "text": "VPN gateway latency spikes at peak hours — BGP route flapping between two peers causing intermittent packet loss across the private subnet."},
]
```

A quick check on the shape of the corpus before moving on:

```python
open_ct = sum(1 for t in tickets if t["status"] == "open")
resolved_ct = sum(1 for t in tickets if t["status"] == "resolved")
print(f"{len(tickets)} tickets | {open_ct} open | {resolved_ct} resolved")
```
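The search described above filters by metadata first and then scores only the surviving candidates. That idea can be sketched in a few lines of NumPy. This is a hypothetical helper, not the article's implementation. `filtered_search` and its `since` parameter are illustrative names, the metadata is copied from three of the tickets above, and the 2-dimensional vectors are made-up stand-ins for real 384-dimensional embeddings. All vectors are assumed to be unit-normalized, so cosine similarity reduces to a dot product.

```python
from datetime import date

import numpy as np


def filtered_search(query_vec, doc_vecs, docs, top_k=3, since=None, **equals):
    """Hypothetical helper: metadata filter first, cosine ranking second.

    Assumes query_vec and every row of doc_vecs are unit-normalized, so the
    dot product equals cosine similarity.
    """
    # 1. Keep only documents whose metadata matches every constraint
    candidates = [
        i for i, doc in enumerate(docs)
        if all(doc.get(key) == value for key, value in equals.items())
        and (since is None or doc["created"] >= since)
    ]
    if not candidates:
        return []
    # 2. Score the surviving candidates only
    scores = doc_vecs[candidates] @ query_vec
    # 3. Highest similarity first
    order = np.argsort(-scores)[:top_k]
    return [(docs[candidates[j]]["id"], float(scores[j])) for j in order]


# Metadata copied from three tickets above; the 2-D vectors are made up
docs = [
    {"id": "T-101", "team": "infrastructure", "status": "open", "priority": "high", "created": date(2025, 11, 3)},
    {"id": "T-102", "team": "infrastructure", "status": "open", "priority": "high", "created": date(2025, 11, 8)},
    {"id": "T-103", "team": "infrastructure", "status": "resolved", "priority": "medium", "created": date(2025, 10, 14)},
]
vecs = np.array([[1.0, 0.0], [0.0, 1.0], [0.6, 0.8]])
query = np.array([0.6, 0.8])

print(filtered_search(query, vecs, docs, status="open"))
```

T-103 is the closest vector to the query, but `status="open"` removes it before scoring, so only T-102 and T-101 are ranked. Filtering first also means the dot products are computed only for the candidate pool, not the whole corpus.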


Implementing Hybrid Semantic-Lexical Search in RAG

In this article, you will learn how to implement a hybrid search strategy for RAG systems by combining BM25 lexical search with semantic search, fused together using Reciprocal Rank Fusion.

Topics we will cover include:

- Why hybrid search outperforms either lexical or semantic search alone in retrieval-augmented generation systems.
- How to implement BM25 lexical search and dense vector semantic search as independent retrieval engines in Python.
- How to merge both rankings using Reciprocal Rank Fusion (RRF) to produce a final, balanced retrieval result.

Let's get straight to it.

Introduction

Implementing hybrid search strategies is a critical step in building modern RAG (Retrieval-Augmented Generation) systems, especially when shifting from prototype to production-ready solutions.

Semantic search, fueled by dense vectors or embeddings (numerical representations of text), is undeniably useful for understanding semantics, synonyms, and context. However, lexical, keyword-based search with approaches like BM25 covers a blind spot of semantic search: exact matches on specific terms such as names, codes, or rare keywords. Combining the best of both worlds is therefore an effective way to take your RAG system's retrieval mechanism the extra mile.

Let's explore how to implement such a hybrid search strategy through a gentle coding example, guiding you through every step of the process!

Note: If you are unfamiliar with RAG systems, you may find the "Understanding RAG" article series remarkably insightful for getting the most out of this read. In particular, I recommend acquiring an understanding of vector databases first through this article.
Step-by-Step Implementation

The first step is to ensure all the necessary external Python libraries are installed, in particular these three:

```bash
!pip install rank_bm25 sentence-transformers requests
```

- rank_bm25: an implementation of the BM25 lexical search algorithm for information retrieval (BM stands for "Best Matching").
- sentence-transformers: provides pre-trained language models for generating text embeddings. In a real setting, you may already have your own vector database containing many document embeddings and not need this, but we will use it here to simulate the construction of a toy vector database and illustrate hybrid search on it.
- requests: used to fetch the raw dataset package from a public GitHub datasets repository prepared for this example.

With these ingredients at hand, we start by loading the dataset and storing the raw texts in a list (we do so because it is a small dataset).

```python
import io
import os
import zipfile

import requests

# Downloading and extracting the dataset from the compressed file
url = "https://github.com/gakudo-ai/open-datasets/raw/refs/heads/main/asia_documents.zip"
response = requests.get(url)
response.raise_for_status()  # fail early if the download did not succeed

with zipfile.ZipFile(io.BytesIO(response.content)) as z:
    z.extractall("asia_data")

# Loading documents and getting their filenames
documents = []
doc_names = []

# sorted() keeps document order (and therefore indices) stable across runs
for file in sorted(os.listdir("asia_data")):
    if file.endswith(".txt"):
        with open(f"asia_data/{file}", "r", encoding="utf-8") as f:
            documents.append(f.read())
            doc_names.append(file)

print(f"Loaded {len(documents)} documents for the knowledge base.")
```

The hybrid search process is divided into three stages. The first two, lexical search and semantic search, run independently of each other (and can therefore run in parallel). The third is where the fusion of both approaches happens, using a merging method called Reciprocal Rank Fusion (RRF).

Let's cover lexical search with BM25 first:

```python
from rank_bm25 import BM25Okapi

# BM25 requires that each text is tokenized as a list of words
tokenized_corpus = [doc.lower().split() for doc in documents]
bm25 = BM25Okapi(tokenized_corpus)

def search_bm25(query, top_k=3):
    tokenized_query = query.lower().split()
    # Getting scores (lexical relevance to the query) for all documents
    scores = bm25.get_scores(tokenized_query)
    # Ranking documents by score
    ranked_indices = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked_indices[:top_k], scores
```

The lexical search process has been encapsulated in a function called `search_bm25()`. This function takes two input arguments: a string containing the user's query to the RAG system, and the number of top results to retrieve.
The rank_bm25 library provides a `get_scores()` method that computes a lexical relevance score for each document, treated as a collection of tokens. We then rank documents by decreasing score, select the top-k indices, and return them together with the full list of scores.

Meanwhile, the semantic search engine first uses a sentence transformer model to obtain embedding vectors for the texts and the user query. It then applies a vector similarity metric such as cosine similarity to rank texts by semantic relevance and retrieve the k most relevant:

```python
from sentence_transformers import SentenceTransformer, util
import torch

# Loading the pre-trained embedding model
model = SentenceTransformer('all-MiniLM-L6-v2')

# Pre-compute embeddings for our corpus (our "Vector DB")
# You do not need this step if you already have an external vector database:
# you may read and import your document vectors instead
doc_embeddings = model.encode(documents, convert_to_tensor=True)

def search_semantic(query, top_k=3):
    # Embedding the user's query into a vector
    query_embedding = model.encode(query, convert_to_tensor=True)
    # Calculating cosine similarity between the query and all documents
    cosine_scores = util.cos_sim(query_embedding, doc_embeddings)[0]
    # Ranking documents by similarity
    ranked_indices = torch.argsort(cosine_scores, descending=True).tolist()
    return ranked_indices[:top_k], cosine_scores.tolist()
```
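To see roughly what `bm25.get_scores()` computes, here is a from-scratch sketch of the textbook Okapi BM25 formula. It is an illustration under stated assumptions, not rank_bm25's code. `k1=1.5` and `b=0.75` are assumed to match `BM25Okapi`'s defaults. The IDF variant used here adds `+ 1` inside the logarithm to keep weights positive, which differs from rank_bm25's handling, so absolute scores will not match the library's. `bm25_scores` is a hypothetical helper name.

```python
import math
from collections import Counter


def bm25_scores(query_tokens, corpus_tokens, k1=1.5, b=0.75):
    """Score every tokenized document against a tokenized query (Okapi BM25)."""
    n_docs = len(corpus_tokens)
    avgdl = sum(len(doc) for doc in corpus_tokens) / n_docs
    # Document frequency: in how many documents each term appears
    df = Counter(term for doc in corpus_tokens for term in set(doc))
    scores = []
    for doc in corpus_tokens:
        tf = Counter(doc)
        score = 0.0
        for term in query_tokens:
            if tf[term] == 0:
                continue  # term absent: contributes nothing
            # Rare terms get a higher IDF weight; "+ 1" keeps it positive
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            # Term-frequency saturation (k1) and document-length normalization (b)
            denom = tf[term] + k1 * (1 - b + b * len(doc) / avgdl)
            score += idf * tf[term] * (k1 + 1) / denom
        scores.append(score)
    return scores


corpus = [doc.lower().split() for doc in [
    "Tokyo is the capital of Japan",
    "Seoul is the capital of South Korea",
    "Osaka and Tokyo are major cities in Japan with many visitors",
]]
scores = bm25_scores("tokyo".split(), corpus)
```

Both Japan documents mention "tokyo" once, but the shorter one scores higher because of the `b`-controlled length normalization. The Korea document scores zero because it shares no query term.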

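The third stage merges the two rankings with Reciprocal Rank Fusion. A minimal sketch follows, assuming the inputs are the ranked index lists returned by `search_bm25()` and `search_semantic()`. Each document earns \(1 / (k + \text{rank})\) from every list it appears in, with ranks starting at 1. The constant `k = 60` is the value commonly used since RRF was introduced. `reciprocal_rank_fusion` is a hypothetical helper, not part of either library.

```python
def reciprocal_rank_fusion(rankings, k=60, top_k=3):
    """Merge ranked lists of document indices with Reciprocal Rank Fusion."""
    fused = {}
    for ranking in rankings:
        # rank starts at 1 for the best document in each list
        for rank, doc_idx in enumerate(ranking, start=1):
            fused[doc_idx] = fused.get(doc_idx, 0.0) + 1.0 / (k + rank)
    # Highest fused score first
    return sorted(fused.items(), key=lambda item: item[1], reverse=True)[:top_k]


# Toy rankings (document indices), e.g. from search_bm25() and search_semantic()
bm25_ranking = [2, 0, 5]
semantic_ranking = [0, 3, 2]
fused = reciprocal_rank_fusion([bm25_ranking, semantic_ranking])
```

With the article's functions, the call would be `bm25_ids, _ = search_bm25(query, top_k=10)`, `sem_ids, _ = search_semantic(query, top_k=10)`, then `reciprocal_rank_fusion([bm25_ids, sem_ids])`. Because RRF uses only ranks, it avoids comparing BM25 scores and cosine similarities, which live on different scales. Documents ranked well by both engines (index 0 here) rise to the top.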