Ubisoft cancelled games: The French video game publisher Ubisoft has cancelled several game projects as part of a major company restructuring, disappointing gamers around the world. In an announcement on January 21, 2026, the company confirmed that six games have been cancelled, including the highly anticipated Prince of Persia: The Sands of Time Remake. The move comes as Ubisoft reorganises its internal structure into five specialised “Creative Houses,” each focused on different genres and franchises. According to the company, these changes are designed to help improve creative output and place greater focus on the quality of future releases.

Six games cancelled

Among the six games that have been scrapped, the most talked about is Prince of Persia: The Sands of Time Remake, which many fans had been eagerly waiting for. The remake had been in development for several years and was expected to launch eventually, but Ubisoft said it no longer fits the company’s quality and strategic goals. In addition to the remake, Ubisoft has cancelled four unannounced titles, including three new IPs, along with one mobile game that had not been publicly revealed. The cancellations have led to disappointment within the gaming community, especially among those who were hoping to see these projects come to life. Social media posts from fans have expressed sadness and frustration over the news.

More games delayed

Ubisoft has also confirmed that seven other games currently in development will be delayed to allow developers more time to improve quality. One of the delayed titles is believed to be a remake of Assassin’s Creed IV: Black Flag, which has been rumoured for some time. These delays mean players will have to wait longer for these games to be released, likely in 2027.

Company restructuring

According to reports, the game cancellations are part of a broader reset aimed at strengthening Ubisoft’s creative strategy. The company plans to focus more on its major franchises and new technologies, including AI-driven game development and improved player experiences. Ubisoft has also revised its financial outlook due to the cancellations and delays, expecting lower bookings and continued cost-cutting measures.

Goodbye to Chinese smartphone monopoly? India likely to launch domestic phone brands in 18 months

Indian smartphone brands: India is moving closer to launching its own mobile phone brands within the next 12 to 18 months, according to Union Electronics and IT Minister Ashwini Vaishnaw. He made the announcement while speaking at the World Economic Forum in Davos, Switzerland. This development comes as India builds a strong electronics supply network capable of supporting the local production of high-end devices. Experts say this is a major step toward reducing dependence on foreign smartphone makers and boosting domestic innovation.

India has already become one of the global leaders in phone assembly and manufacturing. From just two mobile phone manufacturing units in 2014, the country now has more than 300 production facilities. As of December 2024, more than 99% of phones sold in India were locally made, a sharp rise from 26% in 2014-15.

According to industry analysts, India’s electronics manufacturing sector could reach an estimated USD 300 billion by 2026, driven by government incentives and strong export growth. Indian mobile phone exports have surged significantly in recent years, reflecting rising global demand for “Made in India” devices.

Government support for domestic brands

Union Minister Vaishnaw explained that India’s mature electronics ecosystem now includes many suppliers of phone components, making it possible to build more than just assembled products. He said the groundwork is nearly complete for India to support end-to-end mobile phone brands, from design to production. Officials say political and economic support, including incentives for manufacturing and semiconductor production, will help local companies launch competitive smartphones.

More choice for buyers

If successful, India’s own phone brands could offer more choices for consumers and reduce reliance on imported smartphones. Analysts also believe this would create new jobs in design, research, and manufacturing. A domestic brand could also improve India’s position in the global tech market and encourage innovation tailored to local needs. This move supports India’s broader “Make in India” strategy and could give a strong boost to India’s developing technology sector.

WEF 2026: India emerges as major AI force backed by reforms, digital infra, says IMF

India is emerging as one of the world’s major forces in artificial intelligence (AI), supported by strong reforms, digital public infrastructure, and a skilled technology workforce, said Kristalina Georgieva, Managing Director of the International Monetary Fund, at the World Economic Forum in Davos. The IMF MD pointed to India’s rapidly built digital public infrastructure and deep pool of IT-skilled labour as major strengths, NDTV Profit reported. Georgieva said the IMF holds India in high regard for the pace and quality of its recent economic reforms.

When asked about comments by Union Electronics and Information Technology Minister Ashwini Vaishnaw, Georgieva said that the Fund believes India’s prospects in AI are “remarkable”. Vaishnaw had recently pushed back strongly against remarks by Georgieva that India is in a “second grouping” of AI powers. Vaishnaw cited a Stanford assessment that showed India ranked third globally on AI preparedness.

Georgieva noted that the IMF’s assessment showed AI could boost global growth by up to 0.8 percentage points and that dynamic economies like India stand to gain even more. “India is a very dynamic economy already, and with AI, it would be even more so,” Georgieva said, praising India’s approach to staying competitive while charting its own path on AI development.

She confirmed her travel plans to India next month for the AI summit, saying she was “very, very excited” about the visit and described India as “a bright spot on a somewhat cloudy global economic horizon”. She cautioned that globally, expectations from AI are very high, which could cause downturns if they fail to materialise. Georgieva said that in such an environment, countries must focus on strong economic fundamentals, adding that India’s policy focus in this regard is admirable.

Motorola Signature launched in India with 5,200mAh battery: Check price, camera, features, display, and everything you can’t miss

Motorola Signature launched: Motorola has officially launched its new flagship smartphone, the Motorola Signature, in India. The smartphone comes with high-end hardware, long-term software support, and a premium design, and is priced at Rs 59,999. The Motorola Signature will be available for purchase exclusively through Flipkart starting January 30. It is offered in two colour options: Pantone Carbon and Pantone Martini Olive. As part of the launch offers, customers using HDFC Bank or Axis Bank cards can avail an instant discount of Rs 5,000, bringing the effective price down to Rs 54,999.

Motorola Signature: Camera and battery

The smartphone features a triple rear camera setup, including a 50MP Sony Lytia 828 primary sensor with OIS, a 50MP ultrawide camera, and a 50MP periscope telephoto lens offering 3x optical zoom. On the front, it has a 50MP autofocus camera housed in a punch-hole cutout. It is powered by a 5,200mAh battery with support for 90W wired fast charging, 10W wireless reverse charging, and 5W wired reverse charging.

Motorola Signature: Display and performance

The smartphone features a 6.8-inch FHD+ LTPO AMOLED display with a dynamic 165Hz refresh rate. The panel supports Dolby Vision and HDR10+ and can reach a peak brightness of up to 6,200 nits. For screen protection, it is equipped with Gorilla Glass Victus 2. The device is powered by Qualcomm’s Snapdragon 8 Gen 5 SoC, paired with LPDDR5X RAM and up to 1TB of UFS 4.1 storage. While the chipset is positioned below the top-tier processors in the segment, it is expected to deliver smooth performance for regular usage, multitasking, and gaming.

Motorola Signature: Build and audio

The Motorola Signature is IP68 and IP69 certified for water and dust resistance and meets the MIL-STD-810H durability standard. It features a fabric-finished rear panel with an aluminium frame. Audio is handled by stereo speakers tuned by Bose, with support for Dolby Atmos.

Motorola Signature: Long-term support

The Motorola Signature runs Android 16 out of the box and includes Moto AI features focused on improving productivity and ease of use. Motorola has committed to providing seven years of Android OS and security updates, placing the device alongside brands like Samsung and Google in terms of long-term software support.

India’s digital payments boom: BHIM app records over 300 pc growth in monthly transactions in 2025

The BHIM Payments App witnessed a sharp surge in usage in the calendar year 2025, reflecting India’s growing shift towards UPI-based digital payments. Monthly transactions on the app rose more than four times during the year, highlighting increased trust, ease of use, and expanding payment options for users across the country.

Data released by NPCI BHIM Services Limited shows that monthly transactions on the BHIM app grew from 38.97 million in January 2025 to 165.1 million in December 2025. This marks a growth of over 300 per cent in transaction volumes, with the platform recording an average month-on-month growth of around 14 per cent throughout the year.

Along with higher volumes, the value of transactions also saw a strong jump. In December 2025, transaction value crossed Rs 20,854 crore. Compared to the same period last year, volumes increased by nearly 390 per cent, while transaction value rose by more than 120 per cent. This indicates that users are increasingly using BHIM not only for small daily payments but also for high-value transactions.

Delhi emerged as one of the leading markets for the BHIM Payments App in 2025. The growth in the national capital was mainly driven by small-ticket, high-frequency transactions. Peer-to-peer payments accounted for 28 per cent of total transactions, followed by grocery purchases at 18 per cent. Fast-food outlets contributed 7 per cent, eating places 6 per cent, telecom services 4 per cent, service stations 3 per cent, and online marketplaces 2 per cent. The app also gained popularity for IPO mandate authentications and other high-value transactions, underlining its reliability for critical payment and authentication needs.

Commenting on the performance, Lalitha Nataraj, Managing Director and CEO of NPCI BHIM Services Limited, said the BHIM Payments App has been designed to offer safe, convenient, and inclusive digital payments, even in areas with low internet connectivity.
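
The growth figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below uses only the January and December 2025 volumes reported in the article. It assumes the "average month-on-month growth" is a compound (geometric mean) rate over the 11 monthly steps between the two endpoints, which the article does not state; the variable names are illustrative.

```python
# Monthly transaction volumes as reported in the article, in millions.
jan_2025 = 38.97
dec_2025 = 165.1

# Assumption: January to December spans 11 month-on-month steps.
months_elapsed = 11

# How many times larger December is than January.
multiple = dec_2025 / jan_2025

# Total percentage growth over the year.
total_growth_pct = (multiple - 1) * 100

# Compound monthly rate that carries January's volume to December's.
avg_mom_growth_pct = (multiple ** (1 / months_elapsed) - 1) * 100

print(f"Multiple: {multiple:.2f}x")
print(f"Total growth: {total_growth_pct:.0f}%")
print(f"Average month-on-month growth: {avg_mom_growth_pct:.1f}%")
```

Under that assumption the numbers line up with the article: a multiple above four, total growth above 300 per cent, and a compound monthly rate close to 14 per cent.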

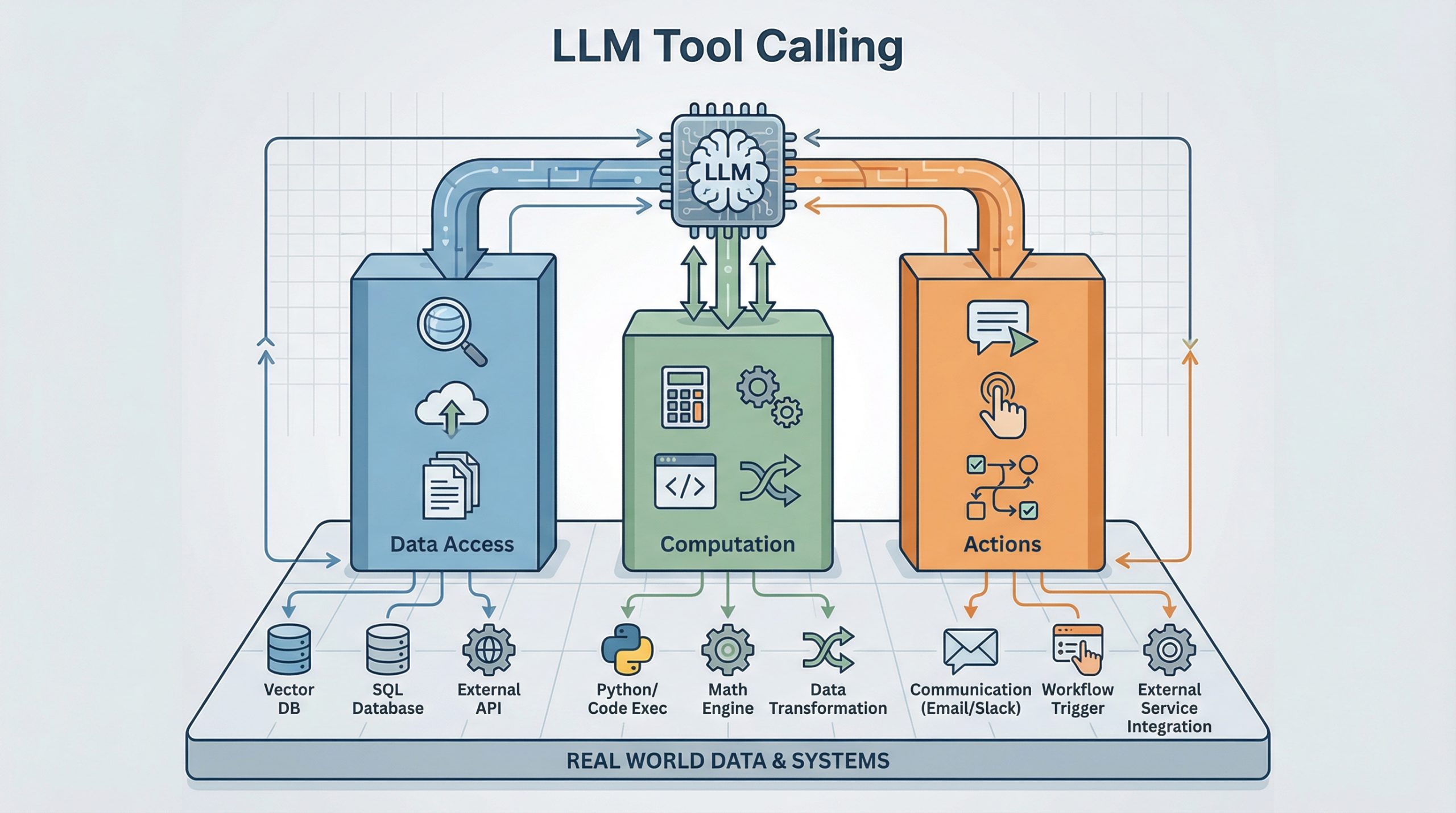

Mastering LLM Tool Calling: The Complete Framework for Connecting Models to the Real World


Goa ties up with Musk’s Starlink to enhance digital connectivity, disaster resilience

The Government of Goa on Thursday announced the signing of a Memorandum of Understanding (MoU) with Elon Musk-led Starlink Satellite Communications to strengthen digital infrastructure and connectivity across the state. Starlink’s partnership with the Department of Information Technology, Electronics & Communications (DITE&C) aims to explore advanced connectivity solutions to support digital inclusion, public infrastructure, coastal safety, and emergency response capabilities across the state.

“The Government of Goa is committed to harnessing technology to drive digital transformation and improve the lives of our citizens. This partnership with Starlink is a significant step towards achieving our vision of a digitally empowered Goa,” Goa Chief Minister Dr Pramod Sawant said in a statement.

“This partnership with Starlink will transform Goa’s governance landscape, leveraging modern technology to drive efficiency and responsiveness. By bridging the digital divide and enhancing public services, we will make Goa an even more attractive hub for investment, tourism, and talent, while ensuring our citizens reap the benefits of digital progress,” added Rohan Khaunte, Minister for ITE&C.

Under the MoU, DITE&C and Starlink will explore opportunities for collaboration in key areas, including digital connectivity, disaster resilience, and smart governance in Goa. Starlink Satellite Communications Private Limited, the Indian entity for SpaceX’s Starlink satellite internet services, has expressed interest in piloting initiatives related to connectivity solutions. These include providing satellite broadband connectivity to select locations with limited terrestrial networks, such as government schools, healthcare facilities, and disaster management centres. The company will also enhance emergency preparedness, build capacity through training, and explore affordable tariff structures for socially beneficial use cases. Additionally, it will support smart governance, tourism, and coastal development by providing connectivity solutions for public infrastructure and services.

Through this partnership, the Government of Goa reaffirmed its dedication to digital transformation by leveraging technology to drive innovation, economic prosperity, and citizen welfare, and by building a resilient digital ecosystem for a sustainable future.

Starlink India is yet to start its satellite services in the country. The satellite-based internet service is expected to be available for Rs 8,600 per month, while new subscribers will need to purchase a hardware kit priced at Rs 34,000.

A Gentle Introduction to Language Model Fine-tuning

    import dataclasses

    import tokenizers
    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    from torch import Tensor


    # Model architecture same as training script
    @dataclasses.dataclass
    class LlamaConfig:
        """Define Llama model hyperparameters."""
        vocab_size: int = 50000
        max_position_embeddings: int = 2048
        hidden_size: int = 768
        intermediate_size: int = 4*768
        num_hidden_layers: int = 12
        num_attention_heads: int = 12
        num_key_value_heads: int = 3


    class RotaryPositionEncoding(nn.Module):
        """Rotary position encoding."""

        def __init__(self, dim: int, max_position_embeddings: int) -> None:
            super().__init__()
            self.dim = dim
            self.max_position_embeddings = max_position_embeddings
            N = 10_000.0
            inv_freq = 1.0 / (N ** (torch.arange(0, dim, 2) / dim))
            inv_freq = torch.cat((inv_freq, inv_freq), dim=-1)
            position = torch.arange(max_position_embeddings)
            sinusoid_inp = torch.outer(position, inv_freq)
            self.register_buffer("cos", sinusoid_inp.cos())
            self.register_buffer("sin", sinusoid_inp.sin())

        def forward(self, x: Tensor) -> Tensor:
            batch_size, seq_len, num_heads, head_dim = x.shape
            device = x.device
            dtype = x.dtype
            cos = self.cos.to(device, dtype)[:seq_len].view(1, seq_len, 1, -1)
            sin = self.sin.to(device, dtype)[:seq_len].view(1, seq_len, 1, -1)
            x1, x2 = x.chunk(2, dim=-1)
            rotated = torch.cat((-x2, x1), dim=-1)
            return (x * cos) + (rotated * sin)


    class LlamaAttention(nn.Module):
        """Grouped-query attention with rotary embeddings."""

        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.hidden_size = config.hidden_size
            self.num_heads = config.num_attention_heads
            self.head_dim = self.hidden_size // self.num_heads
            self.num_kv_heads = config.num_key_value_heads
            assert (self.head_dim * self.num_heads) == self.hidden_size
            self.q_proj = nn.Linear(self.hidden_size, self.num_heads * self.head_dim, bias=False)
            self.k_proj = nn.Linear(self.hidden_size, self.num_kv_heads * self.head_dim, bias=False)
            self.v_proj = nn.Linear(self.hidden_size, self.num_kv_heads * self.head_dim, bias=False)
            self.o_proj = nn.Linear(self.num_heads * self.head_dim, self.hidden_size, bias=False)

        def forward(self, hidden_states: Tensor, rope: RotaryPositionEncoding) -> Tensor:
            bs, seq_len, dim = hidden_states.size()
            query_states = self.q_proj(hidden_states).view(bs, seq_len, self.num_heads, self.head_dim)
            key_states = self.k_proj(hidden_states).view(bs, seq_len, self.num_kv_heads, self.head_dim)
            value_states = self.v_proj(hidden_states).view(bs, seq_len, self.num_kv_heads, self.head_dim)
            attn_output = F.scaled_dot_product_attention(
                rope(query_states).transpose(1, 2),
                rope(key_states).transpose(1, 2),
                value_states.transpose(1, 2),
                is_causal=True,
                dropout_p=0.0,
                enable_gqa=True,
            )
            attn_output = attn_output.transpose(1, 2).reshape(bs, seq_len, self.hidden_size)
            return self.o_proj(attn_output)


    class LlamaMLP(nn.Module):
        """Feed-forward network with SwiGLU activation."""

        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.gate_proj = nn.Linear(config.hidden_size, config.intermediate_size, bias=False)
            self.up_proj = nn.Linear(config.hidden_size, config.intermediate_size, bias=False)
            self.act_fn = F.silu
            self.down_proj = nn.Linear(config.intermediate_size, config.hidden_size, bias=False)

        def forward(self, x: Tensor) -> Tensor:
            gate = self.act_fn(self.gate_proj(x))
            up = self.up_proj(x)
            return self.down_proj(gate * up)


    class LlamaDecoderLayer(nn.Module):
        """Single transformer layer for a Llama model."""

        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.input_layernorm = nn.RMSNorm(config.hidden_size, eps=1e-5)
            self.self_attn = LlamaAttention(config)
            self.post_attention_layernorm = nn.RMSNorm(config.hidden_size, eps=1e-5)
            self.mlp = LlamaMLP(config)

        def forward(self, hidden_states: Tensor, rope: RotaryPositionEncoding) -> Tensor:
            residual = hidden_states
            hidden_states = self.input_layernorm(hidden_states)
            attn_outputs = self.self_attn(hidden_states, rope=rope)
            hidden_states = attn_outputs + residual
            residual = hidden_states
            hidden_states = self.post_attention_layernorm(hidden_states)
            return self.mlp(hidden_states) + residual


    class LlamaModel(nn.Module):
        """The full Llama model without any pretraining heads."""

        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.rotary_emb = RotaryPositionEncoding(
                config.hidden_size // config.num_attention_heads,
                config.max_position_embeddings,
            )
            self.embed_tokens = nn.Embedding(config.vocab_size, config.hidden_size)
            self.layers = nn.ModuleList([
                LlamaDecoderLayer(config) for _ in range(config.num_hidden_layers)
            ])
            self.norm = nn.RMSNorm(config.hidden_size, eps=1e-5)

        def forward(self, input_ids: Tensor) -> Tensor:
            hidden_states = self.embed_tokens(input_ids)
            for layer in self.layers:
                hidden_states = layer(hidden_states, rope=self.rotary_emb)
            return self.norm(hidden_states)


    class LlamaForPretraining(nn.Module):
        def __init__(self, config: LlamaConfig) -> None:
            super().__init__()
            self.base_model = LlamaModel(config)
            self.lm_head = nn.Linear(config.hidden_size, config.vocab_size, bias=False)

        def forward(self, input_ids: Tensor) -> Tensor:
            hidden_states = self.base_model(input_ids)
            return self.lm_head(hidden_states)


    def apply_repetition_penalty(logits: Tensor, tokens: list[int], penalty: float) -> Tensor:
        """Apply repetition penalty to the logits."""
        for tok in tokens:
            if logits[tok] > 0:
                logits[tok] /= penalty
            else:
                logits[tok] *= penalty
        return logits


    @torch.no_grad()
    def generate(model, tokenizer, prompt, max_tokens=100, temperature=1.0,
                 repetition_penalty=1.0, repetition_penalty_range=10, top_k=50,
                 device=None) -> str:
        """Generate text autoregressively from a prompt.

        Args:
            model: The trained LlamaForPretraining model
            tokenizer: The tokenizer
            prompt: Input text prompt
            max_tokens: Maximum number of tokens to generate
            temperature: Sampling temperature (higher = more random)
            repetition_penalty: Penalty for repeating tokens
            repetition_penalty_range: Number of previous tokens to consider for repetition penalty
            top_k: Only sample from top k most likely tokens
            device: Device the model is loaded on

        Returns:
            Generated text
        """
        # Turn model to evaluation mode: Norm layer will work differently
        model.eval()

        # Get special token IDs
        bot_id = tokenizer.token_to_id("[BOT]")
        eot_id = tokenizer.token_to_id("[EOT]")

        # Tokenize the prompt into integer tensor
        prompt_tokens = [bot_id] + tokenizer.encode(" " + prompt).ids
        input_ids = torch.tensor([prompt_tokens], dtype=torch.int64, device=device)

        # Recursively generate tokens
        generated_tokens = []
        for _step in range(max_tokens):
            # Forward pass through model
            logits = model(input_ids)

            # Get logits for the last token
            next_token_logits = logits[0, -1, :] / temperature

            # Apply repetition penalty
            if repetition_penalty != 1.0 and len(generated_tokens) > 0:
                next_token_logits = apply_repetition_penalty(
                    next_token_logits,
                    generated_tokens[-repetition_penalty_range:],
                    repetition_penalty,
                )

            # Apply top-k filtering
            if top_k > 0:
                top_k_logits = torch.topk(next_token_logits, top_k)[0]
                indices_to_remove = next_token_logits < top_k_logits[-1]
                next_token_logits[indices_to_remove] = float("-inf")

            # Sample from the filtered distribution
            probs = F.softmax(next_token_logits, dim=-1)
            next_token = torch.multinomial(probs, num_samples=1)

            # Early stop if EOT token is generated
            if next_token.item() == eot_id:
                break

            # Append the new token to input_ids for next iteration
            input_ids = torch.cat([input_ids, next_token.unsqueeze(0)], dim=1)
            generated_tokens.append(next_token.item())

        # Decode all generated tokens
        return tokenizer.decode(generated_tokens)


    checkpoint = "llama_model_final.pth"  # saved model checkpoint
    tokenizer = "bpe_50K.json"  # saved tokenizer
    max_tokens = 100
    temperature = 0.9
    top_k = 50
    penalty = 1.1
    penalty_range = 10

    # Load tokenizer and model
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    tokenizer = tokenizers.Tokenizer.from_file(tokenizer)
    config = LlamaConfig()
    model = LlamaForPretraining(config).to(device)
    model.load_state_dict(torch.load(checkpoint, map_location=device))

    prompt = "Once upon a time, there was"
    response = generate(
        model=model,
        tokenizer=tokenizer,
        prompt=prompt,
        max_tokens=max_tokens,
        temperature=temperature,
        top_k=top_k,
        repetition_penalty=penalty,
        repetition_penalty_range=penalty_range,
        device=device,
    )
    print(prompt)
    print("-" * 20)
    print(response)
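
To make the sampling steps inside `generate()` easier to follow, here is a small pure-Python sketch that runs the same sequence (temperature scaling, repetition penalty, top-k filtering, softmax) on a toy example. It is only an illustration under stated assumptions: the five-token vocabulary and its logits are hypothetical, plain lists stand in for tensors, the helper names `top_k_filter` and `softmax` are not from the script, and this version of `apply_repetition_penalty` returns a copy instead of modifying its input.

```python
import math


def apply_repetition_penalty(logits, recent_tokens, penalty):
    """Return a copy of logits with recently generated tokens made less likely."""
    out = list(logits)
    for tok in recent_tokens:
        # Positive logits shrink, negative logits grow more negative.
        out[tok] = out[tok] / penalty if out[tok] > 0 else out[tok] * penalty
    return out


def top_k_filter(logits, k):
    """Keep logits at or above the k-th largest; set the rest to -inf."""
    threshold = sorted(logits, reverse=True)[k - 1]
    return [x if x >= threshold else float("-inf") for x in logits]


def softmax(logits):
    """Convert logits to probabilities; -inf entries get probability 0."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for a 5-token vocabulary (not from a real model).
logits = [2.0, 1.0, 0.5, -1.0, 3.0]
temperature = 0.5  # below 1.0 sharpens the distribution

scaled = [x / temperature for x in logits]
# Pretend token 4 was generated recently, so it is penalized.
penalized = apply_repetition_penalty(scaled, recent_tokens=[4], penalty=2.0)
filtered = top_k_filter(penalized, k=2)
probs = softmax(filtered)

for token_id, p in enumerate(probs):
    print(f"token {token_id}: p = {p:.4f}")
```

In this toy run token 4 starts with the largest logit, but the penalty pushes it below token 0, and only those two tokens survive the top-k filter. A sampler such as `torch.multinomial` in the script would then draw the next token from `probs`.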

Wire vs Wireless mouse: Which is faster and better for work and gaming? Features, Performance and speed compared

Wire vs Wireless mouse: In today’s world of computers and laptops, choosing the right mouse may seem like a casual choice, but it can make a big difference in how smoothly you work or play games. Wired and wireless mice are the two main options, and each offers different features. Experts say that both types are good, but the best choice depends on how you plan to use your mouse.

A wired mouse connects to your computer with a cable. You plug it into a USB port, and it works right away without any additional setup. Because of this direct link, wired mice offer a steady and reliable connection at all times.

On the other hand, a wireless mouse connects through Bluetooth or a small USB receiver plugged into your laptop. This means you don’t need to worry about cables, making it easy to carry and work around. Wireless mice may need to be paired before use and rely on batteries or internal rechargeable power.

Which is faster?

When it comes to speed and performance, wired mice usually have the edge. Because there is no wireless signal involved, data travels over the cable from the mouse to the computer with minimal delay. This means lower latency, the tiny delay between moving the mouse and seeing the cursor move on screen.

For most everyday tasks, such as browsing or office work, the difference between wired and wireless may be hard to notice. However, for activities like competitive gaming or detailed graphic design, that slight delay can matter. Many wired gaming mice also support very high polling rates, reporting position updates thousands of times per second.

Wireless mouse technology has improved a lot in recent years, and high-end models now offer performance close to wired ones. However, wired mice typically still win in terms of raw speed and response.
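
The link between polling rate and delay is simple arithmetic: a mouse that reports N times per second sends an update every 1000/N milliseconds. The sketch below shows that conversion. The function name `report_interval_ms` and the sample rates are illustrative assumptions, not figures from the article, and the interval covers only the reporting step, not wireless transmission or any processing on the computer.

```python
def report_interval_ms(polling_rate_hz: float) -> float:
    """Time between consecutive position reports, in milliseconds."""
    if polling_rate_hz <= 0:
        raise ValueError("polling rate must be positive")
    return 1000.0 / polling_rate_hz


# Illustrative polling rates (assumed for this example).
for rate in (125, 500, 1000, 8000):
    interval = report_interval_ms(rate)
    print(f"{rate:>5} Hz -> {interval:.3f} ms between reports")
```

A rate of 1,000 reports per second therefore leaves at most one millisecond between updates, which is why the difference is hard to notice in everyday use.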

Convenience and features

Wireless mice have an edge in flexibility and comfort. Without a cable dragging on the desk, you get a cleaner setup and more freedom to move the mouse around, which is especially useful if you use a laptop or travel often. Wireless models also help reduce desk clutter and feel more modern. Many come with long battery life and can last weeks between charges. However, they do require battery management, and sometimes a lost dongle can be an issue.

Wired mice, in contrast, never need charging and usually cost less. Their simple plug-and-use nature makes them dependable for everyday use without extra steps.

Which one wins?

There is no single best choice for everyone. If you prioritise precision, low latency, and constant performance, a wired mouse is the better option. But if you value mobility, desk tidiness, and convenience, a wireless mouse may suit you better. In short, the choice of mouse depends on how you use your computer or laptop – whether for work, gaming, or everyday tasks. Each type has its place, and both continue to improve with new technology.

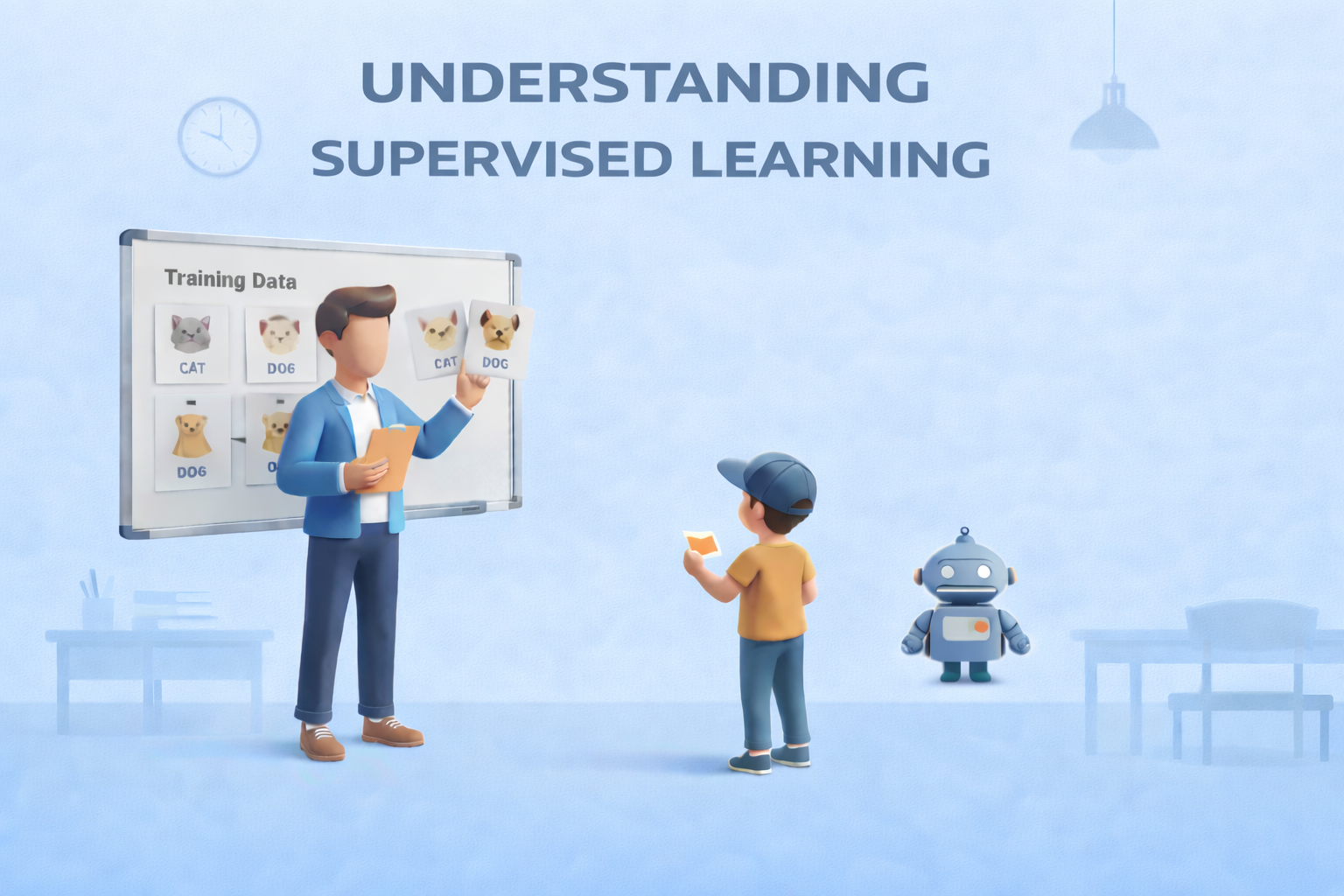

Supervised Learning: The Foundation of Predictive Modeling

Editor’s note: This article is a part of our series on visualizing the foundations of machine learning.

Welcome to the latest entry in our series on visualizing the foundations of machine learning. In this series, we will aim to break down important and often complex technical concepts into intuitive, visual guides to help you master the core principles of the field. This entry focuses on supervised learning, the foundation of predictive modeling.

The Foundation of Predictive Modeling

Supervised learning is widely regarded as the foundation of predictive modeling in machine learning. But why? At its core, it is a learning paradigm in which a model is trained on labeled data: examples where both the input features and the correct outputs (ground truth) are known. By learning from these labeled examples, the model can make accurate predictions on new, unseen data.

A helpful way to understand supervised learning is through the analogy of learning with a teacher. During training, the model is shown examples along with the correct answers, much like a student receiving guidance and correction from an instructor. Each prediction the model makes is compared to the ground truth label, feedback is provided, and adjustments are made to reduce future mistakes. Over time, this guided process helps the model internalize the relationship between inputs and outputs.

The objective of supervised learning is to learn a reliable mapping from features to labels. This process revolves around three essential components. First is the training data, which consists of labeled examples and serves as the foundation for learning. Second is the learning algorithm, which iteratively adjusts model parameters to minimize prediction error on the training data. Finally, the trained model emerges from this process, capable of generalizing what it has learned to make predictions on new data.

Supervised learning problems generally fall into two major categories. Regression tasks focus on predicting continuous values, such as house prices or temperature readings. Classification tasks, on the other hand, involve predicting discrete categories, such as identifying spam versus non-spam emails or recognizing objects in images. Despite their differences, both rely on the same core principle of learning from labeled examples.

Supervised learning plays a central role in many real-world machine learning applications. It typically requires large, high-quality datasets with reliable ground truth labels, and its success depends on how well the trained model can generalize beyond the data it was trained on. When applied effectively, supervised learning enables machines to make accurate, actionable predictions across a wide range of domains.

The visualization below provides a concise summary of this information for quick reference. You can download a PDF of the infographic in high resolution here.
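
The three components described above (training data, learning algorithm, trained model) can be sketched in a few lines of plain Python for a regression task. This is a minimal illustration under assumptions that are not in the article: the data are synthetic and generated from y = 2x + 1, the learning algorithm is batch gradient descent on mean squared error, and the names `fit_line` and `predict`, the learning rate, and the epoch count are all hypothetical choices.

```python
# 1. Training data: labeled examples (feature x, ground-truth label y).
#    Synthetic data generated from y = 2x + 1 (an assumption for this demo).
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [1.0, 3.0, 5.0, 7.0, 9.0]


def fit_line(xs, ys, lr=0.05, epochs=2000):
    """2. Learning algorithm: gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction error on every training example with current parameters.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Adjust parameters to reduce the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# 3. Trained model: the learned parameters define the mapping from x to y.
w, b = fit_line(train_x, train_y)


def predict(x):
    """Apply the learned mapping to a new, unseen input."""
    return w * x + b


print(f"learned w = {w:.3f}, b = {b:.3f}")
print(f"prediction for unseen x = 10: {predict(10.0):.3f}")
```

Because the labels follow an exact line, the learned parameters settle close to w = 2 and b = 1, and the model extrapolates to an input it never saw. A classification task would follow the same loop with a different output and loss, for example a logistic output with cross-entropy.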
Supervised Learning: Visualizing the Foundations of Machine Learning (Image by Author)

Machine Learning Mastery Resources

These are some selected resources for learning more about supervised learning:

Supervised and Unsupervised Machine Learning Algorithms – This beginner-level article explains the differences between supervised, unsupervised, and semi-supervised learning, outlining how labeled and unlabeled data are used and highlighting common algorithms for each approach. Key takeaway: Knowing when to use labeled versus unlabeled data is fundamental to choosing the right learning paradigm.

Simple Linear Regression Tutorial for Machine Learning – This practical, beginner-friendly tutorial introduces simple linear regression, explaining how a straight-line model is used to describe and predict the relationship between a single input variable and a numerical output. Key takeaway: Simple linear regression models relationships using a line defined by learned coefficients.

Linear Regression for Machine Learning – This introductory article provides a broader overview of linear regression, covering how the algorithm works, key assumptions, and how it is applied in real-world machine learning workflows. Key takeaway: Linear regression serves as a core baseline algorithm for numerical prediction tasks.

4 Types of Classification Tasks in Machine Learning – This article explains the four primary types of classification problems (binary, multi-class, multi-label, and imbalanced classification) using clear explanations and practical examples. Key takeaway: Correctly identifying the type of classification problem guides model selection and evaluation strategy.

One-vs-Rest and One-vs-One for Multi-Class Classification – This practical tutorial explains how binary classifiers can be extended to multi-class problems using One-vs-Rest and One-vs-One strategies, with guidance on when to use each. Key takeaway: Multi-class problems can be solved by decomposing them into multiple binary classification tasks.

Be on the lookout for additional entries in our series on visualizing the foundations of machine learning.

About Matthew Mayo

Matthew Mayo (@mattmayo13) holds a master’s degree in computer science and a graduate diploma in data mining. As managing editor of KDnuggets & Statology, and contributing editor at Machine Learning Mastery, Matthew aims to make complex data science concepts accessible. His professional interests include natural language processing, language models, machine learning algorithms, and exploring emerging AI. He is driven by a mission to democratize knowledge in the data science community. Matthew has been coding since he was 6 years old.