Artificial intelligence will not replace human jobs but will reshape work by automating tasks, leading executives from technology companies said at the ongoing World Economic Forum 2026 in Davos.

Kian Katanforoosh, Founder and Chief Executive Officer of Workera, said language matters when describing AI. “Personally, I’m not a fan of calling AI agents or co-workers,” he told a WEF session, arguing that AI excels at tasks but can’t outperform humans at “entire jobs.” Humans, by contrast, perform “hundreds of tasks at times.” “Predictions that AI would wholesale replace jobs have so far been wrong,” he added.

Munjal Shah, co-founder and CEO of Hippocratic AI, agreed that AI will augment human employees at a massive scale rather than replace them. He forecast a future of “8 billion people and 80 billion AIs,” saying most systems will enable new use cases rather than replace existing human roles. He pointed to an AI system that called thousands of people during a heatwave, guiding them to cooler locations and offering health advice. Getting it right required rigorous testing. “We have models that check models that check models,” he said.

Kate Kallot, Founder and CEO of Amini, said AI firmly remains a “tool” that cannot make value-based decisions. It “can’t choose the best outcomes” because it doesn’t yet have the right inputs, Kallot said.

Christoph Schweizer, CEO of BCG, said the experience of working with AI can feel like collaborating with a co-worker. “You are now in a reality where it feels like a co-worker, whether you call it that or not,” he said. Schweizer argued that success depends on how companies change their organizations, not just their tools. “They will succeed if they really change how their people work,” he said. He urged that AI be treated as “a CEO problem” that cannot be delegated.
Enrique Lores, President and Chief Executive Officer of HP, urged balance in how AI is judged, cautioning against being more demanding of AI co-workers than of human employees. In HP’s call centres, AI sometimes gives the wrong answer, yet overall accuracy is higher than before and customer satisfaction has improved, he added.

The Beginner’s Guide to Computer Vision with Python

In this article, you will learn how to complete three beginner-friendly computer vision tasks in Python (edge detection, simple object detection, and image classification) using widely available libraries.

Topics we will cover include:
- Installing and setting up the required Python libraries.
- Detecting edges and faces with classic OpenCV tools.
- Training a compact convolutional neural network for image classification.

Let’s explore these techniques.

Introduction

Computer vision is an area of artificial intelligence that gives computer systems the ability to analyze, interpret, and understand visual data, namely images and videos. It encompasses everything from classical tasks like image filtering, edge detection, and feature extraction, to more advanced tasks such as image and video classification and complex object detection, which require building machine learning and deep learning models.

Thankfully, Python libraries like OpenCV and TensorFlow make it possible, even for beginners, to create and experiment with their own computer vision solutions using just a few lines of code.

This article is designed to guide beginners interested in computer vision through the implementation of three fundamental computer vision tasks:
- Image processing for edge detection
- Simple object detection, like faces
- Image classification

For each task, we provide a minimal working example in Python that uses freely available or built-in data, accompanied by the necessary explanations. You can reliably run this code in a notebook-friendly environment such as Google Colab, or locally in your own IDE.

Setup and Preparation

An important prerequisite for using the code provided in this article is to install several Python libraries.
If you run the code in a notebook, paste this command into an initial cell (in notebooks, prefix it with “!”):

    pip install opencv-python tensorflow scikit-image matplotlib numpy

Image Processing With OpenCV

OpenCV is a Python library that offers a range of tools for efficiently building computer vision applications, from basic image transformations to simple object detection tasks. It is characterized by its speed and broad range of functionalities.

One of the primary task areas supported by OpenCV is image processing, which focuses on applying transformations to images, generally with two goals: improving their quality or extracting useful information. Examples include converting color images to grayscale, detecting edges, smoothing to reduce noise, and thresholding to separate specific regions (e.g. foreground from background).

The first example in this guide uses a built-in sample image provided by the scikit-image library to detect edges in the grayscale version of an originally full-color image.
    from skimage import data
    import cv2
    import matplotlib.pyplot as plt

    # Load a sample RGB image (astronaut) from scikit-image
    image = data.astronaut()

    # Convert RGB (scikit-image) to BGR (OpenCV convention), then to grayscale
    image = cv2.cvtColor(image, cv2.COLOR_RGB2BGR)
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

    # Canny edge detection with lower and upper hysteresis thresholds of 100 and 200
    edges = cv2.Canny(gray, 100, 200)

    # Display the grayscale input and the edge map side by side
    plt.figure(figsize=(10, 4))

    plt.subplot(1, 2, 1)
    plt.imshow(gray, cmap="gray")
    plt.title("Grayscale Image")
    plt.axis("off")

    plt.subplot(1, 2, 2)
    plt.imshow(edges, cmap="gray")
    plt.title("Edge Detection")
    plt.axis("off")

    plt.show()

The process applied in the code above is simple, yet it illustrates a very common image processing scenario:
- Load and preprocess an image for analysis: convert the RGB image to OpenCV’s BGR convention and then to grayscale for further processing. Conversion codes like cv2.COLOR_RGB2BGR and cv2.COLOR_BGR2GRAY, passed to cv2.cvtColor, make this straightforward.
- Use the built-in Canny edge detection algorithm to identify edges in the image.
- Plot the results: the grayscale image used for edge detection and the resulting edge map.

[Figure: Edge detection with OpenCV]

Object Detection With OpenCV

Time to go beyond classic pixel-level processing and identify higher-level objects within an image.
OpenCV makes this possible with pre-trained models like Haar cascades, which can be applied to many real-world images and work well for simple detection use cases, such as detecting human faces.

The code below uses the same astronaut image as in the previous section, converts it to grayscale, and applies a Haar cascade trained for identifying frontal faces. The cascade’s definition is stored in haarcascade_frontalface_default.xml, which ships with OpenCV.

    from skimage import data
    import cv2
    import matplotlib.pyplot as plt

    # Load the sample image and convert to BGR (OpenCV convention)
    image = data.astronaut()
    image = cv2.cvtColor(image, cv2.COLOR_RGB2BGR)

    # Haar cascade is an OpenCV classifier trained for detecting faces
    face_cascade = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_frontalface_default.xml"
    )

    # The model requires grayscale images
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

    # Detect faces; each detection is an (x, y, width, height) rectangle
    faces = face_cascade.detectMultiScale(
        gray, scaleFactor=1.1, minNeighbors=5
    )

    # Draw bounding boxes
    output = image.copy()
    for (x, y, w, h) in faces:
        cv2.rectangle(output, (x, y), (x + w, y + h), (0, 255, 0), 2)

    # Display (convert back to RGB for matplotlib)
    plt.imshow(cv2.cvtColor(output, cv2.COLOR_BGR2RGB))
    plt.title("Face Detection")
    plt.axis("off")
    plt.show()

OnePlus To Be Dismantled? What It Means For Existing Users As India CEO Breaks Silence And Says…

OnePlus Dismantled In India: Chinese smartphone brand OnePlus appears to be entering an uncertain phase. Once known for disrupting the market with bold launches and strong fan-driven buzz, the brand has recently found itself at the centre of reports claiming it is being “dismantled” by parent company Oppo. However, the company has strongly refuted these claims.

Responding to a report by Android Headlines, OnePlus India CEO Robin Liu said the reports are false and misleading. He clarified that the company is not shutting down and that its operations in India continue as usual. Liu also stressed that there are no plans by the parent group to wind down the OnePlus brand.

The claims originated from Android Headlines, which stated that OnePlus is being “wound down and put on life support”. The publication said its conclusions are based on an investigation spanning three continents, along with market data from four independent analyst firms.

According to the report, OnePlus may not disappear overnight. Instead, it could gradually lose its distinct identity, following a path similar to brands such as BlackBerry, Micromax, Nokia, HTC and LG, which slowly faded from relevance.

OnePlus’s Strategic Shift Since 2021

This is not the first major shift for OnePlus. In 2021, the company merged parts of its design and research teams with Oppo as part of a broader restructuring. Since then, OnePlus has steadily moved away from its original positioning as a disruptive “flagship killer” that once challenged Samsung Galaxy and Apple iPhone devices. At the time, the company said the move would help it share resources, accelerate product development and continue operating as an independent brand.

OnePlus Shipments Fall Sharply in 2024

Recent market data suggests growing pressure.
In 2024, OnePlus shipments fell by more than 20 percent, dropping from around 17 million units to 13–14 million. In India, its market share declined from 6.1 percent to 3.9 percent, while in China it slipped from 2 percent to 1.6 percent.

During the same period, Oppo recorded a 2.8 percent increase. According to Omdia analysts, this growth was driven entirely by Oppo, with key areas such as product strategy, research and development, and market decisions becoming increasingly centralised under the parent brand.

Importantly, the report does not suggest that OnePlus is shutting down or exiting key markets such as India. India accounts for more than half of OnePlus’s annual sales, and the brand continues to remain active despite a shrinking market share.

Is OnePlus Undergoing Internal Restructuring?

In fact, OnePlus recently hosted a high-profile launch event in India for the OnePlus 15R and Pad Go 2, backed by significant marketing spend. The company has also signed several celebrity partnerships, including cricketers Jasprit Bumrah and Smriti Mandhana, racing driver Kush Maini and singer Armaan Malik.

Taken together, the developments point to internal restructuring and closer alignment with Oppo as part of a broader global reset. For now, these changes do not appear to be slowing OnePlus’s push in markets like India.

What It Means For Existing OnePlus Users

For existing users, there is little cause for concern. OnePlus continues to roll out new products, with more devices reportedly in the pipeline. This indicates that inventory, spare parts and after-sales support will remain available.
Warranties are expected to stay valid, and users can continue to expect regular Android updates and security patches in the near future.

AI Impact Summit 2026: India Highlights Three Key Objectives At Davos; IT Minister Meets Google Cloud CEO

AI Impact Summit 2026 In India: As India prepares to host the AI Impact Summit in New Delhi next month, the spotlight is firmly on the country’s growing role on the global technology stage. Union Minister Ashwini Vaishnaw, speaking at Davos, emphasised that the summit is built around three clear goals, reflecting India’s steady rise as a trusted global partner, driven by its push for sovereign AI models, robust safety frameworks, and a rapidly strengthening semiconductor ecosystem.

Key Objectives Of Upcoming AI Impact Summit

The AI Impact Summit has been designed around three clear goals. The first is impact, focusing on how AI models, applications, and the wider AI ecosystem can boost efficiency, raise productivity, and create broader benefits for the economy. The second goal is accessibility, with a special focus on making AI more affordable and usable for India and the Global South.

Referring to India’s success with UPI and the Digital Public Infrastructure stack, Union Minister Ashwini Vaishnaw said the world is now watching to see if India can build a similar, scalable, and cost-effective framework for AI.

The third goal is safety. He stressed the importance of addressing concerns around AI by creating strong guardrails, clear guidelines, and built-in safety features. Vaishnaw added that India should also develop its own regulatory and safety framework for AI.

The AI Impact Summit, scheduled for next month, will bring together global policymakers and technology leaders, and is expected to feature major investment announcements along with the launch of India’s AI models.

India Crosses 2 Lakh Startups

India is now home to nearly 2 lakh startups and ranks among the top three startup ecosystems in the world. Union Minister Ashwini Vaishnaw said that 24 Indian startups are currently working on chip design, one of the toughest areas for new companies.
Of these, 18 have already secured venture capital funding, reflecting strong investor confidence in India’s deep-tech potential.

The minister also shared details of India’s semiconductor strategy. He pointed out that around 75 per cent of global chip demand falls in the 28nm to 90nm range, which is used in sectors such as electric vehicles, automobiles, railways, defence, telecom equipment, and a large portion of consumer electronics. Ashwini Vaishnaw said India is aiming to build strong manufacturing capabilities in this segment first before moving to more advanced technologies. In collaboration with industry partners like IBM, India has a clear roadmap to progress from 28nm to 7nm chips by 2030, and further to 3nm by 2032.

IT Minister Ashwini Vaishnaw Meets Google Cloud CEO

Ashwini Vaishnaw also met Google Cloud CEO Thomas Kurian in Davos, where Google reaffirmed its growing commitment to India’s AI ecosystem. This includes plans for a $15 billion AI data centre in Vizag, Andhra Pradesh, along with expanded partnerships with Indian startups.

During his visit, Vaishnaw also met Meta’s Chief Global Affairs Officer, Joel Kaplan, and discussed measures to ensure the safety of social media users, particularly in addressing the risks posed by deepfakes and AI-generated content.

(With IANS Inputs)

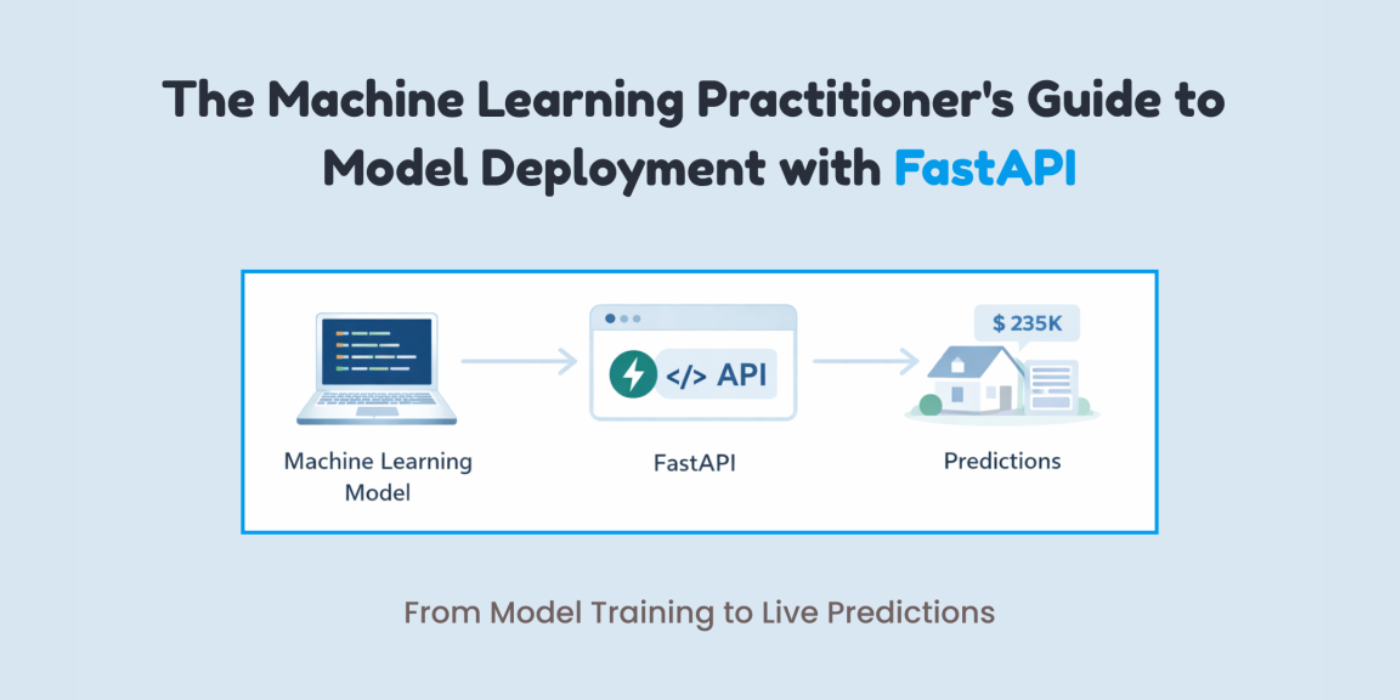

The Machine Learning Practitioner’s Guide to Model Deployment with FastAPI

In this article, you will learn how to package a trained machine learning model behind a clean, well-validated HTTP API using FastAPI, from training to local testing and basic production hardening.

Topics we will cover include:
- Training, saving, and loading a scikit-learn pipeline for inference
- Building a FastAPI app with strict input validation via Pydantic
- Exposing, testing, and hardening a prediction endpoint with health checks

Let’s explore these techniques.

If you’ve trained a machine learning model, a common question comes up: “How do we actually use it?” This is where many machine learning practitioners get stuck. Not because deployment is hard, but because it is often explained poorly. Deployment is not about uploading a .pkl file and hoping it works. It simply means allowing another system to send data to your model and get predictions back. The easiest way to do this is by putting your model behind an API.

FastAPI makes this process simple. It connects machine learning and backend development in a clean way. It is fast, provides automatic API documentation with Swagger UI, validates input data for you, and keeps the code easy to read and maintain. If you already use Python, FastAPI feels natural to work with.

In this article, you will learn how to deploy a machine learning model using FastAPI step by step. In particular, you will learn:
- How to train, save, and load a machine learning model
- How to build a FastAPI app and define valid inputs
- How to create and test a prediction endpoint locally
- How to add basic production features like health checks and dependencies

Let’s get started!

Step 1: Training & Saving the Model

The first step is to train your machine learning model. I am training a model to learn how different house features influence the final price. You can use any model.
Create a file called train_model.py:

    import pandas as pd
    from sklearn.linear_model import LinearRegression
    from sklearn.pipeline import Pipeline
    from sklearn.preprocessing import StandardScaler
    import joblib

    # Sample training data
    data = pd.DataFrame({
        "rooms": [2, 3, 4, 5, 3, 4],
        "age": [20, 15, 10, 5, 12, 7],
        "distance": [10, 8, 5, 3, 6, 4],
        "price": [100, 150, 200, 280, 180, 250]
    })

    X = data[["rooms", "age", "distance"]]
    y = data["price"]

    # Pipeline = preprocessing + model
    pipeline = Pipeline([
        ("scaler", StandardScaler()),
        ("model", LinearRegression())
    ])

    pipeline.fit(X, y)

After training, you have to save the model.

    # Save the entire pipeline
    joblib.dump(pipeline, "house_price_model.joblib")

Now run train_model.py from your terminal. You now have a trained model plus preprocessing pipeline, safely stored.

Step 2: Creating a FastAPI App

This is easier than you think.
Create a file called main.py:

    from fastapi import FastAPI
    from pydantic import BaseModel
    import joblib

    app = FastAPI(title="House Price Prediction API")

    # Load model once at startup
    model = joblib.load("house_price_model.joblib")

Your model is now:
- Loaded once
- Kept in memory
- Ready to serve predictions

This is already better than most beginner deployments.

Step 3: Defining What Input Your Model Expects

This is where many deployments break. Your model does not accept “JSON.” It accepts numbers in a specific structure. FastAPI uses Pydantic to enforce this cleanly.

You might be wondering what Pydantic is: Pydantic is a data validation library that FastAPI uses to make sure the input your API receives matches exactly what your model expects. It automatically checks data types, required fields, and formats before the request ever reaches your model.

    class HouseInput(BaseModel):
        rooms: int
        age: float
        distance: float

This does two things for you:
- Validates incoming data
- Documents your API automatically

This ensures no more “why is my model crashing?” surprises.

Step 4: Creating the Prediction Endpoint

Now you have to make your model usable by creating a prediction endpoint.

    @app.post("/predict")
    def predict_price(data: HouseInput):
        # Feature order must match the columns used in training
        features = [[
            data.rooms,
            data.age,
            data.distance
        ]]

        prediction = model.predict(features)

        return {
            "predicted_price": round(prediction[0], 2)
        }

That’s your deployed model. You can now send a POST request and get predictions back.
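To make "send a POST request" concrete, here is a minimal client sketch that uses only the Python standard library. It assumes the API is served locally on uvicorn's default address, http://127.0.0.1:8000, as set up in the next step. The helper names `build_predict_request` and `send` are hypothetical, chosen for illustration only. The sketch builds the request and prints it without contacting the server, so it runs even before the API is up.

```python
import json
import urllib.request

# Assumption: the API runs locally on uvicorn's default host and port.
BASE_URL = "http://127.0.0.1:8000"


def build_predict_request(rooms, age, distance, base_url=BASE_URL):
    """Build (but do not send) a JSON POST request for the /predict endpoint."""
    # Field names mirror the HouseInput model defined in main.py.
    payload = {"rooms": rooms, "age": age, "distance": distance}
    return urllib.request.Request(
        url=f"{base_url}/predict",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=5.0):
    """Send the request and decode the JSON body (needs the API to be running)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


request = build_predict_request(rooms=4, age=8, distance=5)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# With the server running, send(request) returns the parsed JSON response.
```

If a field is missing or has the wrong type, FastAPI rejects the request with a 422 validation error before it reaches the model. In this sketch that would surface as an `urllib.error.HTTPError` raised from `send`.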
Step 5: Running Your API Locally

Run this command in your terminal:

    uvicorn main:app --reload

Open your browser and go to:

    http://127.0.0.1:8000/docs

You’ll see the Swagger UI page, which gives you:
- Interactive API docs
- A form to test your model
- Real-time validation

Step 6: Testing with Real Input

To test it out, expand the /predict endpoint by clicking its arrow, then click on Try it out. Now test it with some data. I am using the following values:

    {
      "rooms": 4,
      "age": 8,
      "distance": 5
    }

Now, click on Execute to get the response. The response is:

    {
      "predicted_price": 246.67
    }

Your model is now accepting real data, returning predictions, and ready to integrate with apps, websites, or other services.

Step 7: Adding a Health Check

You don’t need Kubernetes on day one, but do consider: Error handling (bad input

AI Must Be Multilingual, Voice-Enabled To Ensure Better Healthcare Services: Officials

New Delhi: For artificial intelligence (AI) to deliver meaningful public value in a linguistically diverse country like India, it must be multilingual and voice-enabled, ensuring that language does not become a barrier to accessing healthcare services, according to Amitabh Nag, CEO, Digital India BHASHINI Division (DIBD).

Nag said that language AI can significantly enhance citizen engagement, grievance redressal mechanisms, clinical documentation, and the overall accessibility of digital public health platforms. He was speaking at a DIBD event in Bhubaneswar, which brought together senior officials from the Union and state governments, technical institutions, and implementing agencies to review progress and accelerate the adoption of digital health initiatives across the country. Nag highlighted that as digital health systems scale across the country, the adoption of artificial intelligence becomes a natural progression.

A key highlight was the signing of an MoU between the National Health Authority and the Digital India BHASHINI Division to enable multilingual translation services and AI-powered language support across NHA’s digital health platforms, including AB PM-JAY and ABDM.

Kiran Gopal Vaska, Joint Secretary, Ayushman Bharat Digital Mission (ABDM), highlighted the practical benefits of language AI in healthcare delivery. He noted that AI-enabled tools such as voice-to-text and natural language processing can help address the time constraints faced by doctors by enabling seamless patient–doctor interactions, while allowing electronic health records to be created automatically, thereby improving efficiency and strengthening digital health systems.
The Digital India BHASHINI Division will support the National Health Authority in deploying multilingual and voice-enabled solutions across beneficiary-facing and administrative platforms, with provisions for responsible data governance, secure system integration, and continuous improvement of language models through real-world usage and feedback. The deliberations at the event were aligned with the national objective of advancing digital health through AI-driven innovation and inclusive language access, with a focus on ensuring that digital health platforms are usable, accessible, and effective across linguistic and geographic boundaries.

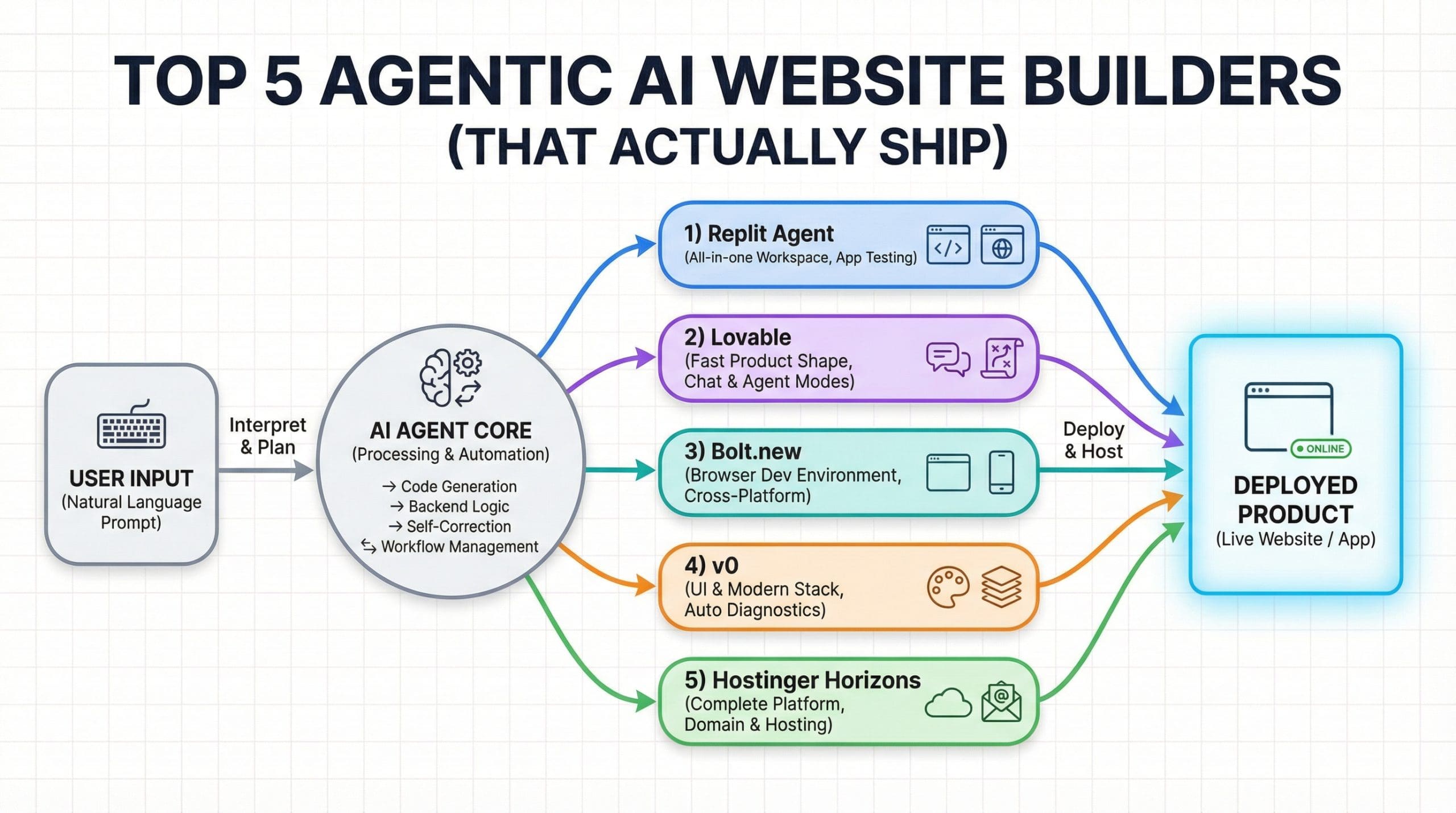

Top 5 Agentic AI Website Builders (That Actually Ship)

Introduction

I have been building a payment platform using vibe coding, and I do not have a frontend background. Instead of spending weeks learning UI frameworks, I started using tools like v0 and other agentic website builders to create professional dashboards, landing pages, and wallet interfaces.

That experience sparked my curiosity. I wanted to understand which AI website builders actually work end to end: not just tools that generate a pretty frontend, but ones that can also handle backend logic, connect data, and deploy a working product in minutes.

After testing and researching what is available, I put together this list of the top agentic AI website builders that go beyond design. These tools help you build real applications, manage the backend, and ship fast without getting stuck in setup or configuration.

1. Replit Agent

Replit Agent is an advanced AI-powered tool designed to transform natural language descriptions into fully functional applications, allowing users to build software from scratch in a matter of minutes. By leveraging industry-leading models, the Agent streamlines the entire development process, handling tasks such as environment setup, database structuring, and dependency management, while enabling users to seamlessly add new features or modify existing ones simply by describing their intent.
[Figure: Replit Agent interface. Image by Author]

Key Features
- App Testing: Simulates a real user navigating the application in a browser to automatically detect and fix issues during development
- Max Autonomy: Enables the Agent to self-supervise and work on long task lists for extended periods (up to 200 minutes) without human intervention
- Agents & Automations: Allows users to build intelligent chatbots and automated workflows that interact with external platforms like Slack and Telegram
- Build Modes: Offers flexible development paths, letting users choose between starting with a visual design prototype or building full functionality immediately
- Checkpoints: Creates comprehensive snapshots of the project after every request to ensure work is saved and enable easy rollbacks when needed

2. Lovable

Lovable offers versatile operating modes that allow users to build faster and smarter by selecting the specific environment that best fits their immediate needs, from autonomous execution to conversational reasoning. These modes enable Lovable to act with precision and reduce friction during development, whether the user requires complex task handling or high-level product planning.
[Figure: Lovable development interface. Image by Author]

Key Features
- Agent Mode: Enables Lovable to autonomously interpret requests, explore the codebase, refactor code, and execute complex development tasks without manual intervention
- Chat Mode: Acts as a conversational partner for debugging and product planning, allowing users to reason through problems and strategies without directly modifying the code
- Plan Implementation: Generates step-by-step plans within Chat mode that can be instantly converted into actionable code changes by clicking the “Implement the plan” button
- Proactive Debugging: Automatically inspects logs, network activity, and files to identify issues and implement fixes proactively during development
- Real-time Capabilities: Can search the web in real time to fetch documentation or assets, and can generate or edit images for the application

3. Bolt.new

Bolt.new is an AI-powered development tool that enables users to build websites, full-stack web applications, and mobile apps simply by describing their ideas in a chat interface. Designed for both beginners and experienced developers, Bolt streamlines the creation process by transforming natural language prompts into working products in minutes, while still offering full flexibility to edit code directly in a built-in editor.
[Figure: Bolt.new development environment. Image by Author]

Key Features
- AI Agent Selection: Choose which large language model powers your builds, with options like the powerful Claude Agent for production-quality apps or the legacy v1 Agent for quick prototyping
- Cross-Platform Development: Generate a wide range of applications from a single prompt, including landing pages, complex web apps, and mobile applications via Expo integration
- Bolt Cloud: Use an all-in-one platform for building and running apps, removing the need to manage separate services for databases, hosting, and domains
- Integrated Databases: Automatically create and connect databases, view data in a friendly table, and manage authentication without leaving the project
- Instant Hosting: Deploy projects to a live URL in seconds with a free .bolt.host subdomain, requiring no external configuration or third-party accounts

4. v0

v0 is an AI-powered development platform designed to turn natural language descriptions into production-ready, full-stack web applications. By utilizing an intelligent agent that can search the web, inspect sites, and automatically fix errors, v0 enables users to take a concept to working software in minutes, facilitating collaboration and integration with external tools.
[Figure: v0 development platform. Image by Author]

Key Features
- End-to-End Development: Goes beyond simple mockups by building both high-fidelity user interfaces and the necessary backend logic to create rich, data-driven applications
- Intelligent Agent: Includes autonomous capabilities such as web searching, site inspection, and integration with external tools to streamline the building process
- Automated Diagnostics: Uses intelligent diagnostics to automatically detect and fix errors in the code, acting as an AI pair programmer
- One-Click Deployment: Allows users to deploy applications instantly to secure, scalable infrastructure powered by Vercel
- Modern Stack Integration: Generates code compatible with modern development stacks, including Next.js, Tailwind CSS, and shadcn/ui

5. Hostinger Horizons

Hostinger Horizons is an all-in-one agentic website and web app builder designed for people who want to ship fast without dealing with code or complex setups. You describe what you want to build, and Horizons handles design, features, integrations, SEO, and deployment in one flow. It supports everything from simple landing pages to full web apps with user accounts, payments, and analytics, while still giving you access to the underlying code if you want more control. The result is a practical AI builder that focuses on launching real, working products rather than just generating layouts.

[Figure: Hostinger Horizons platform. Image by Author]

Key Features
- Domain & hosting: Free domain for one year on most plans, plus fully managed hosting included
- Professional email: One or more free email mailboxes per website, depending on the plan
- Built-in deployments: One-click publishing with automatic updates and version restore
- Integrations out of the box: Payments, user accounts, analytics, subscriptions,

PLI Booster: India’s Electronics Exports Cross Rs 4.15 Lakh Crore For 1st Time In 2025, Up 37 Per Cent

New Delhi: India’s electronics exports exceeded $47 billion, or more than Rs 4.15 lakh crore, for the first time in 2025, according to official data. That marks a 37 per cent rise from $34.93 billion in 2024. Nearly two-thirds of the total, roughly $30 billion, came from smartphone shipments, which are supported by the government’s production-linked incentive (PLI) scheme and also hit an all-time high in 2025.

Electronics exports reached $4.17 billion in December 2025, up 16.8 per cent from $3.58 billion in December 2024. Exports exceeded the $4 billion mark in seven of the 12 months of 2025, underscoring sustained global demand for Indian-made devices.

Smartphone exports in 2025 represent about 38 per cent of the country’s smartphone exports over the past five years, according to a recent report. The data showed that India’s smartphone shipments abroad totalled nearly $79.03 billion from 2021 to 2025, with CY25 delivering the highest 12-month export tally on record. Apple’s iPhone consignments accounted for roughly 75 per cent of the 2025 smartphone total, valued at over $22 billion.

India’s electronics exports are expected to expand further due to the semiconductor manufacturing push, Union Minister Ashwini Vaishnaw recently said. “Momentum will continue in 2026 as four semiconductor plants come into commercial production,” he said in a social media post earlier this week. Official estimates showed that electronics production reached around Rs 11.3 lakh crore in the 2024-25 period.

For the first time since domestic production began in 2021, US tech giant Apple Inc’s iPhone exports from India crossed Rs 2 lakh crore in 2025, surging nearly 85 per cent over 2024 exports, as per industry data.
India has become the world’s second-largest mobile phone producer, with over 99 per cent of phones sold domestically now made in India, as the country moves up the manufacturing value chain. The smartphone PLI scheme is scheduled to conclude in March 2026, though the government is reportedly exploring ways to extend support.

The Complete Guide to Data Augmentation for Machine Learning

In this article, you will learn practical, safe ways to use data augmentation to reduce overfitting and improve generalization across images, text, audio, and tabular datasets.

Topics we will cover include:

- How augmentation works and when it helps.
- Online vs. offline augmentation strategies.
- Hands-on examples for images (TensorFlow/Keras), text (NLTK), audio (librosa), and tabular data (NumPy/Pandas), plus the critical pitfalls of data leakage.

Alright, let’s get to it.

Suppose you’ve built your machine learning model, run the experiments, and stared at the results wondering what went wrong. Training accuracy looks great, maybe even impressive, but when you check validation accuracy… not so much. You can solve this issue by getting more data. But that is slow, expensive, and sometimes just impossible.

This is where data augmentation comes in. It’s not about inventing fake data. It’s about creating new training examples by subtly modifying the data you already have without changing its meaning or label. You’re showing your model the same concept in multiple forms, teaching it what’s important and what can be ignored. Augmentation helps your model generalize instead of simply memorizing the training set.

In this article, you’ll learn how data augmentation works in practice and when to use it. Specifically, we’ll cover:

- What data augmentation is and why it helps reduce overfitting
- The difference between offline and online data augmentation
- How to apply augmentation to image data with TensorFlow
- Simple and safe augmentation techniques for text data
- Common augmentation methods for audio and tabular datasets
- Why data leakage during augmentation can silently break your model

Offline vs Online Data Augmentation

Augmentation can happen before training or during training. Offline augmentation expands the dataset once and saves it. Online augmentation generates new variations every epoch.
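To make the distinction concrete, here is a minimal NumPy sketch of the two strategies. It is an illustration under stated assumptions, not the article's own pipeline: the helper names (`random_shift`, `offline_augment`, `online_batches`) are hypothetical, the images are random toy arrays, and the shift uses wrap-around purely for simplicity.

```python
import numpy as np

def random_shift(image, rng, max_shift=2):
    """Shift a 2D image by a random pixel offset (wrap-around, for simplicity)."""
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    return np.roll(image, shift=(int(dy), int(dx)), axis=(0, 1))

def offline_augment(images, copies, rng):
    """Offline: build the enlarged dataset once; it would then be saved and reused."""
    augmented = [random_shift(img, rng) for img in images for _ in range(copies)]
    return np.concatenate([images, np.stack(augmented)], axis=0)

def online_batches(images, batch_size, rng):
    """Online: yield freshly augmented batches; nothing is stored between epochs."""
    order = rng.permutation(len(images))
    for start in range(0, len(images), batch_size):
        idx = order[start:start + batch_size]
        yield np.stack([random_shift(images[i], rng) for i in idx])

rng = np.random.default_rng(0)
images = rng.random((8, 28, 28))  # toy stand-in for a real image dataset

# Offline: 8 originals plus 3 stored copies of each
stored = offline_augment(images, copies=3, rng=rng)
print(stored.shape)  # (32, 28, 28)

# Online: each epoch draws new random shifts for the same 8 images
for epoch in range(2):
    for batch in online_batches(images, batch_size=4, rng=rng):
        pass  # a training step would consume `batch` here
```

The offline version pays in storage (the array grows fourfold here), while the online version keeps only the original eight images and pays a small compute cost per batch.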
Deep learning pipelines usually prefer online augmentation because it exposes the model to effectively unbounded variation without increasing storage.

Data Augmentation for Image Data

Image data augmentation is the most intuitive place to start. A dog is still a dog if it’s slightly rotated, zoomed, or viewed under different lighting conditions. Your model needs to see these variations during training. Some common image augmentation techniques are:

- Rotation
- Flipping
- Resizing
- Cropping
- Zooming
- Shifting
- Shearing
- Brightness and contrast changes

These transformations do not change the label, only the appearance. Let’s demonstrate with a simple example using TensorFlow and Keras:

1. Importing Libraries

    import tensorflow as tf
    from tensorflow.keras.datasets import mnist
    from tensorflow.keras.layers import Dense, Flatten, Conv2D, MaxPooling2D, Dropout
    from tensorflow.keras.utils import to_categorical
    from tensorflow.keras.preprocessing.image import ImageDataGenerator
    from tensorflow.keras.models import Sequential

2. Loading MNIST dataset

    (X_train, y_train), (X_test, y_test) = mnist.load_data()

    # Normalize pixel values
    X_train = X_train / 255.0
    X_test = X_test / 255.0

    # Reshape to (samples, height, width, channels)
    X_train = X_train.reshape(-1, 28, 28, 1)
    X_test = X_test.reshape(-1, 28, 28, 1)

    # One-hot encode labels
    y_train = to_categorical(y_train, 10)
    y_test = to_categorical(y_test, 10)

Output:

    Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/mnist.npz

3. Defining ImageDataGenerator for augmentation

    datagen = ImageDataGenerator(
        rotation_range=15,        # rotate images by ±15 degrees
        width_shift_range=0.1,    # 10% horizontal shift
        height_shift_range=0.1,   # 10% vertical shift
        zoom_range=0.1,           # zoom in/out by 10%
        shear_range=0.1,          # apply shear transformation
        horizontal_flip=False,    # not needed for digits
        fill_mode="nearest"       # fill missing pixels after transformations
    )

4. Building a Simple CNN Model

    model = Sequential([
        Conv2D(32, (3, 3), activation="relu", input_shape=(28, 28, 1)),
        MaxPooling2D((2, 2)),
        Conv2D(64, (3, 3), activation="relu"),
        MaxPooling2D((2, 2)),
        Flatten(),
        Dropout(0.3),
        Dense(64, activation="relu"),
        Dense(10, activation="softmax")
    ])

    model.compile(optimizer="adam", loss="categorical_crossentropy", metrics=["accuracy"])

5. Training the model

    batch_size = 64
    epochs = 5

    # datagen.flow yields freshly augmented batches each epoch (online augmentation)
    history = model.fit(
        datagen.flow(X_train, y_train, batch_size=batch_size, shuffle=True),
        steps_per_epoch=len(X_train) // batch_size,
        epochs=epochs,
        validation_data=(X_test, y_test)
    )

6. Visualizing Augmented Images

    import matplotlib.pyplot as plt

    # Visualize five augmented variants of the first training sample
    plt.figure(figsize=(10, 2))
    for i, batch in enumerate(datagen.flow(X_train[:1], batch_size=1)):
        plt.subplot(1, 5, i + 1)
        plt.imshow(batch[0].reshape(28, 28), cmap="gray")
        plt.axis("off")
        if i == 4:
            break
    plt.show()

Data Augmentation for Textual Data

Text is more delicate. You can’t randomly replace words without thinking about meaning. But small, controlled changes can help your model generalize. A simple example using synonym replacement (with NLTK):

    import random

    import nltk
    from nltk.corpus import wordnet

    # Download the WordNet data on first use
    nltk.download("wordnet")
    nltk.download("omw-1.4")

    def synonym_replacement(sentence):
        words = sentence.split()
        if not words:
            return sentence
        # Pick one random word and look up its WordNet synsets
        idx = random.randint(0, len(words) - 1)
        synsets = wordnet.synsets(words[idx])
        if synsets and synsets[0].lemmas():
            # Use the first lemma of the first synset; multi-word lemmas use underscores
            replacement = synsets[0].lemmas()[0].name().replace("_", " ")
            words[idx] = replacement
        return " ".join(words)

    text = "The movie was really good"
    print(synonym_replacement(text))
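The same synonym-replacement idea can be sketched without any downloads by using a small, hand-curated synonym table, which also gives tighter control over which substitutions are considered meaning-preserving. This is only an illustrative sketch: the `SYNONYMS` table and the `replace_one_synonym` helper are hypothetical and are not part of NLTK or of the example above.

```python
import random

# Hypothetical, hand-written synonym table used only for illustration.
SYNONYMS = {
    "good": ["great", "enjoyable"],
    "movie": ["film"],
    "really": ["truly"],
}

def replace_one_synonym(sentence, rng):
    """Replace one randomly chosen word that has an entry in SYNONYMS."""
    words = sentence.split()
    candidates = [i for i, w in enumerate(words) if w.lower() in SYNONYMS]
    if not candidates:
        return sentence  # nothing safe to replace, so return the input unchanged
    idx = rng.choice(candidates)
    words[idx] = rng.choice(SYNONYMS[words[idx].lower()])
    return " ".join(words)

rng = random.Random(42)  # seeded so runs are repeatable
text = "The movie was really good"

# Collect the distinct variants produced over several draws
variants = {replace_one_synonym(text, rng) for _ in range(20)}
for variant in sorted(variants):
    print(variant)
```

Restricting replacements to a vetted table trades coverage for safety: fewer words can be changed, but each change is one a human has already judged to keep the label intact.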

Elon Musk Seeks Up To $134bn From OpenAI, Microsoft In Damages Over Fraudulent Partnership

New Delhi: Elon Musk, Tesla CEO and founder of AI firm xAI, has asked a US federal court to award him $79 billion to $134 billion in damages, alleging that OpenAI and Microsoft defrauded him when OpenAI abandoned its nonprofit mission and partnered with the software giant. Musk’s lawyers filed the damages request a day after a judge denied OpenAI and Microsoft’s final bid to avoid a jury trial scheduled for late April in Oakland, California, according to multiple reports.

The filing cited calculations that it said show Musk is entitled to a share of OpenAI’s current $500 billion valuation because he donated $38 million in seed funding during the founding stage of the company in 2015. “Just as an early investor in a startup company may realise gains many orders of magnitude greater than the investor’s initial investment, the wrongful gains that OpenAI and Microsoft have earned, and which Musk is now entitled to disgorge, are much larger than Musk’s initial contributions,” the filing said.

According to court papers, Musk’s side argued that OpenAI made $65.5 billion to $109.43 billion in alleged wrongful gains, and Microsoft $13.3 billion to $25.06 billion, from Musk’s financial and non-monetary contributions, including technical and business advice. OpenAI and Microsoft have denied the allegations.

Musk left OpenAI’s board in 2018, launched his own AI company in 2023, and sued OpenAI in 2024, challenging co-founder Sam Altman’s move to operate the company as a for-profit entity. “Musk’s lawsuit continues to be baseless and a part of his ongoing pattern of harassment, and we look forward to demonstrating this at trial,” OpenAI said in a statement, adding, “this latest unserious demand is aimed solely at furthering this harassment campaign.”

Meanwhile, Musk’s AI firm xAI is also suing Apple and OpenAI over an earlier integration of ChatGPT into Siri and Apple Intelligence as an optional add-on.
Elon Musk alleged that Apple’s App Store practices disadvantage rivals such as Grok, and the lawsuit has survived initial dismissal.