New Delhi: Agentic artificial intelligence (AI) systems are rapidly reshaping how banks manage frontline sales, offering a potential breakthrough for relationship managers long burdened by inefficient systems, weak leads, and heavy administrative workloads, according to a McKinsey report. “In frontline sales, the potential is vast. Agentic AI makes it possible to automate the complex workflows characteristic of financial services, something bankers have long wanted to do but have never fully succeeded in,” the report noted. At leading global banks, agentic AI is already being deployed across prospecting, lead nurturing, and account management, delivering measurable gains in productivity and revenue within months. Unlike traditional generative AI, which responds to prompts, agentic AI can independently interpret objectives, break them into tasks, interact with systems and people, execute actions, and continuously adapt with minimal human input. The shift comes at a critical time for the banking sector, which is facing margin pressure, slowing growth, and rising cost-to-income ratios. Industry research indicates that when banks redesign an entire frontline domain end-to-end using agentic AI, revenues per relationship manager can rise by 3 to 15 per cent, while the cost to serve can fall by 20 to 40 per cent. “As banks face margin pressure, slowing growth, and rising cost-to-income ratios, agentic AI represents not just a productivity tool but a new operating model for relationship management,” the report said. Frontline bankers have long cited poor-quality leads, excessive compliance requirements, and fragmented technology systems as key obstacles to effective selling. Many relationship managers spend more time updating customer relationship management systems and preparing reports than engaging with clients. This imbalance has contributed to high burnout and attrition across sales teams.
Agentic AI offers a way to rebalance this equation. Intelligent agents can continuously scan markets, analyse structured and unstructured data, prioritise high-potential prospects, and automate follow-ups. In sales outreach, agents can personalise communications at scale, nurture thousands of leads simultaneously, and escalate only qualified opportunities to human bankers. This allows relationship managers to focus on higher-value conversations and complex client needs. Banks piloting these systems have reported significant operational improvements. AI-driven market mapping has expanded sales pipelines by roughly 30 per cent in some institutions, while automated lead nurturing has doubled or tripled the number of qualified leads. In parallel, AI-powered account intelligence tools have reduced meeting preparation time and improved the quality of client interactions. With routine tasks handled by agents, bankers can act more as trusted advisors, concentrating on insight-led discussions, strategic problem-solving, and long-term relationship building. However, the report cautions that capturing the full value of agentic AI requires more than deploying isolated tools. Banks must reimagine frontline operating models end-to-end, invest in robust data foundations, establish clear governance, and upskill employees to work effectively alongside AI agents. With revenue uplift and productivity gains now visible, agentic AI is increasingly seen not as an experiment but as a new operating paradigm for frontline banking.

AI Investment Surge To Accelerate In 2026, Offsetting Tariff Impact On US Economy: Report | Technology News

New Delhi: Artificial intelligence-related investments are set to accelerate sharply in 2026 as companies expand spending to keep pace with the fast-growing AI revolution, according to a Fitch Ratings report. It also said the surge in AI-driven private-sector spending is significantly cushioning the negative impact of tariff hikes on the US economy. The report said, “Corporate plans suggest another AI-related investment increase in 2026. AI-driven private-sector spending is significantly cushioning the negative impact of tariffs.” It highlighted that while global growth is expected to slow, the resilience shown by the US is partly due to strong AI-linked investment momentum. The report noted that world GDP growth is projected to ease to 2.5 per cent in 2025, down from 2.9 per cent in 2024. US economic growth is also expected to dip to 1.8 per cent in 2025 from 2.8 per cent in 2024. Fitch had earlier anticipated a sharper deceleration in the US following the steep rise in tariff rates. However, the report stated that the “tariff shock” turned out milder than expected as it coincided with a major upturn in private-sector spending linked to the AI boom. The report added that the sharp rise in IT investment seen in national accounts is further supported by data from the largest US technology companies. Capital spending by AI hyperscalers, including the “Magnificent 7”, has doubled since 2023 to USD 400 billion as companies pour money into data centres. Corporate plans also indicate another wave of AI-related investment growth in 2026. It said the AI boom is already having a clear macroeconomic impact. In the first half of 2025, IT capital spending accounted for nearly 90 per cent of US GDP growth, reflecting the scale at which AI is reshaping investment patterns. The AI-fuelled equity market rally could also add 0.4 percentage points to consumption, providing additional support to the economy.
The report noted that the strong momentum in IT capex has so far not been accompanied by a rise in corporate leverage at the aggregate level. Upward revisions in private capital expenditure forecasts are helping soften the drag caused by tariff increases. Overall, Fitch stated that the ongoing surge in AI-related investments is emerging as a crucial counterbalance to economic pressures arising from higher tariffs, while also laying the foundation for long-term structural transformation.

5G Services Now Available In 99.9% Of Districts: Minister | Technology News

New Delhi: 5G services have been rolled out in all states and union territories (UTs) across the country and are presently available in 99.9 per cent of districts, according to the government. As of October 31, telecom service providers (TSPs) have installed 5.08 lakh 5G Base Transceiver Stations (BTSs) across rural and urban areas of the country. More than 31 lakh BTSs have been installed across the country, Minister of State for Communications and Rural Development, Dr. Pemmasani Chandra Sekhar, said while replying to a question in the Rajya Sabha. To reduce call drops and improve internet connectivity in underserved areas, the government has taken several initiatives. These include the BharatNet project for providing broadband connectivity in Gram Panchayats (GPs) and villages; a scheme for providing mobile services in Left Wing Extremism (LWE) affected areas and in Aspirational Districts; the 4G Saturation scheme to provide 4G mobile coverage in all uncovered villages; the launch of the GatiShakti Sanchar portal and RoW (Right of Way) Rules to streamline RoW permissions and clearances for installing telecom infrastructure; and time-bound permission for the use of street furniture to install small cells and telecommunication lines. According to the minister, telecom infrastructure is being deployed by private TSPs as well as state-led service providers. Further, telecom infrastructure is being shared by private and state-led service providers based on techno-commercial feasibility, he mentioned. Meanwhile, seven dedicated Working Groups constituted earlier by the Centre under the Bharat 6G Alliance have presented their progress and roadmap. Union Communications Minister Jyotiraditya Scindia said that technology, spectrum, devices, applications and sustainability verticals must align seamlessly for innovations to mature and scale.
He said monthly joint reviews between working groups are essential to ensure that breakthroughs in one domain translate into actionable outcomes in others. The minister pointed out that spectrum policy will be central to India’s 6G strategy and noted that India has already undertaken significant spectrum refarming, with more planned ahead.

Top 5 Agentic AI LLM Models

In 2025, “using AI” no longer just means chatting with a model, and you’ve probably already noticed that shift yourself.

Starlink India’s Massive Promise: How Satellite Internet Works And Why Starlink Is Different From Everything Else | Technology News

Internet connectivity in India is deeply unequal, ranging from fast fiber lines in cities to slow and unreliable networks that leave millions of villages underserved. Starlink, the satellite internet service from billionaire Elon Musk-led SpaceX, will soon launch in India, promising to change this with a radically different way of delivering internet access directly from space. Starlink is not just another service; it is a very different way of looking at connectivity. A recent leak on the Starlink India website briefly showed tentative pricing, hinting at a high-end offering: an estimated monthly fee of about Rs 8,600 and a hardware kit at approximately Rs 34,000. Starlink later clarified that these plans were uploaded by mistake and are incorrect, adding that the official India plan has not been announced yet. Several key regulatory approvals are still pending, and the official launch date remains uncertain even though Starlink has received preliminary approval from the central government.

How Satellite Internet Works And Why Starlink Is Different

Traditional satellite internet relies on big satellites in high orbits, about 36,000 km from Earth. Because of the great distance, the signal is delayed, which results in high latency. Starlink changes this completely.

Low Earth Orbit: Starlink deploys thousands of small satellites orbiting at a much lower altitude of about 550 kilometers. This greatly reduced distance shortens the travel time of data, which means much lower latency and better internet speed.
The System: The Starlink system comprises a smart dish installed on the user’s roof at one end and the constellation of LEO satellites at the other. Data goes from the user’s Wi-Fi router to the dish, which links directly to the nearest Starlink satellite. From there, the data either goes to a ground station or jumps via laser links from one satellite to another before reaching the global internet.
Global Constellation: Currently, over 8,500 Starlink LEO satellites are actively working in orbit around the globe, and the total number launched has already approached 9,000. This dense network ensures continuous signal coverage virtually everywhere.

Bridging India’s Connectivity Gap

The Starlink model is all the more exciting for India, where laying fiber optic cables across challenging terrain (mountains, forests, remote villages, and border areas) is often difficult or impossible. Traditional internet needs multi-layered ground infrastructure in the form of fiber, towers, and undersea cables, whereas Starlink bypasses this. The dish connects directly to the satellite network, bringing high-speed internet to places that cables cannot reach.

Easy Installation: The dish is smart. It self-locates and adjusts to the right satellite, without repeated engineering visits.
Reliability: Starlink boasts 99.9% uptime. Since it is satellite-based, it is immune to common problems of traditional internet service, such as fiber cuts that could leave you with no service for hours.

Market Impact And Challenges In India

While Starlink is often viewed as a solution to all of India’s internet problems, experts warn against this expectation. The focus should be on connectivity, not on speeds. While Starlink offers decent speed, it may not match the full range of fiber speeds offered in Indian cities. The true value of Starlink lies in connecting places where laying optical fiber is difficult. For rural schools, health centers, and government offices in difficult terrain, Starlink may be invaluable for telemedicine, e-governance, and digital payments.

Affordability Hurdle: The service is pricey, and high costs for the hardware and monthly fees will restrict immediate mass adoption in India’s price-sensitive market.
Challenges: The service is not without its shortcomings.
Heavy rain, thick cloud cover, or obstructions on the roof can occasionally weaken the signal. Starlink’s entry into India signals that the future of connectivity is being built in space. Much like how cell phone networks revolutionised communication, satellite internet can bring critical digital access to areas currently receiving a perpetual “No Signal” message.
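
The latency gap between high-orbit and low-Earth-orbit satellites described above can be estimated with simple arithmetic. The sketch below is only a back-of-the-envelope illustration: it assumes the signal travels straight up at the speed of light in vacuum and ignores processing, routing, and ground-network delays. The function name is made up for this example; the two altitudes are the ones quoted in the article.

```python
# Rough one-way propagation delay between the ground and a satellite overhead.
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km per second


def one_way_delay_ms(altitude_km: float) -> float:
    """Time for a radio signal to travel straight up to the satellite, in milliseconds."""
    return altitude_km / SPEED_OF_LIGHT_KM_S * 1000.0


geo_ms = one_way_delay_ms(36_000)  # traditional high-orbit altitude quoted in the article
leo_ms = one_way_delay_ms(550)     # Starlink altitude quoted in the article

print(f"High orbit: {geo_ms:.1f} ms one way")   # about 120 ms
print(f"Low orbit:  {leo_ms:.2f} ms one way")   # about 1.8 ms
```

A request and its response each cross the ground-to-satellite gap twice, so the propagation delay alone comes to roughly 480 ms for the high orbit versus about 7 ms for the low orbit, before any other delays are added.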

Pretrain a BERT Model from Scratch

import dataclasses

import datasets
import torch
import torch.nn as nn
import tqdm


@dataclasses.dataclass
class BertConfig:
    """Configuration for BERT model."""
    vocab_size: int = 30522
    num_layers: int = 12
    hidden_size: int = 768
    num_heads: int = 12
    dropout_prob: float = 0.1
    pad_id: int = 0
    max_seq_len: int = 512
    num_types: int = 2


class BertBlock(nn.Module):
    """One transformer block in BERT."""
    def __init__(self, hidden_size: int, num_heads: int, dropout_prob: float):
        super().__init__()
        self.attention = nn.MultiheadAttention(hidden_size, num_heads,
                                               dropout=dropout_prob, batch_first=True)
        self.attn_norm = nn.LayerNorm(hidden_size)
        self.ff_norm = nn.LayerNorm(hidden_size)
        self.dropout = nn.Dropout(dropout_prob)
        self.feed_forward = nn.Sequential(
            nn.Linear(hidden_size, 4 * hidden_size),
            nn.GELU(),
            nn.Linear(4 * hidden_size, hidden_size),
        )

    def forward(self, x: torch.Tensor, pad_mask: torch.Tensor) -> torch.Tensor:
        # self-attention with padding mask and post-norm
        attn_output, _ = self.attention(x, x, x, key_padding_mask=pad_mask)
        x = self.attn_norm(x + attn_output)
        # feed-forward with GeLU activation and post-norm
        ff_output = self.feed_forward(x)
        x = self.ff_norm(x + self.dropout(ff_output))
        return x


class BertPooler(nn.Module):
    """Pooler layer for BERT to process the [CLS] token output."""
    def __init__(self, hidden_size: int):
        super().__init__()
        self.dense = nn.Linear(hidden_size, hidden_size)
        self.activation = nn.Tanh()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        x = self.dense(x)
        x = self.activation(x)
        return x


class BertModel(nn.Module):
    """Backbone of BERT model."""
    def __init__(self, config: BertConfig):
        super().__init__()
        # embedding layers
        self.word_embeddings = nn.Embedding(config.vocab_size, config.hidden_size,
                                            padding_idx=config.pad_id)
        self.type_embeddings = nn.Embedding(config.num_types, config.hidden_size)
        self.position_embeddings = nn.Embedding(config.max_seq_len, config.hidden_size)
        self.embeddings_norm = nn.LayerNorm(config.hidden_size)
        self.embeddings_dropout = nn.Dropout(config.dropout_prob)
        # transformer blocks
        self.blocks = nn.ModuleList([
            BertBlock(config.hidden_size, config.num_heads, config.dropout_prob)
            for _ in range(config.num_layers)
        ])
        # [CLS] pooler layer
        self.pooler = BertPooler(config.hidden_size)

    def forward(self, input_ids: torch.Tensor, token_type_ids: torch.Tensor, pad_id: int = 0
                ) -> tuple[torch.Tensor, torch.Tensor]:
        # create attention mask for padding tokens
        pad_mask = input_ids == pad_id
        # convert integer tokens to embedding vectors
        batch_size, seq_len = input_ids.shape
        position_ids = torch.arange(seq_len, device=input_ids.device).unsqueeze(0)
        position_embeddings = self.position_embeddings(position_ids)
        type_embeddings = self.type_embeddings(token_type_ids)
        token_embeddings = self.word_embeddings(input_ids)
        x = token_embeddings + type_embeddings + position_embeddings
        x = self.embeddings_norm(x)
        x = self.embeddings_dropout(x)
        # process the sequence with transformer blocks
        for block in self.blocks:
            x = block(x, pad_mask)
        # pool the hidden state of the `[CLS]` token
        pooled_output = self.pooler(x[:, 0, :])
        return x, pooled_output


class BertPretrainingModel(nn.Module):
    """BERT backbone with the MLM and NSP heads used for pretraining."""
    def __init__(self, config: BertConfig):
        super().__init__()
        self.bert = BertModel(config)
        self.mlm_head = nn.Sequential(
            nn.Linear(config.hidden_size, config.hidden_size),
            nn.GELU(),
            nn.LayerNorm(config.hidden_size),
            nn.Linear(config.hidden_size, config.vocab_size),
        )
        self.nsp_head = nn.Linear(config.hidden_size, 2)

    def forward(self, input_ids: torch.Tensor, token_type_ids: torch.Tensor, pad_id: int = 0
                ) -> tuple[torch.Tensor, torch.Tensor]:
        # Process the sequence with the BERT model backbone
        x, pooled_output = self.bert(input_ids, token_type_ids, pad_id)
        # Predict the masked tokens for the MLM task and the classification for the NSP task
        mlm_logits = self.mlm_head(x)
        nsp_logits = self.nsp_head(pooled_output)
        return mlm_logits, nsp_logits


# Training parameters
epochs = 10
learning_rate = 1e-4
batch_size = 32

# Load dataset and set up dataloader
dataset = datasets.Dataset.from_parquet("wikitext-2_train_data.parquet")


def collate_fn(batch: list[dict]):
    """Custom collate function to handle variable-length sequences in dataset."""
    # always at max length: tokens, segment_ids; always singleton: is_random_next
    input_ids = torch.tensor([item["tokens"] for item in batch])
    token_type_ids = torch.tensor([item["segment_ids"] for item in batch]).abs()
    is_random_next = torch.tensor([item["is_random_next"] for item in batch]).long()
    # variable length: masked_positions, masked_labels
    masked_pos = [(idx, pos) for idx, item in enumerate(batch)
                  for pos in item["masked_positions"]]
    masked_labels = torch.tensor([label for item in batch for label in item["masked_labels"]])
    return input_ids, token_type_ids, is_random_next, masked_pos, masked_labels


dataloader = torch.utils.data.DataLoader(dataset, batch_size=batch_size, shuffle=True,
                                         collate_fn=collate_fn, num_workers=8)

# train the model
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = BertPretrainingModel(BertConfig()).to(device)
model.train()
optimizer = torch.optim.AdamW(model.parameters(), lr=learning_rate)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=1, gamma=0.1)
loss_fn = nn.CrossEntropyLoss()

for epoch in range(epochs):
    pbar = tqdm.tqdm(dataloader, desc=f"Epoch {epoch+1}/{epochs}")
    for batch in pbar:
        # get batched data
        input_ids, token_type_ids, is_random_next, masked_pos, masked_labels = batch
        input_ids = input_ids.to(device)
        token_type_ids = token_type_ids.to(device)
        is_random_next = is_random_next.to(device)
        masked_labels = masked_labels.to(device)
        # extract output from model
        mlm_logits, nsp_logits = model(input_ids, token_type_ids)
        # MLM loss: masked_pos is a list of (batch index, position) tuples; extract the
        # corresponding logits from tensor mlm_logits of shape (B, S, V)
        batch_indices, token_positions = zip(*masked_pos)
        mlm_logits = mlm_logits[batch_indices, token_positions]
        mlm_loss = loss_fn(mlm_logits, masked_labels)
        # Compute the loss for the NSP task
        nsp_loss = loss_fn(nsp_logits, is_random_next)
        # backward with total loss
        total_loss = mlm_loss + nsp_loss
        pbar.set_postfix(MLM=mlm_loss.item(), NSP=nsp_loss.item(), Total=total_loss.item())
        optimizer.zero_grad()
        total_loss.backward()
        optimizer.step()
    # Decay the learning rate once per epoch. Stepping StepLR(step_size=1, gamma=0.1)
    # after every batch would shrink the rate to almost zero within a few batches.
    # Iterating over pbar already advances the progress bar, so no manual update is needed.
    scheduler.step()
    pbar.close()

# Save the model
torch.save(model.state_dict(), "bert_pretraining_model.pth")
torch.save(model.bert.state_dict(), "bert_model.pth")
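
The step that picks out the logits of the masked tokens may be the least obvious part of the training loop. The sketch below illustrates it on a toy array. It uses NumPy rather than PyTorch so it runs without a GPU stack, on the assumption that paired integer indexing behaves the same way for tensors; the shapes and the `masked_pos` values are made up for illustration.

```python
import numpy as np

B, S, V = 2, 4, 6  # toy batch size, sequence length, vocabulary size
logits = np.arange(B * S * V, dtype=float).reshape(B, S, V)

# (batch index, token position) pairs, in the form collate_fn builds them
masked_pos = [(0, 1), (0, 3), (1, 2)]
batch_indices, token_positions = zip(*masked_pos)

# Indexing with two equal-length index lists pairs them element by element,
# so each masked token contributes one row of V logits.
picked = logits[list(batch_indices), list(token_positions)]

print(picked.shape)  # (3, 6)
```

Each row of `picked` lines up with one entry of the flattened `masked_labels`, which is why a single cross-entropy call can score all masked tokens in the batch at once.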

Apple iPhone 16, iPhone 16 Pro Max Get Massive Discount On THIS Platform; Check Camera, Battery, Display, Price And Other Specs | Technology News

Apple iPhone 16 Pro Max Flipkart Price: Flipkart’s Buy Buy 2025 sale is going to end within a couple of days. The sale is offering some of the biggest year-end deals on popular gadgets. Among the best offers are those on Apple’s iPhone 16 and iPhone 16 Pro Max. Flipkart is giving straight discounts, bank offers and exchange deals, which means you can get the iPhone 16 for less than Rs 40,000 if you combine all the benefits. At the same time, Flipkart is also offering a discount of more than Rs 10,000 on the iPhone 16 Pro Max. This makes it a good chance to buy Apple’s premium phone at a lower price than usual. These offers are not expected to stay for long, so interested buyers should make their purchase before the deals end.

Apple iPhone 16 Specifications

The premium smartphone features a 6.1 inch Super Retina XDR OLED display with HDR10 support and offers up to 1600 nits peak brightness outdoors, making the screen clear and bright even in sunlight. It runs on Apple’s A18 chipset, built on TSMC’s advanced 3nm process for faster performance and better efficiency. The phone is backed by a 3561mAh battery and comes with 8GB RAM along with 128GB or 256GB storage options. In the camera department, the iPhone 16 includes a dual camera setup with a 48MP primary sensor and a 12MP ultrawide sensor, while the front houses a 12MP camera for selfies and video calls.

Apple iPhone 16 (128 GB Variant) Discount

The iPhone 16 base model, which comes with 8GB RAM and 128GB storage, is now available at Apple for Rs 69,900. This is much lower than its original price of Rs 79,900, giving buyers a direct discount of Rs 10,000. With bank discounts and exchange offers, the price can drop even further, going down to around Rs 40,000. Consumers can also get a special flat discount of Rs 8,501 and choose no cost EMI options, making the deal even more affordable.
If you use the exchange option, you can get up to Rs 57,400 off.

Apple iPhone 16 Pro Max Specifications

The iPhone features a large 6.9 inch Super Retina XDR all screen OLED display with a sharp 2868×1320 pixel resolution at 460 ppi, offering bright and detailed visuals. It is powered by the A18 Pro chip, which includes a new 6 core CPU with two performance cores and four efficiency cores for smooth and powerful performance. The phone comes with a triple camera setup on the back, including a 48MP main camera with dual pixel PDAF and sensor shift OIS, a 12MP telephoto lens with 3D sensor shift OIS and 5x optical zoom, and a 48MP ultrawide camera. For selfies, it has a 12MP front camera. The device is equipped with a 4685mAh Li Ion battery that provides reliable all day usage.

Apple iPhone 16 Pro Max (256 GB Variant) Discount

The Apple iPhone 16 Pro Max (256 GB variant) is currently priced at Rs 1,34,900, which is Rs 10,000 lower than its original launch price of Rs 1,44,900. Buyers can also take advantage of additional bank offers, including 5 percent cashback on Axis Bank Flipkart Debit Cards (up to Rs 750) and 5 percent cashback on Flipkart SBI Credit Cards (up to Rs 4,000 per calendar quarter). The deal becomes even better with the exchange option, where users can get up to Rs 57,400 off depending on the device they trade in.

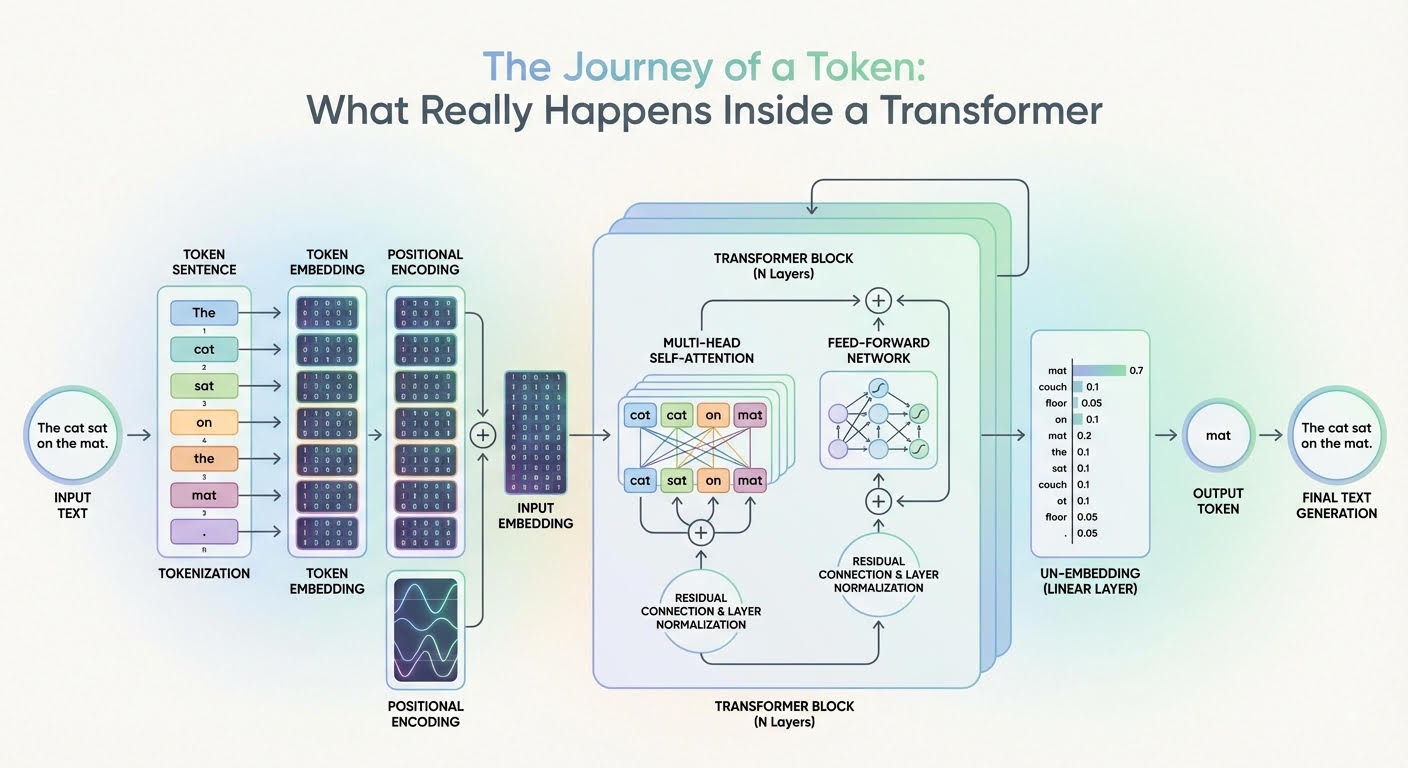

The Journey of a Token: What Really Happens Inside a Transformer

In this article, you will learn how a transformer converts input tokens into context-aware representations and, ultimately, next-token probabilities. Topics we will cover include how tokenization, embeddings, and positional information prepare inputs; what multi-headed attention and feed-forward networks contribute inside each layer; and how the final projection and softmax produce next-token probabilities. Let’s get our journey underway.

The Journey Begins

Large language models (LLMs) are based on the transformer architecture, a complex deep neural network whose input is a sequence of token embeddings. After a deep process that looks like a parade of numerous stacked attention and feed-forward transformations, it outputs a probability distribution that indicates which token should be generated next as part of the model’s response. But how can this journey from inputs to outputs be explained for a single token in the input sequence?

In this article, you will learn what happens inside a transformer model (the architecture behind LLMs) at the token level. In other words, we will see how input tokens, or parts of an input text sequence, turn into generated text outputs, and the rationale behind the changes and transformations that take place inside the transformer. The description of this journey through a transformer model will be guided by the above diagram, which shows a generic transformer architecture and how information flows and evolves through it.

Entering the Transformer: From Raw Input Text to Input Embedding

Before entering the depths of the transformer model, a few transformations already happen to the text input, primarily so that it is represented in a form the internal layers of the transformer can process.

Tokenization

The tokenizer is an algorithmic component that typically works in tandem with the LLM’s transformer model.
It takes the raw text sequence, e.g. the user prompt, and splits it into discrete tokens (often subword units or bytes, sometimes whole words), with each token being mapped to an integer identifier i.

Token Embeddings

There is a learned embedding table E with shape |V| × d (vocabulary size by embedding dimension). Looking up the identifiers for a sequence of length n yields an embedding matrix X with shape n × d. That is, each token identifier is mapped to a d-dimensional embedding vector that forms one row of X. Two embedding vectors will be similar to each other if they are associated with tokens that have similar meanings, e.g. king and emperor, and dissimilar otherwise. Importantly, at this stage, each token embedding carries semantic and lexical information for that single token, without incorporating information about the rest of the sequence (at least not yet).

Positional Encoding

Before fully entering the core parts of the transformer, it is necessary to inject into each token embedding vector (i.e. into each row of the embedding matrix X) information about the position of that token in the sequence. This is also called injecting positional information, and it is typically done with trigonometric functions like sine and cosine, although there are techniques based on learned positional embeddings as well. A positional vector is added to the embedding vector e_t associated with a token, much like a residual term, as follows:

\[x_t^{(0)} = e_t + p_{\text{pos}}(t)\]

with p_pos(t) typically being a trigonometric function of the token position t in the sequence. As a result, an embedding vector that formerly encoded only “what a token is” now encodes “what the token is and where in the sequence it sits”. This corresponds to the “input embedding” block in the above diagram. Now, time to enter the depths of the transformer and see what happens inside!
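
The three preparation steps above (tokenization, embedding lookup, positional encoding) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular LLM does it: the vocabulary and the whitespace tokenizer are hypothetical stand-ins for a real subword tokenizer, the embedding table is random rather than learned, and the sine/cosine scheme is one common choice for p_pos(t).

```python
import numpy as np

# Hypothetical toy vocabulary; a real tokenizer learns subword units from data.
vocab = {"[UNK]": 0, "the": 1, "king": 2, "rules": 3, "land": 4}
d_model = 8  # embedding dimension d, kept tiny for readability


def tokenize(text: str) -> list[int]:
    """Whitespace tokenizer standing in for a real subword tokenizer."""
    return [vocab.get(word, vocab["[UNK]"]) for word in text.lower().split()]


def sinusoidal_positions(n: int, d: int) -> np.ndarray:
    """Sine/cosine positional encoding of shape (n, d); d must be even."""
    positions = np.arange(n)[:, None]                   # shape (n, 1)
    freqs = 1.0 / (10000 ** (np.arange(0, d, 2) / d))   # shape (d/2,)
    p = np.zeros((n, d))
    p[:, 0::2] = np.sin(positions * freqs)              # even columns
    p[:, 1::2] = np.cos(positions * freqs)              # odd columns
    return p


rng = np.random.default_rng(0)
E = rng.normal(size=(len(vocab), d_model))  # embedding table |V| x d (random here, learned in practice)

ids = tokenize("The king rules the land")            # token identifiers
X = E[ids]                                           # one row of E per token, shape n x d
X0 = X + sinusoidal_positions(len(ids), d_model)     # x_t^(0) = e_t + p_pos(t)

print(ids)       # [1, 2, 3, 1, 4]
print(X.shape)   # (5, 8)
```

The word "the" appears at positions 0 and 3, so those two rows of `X` are identical, while the corresponding rows of `X0` differ. That difference is exactly the positional information the encoding injects.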

Deep Inside the Transformer: From Input Embedding to Output Probabilities

Let’s explain what happens to each “enriched” single-token embedding vector as it goes through one transformer layer, and then zoom out to describe what happens across the entire stack of layers. The formula

\[h_t^{(0)} = x_t^{(0)}\]

denotes a token’s representation at layer 0 (the input to the first layer), whereas more generically we will use \(h_t^{(l)}\) to denote the token’s representation at layer l.

Multi-headed Attention

The first major component inside each replicated layer of the transformer is the multi-headed attention. This is arguably the most influential component in the entire architecture when it comes to identifying and incorporating into each token’s representation meaningful information about its role in the entire sequence and its relationships with other tokens in the text, be they syntactic, semantic, or any other sort of linguistic relationship. The multiple heads in this attention mechanism each specialize in capturing different linguistic aspects and patterns, both in the token itself and in the sequence it belongs to.

The result of having a token representation \(h_t^{(l)}\) (with positional information already injected, don’t forget!) travel through this multi-headed attention inside a layer is a context-enriched or context-aware token representation. By using residual connections and layer normalizations across the transformer layer, newly generated vectors become stabilized blends of their own previous representations and the multi-headed attention output. This helps improve coherence throughout the entire process, which is applied repeatedly across layers.

Feed-forward Neural Network

Next comes something relatively less complex: a few feed-forward neural network (FFN) layers. For instance, these can be per-token multilayer perceptrons (MLPs) whose goal is to further transform and refine the token features that are gradually being learned.
The main difference between the attention stage and this one is that attention mixes contextual information from across all tokens into each token representation, whereas the FFN step is applied independently to each token, refining the contextual patterns already integrated to yield useful “knowledge” from them. These layers are also supplemented with residual connections and layer normalizations. As a result of this process, at the end of a transformer layer we have an updated representation \(h_t^{(l+1)}\) that becomes the input to the next transformer layer, thereby entering another multi-headed attention block. The whole process
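
The attention and feed-forward steps described in this section can be put together as one layer in a short NumPy sketch. Several assumptions are baked in and none come from the article: the weights are random instead of learned, layer normalization is applied after each residual sum (the article does not say where it goes), the layer norm has no learned scale or shift, and ReLU is used in the FFN for brevity. All function and parameter names are illustrative.

```python
import numpy as np


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def layer_norm(x, eps=1e-5):
    """Normalize each token vector to zero mean and unit variance."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def multi_head_attention(h, Wq, Wk, Wv, Wo, num_heads):
    n, d = h.shape
    d_head = d // num_heads

    def split(x):
        # (n, d) -> (num_heads, n, d_head): each head sees its own slice of features
        return x.reshape(n, num_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(h @ Wq), split(h @ Wk), split(h @ Wv)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)  # (num_heads, n, n)
    weights = softmax(scores, axis=-1)                   # how much each token attends to the others
    context = weights @ v                                # (num_heads, n, d_head)
    merged = context.transpose(1, 0, 2).reshape(n, d)    # concatenate the heads
    return merged @ Wo, weights


def feed_forward(h, W1, b1, W2, b2):
    """Per-token MLP: the same weights are applied to every row independently."""
    return np.maximum(0.0, h @ W1 + b1) @ W2 + b2


def transformer_layer(h, params, num_heads):
    attn_out, weights = multi_head_attention(h, *params["attn"], num_heads)
    h = layer_norm(h + attn_out)                         # residual connection + normalization
    h = layer_norm(h + feed_forward(h, *params["ffn"]))  # residual connection + normalization
    return h, weights


rng = np.random.default_rng(0)
n, d, num_heads = 5, 8, 2
params = {
    "attn": [rng.normal(scale=0.1, size=(d, d)) for _ in range(4)],  # Wq, Wk, Wv, Wo
    "ffn": [rng.normal(scale=0.1, size=(d, 4 * d)), np.zeros(4 * d),
            rng.normal(scale=0.1, size=(4 * d, d)), np.zeros(d)],
}
h0 = rng.normal(size=(n, d))  # stands in for the n input vectors x_t^(0)
h1, weights = transformer_layer(h0, params, num_heads)

print(h1.shape, weights.shape)  # (5, 8) (2, 5, 5)
```

Each row of `weights` sums to 1, so every token's attention output is a weighted average over all tokens in the sequence. The feed-forward function, by contrast, gives the same result for a token whether it is processed alone or together with the rest of the sequence.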

iPhone 17 Series, iPhone 16, And MacBook Pro Models Get Huge Discount In Apple Holiday Season Sale- Details Here | Technology News

Apple Holiday Season Sale: As India enters the holiday season, Apple has rolled out its Holiday Season sale worldwide, including in India. The offers are now available on Apple’s official website. While direct price cuts on the newest devices are rare, customers can still save a lot through instant cashback and no-cost EMI options. Apple is offering discounts on all its products, including the latest iPhones, MacBooks, Watches, iPads, and AirPods. In addition, banks like American Express, Axis Bank, and ICICI Bank are giving extra benefits. Shoppers can get cashback of up to Rs 10,000 and enjoy no-cost EMIs for up to six months, depending on the product and the card used. To sweeten the deal, Apple is offering 3 months of free Apple Music subscription to those who buy an Apple Watch. Buyers can also claim 3 months of Apple TV subscription for free if they purchase an Apple device via Apple.in.

Apple iPhone 17 Series And iPhone 16: Discount Offers

The iPhone 17 series is now listed on Apple’s official website, Apple.in, with an instant cashback of Rs 5,000 on select bank cards. However, the standard iPhone 17 is out of stock at most stores, including Croma, Amazon, Flipkart, and Vijay Sales. Apple.in is still a reliable place to buy it, though it only gives Rs 1,000 as a card discount. Those who can wait may get better deals when stock increases. The iPhone 17 Pro, originally priced at Rs 1,34,900, comes with a Rs 5,000 instant discount for ICICI, American Express, and Axis Bank card users. Meanwhile, Apple also gives Rs 4,000 cashback on the iPhone 16 and iPhone 16 Plus. Other stores like Flipkart, Reliance Digital, and Vijay Sales are offering higher discounts of up to Rs 9,000.

Apple MacBook Air M4, MacBook Pro M4: Discount Offers

Apple’s official India website shows that the 13-inch MacBook Air M4 is available with an instant cashback of Rs 10,000.
Originally priced at Rs 99,900, it now has an effective price of Rs 89,900. The same Rs 10,000 cashback is also offered on the 14-inch and 16-inch MacBook Pro models. The 14-inch MacBook Pro M4, launched at Rs 1,69,900, is now available for Rs 1,59,900. The 16-inch MacBook Pro M4 Pro, originally Rs 2,49,900, can now be bought for Rs 2,39,900.

Apple Watch Series 11, iPad: Discount Offers

The Apple Watch Series 11 is available with a Rs 4,000 bank discount, while the Apple Watch SE 3 comes with Rs 2,000 off. Both AirPods Pro 3 and AirPods 4 offer Rs 1,000 cashback. The latest iPad Air models, including the 11-inch and 13-inch versions, have a Rs 4,000 discount, while the standard iPad and iPad mini are available with Rs 3,000 off. These offers make it easier for buyers to save on Apple’s latest gadgets.

Fine-Tuning a BERT Model – MachineLearningMastery.com

import collections
import dataclasses
import functools

import torch
import torch.nn as nn
import torch.optim as optim
import tqdm
from datasets import load_dataset
from tokenizers import Tokenizer
from torch import Tensor


# BERT config and model defined previously
@dataclasses.dataclass
class BertConfig:
    """Configuration for BERT model."""
    vocab_size: int = 30522
    num_layers: int = 12
    hidden_size: int = 768
    num_heads: int = 12
    dropout_prob: float = 0.1
    pad_id: int = 0
    max_seq_len: int = 512
    num_types: int = 2


class BertBlock(nn.Module):
    """One transformer block in BERT."""
    def __init__(self, hidden_size: int, num_heads: int, dropout_prob: float):
        super().__init__()
        self.attention = nn.MultiheadAttention(hidden_size, num_heads,
                                               dropout=dropout_prob, batch_first=True)
        self.attn_norm = nn.LayerNorm(hidden_size)
        self.ff_norm = nn.LayerNorm(hidden_size)
        self.dropout = nn.Dropout(dropout_prob)
        self.feed_forward = nn.Sequential(
            nn.Linear(hidden_size, 4 * hidden_size),
            nn.GELU(),
            nn.Linear(4 * hidden_size, hidden_size),
        )

    def forward(self, x: Tensor, pad_mask: Tensor) -> Tensor:
        # self-attention with padding mask and post-norm
        attn_output, _ = self.attention(x, x, x, key_padding_mask=pad_mask)
        x = self.attn_norm(x + attn_output)
        # feed-forward with GeLU activation and post-norm
        ff_output = self.feed_forward(x)
        x = self.ff_norm(x + self.dropout(ff_output))
        return x


class BertPooler(nn.Module):
    """Pooler layer for BERT to process the [CLS] token output."""
    def __init__(self, hidden_size: int):
        super().__init__()
        self.dense = nn.Linear(hidden_size, hidden_size)
        self.activation = nn.Tanh()

    def forward(self, x: Tensor) -> Tensor:
        x = self.dense(x)
        x = self.activation(x)
        return x


class BertModel(nn.Module):
    """Backbone of BERT model."""
    def __init__(self, config: BertConfig):
        super().__init__()
        # embedding layers
        self.word_embeddings = nn.Embedding(config.vocab_size, config.hidden_size,
                                            padding_idx=config.pad_id)
        self.type_embeddings = nn.Embedding(config.num_types, config.hidden_size)
        self.position_embeddings = nn.Embedding(config.max_seq_len, config.hidden_size)
        self.embeddings_norm = nn.LayerNorm(config.hidden_size)
        self.embeddings_dropout = nn.Dropout(config.dropout_prob)
        # transformer blocks
        self.blocks = nn.ModuleList([
            BertBlock(config.hidden_size, config.num_heads, config.dropout_prob)
            for _ in range(config.num_layers)
        ])
        # [CLS] pooler layer
        self.pooler = BertPooler(config.hidden_size)

    def forward(self, input_ids: Tensor, token_type_ids: Tensor, pad_id: int = 0,
                ) -> tuple[Tensor, Tensor]:
        # create attention mask for padding tokens
        pad_mask = input_ids == pad_id
        # convert integer tokens to embedding vectors
        batch_size, seq_len = input_ids.shape
        position_ids = torch.arange(seq_len, device=input_ids.device).unsqueeze(0)
        position_embeddings = self.position_embeddings(position_ids)
        type_embeddings = self.type_embeddings(token_type_ids)
        token_embeddings = self.word_embeddings(input_ids)
        x = token_embeddings + type_embeddings + position_embeddings
        x = self.embeddings_norm(x)
        x = self.embeddings_dropout(x)
        # process the sequence with transformer blocks
        for block in self.blocks:
            x = block(x, pad_mask)
        # pool the hidden state of the `[CLS]` token
        pooled_output = self.pooler(x[:, 0, :])
        return x, pooled_output


# Define new BERT model for question answering
class BertForQuestionAnswering(nn.Module):
    """BERT model for SQuAD question answering."""
    def __init__(self, config: BertConfig):
        super().__init__()
        self.bert = BertModel(config)
        # Two outputs: start and end position logits
        self.qa_outputs = nn.Linear(config.hidden_size, 2)

    def forward(self, input_ids: Tensor, token_type_ids: Tensor, pad_id: int = 0,
                ) -> tuple[Tensor, Tensor]:
        # Get sequence output from BERT (batch_size, seq_len, hidden_size)
        seq_output, pooled_output = self.bert(input_ids, token_type_ids, pad_id=pad_id)
        # Project to start and end logits
        logits = self.qa_outputs(seq_output)  # (batch_size, seq_len, 2)
        start_logits = logits[:, :, 0]  # (batch_size, seq_len)
        end_logits = logits[:, :, 1]  # (batch_size, seq_len)
        return start_logits, end_logits


# Load SQuAD dataset for question answering
dataset = load_dataset("squad")

# Load the pretrained BERT tokenizer
TOKENIZER_PATH = "wikitext-2_wordpiece.json"
tokenizer = Tokenizer.from_file(TOKENIZER_PATH)


# Setup collate function to tokenize question-context pairs for the model
def collate(batch: list[dict], tokenizer: Tokenizer, max_len: int,
            ) -> tuple[Tensor, Tensor, Tensor, Tensor]:
    """Collate question-context pairs for the model."""
    cls_id = tokenizer.token_to_id("[CLS]")
    sep_id = tokenizer.token_to_id("[SEP]")
    pad_id = tokenizer.token_to_id("[PAD]")

    input_ids_list = []
    token_type_ids_list = []
    start_positions = []
    end_positions = []

    for item in batch:
        # Tokenize question and context
        question, context = item["question"], item["context"]
        question_ids = tokenizer.encode(question).ids
        context_ids = tokenizer.encode(context).ids

        # Build input: [CLS] question [SEP] context [SEP]
        input_ids = [cls_id, *question_ids, sep_id, *context_ids, sep_id]
        token_type_ids = [0] * (len(question_ids)+2) + [1] * (len(context_ids)+1)

        # Truncate or pad to max length
        if len(input_ids) > max_len:
            input_ids = input_ids[:max_len]
            token_type_ids = token_type_ids[:max_len]
        else:
            input_ids.extend([pad_id] * (max_len - len(input_ids)))
            token_type_ids.extend([1] * (max_len - len(token_type_ids)))

        # Find answer position in tokens: Answer may not be in the context
        start_pos = end_pos = 0
        if len(item["answers"]["text"]) > 0:
            answers = tokenizer.encode(item["answers"]["text"][0]).ids
            # find the context offset of the answer in context_ids
            for i in range(len(context_ids) - len(answers) + 1):
                if context_ids[i:i+len(answers)] == answers:
                    start_pos = i + len(question_ids) + 2
                    end_pos = start_pos + len(answers) - 1
                    break
            if end_pos >= max_len:
                start_pos = end_pos = 0  # answer is clipped, hence no answer

        input_ids_list.append(input_ids)
        token_type_ids_list.append(token_type_ids)
        start_positions.append(start_pos)
        end_positions.append(end_pos)

    input_ids_list = torch.tensor(input_ids_list)
    token_type_ids_list = torch.tensor(token_type_ids_list)
    start_positions = torch.tensor(start_positions)
    end_positions = torch.tensor(end_positions)
    return (input_ids_list, token_type_ids_list, start_positions, end_positions)


batch_size = 16
max_len = 384  # Longer for Q&A to accommodate context
collate_fn = functools.partial(collate, tokenizer=tokenizer, max_len=max_len)
train_loader = torch.utils.data.DataLoader(dataset["train"], batch_size=batch_size,
                                           shuffle=True, collate_fn=collate_fn)
val_loader = torch.utils.data.DataLoader(dataset["validation"], batch_size=batch_size,
                                         shuffle=False, collate_fn=collate_fn)

# Create Q&A model with a pretrained foundation BERT model
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
config = BertConfig()
model = BertForQuestionAnswering(config)
model.to(device)
model.bert.load_state_dict(torch.load("bert_model.pth", map_location=device))

# Training setup
loss_fn = nn.CrossEntropyLoss()
optimizer = optim.AdamW(model.parameters(), lr=2e-5)
num_epochs = 3

for epoch in range(num_epochs):
    model.train()
    # Training
    with tqdm.tqdm(train_loader, desc=f"Epoch {epoch+1}/{num_epochs}") as pbar:
        for batch in pbar:
            # get batched data
            input_ids, token_type_ids, start_positions, end_positions = batch
            input_ids = input_ids.to(device)
            token_type_ids = token_type_ids.to(device)
            start_positions = start_positions.to(device)
            end_positions = end_positions.to(device)
            # forward pass
            start_logits, end_logits = model(input_ids, token_type_ids)
            # backward pass
            optimizer.zero_grad()
            start_loss = loss_fn(start_logits, start_positions)
            end_loss = loss_fn(end_logits, end_positions)
            loss = start_loss + end_loss
            loss.backward()
            optimizer.step()
            # update progress bar; iterating over pbar already advances it,
            # so no explicit pbar.update(1) is needed
            pbar.set_postfix(loss=float(loss))

    # Validation: Keep track of the average loss and accuracy
    model.eval()
    val_loss, num_matches, num_batches, num_samples = 0, 0, 0, 0
    with torch.no_grad():
        for batch in val_loader:
            # get batched data
            input_ids, token_type_ids, start_positions, end_positions = batch
            input_ids = input_ids.to(device)
            token_type_ids = token_type_ids.to(device)
            start_positions = start_positions.to(device)
            end_positions = end_positions.to(device)
            # forward pass on validation data
            start_logits, end_logits = model(input_ids, token_type_ids)
            # compute loss
            start_loss = loss_fn(start_logits, start_positions)
            end_loss = loss_fn(end_logits, end_positions)
            loss = start_loss + end_loss
            val_loss += loss.item()
            num_batches += 1
            # compute accuracy
            pred_start = start_logits.argmax(dim=-1)
            pred_end = end_logits.argmax(dim=-1)
            match = (pred_start == start_positions) & (pred_end == end_positions)
            num_matches += match.sum().item()
            num_samples += len(start_positions)
    avg_loss = val_loss / num_batches
    acc = num_matches / num_samples
    print(f"Validation {epoch+1}/{num_epochs}: acc {acc:.4f}, avg loss {avg_loss:.4f}")

# Save the fine-tuned model
torch.save(model.state_dict(), "bert_model_squad.pth")
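
The answer-span search inside `collate` is the step most likely to go wrong, so it may help to look at it in isolation. The sketch below pulls that logic into a hypothetical helper, `find_answer_span` (a name not used in the listing), and runs it on made-up integer token IDs instead of real tokenizer output. It assumes the same input layout as the listing, `[CLS] question [SEP] context [SEP]`, and the same convention that `(0, 0)` means "no answer". The early return for an empty answer is an added assumption, not part of the original code.

```python
def find_answer_span(question_ids: list[int], context_ids: list[int],
                     answer_ids: list[int], max_len: int) -> tuple[int, int]:
    """Locate the answer tokens inside [CLS] question [SEP] context [SEP].

    Returns (start, end) positions in the combined sequence, or (0, 0) if the
    answer is not found or would be cut off by truncation to max_len.
    """
    n = len(answer_ids)
    if n == 0:
        return 0, 0  # assumption: treat an empty answer as "no answer"
    # the context starts after [CLS], the question tokens, and the first [SEP]
    offset = len(question_ids) + 2
    for i in range(len(context_ids) - n + 1):
        if context_ids[i:i + n] == answer_ids:
            start = i + offset
            end = start + n - 1
            if end >= max_len:
                return 0, 0  # answer is clipped by truncation
            return start, end
    return 0, 0  # answer tokens do not appear in the context


# Made-up token IDs, purely for illustration
question_ids = [11, 12, 13]
context_ids = [21, 22, 23, 24, 25]
answer_ids = [23, 24]

print(find_answer_span(question_ids, context_ids, answer_ids, max_len=16))  # (7, 8)
print(find_answer_span(question_ids, context_ids, answer_ids, max_len=8))   # (0, 0)
```

In the first call the combined sequence is `[CLS] 11 12 13 [SEP] 21 22 23 24 25 [SEP]`, so the answer tokens `23 24` sit at positions 7 and 8. In the second call the sequence would be truncated to 8 tokens, the answer end falls outside it, and the sample is treated as having no answer.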