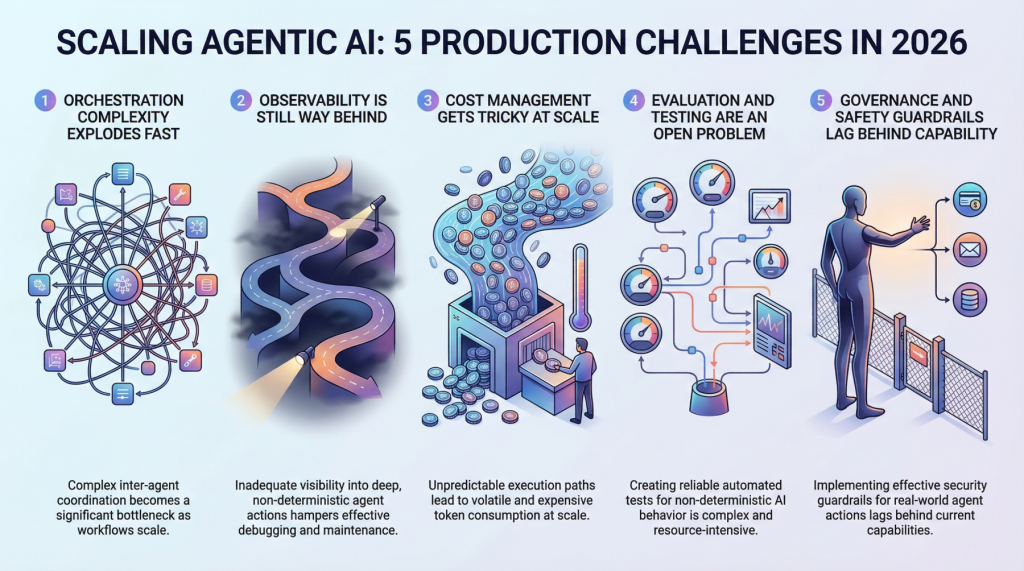

5 Production Scaling Challenges for Agentic AI in 2026

In this article, you will learn about five major challenges teams face when scaling agentic AI systems from prototype to production in 2026.

Topics we will cover include:

- Why orchestration complexity grows rapidly in multi-agent systems.
- How observability, evaluation, and cost control remain difficult in production environments.
- Why governance and safety guardrails are becoming essential as agentic systems take real-world actions.

Let’s not waste any more time.

5 Production Scaling Challenges for Agentic AI in 2026 (Image by Editor)

Introduction

Everyone’s building agentic AI systems right now, for better or for worse. The demos look incredible, the prototypes feel magical, and the pitch decks practically write themselves. But here’s what nobody’s tweeting about: getting these things to actually work at scale, in production, with real users and real stakes, is a completely different game.

The gap between a slick demo and a reliable production system has always existed in machine learning, but agentic AI stretches it wider than anything we’ve seen before. These systems make decisions, take actions, and chain together complex workflows autonomously. That’s powerful, and it’s also terrifying when things go sideways at scale. So let’s talk about the five biggest headaches teams are running into as they try to scale agentic AI in 2026.

1. Orchestration Complexity Explodes Fast

When you’ve got a single agent handling a narrow task, orchestration feels manageable. You define a workflow, set some guardrails, and things mostly behave. But production systems rarely stay that simple. The moment you introduce multi-agent architectures in which agents delegate to other agents, retry failed steps, or dynamically choose which tools to call, you’re dealing with orchestration complexity that grows almost exponentially. Teams are finding that the coordination overhead between agents becomes the bottleneck, not the individual model calls.
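
One small, common defense against coordination overhead is to cap how long a coordinator waits on any delegated agent, so a single slow or hung agent degrades one result instead of stalling the whole run. The sketch below is a minimal illustration using Python’s standard `asyncio` module; the sub-agents, their names, and the timeout values are hypothetical stand-ins (simulated with `asyncio.sleep`), not calls into any real agent framework.

```python
import asyncio


# Hypothetical sub-agent: simulates work with a sleep.
# In a real system this would wrap model or tool calls.
async def sub_agent(name: str, delay: float) -> str:
    await asyncio.sleep(delay)
    return f"{name}: done"


async def delegate(name: str, delay: float, timeout: float) -> str:
    """Run one delegated agent, but never wait on it longer than `timeout` seconds."""
    try:
        return await asyncio.wait_for(sub_agent(name, delay), timeout=timeout)
    except asyncio.TimeoutError:
        # Degrade gracefully instead of letting one slow agent block the coordinator.
        return f"{name}: timed out"


async def coordinator() -> list[str]:
    # Fan out to sub-agents concurrently; results come back in call order.
    return await asyncio.gather(
        delegate("researcher", 0.01, timeout=0.5),
        delegate("summarizer", 0.02, timeout=0.5),
        delegate("slow_tool_agent", 5.0, timeout=0.1),  # will exceed its budget
    )


if __name__ == "__main__":
    for line in asyncio.run(coordinator()):
        print(line)
```

Timing out is only one policy. A production coordinator might instead retry, fall back to a cheaper path, or escalate, and each of those choices adds its own coordination logic to maintain.
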
You’ve got agents waiting on other agents, race conditions popping up in async pipelines, and cascading failures that are genuinely hard to reproduce in staging environments. Traditional workflow engines weren’t designed for this level of dynamic decision-making, and most teams end up building custom orchestration layers that quickly become the hardest part of the entire stack to maintain.

The real kicker is that these systems behave differently under load. An orchestration pattern that works beautifully at 100 requests per minute can completely fall apart at 10,000. Debugging that gap requires a kind of systems thinking that most machine learning teams are still developing.

2. Observability Is Still Way Behind

You can’t fix what you can’t see, and right now, most teams can’t see nearly enough of what their agentic systems are doing in production. Traditional machine learning monitoring tracks things like latency, throughput, and model accuracy. Those metrics still matter, but they barely scratch the surface of agentic workflows.

When an agent takes a 12-step journey to answer a user query, you need to understand every decision point along the way. Why did it choose Tool A over Tool B? Why did it retry step 4 three times? Why did the final output completely miss the mark, despite every intermediate step looking fine?

The tracing infrastructure for this kind of deep observability is still immature. Most teams cobble together some combination of LangSmith, custom logging, and a lot of hope.

What makes it harder is that agentic behavior is non-deterministic by nature. The same input can produce wildly different execution paths, which means you can’t just snapshot a failure and replay it reliably. Building robust observability for systems that are inherently unpredictable remains one of the biggest unsolved problems in the space.

3. Cost Management Gets Tricky at Scale

Here’s something that catches a lot of teams off guard: agentic systems are expensive to run.
Each agent action typically involves one or more LLM calls, and when agents are chaining together dozens of steps per request, the token costs add up shockingly fast. A workflow that costs $0.15 per execution sounds fine until you’re processing 500,000 requests a day, which comes to $75,000 a day.

Smart teams are getting creative with cost optimization. They’re routing simpler sub-tasks to smaller, cheaper models while reserving the heavy hitters for complex reasoning steps. They’re caching intermediate results aggressively and building kill switches that terminate runaway agent loops before they burn through the budget. But there’s a constant tension between cost efficiency and output quality, and finding the right balance requires ongoing experimentation.

The billing unpredictability is what really stresses out engineering leads. Unlike traditional APIs, where you can estimate costs fairly accurately, agentic systems have variable execution paths that make cost forecasting genuinely difficult. One edge case can trigger a chain of retries that costs 50 times more than the normal path.

4. Evaluation and Testing Are an Open Problem

How do you test a system that can take a different path every time it runs? That’s the question keeping machine learning engineers up at night. Traditional software testing assumes deterministic behavior, and traditional machine learning evaluation assumes a fixed input-output mapping. Agentic AI breaks both assumptions simultaneously.

Teams are experimenting with a range of approaches. Some are building LLM-as-a-judge pipelines in which a separate model evaluates the agent’s outputs. Others are creating scenario-based test suites that check for behavioral properties rather than exact outputs. A few are investing in simulation environments where agents can be stress-tested against thousands of synthetic scenarios before hitting production. But none of these approaches feels truly mature yet.
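
To make “checking behavioral properties rather than exact outputs” more concrete, here is a minimal sketch. It assumes each agent run is recorded as a trajectory, meaning an ordered list of tool calls plus a final answer; the `Trajectory` structure, the tool names, and the specific properties are hypothetical examples, not part of any particular evaluation framework.

```python
from dataclasses import dataclass, field


# Hypothetical record of one agent run: ordered tool calls and the final answer.
@dataclass
class Trajectory:
    tool_calls: list[str] = field(default_factory=list)
    final_answer: str = ""


# Each property returns an error message, or None if the property holds.
def max_steps(limit: int):
    def check(t: Trajectory):
        if len(t.tool_calls) > limit:
            return f"used {len(t.tool_calls)} steps (limit {limit})"
        return None
    return check


def forbids_tool(tool: str):
    def check(t: Trajectory):
        return f"called forbidden tool {tool!r}" if tool in t.tool_calls else None
    return check


def no_immediate_repeats(t: Trajectory):
    # The same tool called twice in a row is a rough signal of a stuck loop.
    for a, b in zip(t.tool_calls, t.tool_calls[1:]):
        if a == b:
            return f"repeated {a!r} back to back"
    return None


def answer_mentions(keyword: str):
    def check(t: Trajectory):
        return None if keyword.lower() in t.final_answer.lower() else f"answer lacks {keyword!r}"
    return check


def evaluate(t: Trajectory, properties) -> list[str]:
    """Return every property violation; an empty list means the run passed."""
    return [msg for prop in properties if (msg := prop(t)) is not None]


if __name__ == "__main__":
    props = [max_steps(5), forbids_tool("delete_records"),
             no_immediate_repeats, answer_mentions("refund")]
    good = Trajectory(["lookup_order", "check_policy"], "Refund approved for order 42.")
    bad = Trajectory(["lookup_order", "lookup_order", "delete_records"], "Done.")
    print(evaluate(good, props))  # []
    print(evaluate(bad, props))
```

Because the checks ignore the exact wording and path, two runs that take different routes can both pass, which is the point of property-based evaluation for non-deterministic agents.
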
The evaluation tooling is fragmented, benchmarks are inconsistent, and there’s no industry consensus on what “good” even looks like for a complex agentic workflow. Most teams end up relying heavily on human review, which obviously doesn’t scale.

5. Governance and Safety Guardrails Lag Behind Capability

Agentic AI systems can take real actions in the real world. They can send emails, modify databases, execute transactions, and interact with external services. The safety implications of that autonomy are significant, and governance frameworks haven’t kept pace with how quickly these capabilities are being deployed.

The challenge is implementing guardrails that are robust enough to prevent harmful actions without being so restrictive that they kill the usefulness of

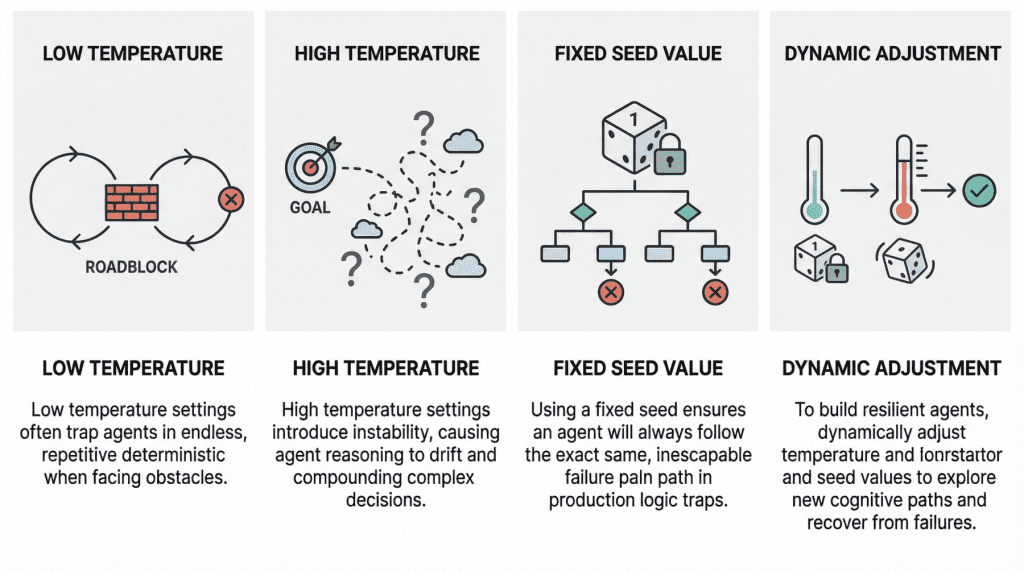

Why Agents Fail: The Role of Seed Values and Temperature in Agentic Loops

In this article, you will learn how temperature and seed values influence failure modes in agentic loops, and how to tune them for greater resilience.

Topics we will cover include:

- How low and high temperature settings can produce distinct failure patterns in agentic loops.
- Why fixed seed values can undermine robustness in production environments.
- How to use temperature and seed adjustments to build more resilient and cost-effective agent workflows.

Let’s not waste any more time.

Why Agents Fail: The Role of Seed Values and Temperature in Agentic Loops (Image by Editor)

Introduction

In the modern AI landscape, an agent loop is a cyclic, repeatable, and continuous process in which an AI agent, acting with a certain degree of autonomy, works toward a goal. In practice, agent loops now wrap a large language model (LLM) so that, instead of reacting only to single-prompt interactions, they implement a variation of the Observe-Reason-Act cycle defined for classic software agents decades ago.

Agents are, of course, not infallible. They sometimes fail because of poor prompting or a lack of access to the external tools they need to reach a goal. However, two less visible steering mechanisms can also influence failure: temperature and seed value. This article analyzes both from the perspective of failure in agent loops. Let’s take a closer look at how these settings may relate to failure through a gentle discussion backed by recent research and production diagnoses.

Temperature: “Reasoning Drift” Vs. “Deterministic Loop”

Temperature is a sampling parameter applied when an LLM generates text. It controls how much randomness goes into selecting the words, or tokens, that make up the model’s response. The higher its value, the less deterministic and more unpredictable the model’s outputs become, and vice versa. Many APIs accept values above 1 (OpenAI’s, for example, goes up to 2), but this article assumes a range between 0 and 1, so “high” means closer to 1.
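
Under the hood, the common formulation divides each candidate token’s logit z_i by the temperature T before applying softmax, so p_i = exp(z_i / T) / Σ_j exp(z_j / T). As T approaches 0, nearly all probability mass lands on the top-ranked token (greedy decoding); larger T flattens the distribution. The sketch below shows the general technique with three made-up logits; it is not the decoder of any specific model, and the logit values are arbitrary assumptions.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities after dividing them by the temperature."""
    if temperature <= 0:
        # Treat T <= 0 as greedy decoding: all mass on the highest logit.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max before exponentiating, for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


if __name__ == "__main__":
    # Hypothetical logits for three candidate next tokens (e.g. three tool names).
    logits = [2.0, 1.0, 0.5]
    for t in (0.0, 0.2, 0.7, 1.0):
        probs = softmax_with_temperature(logits, t)
        print(f"T={t}: " + ", ".join(f"{p:.3f}" for p in probs))
```

At low T the top candidate dominates almost completely, which is the mechanism behind the rigid behavior discussed next; at higher T the runner-up candidates get sampled far more often.
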
In agentic loops, because LLMs sit at the core, understanding temperature is crucial to understanding several distinctive, well-documented failure modes that may arise, particularly when the temperature is extremely low or high.

A low-temperature (near 0) agent often exhibits the so-called deterministic loop failure: its behavior becomes too rigid. Suppose the agent comes across a “roadblock” on its path, such as a third-party API that consistently returns an error. With a low temperature and highly deterministic behavior, it lacks the randomness, or exploration, needed to pivot. Recent studies have analyzed this phenomenon. The practical consequences typically observed range from agents finalizing missions prematurely to failing to coordinate when their initial plans encounter friction, ending up in loops that repeat the same attempts over and over without any progress.

At the opposite end of the spectrum are high-temperature (0.8 or above) agentic loops. As with standalone LLMs, high temperature broadens the range of possibilities when sampling each element of the response. In a multi-step loop, however, this randomness may compound dangerously, leading to a failure mode known as reasoning drift. In essence, reasoning drift is instability in decision-making: high-temperature randomness in complex agent workflows may cause agents to lose their way, that is, to lose the original criteria they used for making decisions. Symptoms include hallucinations (fabricated reasoning chains) or even forgetting the user’s initial goal.

Seed Value: Reproducibility

A seed value initializes the pseudo-random number generator used when sampling the model’s output. Put more simply, the seed is like the starting position of a die that is rolled to kickstart the model’s word-selection mechanism: with the same seed, input, and settings, the sampler tends to make the same sequence of choices.
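
A toy illustration of why a seed makes sampling reproducible: below, a hypothetical agent picks its next actions by sampling from fixed probabilities with Python’s `random.Random`. This simulates the effect of a seed on any seeded sampler; it does not reproduce how a particular LLM provider implements its seed parameter, and hosted APIs often describe seeded determinism as best-effort only. The action names and weights are invented for illustration.

```python
import random

# Hypothetical next-action distribution for an agent facing a failing API call.
ACTIONS = ["retry_same_call", "switch_endpoint", "ask_user"]
WEIGHTS = [0.6, 0.3, 0.1]


def sample_plan(seed: int, steps: int = 3) -> list[str]:
    """Sample a short sequence of actions using a generator seeded with `seed`."""
    rng = random.Random(seed)  # same seed -> same pseudo-random sequence
    return rng.choices(ACTIONS, weights=WEIGHTS, k=steps)


if __name__ == "__main__":
    # Same seed twice: identical plans, which is what makes tests reproducible.
    print(sample_plan(seed=42))
    print(sample_plan(seed=42))
    # Different seeds: the plans can differ.
    for seed in (1, 2, 3):
        print(seed, sample_plan(seed=seed))
```

The same property that makes a test repeatable is what can trap an agent in production: if nothing else changes between attempts, a fixed seed replays the same choices.
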
Regarding this setting, the main problem that usually causes failure in agent loops is using a fixed seed in production. A fixed seed is reasonable in a testing environment, for example, for the sake of reproducibility in tests and experiments, but letting it make its way into production introduces a significant vulnerability.

An agent operating with a fixed seed may inadvertently enter a logic trap. The system may automatically trigger a recovery attempt, but if that retry presents the model with essentially the same context, the fixed seed makes it very likely that the agent will follow the same doomed reasoning path over and over again.

In practical terms, imagine an agent tasked with debugging a failed deployment by inspecting logs, proposing a fix, and then retrying the operation. If the loop runs with a fixed seed, the stochastic choices made by the model during each reasoning step may remain effectively “locked” into the same pattern every time recovery is triggered. As a result, the agent may keep selecting the same flawed interpretation of the logs, calling the same tool in the same order, or generating the same ineffective fix despite repeated retries. What looks like persistence at the system level is, in reality, repetition at the cognitive level.

This is why resilient agent architectures often treat the seed as a controllable recovery lever: when the system detects that the agent is stuck, changing the seed can help force exploration of a different reasoning trajectory, increasing the chances of escaping a local failure mode rather than reproducing it indefinitely.

A summary of the role of seed values and temperature in agentic loops (Image by Editor)

Best Practices For Resilient And Cost-Effective Loops

Having learned about the impact that temperature and seed value may have on agent loops, one might wonder how to make these loops more resilient to failure by setting these two parameters carefully.
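
One way to wire this up is a retry wrapper that watches for repeated failures and, once the agent looks stuck, retries with a fresh seed and a temporarily higher temperature, all within a fixed attempt budget so recovery cannot run up costs indefinitely. Everything here is a hypothetical sketch: `run_step` stands in for a model or tool call that accepts `temperature` and `seed` arguments, the “stuck” test is a simple repeated-output check, and the temperature values are illustrative rather than recommended settings.

```python
import random
from typing import Callable, Optional

# Hypothetical step signature: (temperature, seed) -> (output, succeeded).
# A real step would call a model and tools with these sampling settings.
StepFn = Callable[[float, int], tuple[str, bool]]


def run_with_recovery(
    run_step: StepFn,
    base_temperature: float = 0.2,
    boosted_temperature: float = 0.7,
    max_attempts: int = 4,
    initial_seed: int = 1234,
) -> Optional[str]:
    """Retry a failing step; if a failed output repeats, rotate the seed and raise the temperature."""
    seed = initial_seed
    temperature = base_temperature
    seen_failures: set[str] = set()
    seed_source = random.Random()  # separate generator used only to draw fresh seeds

    for _ in range(max_attempts):  # hard cap keeps recovery costs bounded
        output, ok = run_step(temperature, seed)
        if ok:
            return output
        if output in seen_failures:
            # The same failed output came back: treat the agent as stuck and
            # push it onto a different trajectory.
            seed = seed_source.randrange(2**31)
            temperature = boosted_temperature
        seen_failures.add(output)
    return None  # budget exhausted: hand off to a fallback or a human


# Toy stand-in (hypothetical): at low temperature it keeps proposing the same
# failing fix; at higher temperature it finds a different one.
def fake_step(temperature: float, seed: int) -> tuple[str, bool]:
    if temperature < 0.5:
        return "restart the service", False
    return f"roll back the config (seed {seed})", True


if __name__ == "__main__":
    print(run_with_recovery(fake_step))
```

With `fake_step`, the first two attempts return the same failed fix, the repeat triggers a new seed and a higher temperature, and the third attempt succeeds. Detecting “stuck” from repeated outputs is only one heuristic; a real agent might compare tool-call sequences or intermediate states instead.
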
Basically, breaking out of failure in agentic loops often entails changing the seed value or temperature as part of retry efforts to seek a different cognitive path. Resilient agents usually implement approaches that dynamically adjust these parameters in edge cases, for instance by temporarily raising the temperature or randomizing the seed if an analysis of the agent’s state suggests it is stuck. The bad news is that this can become very expensive to test when commercial APIs are used, which is why open-weight models, local models,