Everything You Need to Know About Recursive Language Models

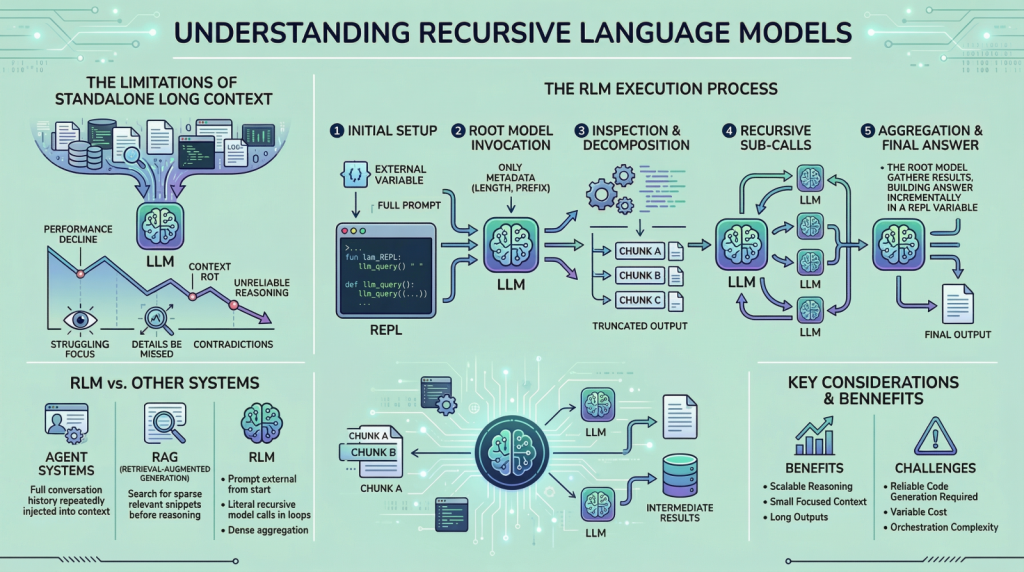

In this article, you will learn what recursive language models are, why they matter for long-input reasoning, and how they differ from standard long-context prompting, retrieval, and agentic systems.

Topics we will cover include:

- Why long context alone does not solve reasoning over very large inputs
- How recursive language models use an external runtime and recursive sub-calls to process information
- The main tradeoffs, limitations, and practical use cases of this approach

Let’s get right to it.

Image by Editor

Introduction

If you are here, you have probably heard about recent work on recursive language models. The idea has been trending across LinkedIn and X, and it led me to study the topic more deeply and share what I learned with you.

I think we can all agree that large language models (LLMs) have improved rapidly over the past few years, especially in their ability to handle large inputs. This progress has led many people to assume that long context is largely a solved problem, but it is not. If you have tried giving models very long inputs close to, or equal to, their context window, you might have noticed that they become less reliable. They often miss details present in the provided information, contradict earlier statements, or produce shallow answers instead of doing careful reasoning. This issue is often referred to as “context rot”, which is quite an interesting name.

Recursive language models (RLMs) are a response to this problem. Instead of pushing more and more text into a single forward pass of a language model, RLMs change how the model interacts with long inputs in the first place. In this article, we will look at what they are, how they work, and the kinds of problems they are designed to solve.

Why Long Context Is Not Enough

You can skip this section if you already understand the motivation from the introduction.
But if you are curious, or if the idea did not fully click the first time, let me break it down further.

The way these LLMs work is fairly simple. Everything we want the model to consider is given to it as a single prompt, and based on that information, the model generates the output token by token. This works well when the prompt is short. However, when it becomes very long, performance starts to degrade. This is not necessarily due to memory limits. Even if the model can see the complete prompt, it often fails to use it effectively.

Here are some reasons that may contribute to this behavior:

- These LLMs are mainly transformer-based models with an attention mechanism. As the prompt grows longer, attention becomes more diffuse. The model struggles to focus sharply on what matters when it has to attend to tens or hundreds of thousands of tokens.
- Another reason is the presence of heterogeneous information mixed together, such as logs, documents, code, chat history, and intermediate outputs.
- Lastly, many tasks are not just about retrieving or finding a relevant snippet in a huge body of content. They often involve aggregating information across the entire input.

Because of the problems discussed above, people proposed ideas such as summarization and retrieval. These approaches do help in some cases, but they are not universal solutions. Summaries are lossy by design, and retrieval assumes that relevance can be identified reliably before reasoning begins. Many real-world tasks violate these assumptions.

This is why RLMs suggest a different approach. Instead of forcing the model to absorb the entire prompt at once, they let the model actively explore and process the prompt. Now that we have the basic background, let us look more closely at how this works.

How a Recursive Language Model Works in Practice

In an RLM setup, the prompt is treated as part of the external environment. This means the model does not read the entire input directly.
Instead, the input sits outside the model, often as a variable, and the model is given only metadata about the prompt along with instructions on how to access it. When the model needs information, it issues commands to examine specific parts of the prompt. This simple design keeps the model’s internal context small and focused, even when the underlying input is extremely large.

To understand RLMs more concretely, let us walk through a typical execution step by step.

Step 1: Initializing a Persistent REPL Environment

At the beginning of an RLM run, the system initializes a runtime environment, typically a Python REPL. This environment contains:

- A variable holding the full user prompt, which may be arbitrarily large
- A function (for example, llm_query(…) or sub_RLM(…)) that allows the system to invoke additional language model calls on selected pieces of text

From the user’s perspective, the interface remains simple, with a textual input and an output, but internally the REPL acts as scaffolding that enables scalable reasoning.

Step 2: Invoking the Root Model with Prompt Metadata Only

The root language model is then invoked, but it does not receive the full prompt. Instead, it is given:

- Constant-size metadata about the prompt, such as its length or a short prefix
- Instructions describing the task
- Access instructions for interacting with the prompt via the REPL environment

By withholding the full prompt, the system forces the model to interact with the input intentionally, rather than passively absorbing it into the context window. From this point onward, the model interacts with the prompt indirectly.

Step 3: Inspecting and Decomposing the Prompt via Code Execution

The model might begin by inspecting the structure of the input. For example, it can print the first few lines, search for headings, or split the text into chunks based on delimiters. These operations are performed by generating code, which is then executed in the environment.
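The first three steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the implementation from the RLM work: `build_metadata`, `run_in_repl`, and `MAX_OBSERVATION_CHARS` are hypothetical names, `llm_query` is a stub standing in for a real sub-model call, the toy prompt is made up, and the clipping of long output is one simple way a system could keep observations short.

```python
import contextlib
import io

MAX_OBSERVATION_CHARS = 200  # assumed limit on what is shown back to the model


def llm_query(text: str) -> str:
    """Stub for a sub-model call; a real system would invoke an LLM here."""
    return f"[stub answer over {len(text)} characters]"


def build_metadata(prompt: str, prefix_chars: int = 40) -> dict:
    """Constant-size description of the prompt (Step 2)."""
    return {"length": len(prompt), "prefix": prompt[:prefix_chars]}


def run_in_repl(code: str, env: dict) -> str:
    """Execute model-generated code in the persistent environment (Step 3)."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, env)  # variables defined by the code persist in env
    output = buffer.getvalue()
    if len(output) > MAX_OBSERVATION_CHARS:
        output = output[:MAX_OBSERVATION_CHARS] + "...[truncated]"
    return output


# Step 1: the full prompt lives in the environment, not in the model context.
long_prompt = "\n".join(f"# Section {i}\nbody text {i}" for i in range(1000))
env = {"prompt": long_prompt, "llm_query": llm_query}

# Step 2: the root model would receive only this metadata plus instructions.
metadata = build_metadata(long_prompt)

# Step 3: code the model might generate to inspect the structure of the input.
model_code = "lines = prompt.splitlines()\nprint(len(lines))\nprint(lines[:3])"
observation = run_in_repl(model_code, env)

# A later call can reuse `lines`, because the environment is persistent.
follow_up = run_in_repl("print(len(lines))", env)

print(metadata)
print(observation)
```

Because `env` is an ordinary dictionary reused across calls, anything the generated code defines stays available for later steps, which is the sense in which the environment is persistent in this sketch.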
The outputs of these operations are truncated before being shown to the model, ensuring that the context window is not overwhelmed.

Step 4: Issuing Recursive Sub-Calls on Selected Slices

Once the model understands the structure of the

7 Readability Features for Your Next Machine Learning Model

In this article, you will learn how to extract seven useful readability and text-complexity features from raw text using the Textstat Python library.

Topics we will cover include:

- How Textstat can quantify readability and text complexity for downstream machine learning tasks.
- How to compute seven commonly used readability metrics in Python.
- How to interpret these metrics when using them as features for classification or regression models.

Let’s not waste any more time.

Image by Editor

Introduction

Unlike fully structured tabular data, text data typically requires preparation steps such as tokenization, embeddings, or sentiment analysis before it can be used in machine learning models. While these are undoubtedly useful features, the structural complexity of text, or its readability for that matter, can also constitute an incredibly informative feature for predictive tasks such as classification or regression.

Textstat, as its name suggests, is a lightweight and intuitive Python library that can help you obtain statistics from raw text. Through readability scores, it provides input features for models that can help distinguish between a casual social media post, a children’s fairy tale, or a philosophy manuscript, to name a few.

This article introduces seven insightful examples of text analysis that can be easily conducted using the Textstat library.

Before we get started, make sure you have Textstat installed (for example, with pip install textstat).

While the analyses described here can be scaled up to a large text corpus, we will illustrate them with a toy dataset consisting of a small number of labeled texts. Bear in mind, however, that for downstream machine learning model training and inference, you will need a sufficiently large dataset.

```python
import pandas as pd
import textstat

# Create a toy dataset with three markedly different texts
data = {
    'Category': ['Simple', 'Standard', 'Complex'],
    'Text': [
        "The cat sat on the mat. It was a sunny day. The dog played outside.",
        "Machine learning algorithms build a model based on sample data, known as training data, to make predictions.",
        "The thermodynamic properties of the system dictate the spontaneous progression of the chemical reaction, contingent upon the activation energy threshold."
    ]
}

df = pd.DataFrame(data)
print("Environment set up and dataset ready!")
```

1. Applying the Flesch Reading Ease Formula

The first text analysis metric we will explore is the Flesch Reading Ease formula, one of the earliest and most widely used metrics for quantifying text readability. It evaluates a text based on the average sentence length and the average number of syllables per word.
While it is conceptually meant to take values in the 0 to 100 range, with 0 meaning unreadable and 100 meaning very easy to read, its formula is not strictly bounded, as shown in the examples below:

```python
# Score each text with the Flesch Reading Ease formula
df['Flesch_Ease'] = df['Text'].apply(textstat.flesch_reading_ease)

print("Flesch Reading Ease Scores:")
print(df[['Category', 'Flesch_Ease']])
```

Output:

```
Flesch Reading Ease Scores:
   Category  Flesch_Ease
0    Simple   105.880000
1  Standard    45.262353
2   Complex    -8.045000
```

This is what the actual formula looks like:

$$ 206.835 - 1.015 \left( \frac{\text{total words}}{\text{total sentences}} \right) - 84.6 \left( \frac{\text{total syllables}}{\text{total words}} \right) $$

Unbounded formulas like Flesch Reading Ease can hinder the proper training of a machine learning model, which is something to take into consideration during later feature engineering tasks.

2. Computing Flesch-Kincaid Grade Levels

Unlike the Reading Ease score, which provides a single readability value, the Flesch-Kincaid Grade Level assesses text complexity using a scale similar to US school grade levels. In this case, higher values indicate greater complexity. Be warned, though: like the Flesch Reading Ease score, this metric is unbounded, so extremely simple texts can yield scores below zero and extremely complex texts can yield arbitrarily high values.
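To make the two Flesch formulas concrete, here is a minimal sketch that recomputes both scores for the "Simple" text from raw totals. The totals (15 words, 3 sentences, 17 syllables) were counted by hand and are an assumption of this example, as are the helper function names. The grade-level coefficients are the commonly published Flesch-Kincaid ones, which this article does not spell out. Textstat estimates syllables heuristically, so its internal counts may differ on other texts.

```python
def reading_ease_from_counts(words: int, sentences: int, syllables: int) -> float:
    """Flesch Reading Ease computed directly from raw totals."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def grade_level_from_counts(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid Grade Level with the commonly published coefficients."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


# Hand-counted totals for:
# "The cat sat on the mat. It was a sunny day. The dog played outside."
words, sentences, syllables = 15, 3, 17

ease = reading_ease_from_counts(words, sentences, syllables)
grade = grade_level_from_counts(words, sentences, syllables)

print(f"Reading ease: {ease:.2f}")
print(f"Grade level:  {grade:.6f}")
```

Both values agree with the Simple row of the corresponding output tables, which shows how short sentences made of short words push the ease score above 100 and the grade level below zero.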
```python
# Estimate the US school grade level needed to read each text
df['Flesch_Grade'] = df['Text'].apply(textstat.flesch_kincaid_grade)

print("Flesch-Kincaid Grade Levels:")
print(df[['Category', 'Flesch_Grade']])
```

Output:

```
Flesch-Kincaid Grade Levels:
   Category  Flesch_Grade
0    Simple     -0.266667
1  Standard     11.169412
2   Complex     19.350000
```

3. Computing the SMOG Index

Another measure with origins in assessing text complexity is the SMOG Index, which estimates the years of formal education required to comprehend a text. It is based on the number of polysyllabic words, that is, words with three or more syllables. This formula is somewhat more bounded than others, as it has a strict mathematical floor slightly above 3. The simplest of our three example texts sits exactly at that floor.

```python
# Estimate the years of education needed to understand each text
df['SMOG_Index'] = df['Text'].apply(textstat.smog_index)

print("SMOG Index Scores:")
print(df[['Category', 'SMOG_Index']])
```

Output:

```
SMOG Index Scores:
   Category  SMOG_Index
0    Simple    3.129100
1  Standard   11.208143
2   Complex   20.267339
```

4. Calculating the Gunning Fog Index

Like the SMOG Index, the Gunning Fog Index also has a strict floor, in this case equal to zero. The reason is straightforward: it combines the percentage of complex words with the average sentence length, and neither quantity can be negative. It is a popular metric for analyzing business texts and ensuring that technical or domain-specific content is accessible to a wider audience.
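The floors described for the SMOG and Gunning Fog indices follow from the shape of their formulas. The sketch below uses the commonly published versions of both formulas, which this article does not state, and hand-counted totals for the Simple and Complex texts. The counts and the helper function names are assumptions of this example, and Textstat's own word and syllable heuristics may give different counts on other inputs.

```python
import math


def smog_from_counts(polysyllabic_words: int, sentences: int) -> float:
    """SMOG Index: the constant term 3.1291 is the floor of the score."""
    return 1.043 * math.sqrt(30 * polysyllabic_words / sentences) + 3.1291


def gunning_fog_from_counts(words: int, sentences: int, complex_words: int) -> float:
    """Gunning Fog Index: both terms are non-negative, so it cannot go below zero."""
    return 0.4 * (words / sentences + 100 * complex_words / words)


# Simple text: 15 words, 3 sentences, no words with three or more syllables.
smog_simple = smog_from_counts(polysyllabic_words=0, sentences=3)
fog_simple = gunning_fog_from_counts(words=15, sentences=3, complex_words=0)

# Complex text: 20 words, 1 sentence, 9 words with three or more syllables.
smog_complex = smog_from_counts(polysyllabic_words=9, sentences=1)
fog_complex = gunning_fog_from_counts(words=20, sentences=1, complex_words=9)

print(f"SMOG: simple={smog_simple:.4f}, complex={smog_complex:.6f}")
print(f"Fog:  simple={fog_simple:.1f}, complex={fog_complex:.1f}")
```

With no polysyllabic words the square-root term vanishes, so the Simple text lands exactly on the SMOG constant, while its Gunning Fog score reduces to 0.4 times the average sentence length.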
```python
# Combine average sentence length with the share of complex words
df['Gunning_Fog'] = df['Text'].apply(textstat.gunning_fog)

print("Gunning Fog Index:")
print(df[['Category', 'Gunning_Fog']])
```

Output:

```
Gunning Fog Index:
   Category  Gunning_Fog
0    Simple     2.000000
1  Standard    11.505882
2   Complex    26.000000
```

5. Calculating the Automated
