iQOO Z11 Turbo: Chinese smartphone maker iQOO has launched the iQOO Z11 Turbo in China as the latest addition to its Z-series lineup. The device was introduced on Thursday and is now available for purchase through the Vivo online store in the country. The phone comes in four colour options (Polar Night Black, Skylight White, Canglang Fuguang, and Halo Powder) and five RAM and storage configurations.

Variant-wise prices in China, with the expected price in Indian rupees, are listed below:

| Variant (RAM + Storage) | Price (CNY) | Expected price (Rs) |
|---|---|---|
| 12GB + 256GB | 2,699 | 35,999 |
| 16GB + 256GB | 2,999 | 39,000 |
| 12GB + 512GB | 3,199 | 41,000 |
| 16GB + 512GB | 3,499 | 45,000 |
| 16GB + 1TB | 3,999 | 52,000 |

The smartphone features a 6.59-inch AMOLED display with 1.5K resolution and a 144Hz refresh rate. It supports HDR content and offers a screen-to-body ratio of over 94 percent. The phone runs on Android 16-based OriginOS 6 and supports dual SIM functionality. iQOO has also confirmed IP68 and IP69 ratings, making the device resistant to dust and water.

No More Use Of ChatGPT On WhatsApp: Meta’s New Rules End Access For 50M Users – Check How To Save Your Chat History

OpenAI’s popular AI chatbot, ChatGPT, can no longer be used on WhatsApp starting today, January 15, 2026. This change comes after Meta, WhatsApp’s parent company, updated its business API policies to restrict general-purpose AI chatbots like ChatGPT. More than 50 million users who chatted, created, and learned with ChatGPT through WhatsApp have now lost that access.

In October 2025, Meta introduced new rules to stop AI companies from using WhatsApp as a main hub for broad AI assistants. The policy blocks services that run open-ended conversations or share user data for AI training. OpenAI confirmed the end of support, saying it would have preferred to stay but must follow the terms. The change affects text chats and calls to the number +1 (800) 242-8478.

Users in India and worldwide are affected, as WhatsApp has billions of active users. Many relied on ChatGPT for quick answers, image generation, and web searches right in their chats.

How To Save Your Chat History?

OpenAI has urged users to act fast to keep their conversations. Visit the ChatGPT contact profile in WhatsApp and click the link to connect your account. This links your phone number to ChatGPT and moves past chats to the official app. WhatsApp does not allow direct exports, so this is the only way to keep them before access cuts off completely. After linking, users can unlink their number if they want.

ChatGPT remains free to use on the Android and iOS apps, on desktop, and on the web. OpenAI has also launched its Atlas browser for Mac, with more platforms coming. Paid users get extra tools such as agent mode for tasks like research or tab cleanup.

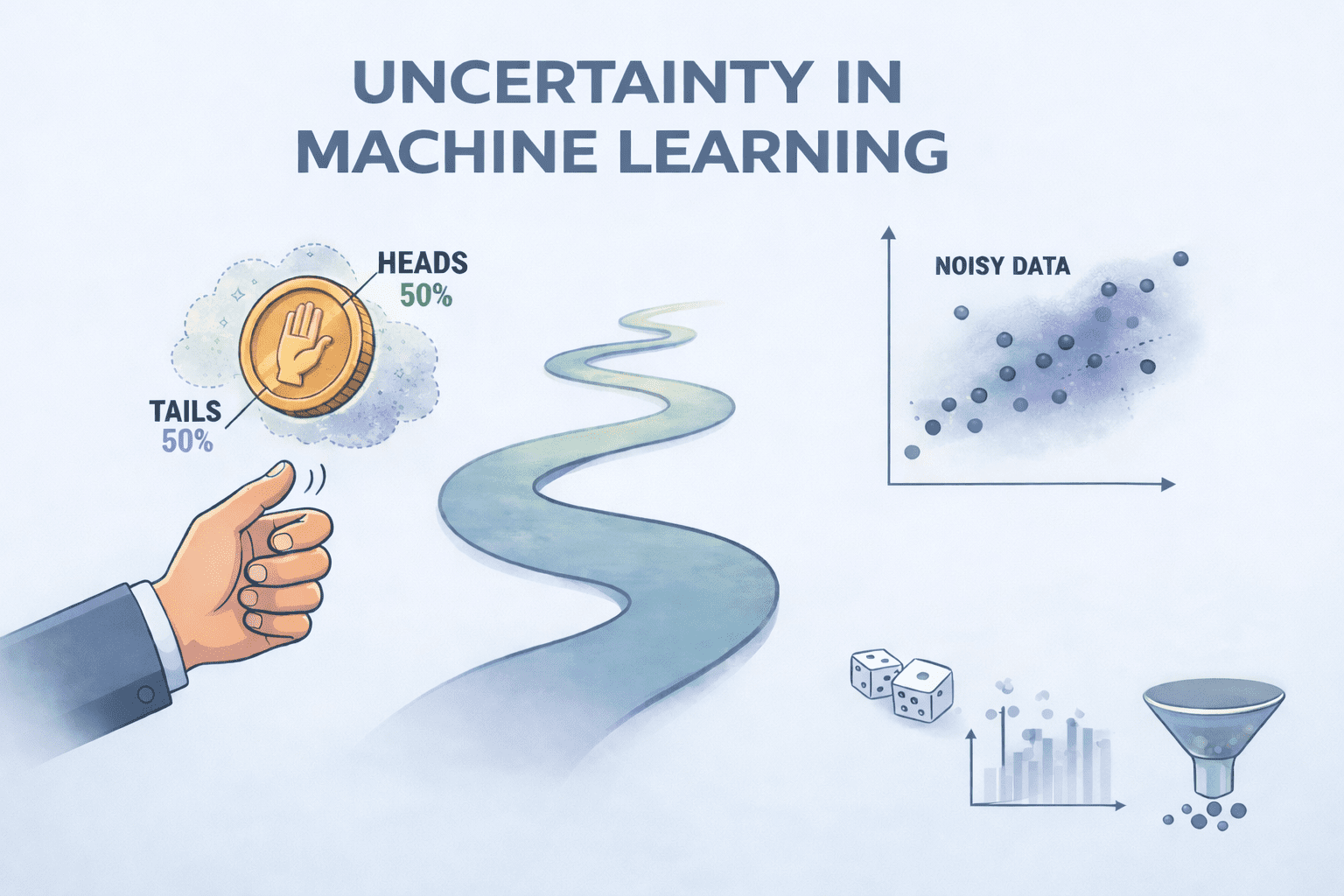

Uncertainty in Machine Learning: Probability & Noise

Editor’s note: This article is a part of our series on visualizing the foundations of machine learning.

Welcome to the latest entry in our series on visualizing the foundations of machine learning. In this series, we aim to break down important and often complex technical concepts into intuitive, visual guides to help you master the core principles of the field. This entry focuses on uncertainty, probability, and noise in machine learning.

Uncertainty in Machine Learning

Uncertainty is an unavoidable part of machine learning, arising whenever models attempt to make predictions about the real world. At its core, uncertainty reflects a lack of complete knowledge about an outcome and is most often quantified using probability. Rather than being a flaw, uncertainty is something models must explicitly account for in order to produce reliable and trustworthy predictions.

A useful way to think about uncertainty is through the lens of probability and the unknown. Much like flipping a fair coin, where the outcome is uncertain even though the probabilities are well defined, machine learning models frequently operate in environments where multiple outcomes are possible. As data flows through a model, predictions branch into different paths, influenced by randomness, incomplete information, and variability in the data itself.

The goal of working with uncertainty is not to eliminate it, but to measure and manage it. This involves understanding several key components:

- Probability provides a mathematical framework for expressing how likely an event is to occur
- Noise represents irrelevant or random variation in data that obscures the true signal and can be either random or systematic

Together, these factors shape the uncertainty present in a model’s predictions.

Not all uncertainty is the same.
Aleatoric uncertainty stems from inherent randomness in the data and cannot be reduced, even with more information. Epistemic uncertainty, on the other hand, arises from a lack of knowledge about the model or data-generating process and can often be reduced by collecting more data or improving the model. Distinguishing between these two types is essential for interpreting model behavior and deciding how to improve performance.

To manage uncertainty, machine learning practitioners rely on several strategies. Probabilistic models output full probability distributions rather than single point estimates, making uncertainty explicit. Ensemble methods combine predictions from multiple models to reduce variance and better estimate uncertainty. Data cleaning and validation further improve reliability by reducing noise and correcting errors before training.

Uncertainty is inherent in real-world data and machine learning systems. By recognizing its sources and incorporating it directly into modeling and decision-making, practitioners can build models that are not only more accurate, but also more robust, transparent, and trustworthy.

The visualizer provides a concise summary of this information for quick reference. A high-resolution PDF of the infographic is also available.

Figure: Uncertainty, Probability & Noise: Visualizing the Foundations of Machine Learning (image by author)

Machine Learning Mastery Resources

These are some selected resources for learning more about probability and noise:

- A Gentle Introduction to Uncertainty in Machine Learning: This article explains what uncertainty means in machine learning, explores the main causes such as noise in data, incomplete coverage, and imperfect models, and describes how probability provides the tools to quantify and manage that uncertainty. Key takeaway: Probability is essential for understanding and managing uncertainty in predictive modeling.
- Probability for Machine Learning (7-Day Mini-Course): This structured crash course guides readers through the key probability concepts needed in machine learning, from basic probability types and distributions to Naive Bayes and entropy, with practical lessons designed to build confidence applying these ideas in Python. Key takeaway: Building a solid foundation in probability enhances your ability to apply and interpret machine learning models.
- Understanding Probability Distributions for Machine Learning with Python: This tutorial introduces important probability distributions used in machine learning, shows how they apply to tasks like modeling residuals and classification, and provides Python examples to help practitioners understand and use them effectively. Key takeaway: Mastering probability distributions helps you model uncertainty and choose appropriate statistical tools throughout the machine learning workflow.

Be on the lookout for additional entries in our series on visualizing the foundations of machine learning.

About Matthew Mayo

Matthew Mayo (@mattmayo13) holds a master’s degree in computer science and a graduate diploma in data mining. As managing editor of KDnuggets & Statology, and contributing editor at Machine Learning Mastery, Matthew aims to make complex data science concepts accessible. His professional interests include natural language processing, language models, machine learning algorithms, and exploring emerging AI. He is driven by a mission to democratize knowledge in the data science community. Matthew has been coding since he was 6 years old.
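As a companion to the article above, the distinction between aleatoric and epistemic uncertainty can be illustrated with a small ensemble experiment. The sketch below is not from the original article: the function names, the Gaussian data, and the choice of a bootstrap ensemble of sample means are all assumptions made for illustration, and it uses only the Python standard library. The spread across ensemble members stands in for epistemic uncertainty, while the spread of the data itself stands in for aleatoric noise.

```python
import random
import statistics


def bootstrap_mean_ensemble(data, n_models=200, rng=None):
    """Train a toy 'ensemble': each member is the mean of a bootstrap resample."""
    rng = rng or random.Random(0)
    n = len(data)
    return [statistics.fmean(rng.choices(data, k=n)) for _ in range(n_models)]


def uncertainty_summary(n_samples, true_mean=5.0, noise_std=1.0, seed=0):
    """Return (epistemic, aleatoric) estimates for a noisy sample of a constant."""
    rng = random.Random(seed)
    # Hypothetical data: a fixed signal plus irreducible Gaussian noise
    data = [rng.gauss(true_mean, noise_std) for _ in range(n_samples)]
    ensemble = bootstrap_mean_ensemble(data, rng=rng)
    epistemic = statistics.stdev(ensemble)  # disagreement between ensemble members
    aleatoric = statistics.stdev(data)      # noise in the observations themselves
    return epistemic, aleatoric


for n in (20, 2000):
    epistemic, aleatoric = uncertainty_summary(n)
    print(f"n={n}: epistemic={epistemic:.3f}, aleatoric={aleatoric:.3f}")
```

With more data the ensemble members agree more closely, so the epistemic estimate shrinks, while the aleatoric estimate stays near the noise level that was built into the data.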

BGMI 4.2 Update Release Date & Time: Primewood Genesis Theme, Royal Enfield Bikes, New Modes, Abilities, And More – Check How To Download

BGMI 4.2 Update: Krafton India is set to release the BGMI 4.2 update on January 15, 2026. The update will be rolled out in phases to avoid server overload. Android and iOS users will receive the update on the same day, but in different time windows. For Android users, the update will begin appearing on the Google Play Store from 6:30 AM IST, with wider availability expected by 11:30 AM to 12:30 PM. iOS users can expect the update between 8:30 AM and 9:30 AM IST, with the rollout completing by 12:30 PM. The update size is expected to be between 0.9GB and 1.5GB.

The update introduces a new Primewood Genesis theme and, in collaboration with Royal Enfield, lets players ride the Bullet 350 and Continental GT 650 in the battlegrounds. The Primewood Genesis theme features nature-inspired environments resembling magical forests. Players will encounter special plants, high-loot zones, and interactive elements such as the Tree of Life, which can be used as cover. Some plants provide weapons and supplies, while poisonous flowers pose a threat during combat.

New Vehicles And Movement Options

Several new mobility features have been added to the game. The Scorpion vehicle allows players to shoot while driving, while the Sacred Deer offers faster movement and escape options. Flora Wings enable players to glide through the air and land quickly. New companions like the Thorn Scorpion and Cherry Blossom Deer come with special abilities. The Prime Eye feature adds skills such as Barrier, Teleport, and Heal.

Weapon And Gameplay Changes

Weapon balance has been adjusted in the update. The AKM and M762 have received buffs, while shotguns have been slightly weakened. A new weapon, the Honey Badger, has been introduced and can heal players after securing kills. Gameplay improvements include better gyroscope aiming, smoother controls, and the ability to deploy parachutes just before landing.

India-Specific Additions

The update includes features tailored for Indian players, such as Bhojpuri voice packs, Royal Enfield bikes, and special in-game events offering free UC rewards. An auto-reconnect feature has also been added to handle sudden internet disconnections.

Watchdog Asks X To Set Up Minor Protection Measures For AI Chatbot Grok

Seoul: South Korea’s media watchdog said on Wednesday it has asked U.S.-based social media platform X to come up with measures to protect minor users from sexual content generated by the artificial intelligence (AI) model Grok. The Korea Media and Communications Commission (KMCC) said it delivered the request to the operator amid growing concerns over deepfake sexual content that can be generated by AI platforms, reports Yonhap news agency.

“We have asked the operator of X to prevent potential illegal activities on Grok and submit measures to protect teenagers from harmful content, including limiting or managing their access,” the KMCC said in a release.

Under South Korean law, operators of social network platforms, including X, are required to designate an official in charge of minor protection and submit an annual report, the commission said. The KMCC said the request was made in line with the regulation, noting it has pointed out that creating, circulating or saving sexual deepfake content generated without consent is subject to criminal punishment.

“We intend to proactively support the sound and safe development of new technologies,” KMCC Chairperson Kim Jong-cheol said in a release. “As for side effects and negative impacts, we plan to introduce reasonable regulations and revamp policies to prevent the circulation of illegal information, including sexual abuse content, and require AI service providers to protect minors,” Kim said.

Meanwhile, Elon Musk-run X Corp has acknowledged the presence of obscene imagery on its platform, mostly created by its Grok AI, stating that it will comply with Indian laws and remove such content. The Indian government had directed X to conduct a comprehensive review of Grok’s technical and governance frameworks to prevent the generation of unlawful content. It said Grok must enforce strict user policies, including suspension and termination of violators. All offending content should be immediately removed without tampering with evidence, it said.

Training a Model with Limited Memory using Mixed Precision and Gradient Checkpointing

Training a language model is memory-intensive, not only because the model itself is large but also because training data batches often contain long sequences. Training a model with limited memory is challenging. In this article, you will learn techniques that enable model training in memory-constrained environments. In particular, you will learn about:

- Low-precision floating-point numbers and mixed-precision training
- Using gradient checkpointing

Let’s get started!

Photo by Meduana. Some rights reserved.

Overview

This article is divided into three parts; they are:

- Floating-point Numbers
- Automatic Mixed Precision Training
- Gradient Checkpointing

Floating-Point Numbers

The default data type in PyTorch is the IEEE 754 32-bit floating-point format, also known as single precision. It is not the only floating-point type you can use. For example, most CPUs support 64-bit double-precision floating-point, and GPUs often support half-precision floating-point as well. The table below lists some floating-point types:

| Data Type | PyTorch Type | Total Bits | Sign Bit | Exponent Bits | Mantissa Bits | Min Value | Max Value | eps |
|---|---|---|---|---|---|---|---|---|
| IEEE 754 double precision | torch.float64 | 64 | 1 | 11 | 52 | -1.79769e+308 | 1.79769e+308 | 2.22045e-16 |
| IEEE 754 single precision | torch.float32 | 32 | 1 | 8 | 23 | -3.40282e+38 | 3.40282e+38 | 1.19209e-07 |
| IEEE 754 half precision | torch.float16 | 16 | 1 | 5 | 10 | -65504 | 65504 | 0.000976562 |
| bf16 | torch.bfloat16 | 16 | 1 | 8 | 7 | -3.38953e+38 | 3.38953e+38 | 0.0078125 |
| fp8 (e4m3) | torch.float8_e4m3fn | 8 | 1 | 4 | 3 | -448 | 448 | 0.125 |
| fp8 (e5m2) | torch.float8_e5m2 | 8 | 1 | 5 | 2 | -57344 | 57344 | 0.25 |
| fp8 (e8m0) | torch.float8_e8m0fnu | 8 | 0 | 8 | 0 | 5.87747e-39 | 1.70141e+38 | 1.0 |
| fp6 (e3m2) | | 6 | 1 | 3 | 2 | -28 | 28 | 0.25 |
| fp6 (e2m3) | | 6 | 1 | 2 | 3 | -7.5 | 7.5 | 0.125 |
| fp4 (e2m1) | | 4 | 1 | 2 | 1 | -6 | 6 | |

Floating-point numbers are binary representations of real numbers. Each consists of a sign bit, several bits for the exponent, and several bits for the mantissa. The exception in the table is e8m0, an unsigned, exponent-only format with no sign bit.
They are laid out as shown in the figure below. When sorted by their binary representation, non-negative floating-point numbers retain their order by real-number value.

Figure: Floating-point number representation. Figure from Wikimedia.

Different floating-point types have different ranges and precisions. Not all types are supported by all hardware. For example, fp4 is only supported in Nvidia’s Blackwell architecture. PyTorch supports only a subset of these data types. You can run the following code to print information about various floating-point types:

```python
import torch
from tabulate import tabulate

# float types:
float_types = [
    torch.float64,
    torch.float32,
    torch.float16,
    torch.bfloat16,
    torch.float8_e4m3fn,
    torch.float8_e5m2,
    torch.float8_e8m0fnu,
]

# collect finfo for each type
table = []
for dtype in float_types:
    info = torch.finfo(dtype)
    try:
        typename = info.dtype
    except AttributeError:
        # fall back to the dtype's own name if finfo does not expose it
        typename = str(dtype)
    table.append([typename, info.max, info.min, info.smallest_normal, info.eps])

headers = ['data type', 'max', 'min', 'smallest normal', 'eps']
print(tabulate(table, headers=headers))
```

Pay attention to the min and max values for each type, as well as the eps value. The min and max values indicate the range a type can support (the dynamic range). If you train a model with such a type, but the model weights exceed this range, you will get overflow or underflow, usually causing the model to output NaN or Inf.
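To see the dynamic-range limits from the table without a GPU or PyTorch, the following is a minimal sketch using only Python's standard `struct` module. It is not part of the original tutorial: the helper names `fits_float16` and `to_bfloat16` are hypothetical, and bfloat16 is only emulated here by keeping the top 16 bits of the float32 encoding (plain truncation, whereas real hardware typically rounds to nearest).

```python
import struct


def fits_float16(value):
    """Return True if value can be stored as IEEE 754 half precision."""
    try:
        struct.pack("<e", value)  # "e" is the half-precision format code
        return True
    except OverflowError:
        return False


def to_bfloat16(value):
    """Emulate bfloat16 by truncating the float32 encoding to its top 16 bits.

    Assumption: truncation only; this is an illustration, not how PyTorch rounds.
    """
    (bits,) = struct.unpack("<I", struct.pack("<f", value))
    (result,) = struct.unpack("<f", struct.pack("<I", bits & 0xFFFF0000))
    return result


print(fits_float16(65504.0))  # the largest float16 value from the table
print(fits_float16(70000.0))  # beyond the float16 range
print(to_bfloat16(70000.0))   # representable in bfloat16, but only coarsely
```

The value 70000 is out of range for float16, whereas the emulated bfloat16 can hold a nearby value at the cost of a visible rounding error. This is the range-versus-precision trade-off that the table summarizes.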
The eps value is the smallest positive number such that the type can differentiate between 1+eps and 1. This is a metric for precision. If a gradient update is smaller than eps relative to the weight it modifies, the update is rounded away and the weight stops changing, which looks much like the vanishing gradient problem.

Therefore, float32 is a good default choice for deep learning: it has a wide dynamic range and high precision. However, each float32 number requires 4 bytes of memory. As a compromise, you can use float16 to save memory, but you are likely to encounter overflow or underflow issues since the dynamic range is much smaller.

The Google Brain team identified this problem and proposed bfloat16, a 16-bit floating-point format with the same dynamic range as float32. As a trade-off, the precision is an order of magnitude worse than float16. It turns out that dynamic range is more important than precision for deep learning, making bfloat16 highly useful.

When you create a tensor in PyTorch, you can specify the data type. For example:

```python
x = torch.tensor([1.0, 2.0, 3.0], dtype=torch.float16)
print(x)
```

There is a straightforward way to change the default to a different type, such as bfloat16. This is handy for model training. All you need to do is set the following line before you create any model or optimizer:

```python
# set default dtype to bfloat16
torch.set_default_dtype(torch.bfloat16)
```

Just by doing this, you force all your model weights and gradients to be of bfloat16 type. This halves the memory they need compared with float32. In the previous article, you were advised to set the batch size to 8 to fit a GPU with only 12GB of VRAM. With bfloat16, you should be able to set the batch size to 16.

Note that using 8-bit float or lower-precision types may not work. This is because both the hardware and PyTorch must support the corresponding mathematical operations.
You can try the following code (requires a CUDA device) and find that you will need extra effort to operate on 8-bit float:

```python
dtype = torch.float8_e4m3fn

# Define a tensor with float8 will see
# NotImplementedError: "normal_kernel_cuda" not implemented for 'Float8_e4m3fn'
x = torch.randn(16, 16, dtype=dtype, device="cuda")

# Create in float32 and convert to float8 works
x = torch.randn(16, 16, device="cuda").to(dtype)

# But matmul is not supported. You will see
# NotImplementedError: "addmm_cuda" not implemented for 'Float8_e4m3fn'
y = x @ x.T

# The correct way to run matrix multiplication on 8-bit
```

Can Public Wi-Fi At Railway Stations Expose Your Online Search? Here’s How To Protect Your Sensitive Data

Public Wi-Fi Safety: You’re sipping coffee at your favourite café or waiting at a railway station, and you log onto the free public Wi-Fi. It feels convenient, fast, and easy. But have you ever wondered who might be watching your online activity? Every click, search, and login could leave a digital trail. Public Wi-Fi networks are like open windows into your online life, and while they promise connection, they might also invite unseen eyes. Can the owner of that café or any public Wi-Fi provider actually see what you are doing online? In this article, we will uncover the truth behind these invisible spectators.

Public Wi-Fi Networks: ISPs Monitor All Unencrypted Traffic

Public Wi-Fi is everywhere these days, from metro stations to airports, because our devices always need an internet connection. But internet service providers (ISPs) and network operators can see all unencrypted activity on their networks, meaning every click, search, and login can be logged. This does not mean they are constantly watching, but the possibility is real, which is also why browsing personal content on company Wi-Fi can be risky. Where you connect also matters: airports and railway stations may have extra security to detect unusual activity, yet their high traffic can make these networks less safe than smaller public Wi-Fi spots.

Are Public Wi-Fi Connections Safe?

Public Wi-Fi is not as safe as private networks because it often has no password and weak encryption. This makes it an easy target for “man-in-the-middle” attacks, where hackers can intercept your internet data. On unencrypted connections they might capture sensitive information like credit card numbers and passwords. On sites that use HTTPS, they can typically still see which websites you visit, even though they cannot see exactly what you do on those sites. On top of that, some cybercriminals create “fake hotspots” that look real. If you connect to them, they can quietly monitor your activity and steal your data without you even realizing it.

Public Wi-Fi Safety: How To Protect Your Sensitive Data

You should always use a trusted VPN like Norton or Surfshark on public Wi-Fi to keep your data encrypted and safe from snoopers. Turn off auto-connect and file or printer sharing to avoid unauthorized access. Always confirm the network name with staff to avoid fake hotspots. Keep your device firewall on and enable two-factor authentication for extra protection. Avoid sensitive activities like online banking or shopping, log out after use, and clear your browser cache to remove any traces of your activity. These steps help you stay secure on open networks.

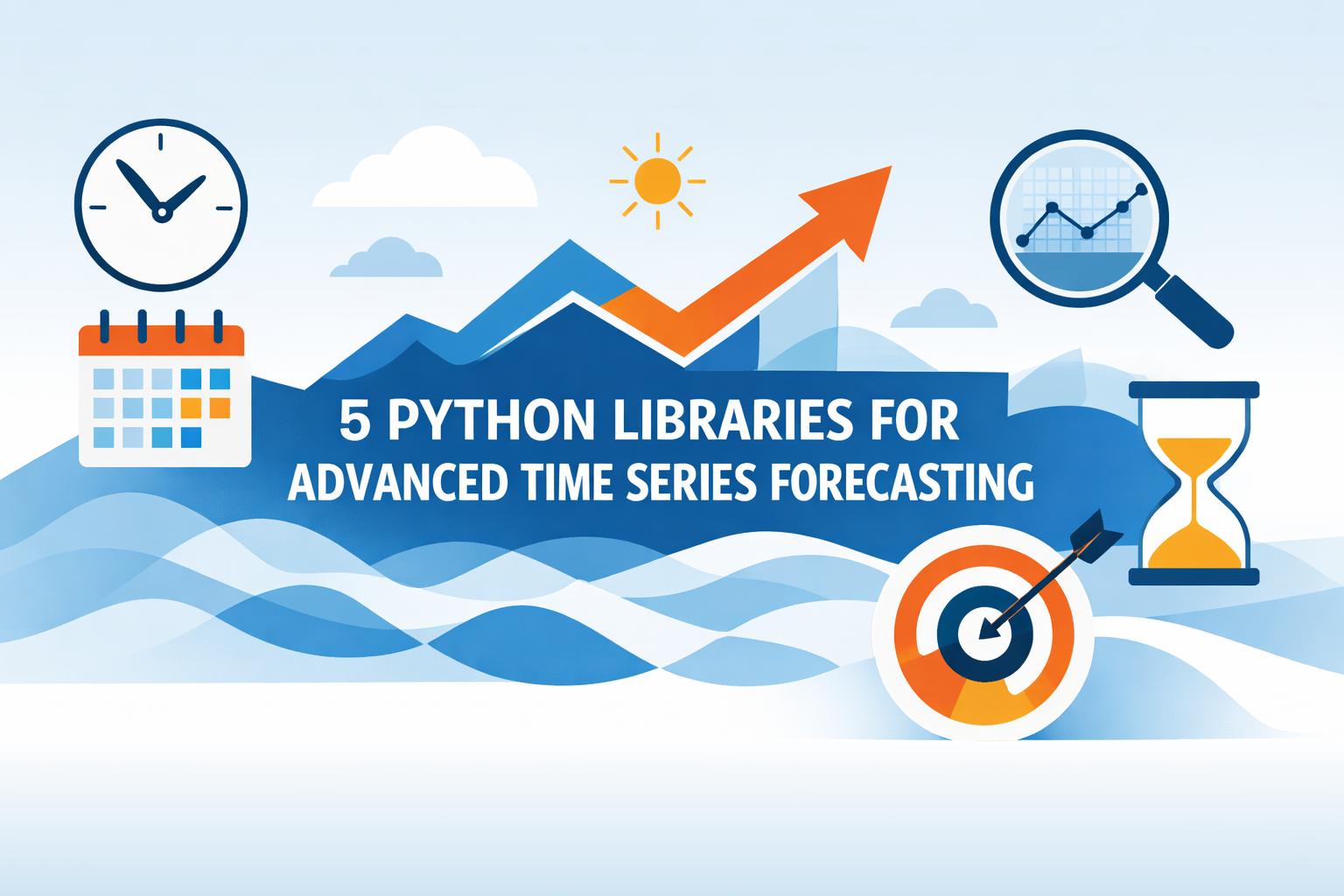

5 Python Libraries for Advanced Time Series Forecasting

Introduction

Predicting the future has always been the holy grail of analytics. Whether it is optimizing supply chain logistics, managing energy grid loads, or anticipating financial market volatility, time series forecasting is often the engine driving critical decision-making. However, while the concept is simple (using historical data to predict future values), the execution is notoriously difficult. Real-world data rarely adheres to the clean, linear trends found in introductory textbooks.

Fortunately, Python’s ecosystem has evolved to meet this demand. The landscape has shifted from purely statistical packages to a rich array of libraries that integrate deep learning, machine learning pipelines, and classical econometrics. But with so many options, choosing the right framework can be overwhelming.

This article cuts through the noise to focus on 5 powerhouse Python libraries designed specifically for advanced time series forecasting. We move beyond the basics to explore tools capable of handling high-dimensional data, complex seasonality, and exogenous variables. For each library, we provide a high-level overview of its standout features and a concise “Hello World” code snippet to help you get familiar with it immediately.

1. Statsmodels

statsmodels provides best-in-class models for non-stationary and multivariate time series forecasting, primarily based on methods from statistics and econometrics. It also offers explicit control over seasonality, exogenous variables, and trend components.
This example shows how to import and use the library’s SARIMAX model (Seasonal AutoRegressive Integrated Moving Average with eXogenous regressors):

```python
from statsmodels.tsa.statespace.sarimax import SARIMAX

model = SARIMAX(y, exog=X, order=(1,1,1), seasonal_order=(1,1,1,12))
res = model.fit()
forecast = res.forecast(steps=12, exog=X_future)
```

2. Sktime

Fan of scikit-learn? Good news! sktime mimics the popular machine learning library’s style framework-wise, and it is suited for advanced forecasting tasks, enabling panel and multivariate forecasting through machine-learning model reduction and pipeline composition.

For instance, the make_reduction() function takes a machine-learning model as a base component and applies recursion to perform predictions multiple steps ahead. Note that fh is the “forecasting horizon,” allowing prediction of n steps, and X_future is meant to contain future values for exogenous attributes, should the model utilize them.

```python
from sktime.forecasting.compose import make_reduction
from sklearn.ensemble import RandomForestRegressor

forecaster = make_reduction(RandomForestRegressor(), strategy="recursive")
forecaster.fit(y_train, X_train)
y_pred = forecaster.predict(fh=[1,2,3], X=X_future)
```

3. Darts

The Darts library stands out for its simplicity compared to other frameworks. Its high-level API combines classical and deep learning models to address probabilistic and multivariate forecasting problems. It also captures past and future covariates effectively.
This example shows how to use Darts’ implementation of the N-BEATS model (Neural Basis Expansion Analysis for Interpretable Time Series Forecasting), an accurate choice to handle complex temporal patterns.

```python
from darts.models import NBEATSModel

model = NBEATSModel(input_chunk_length=24, output_chunk_length=12, n_epochs=10)
model.fit(series, verbose=True)
forecast = model.predict(n=12)
```

Figure: 5 Python Libraries for Advanced Time Series Forecasting: A Simple Comparison (image by Editor)

4. PyTorch Forecasting

For high-dimensional and large-scale forecasting problems with massive data, PyTorch Forecasting is a solid choice that incorporates state-of-the-art forecasting models like Temporal Fusion Transformers (TFT), as well as tools for model interpretability.

The following code snippet illustrates, in a simplified fashion, the use of a TFT model. Although not explicitly shown, models in this library are typically instantiated from a TimeSeriesDataSet (in the example, dataset would play that role). The model is a Lightning module, so it is trained through a Lightning Trainer rather than by calling a fit() method on the model itself.

```python
# Older releases use `import pytorch_lightning as pl` instead
import lightning.pytorch as pl
from pytorch_forecasting import TemporalFusionTransformer

tft = TemporalFusionTransformer.from_dataset(dataset)
trainer = pl.Trainer(max_epochs=5)
trainer.fit(tft, train_dataloaders=train_dataloader, val_dataloaders=val_dataloader)
pred = tft.predict(val_dataloader)
```

5. GluonTS

Lastly, GluonTS is a deep learning-based library that specializes in probabilistic forecasting, making it ideal for handling uncertainty in large time series datasets, including those with non-stationary characteristics.
We wrap up with an example that imports the GluonTS classes and trains a Deep Autoregressive model (DeepAR) for probabilistic time series forecasting, which predicts a distribution of possible future values rather than a single point forecast:

```python
# Import path assumes a recent GluonTS release with the MXNet extra installed
from gluonts.mx import DeepAREstimator, Trainer

estimator = DeepAREstimator(freq="D", prediction_length=14, trainer=Trainer(epochs=5))
predictor = estimator.train(train_data)
```

Wrapping Up

Choosing the right tool from this arsenal depends on your specific trade-offs between interpretability, training speed, and the scale of your data. While classical libraries like Statsmodels offer statistical rigor, modern frameworks like Darts and GluonTS are pushing the boundaries of what deep learning can achieve with temporal data.

There is rarely a “one-size-fits-all” solution in advanced forecasting, so we encourage you to use these snippets as a launchpad for benchmarking multiple approaches against one another. Experiment with different architectures and exogenous variables to see which library best captures the nuances of your signals. The tools are available; now it’s time to turn that historical noise into actionable future insights.
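For readers who want to see what the recursive reduction strategy from the sktime section does under the hood, here is a minimal, library-free sketch. It is an illustration under stated assumptions, not sktime's implementation: the function names are hypothetical, the window is a single lag, and the base learner is a plain least-squares line instead of a random forest. The idea is the same, though: turn the series into (previous value, next value) pairs, fit a regressor, then feed each prediction back in as the input for the next step.

```python
from statistics import fmean


def fit_lag1_linear(series):
    """Least-squares fit of y[t] = a * y[t-1] + b on one series."""
    x = series[:-1]  # previous values (features)
    y = series[1:]   # next values (targets)
    x_mean, y_mean = fmean(x), fmean(y)
    var = sum((xi - x_mean) ** 2 for xi in x)
    cov = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    a = cov / var
    b = y_mean - a * x_mean
    return a, b


def recursive_forecast(series, steps):
    """Forecast several steps ahead by feeding each prediction back as input."""
    a, b = fit_lag1_linear(series)
    last = series[-1]
    forecast = []
    for _ in range(steps):
        last = a * last + b  # one-step prediction becomes the next input
        forecast.append(last)
    return forecast


history = [float(t) for t in range(1, 11)]  # toy series: 1, 2, ..., 10
print(recursive_forecast(history, steps=3))
```

Because each step consumes the previous prediction, errors can compound over long horizons. That is one reason to benchmark recursive forecasts against other strategies, as the wrap-up suggests.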

Viksit Bharat Young Leaders 2026: Why NSA Ajit Doval Avoids Mobile Phones And Internet; Know About His Career

Viksit Bharat Young Leaders 2026: In a world where mobile phones and the internet are an important part of daily life, National Security Advisor Ajit Doval shared a surprising detail about his personal habits. Speaking at the inaugural session of the Viksit Bharat Young Leaders Dialogue 2026 on Saturday, he addressed the youth and spoke about the high price India paid for its independence, with many generations facing hardship and loss.

During a question-and-answer session, the former Indian intelligence and law enforcement officer revealed that he still does not use a mobile phone or the internet. This statement quickly caught attention online and left many people curious about how a top security expert works in today’s digital world.

Why India’s Top Security Chief Avoids Mobile Phones And Internet

During the Q&A session at Bharat Mandapam, NSA Ajit Doval was asked if he really avoids using mobile phones and the internet. He smiled and confirmed that it was true. Doval explained that phones and the internet are not the only ways to communicate and that there are other methods most people are not aware of. He added that he only uses phones or the internet in special situations, such as talking to family or connecting with people abroad. Ajit Doval also shared an important lesson: patience is essential, and messages should be communicated honestly without using propaganda.

Who Is Ajit Doval and His Achievements

Ajit Kumar Doval is the fifth and current National Security Advisor (NSA) to the Prime Minister of India. He is a retired Indian Police Service (IPS) officer of the Kerala cadre and has worked in Indian intelligence and law enforcement. He was born in Uttarakhand in 1945 and became the youngest police officer in India to receive the Kirti Chakra, a bravery award, for his service. Doval played an important role in India’s September 2016 surgical strikes and the February 2019 Balakot airstrikes in Pakistan. He also helped resolve the Doklam standoff and took strong steps to fight insurgency in Northeast India.

Ajit Doval’s Career

Ajit Doval began his police career in 1968 as an IPS officer. He worked actively in fighting insurgency in Mizoram and Punjab. In 1999, he was one of three negotiators who helped release passengers from the hijacked IC-814 plane in Kandahar. Between 1971 and 1999, he successfully handled at least 15 hijacking cases of Indian Airlines aircraft. Ajit Doval is said to have spent seven years working undercover in Pakistan, gathering important information on active militant groups. After one year as a secret agent, he worked at the Indian High Commission in Islamabad for six years.

Ajit Doval’s Powerful Message To Youth

Addressing the gathering, Ajit Doval urged young people to learn from history and work towards building a strong and great India based on its values, rights, and beliefs. Looking back at India’s past, he said the country once had a highly advanced civilization.

How To Delete OpenAI’s ChatGPT History From Android, iOS Devices And Laptops: Step-By-Step Guide

OpenAI’s ChatGPT History Delete: Are you one of the millions of people using OpenAI’s ChatGPT every day? AI chatbots have now become a regular part of daily life, helping users save time and complete tasks more easily. From writing a quick email to creating a full article, generating ideas on a topic, or exploring different angles for a story, ChatGPT can do it all.

However, as more people rely on this powerful tool, an important question comes up: where does your data go? By default, ChatGPT saves your chat history, which may include personal and work-related information. Is there a way to delete the data stored in ChatGPT? The answer is yes, and the process is quite simple. With just a few steps, you can clear your chat history and take control of your privacy. Note that the steps below delete your OpenAI account, which permanently erases your chat history along with it.

But there’s another side to the story. Experts warn that relying too heavily on AI tools can have drawbacks. Over time, constant dependence on AI may reduce your own thinking ability, creativity, and problem-solving skills, making it important to use these tools wisely rather than endlessly.

How To Delete OpenAI’s ChatGPT From Android Or iOS Device

Step 1: Open the ChatGPT app on your Android or iOS device and log in using your email ID.
Step 2: Tap the two horizontal lines in the top-left corner to open the menu.
Step 3: Select your profile to access ChatGPT settings.
Step 4: Scroll down and tap on Data Controls.
Step 5: Choose Delete OpenAI Account and follow the instructions to permanently delete your account.

How To Delete OpenAI’s ChatGPT From Your PC and Laptops

Step 1: Open ChatGPT on your laptop using any web browser and log in with your email account.
Step 2: Look at the bottom-left corner of the screen and click on your profile icon.
Step 3: From the menu, select Settings.
Step 4: In the Settings window, click on the Account option.
Step 5: Scroll down and select Delete Account, then follow the on-screen instructions to confirm.

It is important to note that deleting your ChatGPT account is permanent and cannot be reversed, so make sure you are certain before going ahead. If you have an active subscription through the Google Play Store, you will need to cancel it separately, as deleting your account will not cancel the subscription. Once the account is deleted, you won’t be able to sign up again using the same email address or phone number. All your data across OpenAI apps will be erased. After you click on “Delete,” it may take up to 30 days for all your data to be fully removed.