Google Alerts Visa Employees: Google has warned some employees in the US on visas to avoid traveling abroad. The company said visa re-entry processing at US embassies and consulates could be delayed for up to a year, according to Business Insider. Google's external immigration counsel, BAL Immigration Law, warned employees who need a visa stamp that traveling abroad could keep them from returning to the U.S. for several months because of long appointment delays, Business Insider reported. The internal memo said US embassies and consulates are facing visa stamping delays of up to 12 months. It advised employees to avoid international travel unless it is absolutely necessary. This affects workers on H-1B, H-4, F, J, and M visas.

The delays are being reported across several countries as US missions grapple with routine visa backlogs following the rollout of enhanced social media screening requirements. These checks apply to H-1B workers and their dependents, as well as students and exchange visitors on F, J, and M visas, Business Insider reported.

Google's Legal Advisory

Google's legal advisory noted that the disruption spans multiple visa categories but did not specify next steps for employees who are already outside the US and facing postponed appointments. A Google spokesperson declined to comment, Business Insider reported.

The US Department of State confirmed the delays, telling Business Insider on Friday, December 19, that it is conducting "online presence reviews for applicants." A spokesperson said visa appointments might be rescheduled as staffing and resources change, and that applicants can request expedited processing in certain cases.

H-1B Visa Programme

The H-1B program, which awards 85,000 new visas each year, is an important way for major US tech companies like Google, Amazon, Microsoft, and Meta to hire skilled workers. Under the Trump administration, the program has become controversial, with critics saying stricter rules and higher costs make it harder for companies to hire foreign talent. The H-1B visa is widely used to hire skilled workers from India and China. This year, a $100,000 fee for new applications drew further attention to the program. In September, Google's parent company, Alphabet, advised employees to avoid traveling abroad and urged H-1B visa holders to stay in the U.S., according to an email seen by Reuters.

India’s Digital Economy To Reach $1.2 Trillion By 2030, Led By AI Depth: Report | Technology News

India's digital economy is projected to reach $1.2 trillion by 2029–30, with the depth of AI capabilities expected to shape the next phase of growth, a report said on Friday. The report by TeamLease Digital said India's AI market could touch $17 billion by 2027, with AI talent expected to double to nearly 1.25 million professionals, accounting for about 16 per cent of global AI talent. The growth is being driven by enterprise AI spending, national digital rails, and a strong STEM pipeline, the report said, adding that high-value AI roles are expanding rapidly while demand for legacy roles is plateauing.

The firm identified six enterprise-grade AI skills emerging as foundational by 2026. These include Simulation Governance, which can fetch salaries of Rs 26–35 LPA; Agent Design, with expected pay of Rs 25–32 LPA; AI Orchestration (Rs 24–30 LPA); Prompt Engineering (Rs 22–28 LPA); LLM Safety and Tuning (Rs 20–26 LPA); and AI Compliance and Risk Operations (Rs 18–24 LPA). Globally, up to 40 per cent of roles are expected to be impacted by AI, particularly in sectors such as IT services, healthcare, BFSI, and customer experience.

The report emphasised that organisations are increasingly treating AI capability building as an enterprise-wide priority, extending beyond data science to leadership, operations, risk, and compliance, while prioritising broad-based upskilling and hybrid human–AI workflows. It added that the strongest demand is for enterprise-grade AI skills that support governance, trust, orchestration, and scalability, rather than generic AI roles. Demand for these skills is concentrated in hubs such as Bengaluru, Hyderabad, and Pune, driven by global capability centres, AI-first startups, and large enterprises across BFSI, healthcare, and manufacturing. The report noted that mid-level professionals are becoming more important, as they can bridge applied AI with governance, orchestration, and real-world business needs.

Samsung Unveils Details Of New Exynos Chipset For Galaxy S26 | Technology News

Seoul: Samsung Electronics on Friday unveiled details of the new Exynos 2600 application processor (AP), widely expected to power the upcoming flagship Galaxy S26 smartphone. The South Korean tech giant said in a post on its website that the Exynos 2600, built on the industry's first 2-nanometer gate-all-around (GAA) production technology, is currently in mass production, Yonhap news agency reported. APs, often described as the brains of mobile devices, handle the core computing tasks that run operating systems and applications.

"The Exynos 2600 delivers enhanced AI and gaming experiences by integrating a powerful CPU, NPU and GPU into a single compact chip," the company said on the website. Compared with its predecessor, the Exynos 2500, the new AP offers up to 39 per cent better CPU performance and 113 per cent higher generative AI performance, according to Samsung. "Thanks to these improvements, you can perform more on-device AI tasks, such as intelligent image editing and AI assistant functions, quicker and more efficiently," the company added.

Samsung Electronics plans to hold a launch ceremony for the Galaxy S26 smartphone in February in the United States. Earlier this month, the company hinted at the new AP by uploading a clip titled "The next Exynos" on YouTube, featuring the Exynos 2600. Exynos is the company's proprietary mobile chipset line. Industry sources said Samsung Electronics began commercial production of the Exynos 2600 last month, making it the first AP manufactured using 2-nanometer process technology.
Compared with Qualcomm’s Snapdragon 8 Elite Gen 5, the new Exynos is expected to deliver a 30 per cent improvement in NPU performance and a 29 per cent gain in graphics processing capability.

How LLMs Choose Their Words: A Practical Walk-Through of Logits, Softmax and Sampling

Large Language Models (LLMs) can produce varied, creative, and sometimes surprising outputs even when given the same prompt. This randomness is not a bug but a core feature of how the model samples its next token from a probability distribution. In this article, we break down the key sampling strategies and demonstrate how parameters such as temperature, top-k, and top-p influence the balance between consistency and creativity.

In this tutorial, we take a hands-on approach to understand:

- How logits become probabilities
- How temperature, top-k, and top-p sampling work
- How different sampling strategies shape the model's next-token distribution

By the end, you will understand the mechanics behind LLM inference and be able to adjust the creativity or determinism of the output.

Let's get started.

Photo by Colton Duke. Some rights reserved.

Overview

This article is divided into four parts; they are:

- How Logits Become Probabilities
- Temperature
- Top-k Sampling
- Top-p Sampling

How Logits Become Probabilities

When you ask an LLM a question, it outputs a vector of logits. Logits are raw scores the model assigns to each possible next token in its vocabulary. If the model has a vocabulary of $V$ tokens, it outputs a vector of $V$ logits for each next-token position. A logit is a real number, and it is converted into a probability by the softmax function:

$$p_i = \frac{e^{x_i}}{\sum_{j=1}^{V} e^{x_j}}$$

where $x_i$ is the logit for token $i$ and $p_i$ is the corresponding probability. Softmax transforms these raw scores into a probability distribution: every $p_i$ is positive, and together they sum to 1.

Suppose we give the model this prompt:

Today's weather is so ___

The model considers every token in its vocabulary as a possible next word.
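To see the softmax formula in action without a framework, here is a minimal plain-Python sketch. The function names `softmax` and `naive_softmax` are our own, not a library API, and the logits are made-up values. The stable version subtracts the largest logit $m$ before exponentiating; because $e^{x_i - m} / \sum_j e^{x_j - m} = e^{x_i} / \sum_j e^{x_j}$, the probabilities are unchanged, but `math.exp` no longer overflows on large logits.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of real-valued logits."""
    # Shift by the largest logit: the factor exp(-m) cancels between
    # numerator and denominator, so the result equals the plain formula.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def naive_softmax(logits):
    """Direct transcription of the formula; overflows for large logits."""
    exps = [math.exp(x) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

small = [1.0, 2.0, 3.0]
print(softmax(small))
print(naive_softmax(small))  # same values, up to floating-point rounding

large = [1000.0, 1001.0, 1002.0]  # every logit of `small` shifted by 999
print(softmax(large))  # identical distribution to softmax(small)
try:
    naive_softmax(large)
except OverflowError:
    print("naive softmax overflowed")
```

Adding the same constant to every logit leaves the distribution unchanged, which is why `softmax(large)` and `softmax(small)` agree exactly while the naive version fails on the larger inputs.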
For simplicity, let's say there are only 6 tokens in the vocabulary:

wonderful, cloudy, nice, hot, gloomy, delicious

The model produces one logit for each token. Here's an example set of logits the model might output and the corresponding probabilities based on the softmax function:

| Token | Logit | Probability |
|---|---|---|
| wonderful | 1.2 | 0.0457 |
| cloudy | 2.0 | 0.1017 |
| nice | 3.5 | 0.4556 |
| hot | 3.0 | 0.2764 |
| gloomy | 1.8 | 0.0832 |
| delicious | 1.0 | 0.0374 |

You can confirm this by using the softmax function from PyTorch:

```python
import torch
import torch.nn.functional as F

vocab = ["wonderful", "cloudy", "nice", "hot", "gloomy", "delicious"]
logits = torch.tensor([1.2, 2.0, 3.5, 3.0, 1.8, 1.0])
probs = F.softmax(logits, dim=-1)
print(probs)
# Output:
# tensor([0.0457, 0.1017, 0.4556, 0.2764, 0.0832, 0.0374])
```

Based on this result, the token with the highest probability is "nice". However, LLMs don't always select the token with the highest probability; instead, they sample from the probability distribution, so the output can differ each time. In this case, there's about a 46% probability of seeing "nice".

If you want the model to give a more creative answer, how can you change the probability distribution so that "cloudy", "hot", and other answers appear more often?

Temperature

Temperature ($T$) is an inference parameter. It is not a model parameter learned during training; it is a parameter of the algorithm that generates the output. It scales the logits before softmax is applied:

$$p_i = \frac{e^{x_i / T}}{\sum_{j=1}^{V} e^{x_j / T}}$$

You can expect the probability distribution to be more deterministic if $T<1$, since the differences between the logits $x_i$ are exaggerated.
On the other hand, it will be more random if $T>1$, as the differences between the logits $x_i$ are reduced. Now, let's visualize this effect of temperature on the probability distribution:

```python
import matplotlib.pyplot as plt
import torch
import torch.nn.functional as F

vocab = ["wonderful", "cloudy", "nice", "hot", "gloomy", "delicious"]
logits = torch.tensor([1.2, 2.0, 3.5, 3.0, 1.8, 1.0])  # (vocab_size,)
scores = logits.unsqueeze(0)  # (1, vocab_size)
temperatures = [0.1, 0.5, 1.0, 3.0, 10.0]

fig, ax = plt.subplots(figsize=(10, 6))
for temp in temperatures:
    # Apply temperature scaling
    scores_processed = scores / temp
    # Convert to probabilities
    probs = F.softmax(scores_processed, dim=-1)[0]
    # Sample from the distribution
    sampled_idx = torch.multinomial(probs, num_samples=1).item()
    print(f"Temperature = {temp}, sampled: {vocab[sampled_idx]}")
    # Plot the probability distribution
    ax.plot(vocab, probs.numpy(), marker="o", label=f"T={temp}")

ax.set_title("Effect of Temperature")
ax.set_ylabel("Probability")
ax.legend()
plt.show()
```

For each temperature, this code computes a probability distribution over the tokens in the vocabulary and then samples one token from it. Because sampling is random, your results will vary between runs; running this code may produce the following output:

```
Temperature = 0.1, sampled: nice
Temperature = 0.5, sampled: nice
Temperature = 1.0, sampled: nice
Temperature = 3.0, sampled: wonderful
Temperature = 10.0, sampled: delicious
```

and the following plot showing the probability distribution for each temperature:

[Figure: The effect of temperature on the resulting probability distribution]

The model may produce the nonsensical output "Today's weather is so delicious" if you set the temperature to 10!

Top-k Sampling

The model's output is a vector of logits for each position in the output sequence. The inference algorithm converts the logits to actual words, or in LLM terms, tokens. The simplest method for selecting the next token is greedy sampling,

India's Annual Telecom Exports Jump By 72% In Last 5 Years | Technology News

New Delhi: India's annual telecom exports have jumped by 72 per cent to over Rs 18,406 crore in the last five years, Union Communications Minister Jyotiraditya M. Scindia informed Parliament on Wednesday. Responding to a question in the Lok Sabha, the minister stated that India's telecom exports have risen from Rs 10,000 crore in 2020–21 to Rs 18,406 crore in 2024–25, while imports have remained capped at around Rs 51,000 crore. He said that under the leadership of Prime Minister Narendra Modi, India is not only moving rapidly towards self-reliance in the telecom sector but is also preparing itself for global leadership.

In response to a supplementary question, Scindia highlighted India's achievements in 5G deployment. He said that 767 of the country's 778 districts have already been connected to the 5G network. He further stated that India currently has 36 crore 5G subscribers, a number expected to rise to 42 crore by 2026 and reach 100 crore by 2030.

On SATCOM, Scindia said that global experience shows that areas which cannot be connected through conventional BTS or backhaul, or through broadband connectivity using optical fibre cable, can only be served through satellite communications. In this context, India has taken a decisive step to ensure that SATCOM services are made available to customers across the length and breadth of the country. He noted that the objective of the government is to offer a full bouquet of telecom services to every customer, enabling individuals to make informed choices based on their needs and preferred price points.

The Union Minister highlighted that the SATCOM policy framework is firmly in place, with spectrum slated for administrative assignment. Three licences have already been issued: to Starlink, OneWeb, and Reliance. He further said that two key aspects must be addressed before operators can commence commercial services.
The first pertains to spectrum assignment, including the determination of administrative spectrum charges, which falls under the purview of the Telecom Regulatory Authority of India (TRAI). The second aspect relates to security clearances from enforcement agencies. To facilitate this process, operators have been provided with a sample spectrum to conduct demonstrations, and all three licensees are currently undertaking the required compliance activities. Once the operators demonstrate adherence to prescribed security norms — including the requirement to host international gateways within India — the necessary approvals will be granted, enabling the rollout of SATCOM services to customers, the minister added.

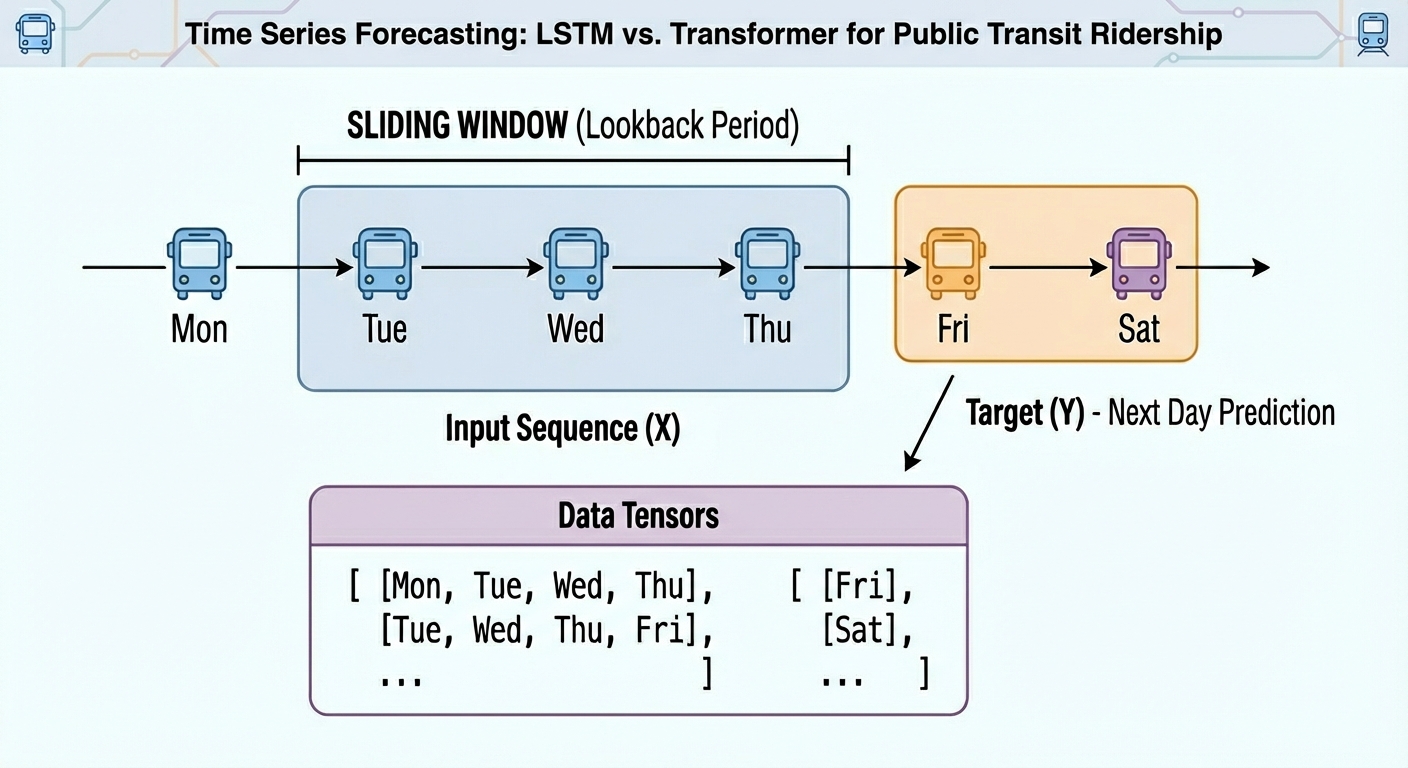

Transformer vs LSTM for Time Series: Which Works Better?

In this article, you will learn how to build, train, and compare an LSTM and a transformer for next-day univariate time series forecasting on real public transit data.

Topics we will cover include:

- Structuring and windowing a time series for supervised learning.
- Implementing compact LSTM and transformer architectures in PyTorch.
- Evaluating and comparing models with MAE and RMSE on held-out data.

All right, full steam ahead.

Image by Editor

Introduction

From daily weather measurements or traffic sensor readings to stock prices, time series data are present nearly everywhere. When these time series datasets become more challenging, more sophisticated models, such as ensemble methods or even deep learning architectures, can be a better option than classical time series analysis and forecasting techniques.

The objective of this article is to show how two deep learning architectures, long short-term memory (LSTM) networks and the transformer, are trained and used to handle time series data. The main focus is not merely using the models, but understanding their differences when handling time series and whether one architecture clearly outperforms the other. Basic knowledge of Python and machine learning essentials is recommended.

Problem Setup and Preparation

For this illustrative comparison, we will consider a forecasting task on a univariate time series: given the previous N time steps in temporal order, predict the (N+1)th value. In particular, we will use a publicly available version of the Chicago rides dataset, which contains daily recordings of bus and rail passengers in the Chicago public transit network dating back to 2001.

This initial piece of code imports the required libraries and modules and loads the dataset. We will import pandas, NumPy, Matplotlib, and PyTorch for the heavy lifting, along with the scikit-learn metrics that we will rely on for evaluation.
```python
import pandas as pd
import numpy as np
import matplotlib.pyplot as plt
import torch
import torch.nn as nn
from sklearn.metrics import mean_squared_error, mean_absolute_error

url = "https://data.cityofchicago.org/api/views/6iiy-9s97/rows.csv?accessType=DOWNLOAD"
df = pd.read_csv(url, parse_dates=["service_date"])
print(df.head())
```

Since the dataset contains post-COVID passenger counts, which are distributed very differently from pre-COVID data and could severely mislead our models, we will filter out records from January 1, 2020 onwards. Calling `.copy()` gives an independent DataFrame, so the later in-place sort does not trigger a pandas `SettingWithCopyWarning`, and `print` replaces the notebook-only `display`:

```python
df_filtered = df[df["service_date"] <= "2019-12-31"].copy()
print("Filtered DataFrame head:")
print(df_filtered.head())
print("\nShape of the filtered DataFrame:", df_filtered.shape)
df = df_filtered
```

A simple plot shows what the filtered data looks like:

```python
df.sort_values("service_date", inplace=True)
ts = df.set_index("service_date")["total_rides"].fillna(0)
plt.plot(ts)
plt.title("CTA Daily Total Rides")
plt.show()
```

[Figure: Chicago rides time series dataset plotted]

Next, we split the time series data into training and test sets. Importantly, in time series forecasting, unlike typical classification and regression tasks on independent samples, this partition cannot be done at random; it must be done in a purely sequential fashion.
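The sequential split can be sketched on a small toy series before applying it to the ridership data. This is a minimal sketch under stated assumptions: the helper `chronological_split` is hypothetical (not part of the original code) and the values are made up. It takes the first 80% of observations for training, mirroring the 80/20 ratio used in this article, and checks that every training date precedes every test date. A shuffled split would generally mix later days into the training set and let the model learn from the future.

```python
import numpy as np
import pandas as pd

# Toy daily series standing in for the ridership data (hypothetical values).
dates = pd.date_range("2019-01-01", periods=10, freq="D")
toy_ts = pd.Series(np.arange(10, dtype=float), index=dates)

def chronological_split(series, train_frac=0.8):
    """Split a time-indexed series into contiguous train/test blocks (no shuffling)."""
    cut = int(train_frac * len(series))
    # iloc slices by position, so the earliest observations go to training.
    return series.iloc[:cut], series.iloc[cut:]

toy_train, toy_test = chronological_split(toy_ts)
print(len(toy_train), "training days,", len(toy_test), "test days")
print("last training date:", toy_train.index.max().date())
print("first test date:", toy_test.index.min().date())
```

Slicing by position rather than by random index is what keeps the two blocks contiguous in time.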
In other words, all training instances come chronologically first, followed by the test instances. This code takes the first 80% of the time series as the training set and the remaining 20% for testing:

```python
n = len(ts)
train = ts[:int(0.8 * n)]
test = ts[int(0.8 * n):]

train_vals = train.values.astype(float)
test_vals = test.values.astype(float)
```

Furthermore, the raw time series must be converted into labeled sequences (x, y) spanning a fixed time window before neural network-based models can be trained on it. For example, with a time window of N=30 days, the first instance spans the first 30 days of the time series and its label is the 31st day, and so on. This gives the dataset an appropriate labeled format for supervised learning without losing its temporal meaning:

```python
def create_sequences(data, seq_len=30):
    # Slide a window of length seq_len over the series; the value right
    # after each window is that window's label.
    X, y = [], []
    for i in range(len(data) - seq_len):
        X.append(data[i:i + seq_len])
        y.append(data[i + seq_len])
    return np.array(X), np.array(y)

SEQ_LEN = 30
X_train, y_train = create_sequences(train_vals, SEQ_LEN)
X_test, y_test = create_sequences(test_vals, SEQ_LEN)

# Convert our formatted data into PyTorch tensors;
# inputs become (num_windows, SEQ_LEN, 1), targets (num_windows, 1)
X_train = torch.tensor(X_train).float().unsqueeze(-1)
y_train = torch.tensor(y_train).float().unsqueeze(-1)
X_test = torch.tensor(X_test).float().unsqueeze(-1)
y_test = torch.tensor(y_test).float().unsqueeze(-1)
```

We are now ready to train, evaluate, and compare our LSTM and transformer models!

Model Training

We will use the PyTorch library for the modeling stage, as it provides the necessary classes to define both recurrent LSTM layers and encoder-only transformer layers suitable for predictive tasks. First up, we have an LSTM-based RNN architecture like this:

```python
class LSTMModel(nn.Module):
    def __init__(self, hidden=32):
        super().__init__()
        # One input feature per time step (univariate series)
        self.lstm = nn.LSTM(1, hidden, batch_first=True)
        # Map the final hidden output to a single next-day prediction
        self.fc = nn.Linear(hidden, 1)

    def forward(self, x):
        # x: (batch, seq_len, 1)
        out, _ = self.lstm(x)        # out: (batch, seq_len, hidden)
        return self.fc(out[:, -1])   # use the last time step's output

lstm_model = LSTMModel()
```

As for the encoder-only transformer for next-day time series forecasting, we have:

```python
class SimpleTransformer(nn.Module):
    def __init__(self, d_model=32, nhead=4):
        super().__init__()
        # Project each scalar observation to a d_model-dimensional vector
        self.embed = nn.Linear(1, d_model)
        enc_layer = nn.TransformerEncoderLayer(d_model=d_model, nhead=nhead, batch_first=True)
        self.transformer = nn.TransformerEncoder(enc_layer, num_layers=1)
        self.fc = nn.Linear(d_model, 1)

    def forward(self, x):
        # x: (batch, seq_len, 1)
        x = self.embed(x)            # (batch, seq_len, d_model)
        x = self.transformer(x)      # (batch, seq_len, d_model)
        return self.fc(x[:, -1])     # predict from the last position's representation

transformer_model = SimpleTransformer()
```

Note that

OnePlus 15R Launched In India With Qualcomm Snapdragon 8 Gen 5; Check Camera, Battery, Display, Price, Sale Date, Bank Offers And Other Specs | Technology News

OnePlus 15R Price In India: OnePlus has launched the OnePlus 15R along with the OnePlus Pad Go 2 in the Indian market. The Chinese smartphone maker also launched the 'Ace' variant of the OnePlus 15R. The phone comes in Charcoal Black, Mint Breeze, and Electric Violet colour options. The dual-SIM handset runs on Android 16-based OxygenOS 16, and the company promises four OS upgrades and six years of security updates for the smartphone.

OnePlus 15R Specifications

The OnePlus 15R features a 6.83-inch Full-HD+ AMOLED display with a resolution of 1,272×2,800 pixels, offering a smooth 165Hz refresh rate, 450ppi pixel density, and a tall 19.8:9 aspect ratio. The smartphone is powered by Qualcomm's octa-core 3nm Snapdragon 8 Gen 5 chipset with a peak clock speed of 3.8GHz, paired with 12GB LPDDR5x Ultra RAM, up to 512GB of UFS 4.1 storage, and an Adreno 8-series GPU. The device packs a large 7,400mAh silicon carbon battery with 80W wired fast charging support, and OnePlus claims it will retain 80 percent of its capacity even after four years of use. It also comes with IP66, IP68, IP69, and IP69K ratings for dust and water resistance.

On the photography front, the OnePlus 15R houses a 50-megapixel primary rear camera with OIS, alongside an 8-megapixel ultra-wide lens with a 112-degree field of view, supporting up to 4K video recording at 120fps, cinematic mode, multi-view video, and video zoom. For selfies and videos, there is a 32-megapixel front camera capable of shooting 4K videos at 30fps. On the connectivity front, the smartphone supports 5G, 4G LTE, Wi-Fi 7, Bluetooth 6.0, NFC, USB Type-C, GPS, GLONASS, BDS, Galileo, QZSS, and NavIC.

OnePlus 15R Bank Offers

Buyers can avail bank offers on the OnePlus 15R, with HDFC Bank credit card users getting an instant discount of up to Rs 3,000 along with easy EMI options. Axis Bank credit card customers can also get an instant Rs 3,000 discount and flexible EMI plans.
In addition, consumers can opt for up to six months of no-cost EMI on major credit cards. As part of other benefits, OnePlus is offering a 180-day phone replacement plan along with a Lifetime Display Warranty for all OnePlus 15R users.

OnePlus 15R Price In India And Availability

The smartphone is priced at Rs 47,999 for the base variant with 12GB RAM and 256GB storage. The 512GB storage variant costs Rs 52,999. Pre-orders begin today, while sales start next week. In India, the handset will go on sale on December 22 at 12 PM (noon) via Amazon, the OnePlus India online store, and select offline retail stores.

India Now Largest Market In World In AI Model Adoption: BofA | Technology News

New Delhi: India has emerged as the world's largest and most active market for large language model (LLM) adoption, according to an analysis by Bank of America (BofA) on Wednesday. The country now leads globally in the number of users for popular AI apps such as ChatGPT, Gemini and Perplexity, in terms of both monthly active users (MAUs) and daily active users (DAUs). BofA said India's rapid rise as a key AI market is driven by a combination of scale, affordability and demographics. India has the second-largest online population in the world, with an estimated 700–750 million mobile internet users. Affordable data plans have made AI access easier, with users able to consume 20–30 GB of data a month for around $2. In addition, more than 60 per cent of Indian internet users are under the age of 35, and a large part of this population is English-speaking and quick to adopt new technologies.

AI adoption in India is also getting a push from telecom companies. BofA noted that telcos such as Jio and Bharti Airtel are offering complimentary subscriptions to paid versions of AI apps like Gemini and Perplexity. This, according to the report, is creating a win-win situation for users, AI companies and telecom operators. For consumers, access to advanced AI tools at a low cost is helping create a level playing field. Indian users are using these tools to improve learning outcomes and boost productivity. The availability of AI models in multiple Indian languages is also helping bridge the digital divide and reduce language barriers, leading to what BofA described as the democratisation of AI. The report also highlighted that India could emerge as a testing ground for the next phase of AI, known as agentic AI. These are AI applications that can reason, plan and execute tasks independently.
Given India’s massive and diverse user base, BofA believes the country is well suited to stress-test such applications in real-world conditions before they are rolled out globally. It also suggested that global AI companies could partner with Indian firms for fulfilment services, similar to how AI agents in the US have tied up with travel platforms like Booking and Expedia.

Google Translate Brings Real-Time Translation To Any Headphones: How To Use It And How It Differs From Apple’s Live Translation | Technology News

Google Translate In Real Time App: In a country like India, every journey is a conversation in itself, one that shifts languages with every mile. From a chai-side chat in a small town to a boardroom discussion in a bustling metro, words often change faster than people can keep up. That is where Google Translate quietly steps in, turning confusion into clarity. Whether you are travelling across states, attending meetings, or connecting with someone from a different culture, language barriers no longer have to slow you down.

Now, Google is taking this experience a step further by offering real-time translation through the Translate app, powered by Gemini and usable with any headphones, making communication feel natural, instant, and effortless. The more interesting part is that you do not need costly smart earbuds or special translation devices: any regular wired or wireless headphones will do the job. If you are wondering how to use Google Translate for real-time translation on any headphones, this article will guide you step by step.

How To Use Google Translate On Any Headphones In Real Time

Step 1: Open the Google Translate app on your smartphone.
Step 2: At the top, select your spoken language on the left and the language you want to understand on the right.
Step 3: Tap the Conversation option located at the bottom left of the main screen.
Step 4: When the prompt appears, tap the Start button to begin real-time translation.
Step 5: Keep your phone close to the person who is speaking and ask them to speak clearly at a normal pace.
Step 6: The app will listen through your phone's microphone, and the translated audio will automatically play in your connected headphones.

Google Translate Vs Apple Live Translation: What Makes Google's Real-Time App Different

Google's real-time translation keeps things simple and easy to use. You do not need to buy special devices or costly earbuds.
An Android phone, the Google Translate app, and any headphones, wired or wireless, are enough. Once you turn it on, you just listen while the other person speaks, and the translated voice plays in your headphones. The feature supports more than 70 languages in beta and even preserves the speaker's voice style and pauses, so it feels natural.

On the other hand, Apple takes a different path with Live Translation. It is deeply tied to the Apple ecosystem and works only with certain AirPods and an iPhone running the latest iOS with Apple Intelligence. Its big advantage is two-way conversation, where both people can talk and hear translations. Apple also offers text transcripts and replay options, which makes it helpful but limited to Apple devices.

Conclusion: Since this is a beta feature, you may notice a few bugs or minor translation errors, which are common with AI tools. Google is actively improving the feature to make it more accurate and reliable. iPhone users are expected to get access sometime in 2026, along with a wider rollout to more countries. Note that Google Translate's real-time translation does not work offline, so you must ensure a stable network connection while using the app.

Google Offers $8 Mn For India’s AI Centers For Health, Agriculture, Education, And Sustainable Cities

New Delhi: In a bid to support India’s research ecosystem, Google.org, the philanthropic arm of Google, on Tuesday announced $8 million in funding for four AI Centres of Excellence focused on health, agriculture, education, and sustainable cities. The centres were established by the Government in line with its vision to “Make AI in India and Make AI work for India”.

The centres include TANUH at IISc Bangalore, which will focus on developing scalable AI solutions for the effective treatment of non-communicable diseases, and the Airawat Research Foundation at IIT Kanpur, which will pursue pioneering research on using AI to transform urban governance. The AI Centre of Excellence for Education at IIT Madras will focus on developing solutions to improve learning and teaching outcomes, while ANNAM.AI at IIT Ropar will focus on data-driven solutions for agriculture and farmer welfare.

In addition, Google announced a $2 million founding contribution to establish the new Indic Language Technologies Research Hub at IIT Bombay. The hub, set up in memory of Professor Pushpak Bhattacharyya, a pioneer in Indic language technologies and a Visiting Researcher at Google DeepMind, aims to ensure that global AI advances serve India’s linguistic diversity.

“India is approaching artificial intelligence as a strategic national capability, not as a short-term technology trend. The four AI Centres of Excellence have been conceived as a coordinated national research mission, advancing foundational research, responsible AI, and applied solutions that serve public purpose, and contributing to our larger aspiration of Viksit Bharat 2047,” said Dharmendra Pradhan, Minister of Education.

“Building a globally competitive AI ecosystem requires not only public investment, but also strong institutional leadership and long-term partnerships with industry.
This effort is supported by Google and Google.org through their $8 million contribution to the AI Centres of Excellence and a $2 million founding contribution to the Indic Language Technologies Research Hub at IIT Bombay,” he added.

At Google’s “Lab to Impact” dialogue, supported by the India AI Impact Summit 2026, the company also committed $400,000 to support the development of India’s Health Foundation model using Google’s MedGemma, its specialised AI model for healthcare. As a first step, Ajna Lens will work with experts from the All India Institute of Medical Sciences (AIIMS) to build models for India-specific use cases in dermatology and outpatient department (OPD) triage. The resulting models will contribute to India’s Digital Public Infrastructure, and their outcomes will be made accessible to the wider ecosystem.

Google is also working with India’s National Health Authority (NHA) to deploy its advanced AI to convert millions of fragmented, unstructured medical records, such as doctors’ clinical and progress notes, into FHIR (Fast Healthcare Interoperability Resources), the international machine-readable standard for health data.

“From foundational research to ecosystem deployment to scaled impact, our full-stack approach is equipping the country to lead a global AI-powered future, with innovations from India’s labs benefiting billions across the world,” said Dr Manish Gupta, Senior Research Director, Google DeepMind.