7 Essential Python Itertools for Feature Engineering

In this article, you will learn how to use Python’s itertools module to simplify common feature engineering tasks with clean, efficient patterns.

Topics we will cover include:

- Generating interaction, polynomial, and cumulative features with itertools.
- Building lookup grids, lag windows, and grouped aggregates for structured data workflows.
- Using iterator-based tools to write cleaner, more composable feature engineering code.

On we go.

Introduction

Feature engineering is where most of the real work in machine learning happens. A good feature often improves a model more than switching algorithms. Yet this step usually leads to messy code with nested loops, manual indexing, hand-built combinations, and the like.

Python’s itertools module is a standard library toolkit that most data scientists know exists but rarely reach for when building features. That’s a missed opportunity, as itertools is designed for working with iterators efficiently. A lot of feature engineering, at its core, is structured iteration over pairs of variables, sliding windows, grouped sequences, or every possible subset of a feature set.

In this article, you’ll work through seven itertools functions that solve common feature engineering problems. We’ll spin up sample e-commerce data and cover interaction features, lag windows, category combinations, and more. By the end, you’ll have a set of patterns you can drop directly into your own feature engineering pipelines.

You can get the code on GitHub.

1. Generating Interaction Features with combinations

Interaction features capture the relationship between two variables, something neither variable expresses alone. Manually listing every pair from a multi-column dataset is tedious. combinations in the itertools module does it in one line.
Let’s code an example to create interaction features using combinations:

```python
import itertools
import pandas as pd

df = pd.DataFrame({
    "avg_order_value": [142.5, 89.0, 210.3, 67.8, 185.0],
    "discount_rate": [0.10, 0.25, 0.05, 0.30, 0.15],
    "days_since_signup": [120, 45, 380, 12, 200],
    "items_per_order": [3.2, 1.8, 5.1, 1.2, 4.0],
    "return_rate": [0.05, 0.18, 0.02, 0.22, 0.08],
})

# Snapshot the original columns before adding new ones inside the loop
numeric_cols = df.columns.tolist()

for col_a, col_b in itertools.combinations(numeric_cols, 2):
    feature_name = f"{col_a}_x_{col_b}"
    df[feature_name] = df[col_a] * df[col_b]

interaction_cols = [c for c in df.columns if "_x_" in c]
print(df[interaction_cols].head())
```

Truncated output:

```
   avg_order_value_x_discount_rate  avg_order_value_x_days_since_signup  \
0                           14.250                              17100.0
1                           22.250                               4005.0
2                           10.515                              79914.0
3                           20.340                                813.6
4                           27.750                              37000.0

   avg_order_value_x_items_per_order  avg_order_value_x_return_rate  \
0                             456.00                          7.125
1                             160.20                         16.020
2                            1072.53                          4.206
3                              81.36                         14.916
4                             740.00                         14.800

…

   days_since_signup_x_return_rate  items_per_order_x_return_rate
0                             6.00                          0.160
1                             8.10                          0.324
2                             7.60                          0.102
3                             2.64                          0.264
4                            16.00                          0.320
```

combinations(numeric_cols, 2) generates every unique pair exactly once. With 5 columns, that is 10 pairs; with 10 columns, it is 45. The same loop works unchanged as you add columns, though the number of pairs grows as n(n-1)/2.

2. Building Cross-Category Feature Grids with product

itertools.product gives you the Cartesian product of two or more iterables: every possible combination that takes one element from each of them. In the e-commerce sample we’re working with, this is useful when you want to build a feature matrix across customer segments and product categories.

```python
import itertools

import numpy as np
import pandas as pd

customer_segments = ["new", "returning", "vip"]
product_categories = ["electronics", "apparel", "home_goods", "beauty"]
channels = ["mobile", "desktop"]

# All segment × category × channel combinations
combos = list(itertools.product(customer_segments, product_categories, channels))
grid_df = pd.DataFrame(combos, columns=["segment", "category", "channel"])

# Simulate a conversion rate lookup per combination
np.random.seed(7)
grid_df["avg_conversion_rate"] = np.round(
    np.random.uniform(0.02, 0.18, size=len(grid_df)), 3
)

print(grid_df.head(12))
print(f"\nTotal combinations: {len(grid_df)}")
```

Output:

```
      segment     category  channel  avg_conversion_rate
0         new  electronics   mobile                0.032
1         new  electronics  desktop                0.145
2         new      apparel   mobile                0.090
3         new      apparel  desktop                0.136
4         new   home_goods   mobile                0.176
5         new   home_goods  desktop                0.106
6         new       beauty   mobile                0.100
7         new       beauty  desktop                0.032
8   returning  electronics   mobile                0.063
9   returning  electronics  desktop                0.100
10  returning      apparel   mobile                0.129
11  returning      apparel  desktop                0.149

Total combinations: 24
```

This grid can then be merged back onto your main transaction dataset as a lookup feature, so every row gets the expected conversion rate for its specific segment × category × channel bucket. product ensures you haven’t missed any valid combination when building that grid.

3. Flattening Multi-Source Feature Sets with chain

In most pipelines, features come from multiple sources: a customer profile table, a product metadata table, and a browsing history table. You often need to flatten these into a single feature list for column selection
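
As a minimal sketch of the flattening idea described above, assume three hypothetical per-source column lists (the names below are illustrative and not taken from the article's dataset). itertools.chain walks them in order as one flat sequence, and chain.from_iterable does the same when the sources are already grouped in a container:

```python
import itertools

# Hypothetical per-source feature lists (illustrative names only).
profile_features = ["days_since_signup", "customer_segment"]
product_features = ["category", "price_tier"]
browsing_features = ["sessions_last_7d", "avg_session_minutes"]

# chain yields the items of each list in turn, as one flat iterator.
all_features = list(
    itertools.chain(profile_features, product_features, browsing_features)
)

# chain.from_iterable takes a single iterable of iterables instead.
sources = {
    "profile": profile_features,
    "product": product_features,
    "browsing": browsing_features,
}
all_features_again = list(itertools.chain.from_iterable(sources.values()))

print(all_features)
```

Either form gives a single list that could be passed to a column selector such as df[all_features], assuming those columns exist in the frame.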


From Prompt to Prediction: Understanding Prefill, Decode, and the KV Cache in LLMs

In the previous article, we saw how a language model converts logits into probabilities and samples the next token. But where do these logits come from?

In this tutorial, we take a hands-on approach to understand the generation pipeline:

- How the prefill phase processes your entire prompt in a single parallel pass
- How the decode phase generates tokens one at a time using previously computed context
- How the KV cache eliminates redundant computation to make decoding efficient

By the end, you will understand the two-phase mechanics behind LLM inference and why the KV cache is essential for generating long responses at scale.

Let’s get started.

Photo by Neda Astani. Some rights reserved.

Overview

This article is divided into three parts; they are:

- How Attention Works During Prefill
- The Decode Phase of LLM Inference
- KV Cache: How to Make Decode More Efficient

How Attention Works During Prefill

Consider the prompt:

Today’s weather is so …

As humans, we can infer the next token should be an adjective, because the last word “so” is a setup. We also know it probably describes weather, so words like “nice” or “warm” are more likely than something unrelated like “delicious”.

Transformers arrive at the same conclusion through attention. During prefill, the model processes the entire prompt in a single forward pass. Every token attends to itself and all tokens before it, building up a contextual representation that captures relationships across the full sequence.

The mechanism behind this is the scaled dot-product attention formula:

$$\text{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^\top}{\sqrt{d_k}}\right)V$$

We will walk through this concretely below.
To make the attention computation traceable, we assign each token a scalar value representing the information it carries:

| Position | Token | Value |
|---|---|---|
| 1 | Today | 10 |
| 2 | weather | 20 |
| 3 | is | 1 |
| 4 | so | 5 |

Words like “is” and “so” carry less semantic weight than “Today” or “weather”, and as we’ll see, attention naturally reflects this.

Attention Heads

In real transformers, attention weights are continuous values learned during training through the $Q$ and $K$ dot product. The behavior of attention heads is learned and usually hard to describe in simple terms. No head is hardwired to “attend to even positions”. The four rules below are a simplified illustration to make the attention mechanism more intuitive; the weighted aggregation over $V$ works the same way.

Here are the rules in our toy example:

1. Attend to tokens at even-numbered positions
2. Attend to the last token
3. Attend to the first token
4. Attend to every token

For simplicity in this example, the outputs from these heads are then combined (averaged).

Let’s walk through the prefill process:

Today

- Even tokens → none
- Last token → Today → 10
- First token → Today → 10
- All tokens → Today → 10

weather

- Even tokens → weather → 20
- Last token → weather → 20
- First token → Today → 10
- All tokens → average(Today, weather) → 15

is

- Even tokens → weather → 20
- Last token → is → 1
- First token → Today → 10
- All tokens → average(Today, weather, is) → 10.33

so

- Even tokens → average(weather, so) → 12.5
- Last token → so → 5
- First token → Today → 10
- All tokens → average(Today, weather, is, so) → 9

Parallelizing Attention

If the prompt contained 100,000 tokens, computing attention step-by-step would be extremely slow. Fortunately, attention can be expressed as tensor operations, allowing all positions to be computed in parallel. This is the key idea of the prefill phase in LLM inference: when you provide a prompt, its tokens can all be processed in parallel. Such parallel processing shortens the time to the first generated token.
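
Before vectorizing, the walkthrough above can be reproduced with a plain loop, one query position at a time. This is a minimal sketch in pure Python; the helper names (`mean`, `prefill_step_by_step`) are illustrative and not part of the original tutorial.

```python
# Scalar "values" carried by each token in the toy example.
values = {"Today": 10.0, "weather": 20.0, "is": 1.0, "so": 5.0}
tokens = list(values)


def mean(xs):
    """Average of a list, or None when a head has nothing to attend to."""
    return sum(xs) / len(xs) if xs else None


def prefill_step_by_step(tokens):
    rows = []
    for i in range(len(tokens)):       # i is the 0-based query position
        visible = tokens[: i + 1]      # causal: the token itself and earlier ones
        vals = [values[t] for t in visible]
        # 1-based positions, so "even" means positions 2, 4, ...
        even = [v for pos, v in enumerate(vals, start=1) if pos % 2 == 0]
        rows.append({
            "token": tokens[i],
            "even": mean(even),        # rule 1: even-numbered positions
            "last": vals[-1],          # rule 2: last visible token
            "first": vals[0],          # rule 3: first token
            "all": mean(vals),         # rule 4: every visible token
        })
    return rows


rows = prefill_step_by_step(tokens)
for row in rows:
    print(row)
```

The last row comes out as even = 12.5, last = 5.0, first = 10.0, all = 9.0, matching the hand-computed values for “so”. The loop handles one query at a time, which is exactly the sequential cost that the tensor formulation avoids.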
To prevent tokens from seeing future tokens, we apply a causal mask, so each token can attend only to itself and earlier tokens.

```python
import torch

tokens = ["Today", "weather", "is", "so"]
n = len(tokens)
d_k = 64

V = torch.tensor([[10.], [20.], [1.], [5.]], dtype=torch.float32)
positions = torch.arange(1, n + 1).float()  # 1-based: [1, 2, 3, 4]

# Row i (query) may see column j (key) only when j <= i
idx = torch.arange(n)
causal_mask = idx.unsqueeze(1) >= idx.unsqueeze(0)
print(causal_mask)
```

Output:

```
tensor([[ True, False, False, False],
        [ True,  True, False, False],
        [ True,  True,  True, False],
        [ True,  True,  True,  True]])
```

Now, we can start writing the “rules” for the 4 attention heads. Rather than computing scores from learned $Q$ and $K$ vectors, we handcraft them directly to match our four attention rules. Each head produces a score matrix of shape (n, n), with one score per query-key pair, which gets masked and passed through softmax to produce attention weights:

```python
def selector(condition, size):
    """Return a (size, d_k) tensor of +1/-1 depending on condition."""
    val = torch.where(condition, torch.ones(size), -torch.ones(size))  # (size,)
    return val.unsqueeze(1).expand(size, d_k).contiguous()  # (size, d_k)

# Shared query: every row asks for a property, and K encodes which tokens match it.
Q = torch.ones(n, d_k)

# Head 1: select even positions
# K says whether each token is at an even position.
K1 = selector(positions % 2 == 0, n)
scores1 = (Q @ K1.T) / (d_k ** 0.5)

# Head 2: select the last token
# K says whether each token is the last one.
K2 = selector(positions == n, n)
scores2 = (Q @ K2.T) / (d_k ** 0.5)

# Head 3: select the first token
# K says whether each token is the first one.
K3 = selector(positions == 1, n)
scores3 = (Q @ K3.T) / (d_k ** 0.5)

# Head 4: select all visible tokens uniformly
# K marks every token as a match.
K4 = selector(positions == positions, n)
scores4 = (Q @ K4.T) / (d_k ** 0.5)
```
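
To see what “masked and passed through softmax” does to scores like these, here is a minimal pure-Python sketch for the even-position head only. It assumes the scaled scores are +8 for matching keys and -8 otherwise, which is what all-ones queries against ±1 keys give when d_k = 64 (±64 / √64). The function and variable names are illustrative, not the tutorial's own code.

```python
import math

values = [10.0, 20.0, 1.0, 5.0]   # V for Today, weather, is, so
n = len(values)

# Scaled scores for the "even positions" head: +8 if the key position is even, else -8.
key_scores = [8.0 if pos % 2 == 0 else -8.0 for pos in range(1, n + 1)]


def masked_softmax(scores, n_visible):
    """Softmax over the first n_visible scores; later (future) keys get weight 0."""
    visible = scores[:n_visible]
    m = max(visible)                            # subtract the max for stability
    exps = [math.exp(s - m) for s in visible]
    total = sum(exps)
    return [e / total for e in exps] + [0.0] * (len(scores) - n_visible)


outputs = []
for q in range(n):                              # q is the 0-based query position
    weights = masked_softmax(key_scores, q + 1)
    output = sum(w * v for w, v in zip(weights, values))
    outputs.append(output)
    print(q + 1, [round(w, 3) for w in weights], round(output, 3))
```

For the last query the weights land almost entirely on positions 2 and 4, so the output is very close to 12.5. One thing this sketch makes visible: the first query can see only itself, so softmax assigns that single key weight 1 even though its score is negative. A softmax-based head therefore cannot return “none” the way the hand-written rule does.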


Top 5 Reranking Models to Improve RAG Results

In this article, you will learn how reranking improves the relevance of results in retrieval-augmented generation (RAG) systems by going beyond what retrievers alone can achieve.

Topics we will cover include:

- How rerankers refine retriever outputs to deliver better answers
- Five top reranker models to test in 2026
- Final thoughts on choosing the right reranker for your system

Let’s get started.

Introduction

If you have worked with retrieval-augmented generation (RAG) systems, you have probably seen this problem. Your retriever brings back “relevant” chunks, but many of them are not actually useful. The final answer ends up noisy, incomplete, or incorrect. This usually happens because the retriever is optimized for speed and recall, not precision.

That is where reranking comes in.

Reranking is the second step in a RAG pipeline. First, your retriever fetches a set of candidate chunks. Then, a reranker evaluates the query and each candidate and reorders them based on deeper relevance.

In simple terms:

- Retriever → gets possible matches
- Reranker → picks the best matches

This small step often makes a big difference. You get fewer irrelevant chunks in your prompt, which leads to better answers from your LLM. Benchmarks like MTEB, BEIR, and MIRACL are commonly used to evaluate these models, and most modern RAG systems rely on rerankers for production-quality results.

There is no single best reranker for every use case. The right choice depends on your data, latency, cost constraints, and context length requirements. If you are starting fresh in 2026, these are the five models to test first.

1. Qwen3-Reranker-4B

If I had to pick one open reranker to test first, it would be Qwen3-Reranker-4B. The model is open-sourced under Apache 2.0, supports 100+ languages, and has a 32k context length.
It shows very strong published reranking results (69.76 on MTEB-R, 75.94 on CMTEB-R, 72.74 on MMTEB-R, 69.97 on MLDR, and 81.20 on MTEB-Code). It performs well across different types of data, including multiple languages, long documents, and code.

2. NVIDIA nv-rerankqa-mistral-4b-v3

For question-answering RAG over text passages, nv-rerankqa-mistral-4b-v3 is a solid, benchmark-backed choice. It delivers high ranking accuracy across evaluated datasets, with an average Recall@5 of 75.45% when paired with NV-EmbedQA-E5-v5 across NQ, HotpotQA, FiQA, and TechQA. It is commercially ready. The main limitation is context size (512 tokens per pair), so it works best with clean chunking.

3. Cohere rerank-v4.0-pro

For a managed, enterprise-friendly option, rerank-v4.0-pro is designed as a quality-focused reranker with 32k context, multilingual support across 100+ languages, and support for semi-structured JSON documents. It is suitable for production data such as tickets, CRM records, tables, or metadata-rich objects.

4. jina-reranker-v3

Most rerankers score each document independently. jina-reranker-v3 uses listwise reranking, processing up to 64 documents together in a 131k-token context window, achieving 61.94 nDCG@10 on BEIR. This approach is useful for long-context RAG, multilingual search, and retrieval tasks where relative ordering matters. It is published under CC BY-NC 4.0.

5. BAAI bge-reranker-v2-m3

Not every strong reranker needs to be new. bge-reranker-v2-m3 is lightweight, multilingual, easy to deploy, and fast at inference. It is a practical baseline. If a newer model does not significantly outperform BGE, the added cost or latency may not be justified.

Final Thoughts

Reranking is a simple yet powerful way to improve a RAG system. A good retriever gets you close. A good reranker gets you to the right answer. In 2026, adding a reranker is essential.
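
The two-stage flow described in this article (retrieve candidates, then reorder them) can be sketched in a few lines. This is a toy illustration only: `retrieve`, `toy_score`, and `rerank` are hypothetical names, and the word-overlap scorer is a stand-in for a real reranker model, not the API of any model listed above.

```python
from typing import Callable, List, Tuple


def retrieve(query: str, corpus: List[str], k: int) -> List[str]:
    """Stage 1 (toy): recall-oriented filter that keeps chunks sharing any query word."""
    q_words = set(query.lower().split())
    hits = [c for c in corpus if q_words & set(c.lower().split())]
    return hits[:k]


def toy_score(query: str, chunk: str) -> float:
    """Hypothetical stand-in for a reranker: fraction of query words found in the chunk."""
    q_words = set(query.lower().split())
    return len(q_words & set(chunk.lower().split())) / len(q_words)


def rerank(
    query: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float],
    top_n: int,
) -> List[Tuple[float, str]]:
    """Stage 2: score every (query, candidate) pair and keep the best top_n."""
    scored = [(score_fn(query, c), c) for c in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep retrieval order
    return scored[:top_n]


corpus = [
    "the login page uses dark mode",
    "password rules require twelve characters",
    "reset your password from the login page",
    "shipping takes five days",
]
query = "reset password login"

candidates = retrieve(query, corpus, k=3)
top = rerank(query, candidates, toy_score, top_n=1)
print(top)
```

Here the retriever returns three loosely related chunks in corpus order, and the reranker moves the one that actually answers the query to the top. In a real system, `toy_score` would be replaced by a call to whichever reranker you are evaluating.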
Here is a shortlist of recommendations:

| Use case | Recommended model |
|---|---|
| Best open model | Qwen3-Reranker-4B |
| Best for QA pipelines | NVIDIA nv-rerankqa-mistral-4b-v3 |
| Best managed option | Cohere rerank-v4.0-pro |
| Best for long context | jina-reranker-v3 |
| Best baseline | BGE-reranker-v2-m3 |

This selection provides a strong starting point. Your specific use case and system constraints should guide the final choice.

About Kanwal Mehreen

Kanwal Mehreen is an aspiring Software Developer with a keen interest in data science and applications of AI in medicine. Kanwal was selected as the Google Generation Scholar 2022 for the APAC region. Kanwal loves to share technical knowledge by writing articles on trending topics, and is passionate about improving the representation of women in the tech industry.


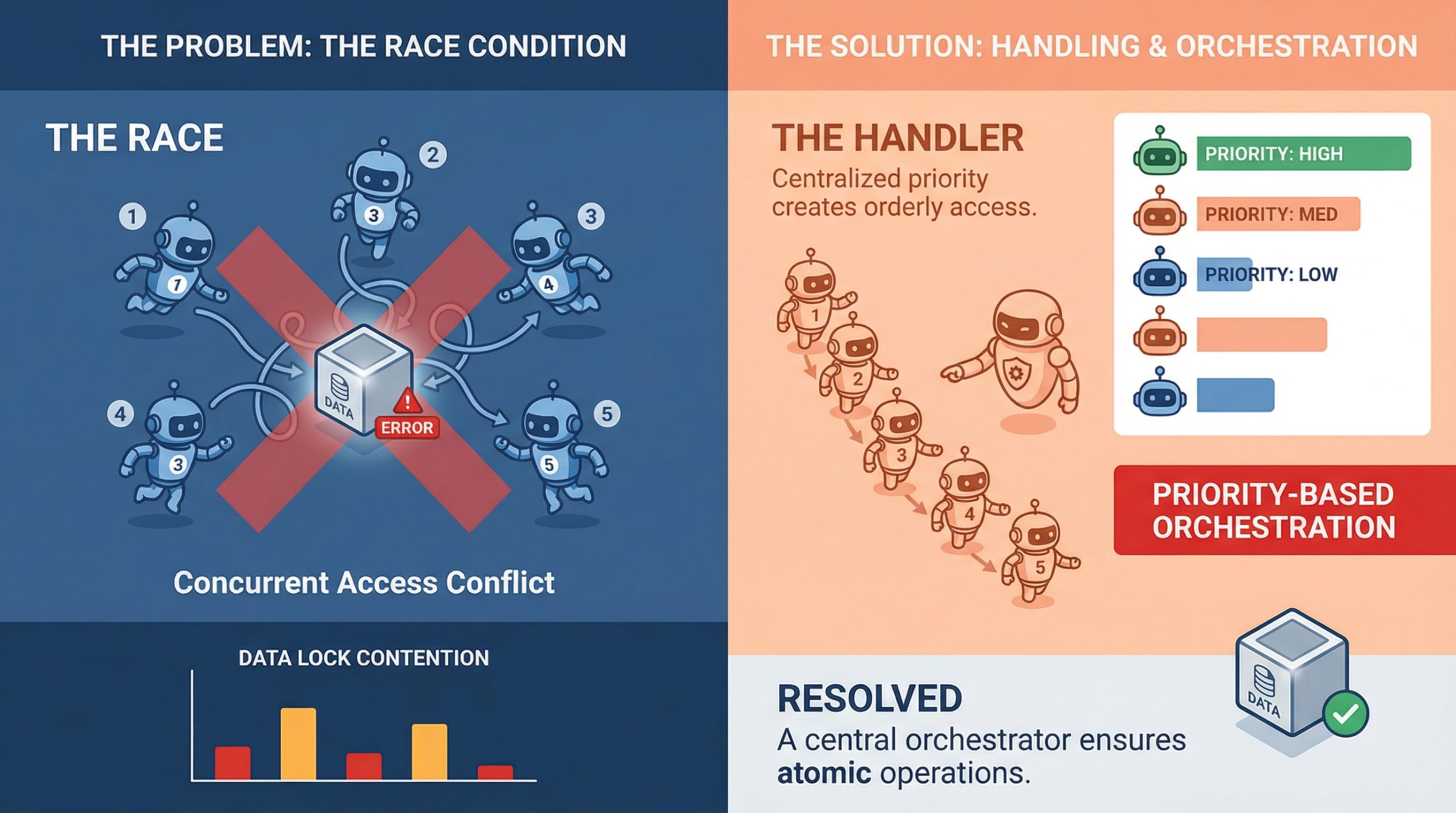

Handling Race Conditions in Multi-Agent Orchestration

In this article, you will learn how to identify, understand, and mitigate race conditions in multi-agent orchestration systems.

Topics we will cover include:

- What race conditions look like in multi-agent environments
- Architectural patterns for preventing shared-state conflicts
- Practical strategies like idempotency, locking, and concurrency testing

Let’s get straight to it.

If you’ve ever watched two agents confidently write to the same resource at the same time and produce something that makes zero sense, you already know what a race condition feels like in practice. It’s one of those bugs that doesn’t show up in unit tests, behaves perfectly in staging, and then detonates in production during your highest-traffic window.

In multi-agent systems, where parallel execution is the whole point, race conditions aren’t edge cases. They’re expected guests. Understanding how to handle them is less about being defensive and more about building systems that assume chaos by default.

What Race Conditions Actually Look Like in Multi-Agent Systems

A race condition happens when two or more agents try to read, modify, or write shared state at the same time, and the final result depends on which one gets there first. In a single-agent pipeline, that’s manageable. In a system with five agents running concurrently, it’s a genuinely different problem.

The tricky part is that race conditions aren’t always obvious crashes. Sometimes they’re silent. Agent A reads a document, Agent B updates it half a second later, and Agent A writes back a stale version with no error thrown anywhere. The system looks fine. The data is compromised.

What makes this worse in machine learning pipelines specifically is that agents often work on mutable shared objects, whether that’s a shared memory store, a vector database, a tool output cache, or a simple task queue.
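
The silent stale write described above can be reproduced deterministically by interleaving the steps by hand. This is a minimal sketch; the dictionary store and the agent steps are hypothetical stand-ins for real shared state.

```python
# A shared "document" that two agents read and write (hypothetical stand-in).
store = {"doc": "draft v1"}

# Agent A reads the document and starts working on its private copy.
a_copy = store["doc"]

# Agent B reads, edits, and writes back while A is still working.
b_copy = store["doc"]
store["doc"] = b_copy + " + B's edit"

# Agent A finishes and writes back a result based on its stale read.
store["doc"] = a_copy + " + A's edit"

print(store["doc"])  # draft v1 + A's edit
```

No exception is raised at any point, yet B's edit is gone. Nothing in the store records that A's write was based on an outdated read, which is the gap that version tags and locks are meant to close.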
Any of these can become a contention point when multiple agents start pulling from them simultaneously.

Why Multi-Agent Pipelines Are Especially Vulnerable

Traditional concurrent programming has decades of tooling around race conditions: threads, mutexes, semaphores, and atomic operations. Multi-agent large language model (LLM) systems are newer, and they are often built on top of async frameworks, message brokers, and orchestration layers that don’t always give you fine-grained control over execution order.

There’s also the problem of non-determinism. LLM agents don’t always take the same amount of time to complete a task. One agent might finish in 200ms, while another takes 2 seconds, and the orchestrator has to handle that gracefully. When it doesn’t, agents start stepping on each other, and you end up with a corrupted state or conflicting writes that the system silently accepts.

Agent communication patterns matter a lot here, too. If agents are sharing state through a central object or a shared database row rather than passing messages, they are almost guaranteed to run into write conflicts at scale. This is as much a design pattern issue as it is a concurrency issue, and fixing it usually starts at the architecture level before you even touch the code.

Locking, Queuing, and Event-Driven Design

The most direct way to handle shared resource contention is through locking. Optimistic locking works well when conflicts are rare: each agent reads a version tag alongside the data, and if the version has changed by the time it tries to write, the write fails and retries. Pessimistic locking is more aggressive and reserves the resource before reading. Both approaches have trade-offs, and which one fits depends on how often your agents are actually colliding.

Queuing is another solid approach, especially for task assignment. Instead of multiple agents polling a shared task list directly, you push tasks into a queue and let agents consume them one at a time.
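
As a rough in-process illustration of a queue acting as the hand-off point, the sketch below uses Python's standard `queue.Queue` as a stand-in for a real broker. The `agent` function and the task numbering are hypothetical.

```python
import queue
import threading

tasks = queue.Queue()
for task_id in range(20):
    tasks.put(task_id)

processed = []                     # (agent_name, task_id) pairs
processed_lock = threading.Lock()


def agent(name):
    """Pull tasks until the queue is empty; each get hands a task to exactly one agent."""
    while True:
        try:
            task_id = tasks.get_nowait()
        except queue.Empty:
            return
        with processed_lock:
            processed.append((name, task_id))
        tasks.task_done()


workers = [threading.Thread(target=agent, args=(f"agent-{i}",)) for i in range(4)]
for worker in workers:
    worker.start()
for worker in workers:
    worker.join()

task_ids = sorted(task_id for _, task_id in processed)
print(task_ids == list(range(20)))  # True
```

Which agent handles which task varies from run to run, but every task is handled exactly once, because the queue, not the agents, decides who gets what.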
Systems like Redis Streams, RabbitMQ, or even a basic Postgres advisory lock can handle this well. The queue becomes your serialization point, which takes the race out of the equation for that particular access pattern.

Event-driven architectures go further. Rather than agents reading from shared state, they react to events. Agent A completes its work and emits an event. Agent B listens for that event and picks up from there. This creates looser coupling and naturally reduces the overlap window where two agents might be modifying the same thing at once.

Idempotency Is Your Best Friend

Even with solid locking and queuing in place, things still go wrong. Networks hiccup, timeouts happen, and agents retry failed operations. If those retries are not idempotent, you will end up with duplicate writes, double-processed tasks, or compounding errors that are painful to debug after the fact.

Idempotency means that running the same operation multiple times produces the same result as running it once. For agents, that often means including a unique operation ID with every write. If the operation has already been applied, the system recognizes the ID and skips the duplicate. It’s a small design choice with a significant impact on reliability.

It’s worth building idempotency in from the start at the agent level. Retrofitting it later is painful. Agents that write to databases, update records, or trigger downstream workflows should all carry some form of deduplication logic, because it makes the whole system more resilient to the messiness of real-world execution.

Testing for Race Conditions Before They Test You

The hard part about race conditions is reproducing them. They are timing-dependent, which means they often only appear under load or in specific execution sequences that are difficult to reproduce in a controlled test environment.

One useful approach is stress testing with intentional concurrency.
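
A minimal sketch of such a test, using only the standard library: several threads act as agents incrementing a shared counter through optimistic locking (read a version tag, write only if it is unchanged, retry otherwise). `VersionedStore` and `agent_increment` are hypothetical names introduced for illustration, not part of any framework.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class VersionedStore:
    """Hypothetical in-memory store with a version tag for optimistic locking."""

    def __init__(self, value):
        self._value = value
        self._version = 0
        self._lock = threading.Lock()  # guards only the short read / compare-and-set steps

    def read(self):
        with self._lock:
            return self._value, self._version

    def write_if_unchanged(self, new_value, expected_version):
        """Apply the write only if nobody has written since expected_version."""
        with self._lock:
            if self._version != expected_version:
                return False
            self._value = new_value
            self._version += 1
            return True


def agent_increment(store, times):
    """Increment the stored value `times` times, retrying on version conflicts."""
    retries = 0
    for _ in range(times):
        while True:
            value, version = store.read()
            if store.write_if_unchanged(value + 1, version):
                break
            retries += 1
    return retries


store = VersionedStore(0)
n_agents, per_agent = 8, 500
with ThreadPoolExecutor(max_workers=n_agents) as pool:
    retry_counts = list(
        pool.map(lambda _: agent_increment(store, per_agent), range(n_agents))
    )

final_value, final_version = store.read()
print(final_value, sum(retry_counts))
```

The number of retries changes between runs, but the final value should always equal n_agents * per_agent. If `write_if_unchanged` skipped the version check, lost updates could push the total below that, which is the kind of failure this style of test is meant to surface.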
Spin up multiple agents against a shared resource simultaneously and observe what breaks. Tools like Locust, pytest-asyncio with concurrent tasks, or even a simple ThreadPoolExecutor can help simulate the kind of overlapping execution that exposes contention bugs in staging rather than production.

Property-based testing is underused in this context. If you can define invariants that should always hold regardless of execution order, you can run randomized tests that attempt to violate them. It won’t catch everything, but it will surface many of the
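
The invariant idea, combined with the operation IDs from the idempotency section, can be sketched without a dedicated testing library. The example below is a simplified, seeded randomized check rather than true property-based testing, and `IdempotentLedger` is a hypothetical store introduced for illustration.

```python
import random


class IdempotentLedger:
    """Hypothetical store that applies each operation ID at most once."""

    def __init__(self):
        self.total = 0
        self.seen_ids = set()

    def apply(self, op_id, amount):
        if op_id in self.seen_ids:   # duplicate delivery (e.g. a retry): skip it
            return False
        self.seen_ids.add(op_id)
        self.total += amount
        return True


operations = [(f"op-{i}", i + 1) for i in range(10)]       # (operation ID, amount)
expected_total = sum(amount for _, amount in operations)   # 55

rng = random.Random(0)
for trial in range(200):
    # Simulate retries and reordering: duplicate some deliveries, then shuffle.
    deliveries = operations + rng.choices(operations, k=5)
    rng.shuffle(deliveries)

    ledger = IdempotentLedger()
    for op_id, amount in deliveries:
        ledger.apply(op_id, amount)

    # Invariant: the result does not depend on order or on duplicate deliveries.
    assert ledger.total == expected_total

print("invariant held in all trials")
```

Removing the `seen_ids` check makes the assertion fail on the first trial, since duplicated deliveries would be counted twice. A property-based testing library would generate and shrink such delivery sequences automatically; this loop only shows the shape of the invariant.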
