
A Hands-On Guide to Testing Agents with RAGAs and G-Eval

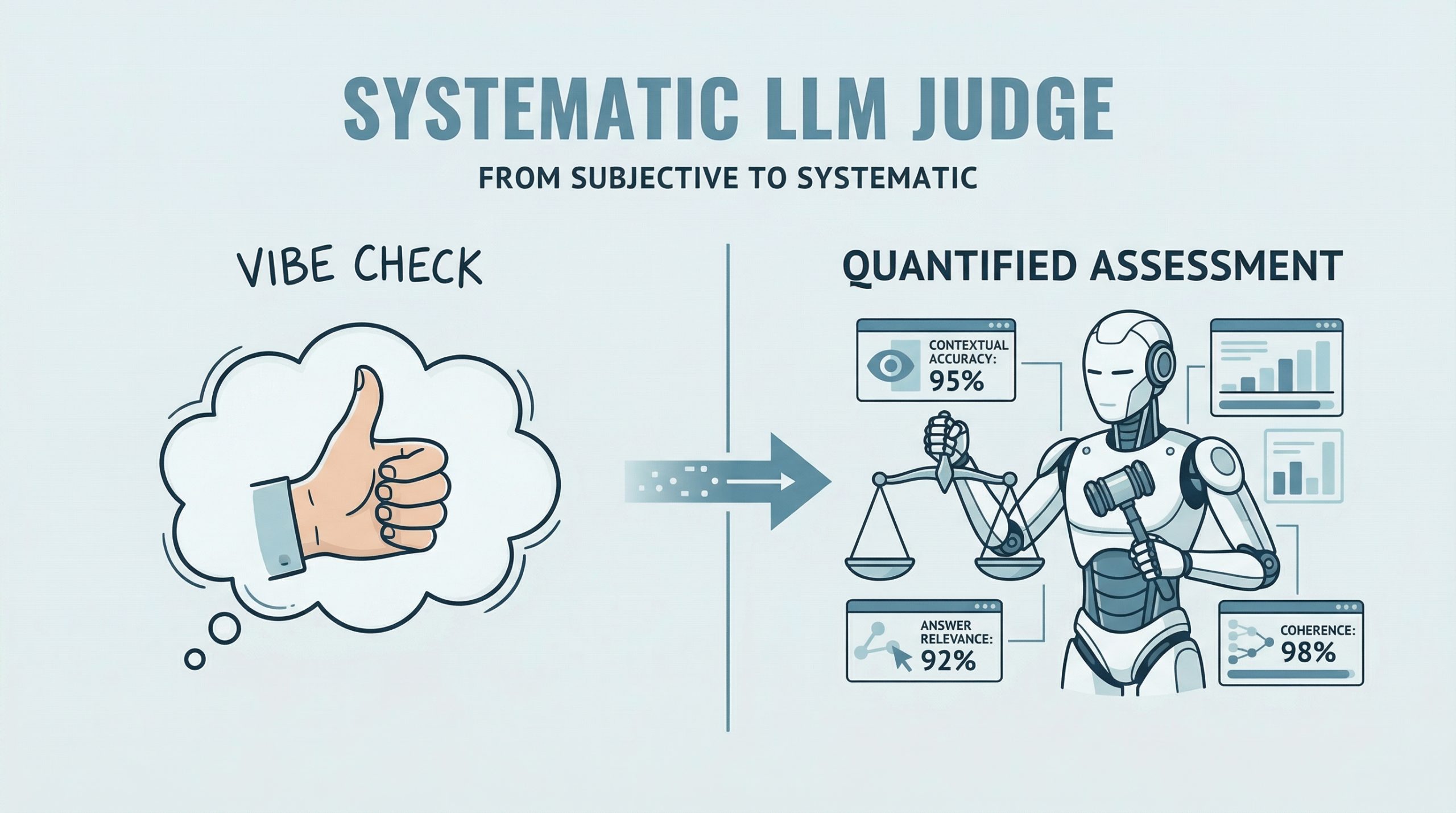

In this article, you will learn how to evaluate large language model applications using RAGAs and G-Eval-based frameworks in a practical, hands-on workflow.

Topics we will cover include:

- How to use RAGAs to measure faithfulness and answer relevancy in retrieval-augmented systems.
- How to structure evaluation datasets and integrate them into a testing pipeline.
- How to apply G-Eval via DeepEval to assess qualitative aspects like coherence.

Let’s get started.

Figure: A Hands-On Guide to Testing Agents with RAGAs and G-Eval (image by Editor)

Introduction

RAGAs (Retrieval-Augmented Generation Assessment) is an open-source evaluation framework that replaces subjective “vibe checks” with a systematic, LLM-driven “judge” to quantify the quality of RAG pipelines. It assesses a triad of desirable RAG properties: faithfulness of the answer to the retrieved context, answer relevance, and context relevance. RAGAs has also evolved to support not only RAG architectures but also agent-based applications, where methodologies like G-Eval help define custom, interpretable evaluation criteria.

This article is a hands-on guide to testing large language model and agent-based applications using both RAGAs and frameworks based on G-Eval. Concretely, we will use DeepEval, which integrates multiple evaluation metrics into a unified testing sandbox. If you are unfamiliar with evaluation frameworks like RAGAs, consider reviewing this related article first.

Step-by-Step Guide

This example is designed to work both in a standalone Python IDE and in a Google Colab notebook. You may need to pip install some libraries along the way to resolve ModuleNotFoundError errors, which occur when you import a module that is not installed in your environment.

We begin by defining a function that takes a user query as input and calls an LLM API (such as OpenAI’s) to generate a response. This is a simplified agent that encapsulates a basic input-response workflow.
```python
import openai

def simple_agent(query):
    # NOTE: this is a 'mock' agent loop.
    # In a real scenario, you would use a system prompt to define tool usage.
    prompt = f"You are a helpful assistant. Answer the user query: {query}"
    # Example using OpenAI (this can be swapped for Gemini or another provider).
    # The module-level client (openai>=1.0) reads the OPENAI_API_KEY environment variable.
    response = openai.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content
```

In a more realistic production setting, the agent defined above would include additional capabilities such as reasoning, planning, and tool execution. However, since the focus here is on evaluation, we intentionally keep the implementation simple.

Next, we introduce RAGAs. The following code demonstrates how to evaluate a question-answering scenario using the faithfulness metric, which measures how well the claims in the generated answer are supported by the provided context.

```python
from datasets import Dataset
from ragas import evaluate
from ragas.metrics import faithfulness

# Defining a simple testing dataset for a question-answering scenario
data = {
    "question": ["What is the capital of Japan?"],
    "answer": ["Tokyo is the capital."],
    "contexts": [["Japan is a country in Asia. Its capital is Tokyo."]],
}

# evaluate() expects a Hugging Face Dataset rather than a plain dict, so wrap it first
dataset = Dataset.from_dict(data)

# Running RAGAs evaluation
result = evaluate(dataset, metrics=[faithfulness])
print(result)
```

Note that you may need sufficient API quota (e.g., OpenAI or Gemini) to run these examples, which typically requires a paid account.

Below is a more elaborate example that adds a metric for answer relevancy and uses a structured dataset:

```python
test_cases = [
    {
        "question": "How do I reset my password?",
        "answer": "Go to settings and click 'forgot password'. An email will be sent.",
        "contexts": ["Users can reset passwords via the Settings > Security menu."],
        "ground_truth": "Navigate to Settings, then Security, and select Forgot Password.",
    }
]
```

Ensure that your API key is configured before proceeding.
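Because the faithfulness score is produced by an LLM judge, it can help to see the arithmetic behind it. RAGAs documents faithfulness as the number of claims in the answer that can be inferred from the retrieved context, divided by the total number of claims in the answer. The sketch below is a toy, offline stand-in under loud assumptions: the claims are supplied by hand instead of being extracted by an LLM, and `claim_supported` is a crude keyword-overlap heuristic standing in for the LLM’s verdict. None of these names (`claim_supported`, `faithfulness_score`, `STOPWORDS`) are part of the RAGAs API.

```python
import re
from typing import List, Set

# Hypothetical stopword list used only by this toy heuristic.
STOPWORDS = {"the", "a", "an", "is", "of", "in", "its", "to", "and"}


def _tokens(text: str) -> Set[str]:
    # Lowercased alphanumeric tokens; a crude stand-in for semantic understanding.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def claim_supported(claim: str, contexts: List[str]) -> bool:
    # Toy verdict (assumption): a claim counts as supported if every
    # non-stopword token in it appears somewhere in the retrieved contexts.
    # RAGAs asks an LLM judge for this verdict instead.
    context_tokens = set().union(*(_tokens(c) for c in contexts))
    return (_tokens(claim) - STOPWORDS) <= context_tokens


def faithfulness_score(claims: List[str], contexts: List[str]) -> float:
    # Faithfulness = supported claims / total claims in the answer.
    # Returning 0.0 for an answer with no claims is a convention chosen here.
    if not claims:
        return 0.0
    supported = sum(claim_supported(c, contexts) for c in claims)
    return supported / len(claims)


contexts = ["Japan is a country in Asia. Its capital is Tokyo."]
claims = ["Tokyo is the capital of Japan.", "Tokyo has 50 million residents."]
print(faithfulness_score(claims, contexts))  # → 0.5
```

The second claim is not backed by the context, so only one of the two claims counts and the score halves. That is the behavior the real metric is designed to capture: penalizing content the retriever never provided, regardless of whether it happens to be true.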
First, we demonstrate evaluation without wrapping the logic in an agent:

```python
import os
from ragas import evaluate
from ragas.metrics import faithfulness, answer_relevancy
from datasets import Dataset

# IMPORTANT: Replace "YOUR_API_KEY" with your actual API key
os.environ["OPENAI_API_KEY"] = "YOUR_API_KEY"

# Convert the list of test cases to a Hugging Face Dataset (required by RAGAs)
dataset = Dataset.from_list(test_cases)

# Run evaluation
ragas_results = evaluate(dataset, metrics=[faithfulness, answer_relevancy])
print(f"RAGAs Faithfulness Score: {ragas_results['faithfulness']}")
```

To simulate an agent-based workflow, we can encapsulate the evaluation logic in a reusable function:

```python
import os
from ragas import evaluate
from ragas.metrics import faithfulness, answer_relevancy
from datasets import Dataset

def evaluate_ragas_agent(test_cases, openai_api_key="YOUR_API_KEY"):
    """Simulates a simple AI agent that performs RAGAs evaluation."""
    os.environ["OPENAI_API_KEY"] = openai_api_key

    # Convert test cases into a Dataset object
    dataset = Dataset.from_list(test_cases)

    # Run evaluation
    ragas_results = evaluate(dataset, metrics=[faithfulness, answer_relevancy])
    return ragas_results
```
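One way to integrate these scores into a testing pipeline is to turn them into pass/fail checks, for example in a pytest suite that runs in CI. The sketch below assumes you have already reduced the RAGAs output to a plain dict of aggregate scores per metric; how to do that depends on your RAGAs version, since the shape of the result object has changed across releases. `failing_metrics`, `THRESHOLDS`, and the threshold values are hypothetical and not part of RAGAs.

```python
import math
from typing import Dict, List

# Hypothetical minimum scores; tune these to your own application.
THRESHOLDS: Dict[str, float] = {"faithfulness": 0.8, "answer_relevancy": 0.7}


def failing_metrics(scores: Dict[str, float], thresholds: Dict[str, float]) -> List[str]:
    """Return the names of metrics that are missing, NaN, or below their threshold."""
    failures = []
    for name, minimum in thresholds.items():
        score = scores.get(name)
        # A NaN score (e.g., when a judge call failed) would silently pass a
        # plain `<` comparison, so treat it as a failure explicitly.
        if score is None or math.isnan(score) or score < minimum:
            failures.append(name)
    return failures


def test_agent_meets_quality_bar():
    # Placeholder scores for illustration only; in practice, compute them
    # from your evaluation run (e.g., the results of evaluate_ragas_agent).
    scores = {"faithfulness": 0.92, "answer_relevancy": 0.81}
    failures = failing_metrics(scores, THRESHOLDS)
    assert not failures, f"Metrics below threshold: {failures}"
```

Reporting every failing metric, rather than stopping at the first, makes a red CI run easier to diagnose.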


The Roadmap to Mastering Agentic AI Design Patterns

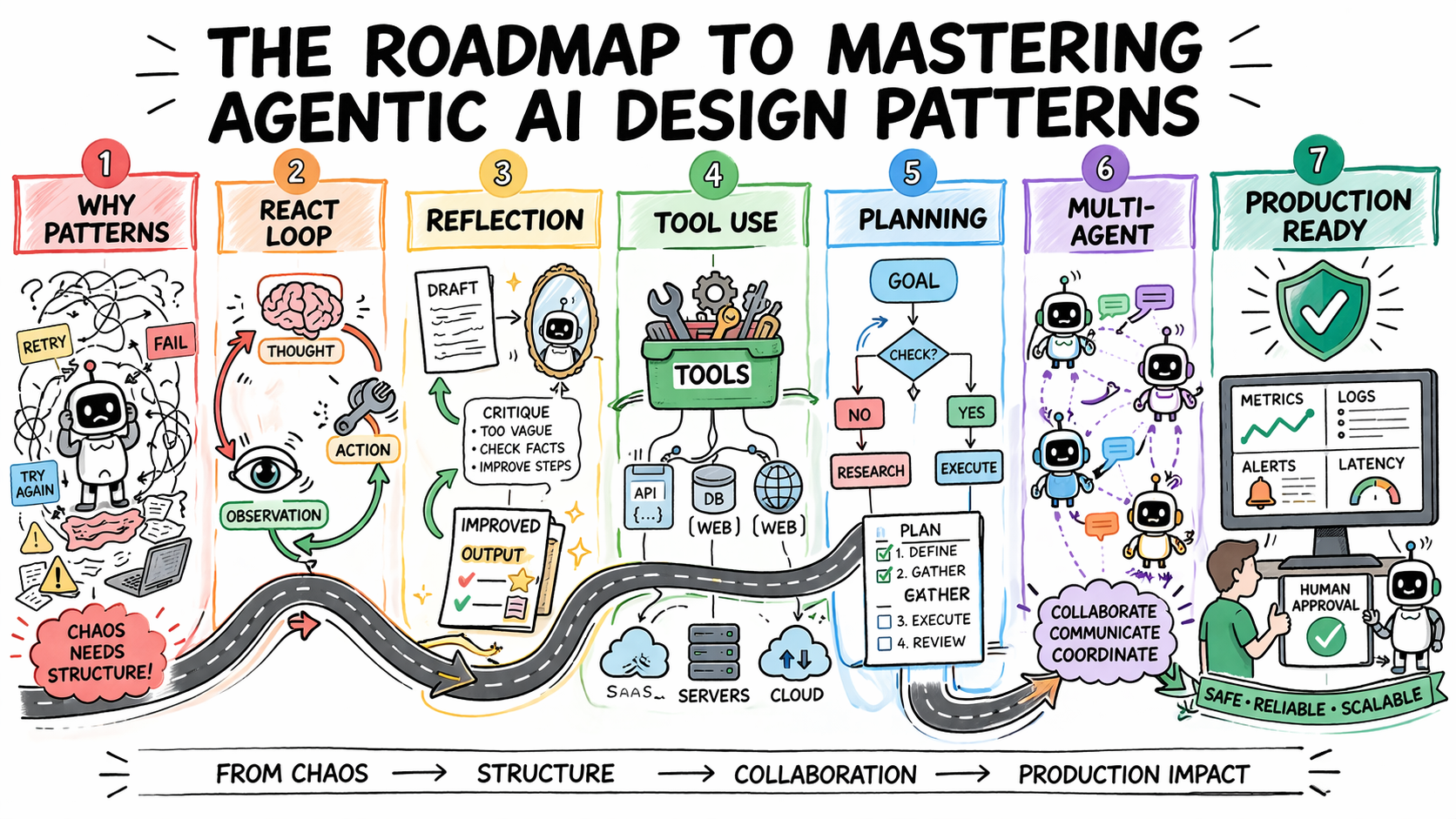

In this article, you will learn how to systematically select and apply agentic AI design patterns to build reliable, scalable agent systems.

Topics we will cover include:

- Why design patterns are essential for predictable agent behavior
- Core agentic patterns such as ReAct, Reflection, Planning, and Tool Use
- How to evaluate, scale, and safely deploy agentic systems in production

Let’s get started.

Figure: The Roadmap to Mastering Agentic AI Design Patterns (image by Author)

Introduction

Most agentic AI systems are built ad hoc, decision by decision, without any governing framework for how the agent should reason, act, recover from errors, or hand off work to other agents. Without structure, agent behavior is hard to predict, harder to debug, and nearly impossible to improve systematically. The problem compounds in multi-step workflows, where a bad decision early in a run affects every step that follows.

Agentic design patterns are reusable approaches to recurring problems in agentic system design. They establish how an agent reasons before acting, how it evaluates its own outputs, how it selects and calls tools, how multiple agents divide responsibility, and when a human needs to be in the loop. Choosing the right pattern for a given task is what keeps agent behavior predictable, debuggable, and composable as requirements grow.

This article offers a practical roadmap to agentic AI design patterns. It explains why pattern selection is an architectural decision and then works through the core agentic design patterns used in production today. For each, it covers when the pattern fits, what trade-offs it carries, and how patterns layer together in real systems.

Step 1: Understanding Why Design Patterns Are Necessary

Before you study any specific pattern, you need to reframe what you’re actually trying to solve. The instinct for many developers is to treat agent failures as prompting failures.
If the agent did the wrong thing, the assumed fix is a better system prompt. Sometimes that is true. More often, though, the failure is architectural. An agent that loops endlessly usually fails because no explicit stopping condition was designed into the loop. An agent that calls tools incorrectly typically lacks a clear contract for when to invoke which tool. An agent that produces inconsistent outputs given identical inputs is operating without a structured decision framework.

Design patterns exist to solve exactly these problems. They are repeatable architectural templates that define how an agent’s loop should behave: how it decides what to do next, when to stop, how to recover from errors, and how to interact reliably with external systems. Without them, agent behavior becomes almost impossible to debug or scale.

There is also a pattern-selection problem that trips up teams early. The temptation is to reach for the most capable, most sophisticated pattern available: multi-agent systems, complex orchestration, dynamic planning. But premature complexity in agentic systems is costly. More model calls mean higher latency and token costs. More agents mean more failure surfaces. More orchestration means more coordination bugs. The expensive mistake is jumping to complex patterns before you have hit clear limitations with simpler ones.

The practical implication: treat pattern selection the way you would treat any production architecture decision. Start with the problem, not the pattern. Define what the agent needs to do, what can go wrong, and what “working correctly” looks like. Then pick the simplest pattern that handles those requirements.

Further reading: AI agent design patterns | Google Cloud and Agentic AI Design Patterns Introduction and walkthrough | Amazon Web Services.
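To make the first two failure modes concrete, here is a minimal sketch of an agent loop with an explicit stopping condition, a hard iteration cap, and a rejection path for any tool outside the agent’s declared contract. It is plain Python under stated assumptions: `policy` is a hypothetical, scripted stand-in for the model call that returns the next thought and action, and `tools` is a simple name-to-function mapping. Neither comes from a specific agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Step:
    thought: str   # the agent's reasoning for this step
    action: str    # a tool name, or "finish" to stop
    argument: str  # tool input, or the final answer when action == "finish"


@dataclass
class RunResult:
    answer: Optional[str]
    stop_reason: str
    trace: List[Step] = field(default_factory=list)


# Hypothetical policy signature: (task, observations so far) -> next Step.
Policy = Callable[[str, List[str]], Step]


def run_agent(policy: Policy, tools: Dict[str, Callable[[str], str]],
              task: str, max_steps: int = 5) -> RunResult:
    observations: List[str] = []
    trace: List[Step] = []
    for _ in range(max_steps):                    # hard iteration cap
        step = policy(task, observations)         # thought + chosen action
        trace.append(step)
        if step.action == "finish":               # explicit stopping condition
            return RunResult(step.argument, "finished", trace)
        tool = tools.get(step.action)
        if tool is None:                          # action outside the tool contract
            return RunResult(None, f"unknown tool: {step.action}", trace)
        observations.append(tool(step.argument))  # observation fed back to the policy
    return RunResult(None, "max_steps reached", trace)


# --- Usage with hypothetical scripted policies (no model involved) ---
def scripted_policy(task: str, observations: List[str]) -> Step:
    if not observations:
        return Step("I need to look up the capital.", "lookup", "Japan")
    return Step("I have what I need.", "finish", observations[-1])


def looping_policy(task: str, observations: List[str]) -> Step:
    # Never decides to finish, so only the iteration cap can stop it.
    return Step("Let me check again.", "lookup", "Japan")


tools = {"lookup": lambda country: {"Japan": "Tokyo"}.get(country, "unknown")}

print(run_agent(scripted_policy, tools, "What is the capital of Japan?").answer)  # → Tokyo
print(run_agent(looping_policy, tools, "Same task", max_steps=3).stop_reason)  # → max_steps reached
```

Returning a `stop_reason` alongside the answer is a deliberate design choice: a run that hits the cap or requests an unknown tool becomes an explicit, testable outcome instead of an endless loop or a silent failure.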
Step 2: Learning the ReAct Pattern as Your Default Starting Point

ReAct (Reasoning and Acting) is the most foundational agentic design pattern and the right default for most complex, unpredictable tasks. It combines chain-of-thought reasoning with external tool use in a continuous feedback loop. The structure alternates between three phases:

- Thought: the agent reasons about what to do next
- Action: the agent invokes a tool, calls an API, or runs code
- Observation: the agent processes the result and updates its plan

This repeats until the task is complete or a stopping condition is reached.

Figure: ReAct pattern (image by Author)

What makes the pattern effective is that it externalizes reasoning. Every decision is visible, so when the agent fails, you can see exactly where the logic broke down rather than debugging a black-box output. It also guards against premature conclusions by grounding each reasoning step in an observed result before proceeding, which reduces the hallucinations that occur when models jump to answers without real-world feedback.

The trade-offs are real. Each loop iteration requires an additional model call, increasing latency and cost. Incorrect tool output propagates into subsequent reasoning steps. Non-deterministic model behavior means identical inputs can produce different reasoning paths, which creates consistency problems in regulated environments. Without an explicit iteration cap, the loop can run indefinitely and costs can compound quickly.

Use ReAct when the solution path is not predetermined: adaptive problem-solving, multi-source research, and customer support workflows with variable complexity. Avoid it when speed is the priority or when inputs are well-defined enough that a fixed workflow would be faster and cheaper.

Further reading: ReAct: Synergizing Reasoning and Acting in Language Models and What Is a ReAct Agent? | IBM

Step 3: Adding Reflection to Improve Output Quality

Reflection gives an agent the ability to evaluate and revise its own outputs before they reach the user. The structure is a generation-critique-refinement cycle: the agent produces an initial output, assesses it against defined quality criteria, and uses that assessment as the basis for revision. The cycle runs for a set number of iterations or until the output meets a defined threshold.

Figure: Reflection pattern (image by Author)

The pattern is particularly effective when critique is specialized. An agent reviewing code can focus on bugs, edge cases, or security issues. One reviewing a contract can check for missing clauses or logical inconsistencies. Connecting the critique step to external verification tools (a linter, a compiler, or a schema validator) compounds the gains further, because the agent receives deterministic feedback rather than relying solely
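The generation-critique-refinement cycle, paired with a deterministic critic of the kind described above, can be sketched in plain Python. Assumptions, all hypothetical: the “schema” is a requirement that the output be a JSON object with `name` and `email` fields, checked by `validate_json_record`; `make_scripted_generator` stands in for an LLM by replaying canned drafts instead of actually conditioning on the feedback; and the loop stops when the critic reports no problems (the threshold) or after `max_iterations` attempts.

```python
import json
from typing import Callable, Iterable, List, Tuple

Critique = List[str]  # list of problems; an empty list means the draft passes


def validate_json_record(text: str) -> Critique:
    """Deterministic critic: check that the draft is a JSON object with required fields."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be a JSON object"]
    return [f"missing field: {key}" for key in ("name", "email") if key not in data]


def reflect(generate: Callable[[Critique], str],
            critique: Callable[[str], Critique],
            max_iterations: int = 3) -> Tuple[str, bool, List[Tuple[str, Critique]]]:
    feedback: Critique = []
    history: List[Tuple[str, Critique]] = []
    draft = ""
    for _ in range(max_iterations):
        draft = generate(feedback)      # generate, or refine given the prior feedback
        feedback = critique(draft)      # critique against the defined criteria
        history.append((draft, feedback))
        if not feedback:                # threshold met: no remaining problems
            return draft, True, history
    return draft, False, history        # budget exhausted; the caller decides what to do


def make_scripted_generator(drafts: Iterable[str]) -> Callable[[Critique], str]:
    # Hypothetical stand-in for an LLM: returns the next canned draft and ignores
    # the feedback, which a real generator would include in its prompt.
    remaining = iter(drafts)
    return lambda feedback: next(remaining)


generator = make_scripted_generator([
    '{"name": "Ada"',                               # malformed JSON
    '{"name": "Ada"}',                              # missing "email"
    '{"name": "Ada", "email": "ada@example.com"}',  # passes
])
final, passed, history = reflect(generator, validate_json_record)
print(passed, len(history))  # → True 3
```

Returning the full `history` alongside the final draft keeps each critique visible, which supports the same debuggability argument made for ReAct above.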
