In this article, you will learn three expert-level feature engineering strategies (counterfactual features, domain-constrained representations, and causal-invariant features) for building robust and explainable models in high-stakes settings.

Topics we will cover include:

- How to generate counterfactual sensitivity features for decision-boundary awareness.
- How to train a constrained autoencoder that encodes a monotonic domain rule into its representation.
- How to discover causal-invariant features that remain stable across environments.

Without further delay, let’s begin.

Expert-Level Feature Engineering: Advanced Techniques for High-Stakes Models
Image by Editor

Introduction

Building machine learning models in high-stakes contexts like finance, healthcare, and critical infrastructure often demands robustness, explainability, and other domain-specific constraints. In these situations, it can be worth going beyond classic feature engineering techniques and adopting advanced, expert-level strategies tailored to such settings.

This article presents three such techniques, explains how they work, and highlights their practical impact.

Counterfactual Feature Generation

Counterfactual feature generation comprises techniques that quantify how sensitive predictions are to decision boundaries by constructing hypothetical data points from minimal changes to original features. The idea is simple: ask “how much must an original feature value change for the model’s prediction to cross a critical threshold?” These derived features improve interpretability (for example, “how close is a patient to a diagnosis?” or “what is the minimum income increase required for loan approval?”), and they encode sensitivity directly in feature space, which can improve robustness.

The Python example below creates a counterfactual sensitivity feature, cf_delta_feat0, measuring how much input feature feat_0 must change (holding all others fixed) to cross the classifier’s decision boundary.
We’ll use NumPy, pandas, and scikit-learn.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LogisticRegression
from sklearn.datasets import make_classification
from sklearn.preprocessing import StandardScaler

# Toy data and baseline linear classifier
X, y = make_classification(n_samples=500, n_features=5, random_state=42)
df = pd.DataFrame(X, columns=[f"feat_{i}" for i in range(X.shape[1])])
df["target"] = y

scaler = StandardScaler()
X_scaled = scaler.fit_transform(df.drop(columns="target"))
clf = LogisticRegression().fit(X_scaled, y)

# Decision boundary parameters
weights = clf.coef_[0]
bias = clf.intercept_[0]

def counterfactual_delta_feat0(x, eps=1e-9):
    """
    Minimal change to feature 0, holding other features fixed,
    required to move the linear logit score to the decision boundary (0).
    For a linear model: delta = -score / w0
    """
    score = np.dot(weights, x) + bias
    w0 = weights[0]
    # eps guards against division by zero when w0 is exactly 0
    return -score / (w0 + eps)

# The delta is expressed in standardized units of feat_0, since x comes from X_scaled
df["cf_delta_feat0"] = [counterfactual_delta_feat0(x) for x in X_scaled]
df.head()
```

Domain-Constrained Representation Learning (Constrained Autoencoders)

Autoencoders are widely used for unsupervised representation learning. We can adapt them for domain-constrained representation learning: learn a compressed representation (latent features) while enforcing explicit domain rules (e.g., safety margins or monotonicity laws). Unlike unconstrained latent factors, domain-constrained representations are trained to respect physical, ethical, or regulatory constraints.

Below, we train an autoencoder that learns three latent features and reconstructs inputs while softly enforcing a monotonic rule: higher values of feat_0 should not decrease the likelihood of the positive label. We add a simple supervised predictor head and penalize violations via a finite-difference monotonicity loss. The implementation uses PyTorch.
```python
import torch
import torch.nn as nn
import torch.optim as optim
from sklearn.model_selection import train_test_split

# Supervised split using the earlier DataFrame `df`
# (if the previous snippet was run, `df` also contains cf_delta_feat0)
X_train, X_val, y_train, y_val = train_test_split(
    df.drop(columns="target").values, df["target"].values,
    test_size=0.2, random_state=42
)

X_train = torch.tensor(X_train, dtype=torch.float32)
y_train = torch.tensor(y_train, dtype=torch.float32).unsqueeze(1)

torch.manual_seed(42)

class ConstrainedAutoencoder(nn.Module):
    def __init__(self, input_dim, latent_dim=3):
        super().__init__()
        self.encoder = nn.Sequential(
            nn.Linear(input_dim, 8), nn.ReLU(),
            nn.Linear(8, latent_dim)
        )
        self.decoder = nn.Sequential(
            nn.Linear(latent_dim, 8), nn.ReLU(),
            nn.Linear(8, input_dim)
        )
        # Small predictor head on top of the latent code (logit output)
        self.predictor = nn.Linear(latent_dim, 1)

    def forward(self, x):
        z = self.encoder(x)
        recon = self.decoder(z)
        logit = self.predictor(z)
        return recon, z, logit

model = ConstrainedAutoencoder(input_dim=X_train.shape[1])
optimizer = optim.Adam(model.parameters(), lr=1e-3)
recon_loss_fn = nn.MSELoss()
pred_loss_fn = nn.BCEWithLogitsLoss()

epsilon = 1e-2  # finite-difference step for monotonicity on feat_0

for epoch in range(50):
    model.train()
    optimizer.zero_grad()

    recon, z, logit = model(X_train)

    # Reconstruction + supervised prediction loss
    loss_recon = recon_loss_fn(recon, X_train)
    loss_pred = pred_loss_fn(logit, y_train)

    # Monotonicity penalty: y_logit(x + e*e0) - y_logit(x) should be >= 0
    X_plus = X_train.clone()
    X_plus[:, 0] = X_plus[:, 0] + epsilon
    _, _, logit_plus = model(X_plus)
    mono_violation = torch.relu(logit - logit_plus)  # negative slope if > 0
    loss_mono = mono_violation.mean()

    loss = loss_recon + 0.5 * loss_pred + 0.1 * loss_mono
    loss.backward()
    optimizer.step()

# Latent features now reflect the monotonic constraint
with torch.no_grad():
    _, latent_feats, _ = model(X_train)

latent_feats[:5]
```
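To make the finite-difference monotonicity penalty easier to inspect on its own, here is a minimal sketch in plain Python that needs no PyTorch. The helper name monotonicity_penalty and the two toy scoring functions are assumptions made for illustration; they are not part of the training code above, only a small stand-in for the same idea: bump one feature by a small step and penalize any drop in the output.

```python
from typing import Callable, Sequence

def monotonicity_penalty(
    f: Callable[[Sequence[float]], float],
    rows: Sequence[Sequence[float]],
    index: int = 0,
    step: float = 1e-2,
) -> float:
    """Mean hinge penalty on decreases of f when feature `index` grows by `step`."""
    total = 0.0
    for row in rows:
        bumped = list(row)
        bumped[index] += step
        # Positive only when the output drops after increasing the feature
        total += max(0.0, f(row) - f(bumped))
    return total / len(rows)

# Hypothetical scoring functions, used only for illustration
def increasing(x: Sequence[float]) -> float:
    return 2.0 * x[0] - x[1]   # rises with x[0], so no violation

def decreasing(x: Sequence[float]) -> float:
    return -3.0 * x[0] + x[1]  # falls with x[0], so every row violates

rows = [[0.0, 1.0], [1.0, 0.5], [-2.0, 3.0]]
penalty_ok = monotonicity_penalty(increasing, rows)
penalty_bad = monotonicity_penalty(decreasing, rows)
print(penalty_ok, penalty_bad)
```

A function that never decreases in the chosen feature gets a penalty of zero, while a function with slope -3 gets roughly 3 times the step size. In a training loop, this quantity would be added to the loss with a small weight, so the constraint is encouraged rather than strictly guaranteed.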

Apple Launches Digital ID Feature For Secure ID Use In Apple Wallet

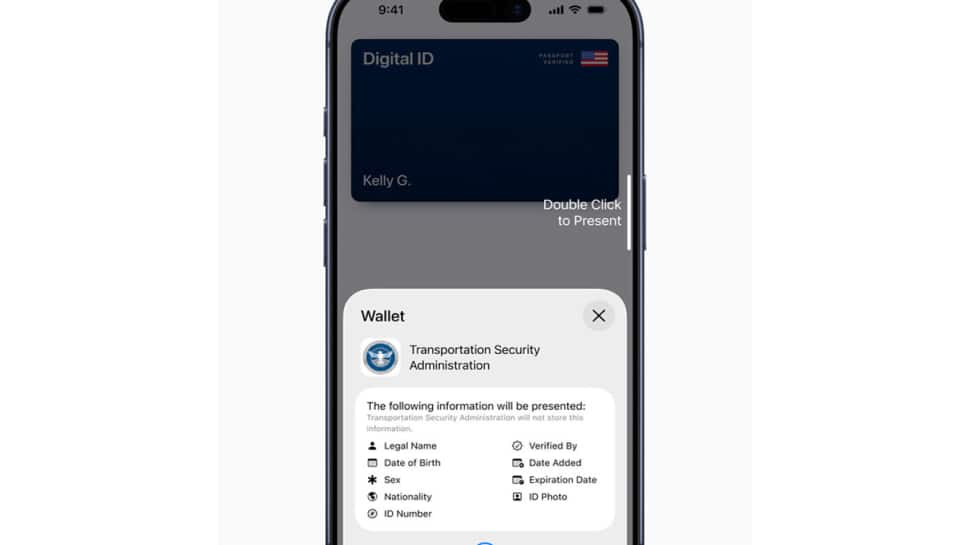

New Delhi: Apple has introduced Digital ID, a new feature that allows users to create and present an ID in Apple Wallet using information from their U.S. passport. The company said the service, launched in beta, will first be accepted at Transportation Security Administration (TSA) checkpoints across more than 250 airports in the United States for domestic travel.

According to an Apple press release, the Digital ID aims to provide users with a simple way to securely carry their identification on their iPhone or Apple Watch. It is designed for those who may not have a REAL ID-compliant driver’s license or state ID. However, Apple clarified that the new feature does not replace a physical passport and cannot be used for international travel or border crossings.

“With the launch of Digital ID, we’re excited to expand the ways users can store and present their identity, all with the security and privacy built into iPhone and Apple Watch,” said Jennifer Bailey, Apple’s Vice President of Apple Pay and Apple Wallet. She added that users have embraced the convenience of adding driver’s licenses or state IDs since 2022, and Digital ID now extends that option to more people.

To create a Digital ID, users can open the Wallet app, tap the “Add” button, and select “Driver’s License or ID Cards.” From there, they choose “Digital ID” and follow a verification process that includes scanning the photo page of their passport and reading the chip embedded in it for authenticity. Users must also take a selfie and complete certain facial movements to confirm their identity. Once verified, the Digital ID appears in Wallet.

Presenting a Digital ID is also straightforward. Users double-click the side or Home button, select Digital ID, and hold their iPhone or Apple Watch near an identity reader. They can review the information being requested and authenticate using Face ID or Touch ID.
Apple said Digital ID uses built-in security features of iPhone and Apple Watch to guard against theft and tampering. The data is encrypted and stored only on the user’s device. Apple itself cannot see when or where the ID is used, or what data is shared. Users also approve every information request through biometric authentication before sharing. The company added that more use cases will be introduced, allowing people to present their Digital ID at select businesses and organizations for identity or age verification both in person and online. Currently, users can add driver’s licenses or state IDs to Apple Wallet in 12 U.S. states and Puerto Rico, with recent expansions in Montana, North Dakota, and West Virginia, and international use beginning in Japan with the My Number Card on iPhone.

Building ReAct Agents with LangGraph: A Beginner’s Guide

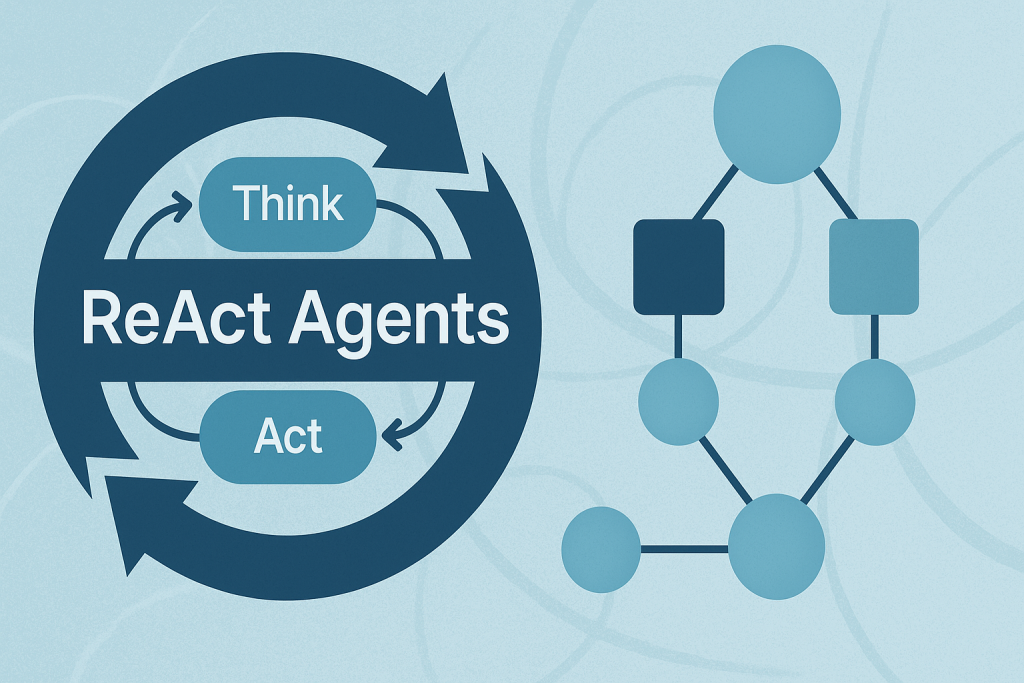

In this article, you will learn how the ReAct (Reasoning + Acting) pattern works and how to implement it with LangGraph, first with a simple, hardcoded loop and then with an LLM-driven agent.

Topics we will cover include:

- The ReAct cycle (Reason → Act → Observe) and why it’s useful for agents.
- How to model agent workflows as graphs with LangGraph.
- Building a hardcoded ReAct loop, then upgrading it to an LLM-powered version.

Let’s explore these techniques.

Image by Author

What is the ReAct Pattern?

ReAct (Reasoning + Acting) is a common pattern for building AI agents that think through problems and take actions to solve them. The pattern follows a simple cycle:

- Reasoning: The agent thinks about what it needs to do next.
- Acting: The agent takes an action (like searching for information).
- Observing: The agent examines the results of its action.

This cycle repeats until the agent has gathered enough information to answer the user’s question.

Why LangGraph?

LangGraph is a framework built on top of LangChain that lets you define agent workflows as graphs. A graph (in this context) is a data structure consisting of nodes connected by edges. Each node represents a step in your agent’s process, and edges define how information flows between steps. This structure allows for complex flows like loops and conditional branching. For example, your agent can cycle between reasoning and action nodes until it gathers enough information. Expressing the workflow this way keeps complex agent behavior easier to understand and maintain.

Tutorial Structure

We’ll build two versions of a ReAct agent:

- Part 1: A simple hardcoded agent to understand the mechanics.
- Part 2: An LLM-powered agent that makes dynamic decisions.

Part 1: Understanding ReAct with a Simple Example

First, we’ll create a basic ReAct agent with hardcoded logic.
This helps you understand how the ReAct loop works without the complexity of LLM integration.

Setting Up the State

Every LangGraph agent needs a state object that flows through the graph nodes. This state serves as shared memory that accumulates information. Nodes read the current state and add their contributions before passing it along.

```python
from langgraph.graph import StateGraph, END
from typing import TypedDict, Annotated
import operator

# Define the state that flows through our graph
class AgentState(TypedDict):
    messages: Annotated[list, operator.add]
    next_action: str
    iterations: int
```

Key Components:

- StateGraph: The main class from LangGraph that defines our agent’s workflow.
- AgentState: A TypedDict that defines what information our agent tracks.
- messages: Uses operator.add to accumulate all thoughts, actions, and observations.
- next_action: Tells the graph which node to execute next.
- iterations: Counts how many reasoning cycles we’ve completed.

Creating a Mock Tool

In a real ReAct agent, tools are functions that perform actions in the world, like searching the web, querying databases, or calling APIs. For this example, we’ll use a simple mock search tool.
```python
# Simple mock search tool
def search_tool(query: str) -> str:
    # Simulate a search - in real usage, this would call an API
    responses = {
        "weather tokyo": "Tokyo weather: 18°C, partly cloudy",
        "population japan": "Japan population: approximately 125 million",
    }
    return responses.get(query.lower(), f"No results found for: {query}")
```

This function simulates a search engine with hardcoded responses. In production, this would call a real search API like Google, Bing, or a custom knowledge base.

The Reasoning Node: The “Brain” of ReAct

This is where the agent thinks about what to do next. In this simple version, we’re using hardcoded logic, but you’ll see how this becomes dynamic with an LLM in Part 2.
```python
# Reasoning node - decides what to do
def reasoning_node(state: AgentState):
    messages = state["messages"]
    iterations = state.get("iterations", 0)

    # Simple logic: first search weather, then population, then finish
    if iterations == 0:
        return {"messages": ["Thought: I need to check Tokyo weather"],
                "next_action": "action", "iterations": iterations + 1}
    elif iterations == 1:
        return {"messages": ["Thought: Now I need Japan's population"],
                "next_action": "action", "iterations": iterations + 1}
    else:
        return {"messages": ["Thought: I have enough info to answer"],
                "next_action": "end", "iterations": iterations + 1}
```

How it works:

The reasoning node examines the current state and decides:

- Should we gather more information? (return “action”)
- Do we have enough to answer? (return “end”)

Notice how each return value updates the state:

- Adds a “Thought” message explaining the decision.
- Sets next_action to route to the next node.
- Increments the iteration counter.

This mimics how a human would approach a research task: “First I need weather info, then population data, then I can answer.”

The Action Node: Taking Action

Once the reasoning node decides to act, this node executes the chosen action and observes the results.
```python
# Action node - executes the tool
def action_node(state: AgentState):
    iterations = state["iterations"]

    # Choose query based on iteration
    query = "weather tokyo" if iterations == 1 else "population japan"
    result = search_tool(query)

    return {"messages": [f"Action: Searched for '{query}'",
                         f"Observation: {result}"],
            "next_action": "reasoning"}

# Router - decides next step
def route(state: AgentState):
```
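To see the routing idea in isolation, here is a minimal plain-Python sketch of the Reason → Act → Observe cycle. It does not use LangGraph and is not the article’s router: the run_react_loop helper, the merge function, and the max_steps guard are assumptions made for illustration, while the node logic mirrors the hardcoded example above.

```python
from typing import Callable, Dict

def search_tool(query: str) -> str:
    # Mock search with hardcoded responses, as in the example above
    responses = {
        "weather tokyo": "Tokyo weather: 18°C, partly cloudy",
        "population japan": "Japan population: approximately 125 million",
    }
    return responses.get(query.lower(), f"No results found for: {query}")

def reasoning_node(state: Dict) -> Dict:
    i = state["iterations"]
    if i == 0:
        thought, nxt = "Thought: I need to check Tokyo weather", "action"
    elif i == 1:
        thought, nxt = "Thought: Now I need Japan's population", "action"
    else:
        thought, nxt = "Thought: I have enough info to answer", "end"
    return {"messages": [thought], "next_action": nxt, "iterations": i + 1}

def action_node(state: Dict) -> Dict:
    query = "weather tokyo" if state["iterations"] == 1 else "population japan"
    result = search_tool(query)
    return {"messages": [f"Action: Searched for '{query}'",
                         f"Observation: {result}"],
            "next_action": "reasoning"}

def merge(state: Dict, update: Dict) -> Dict:
    # Append to "messages" (similar in spirit to an operator.add reducer);
    # every other key is simply overwritten by the update
    merged = dict(state)
    for key, value in update.items():
        merged[key] = state[key] + value if key == "messages" else value
    return merged

def run_react_loop(max_steps: int = 10) -> Dict:
    # Hypothetical driver: follows next_action until it says "end"
    state = {"messages": [], "next_action": "reasoning", "iterations": 0}
    nodes: Dict[str, Callable[[Dict], Dict]] = {
        "reasoning": reasoning_node,
        "action": action_node,
    }
    for _ in range(max_steps):
        if state["next_action"] == "end":
            break
        state = merge(state, nodes[state["next_action"]](state))
    return state

final_state = run_react_loop()
for message in final_state["messages"]:
    print(message)
```

The loop plays the role a conditional edge would play in a graph: after each node runs, the value of next_action selects the next node, and the cycle stops once the reasoning step sets it to “end”. The max_steps cap is a simple safeguard against a loop that never terminates.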

OnePlus 15 Launched In India With Snapdragon 8 Elite Gen 5 Chipset; Check Display, Camera, Battery, Price And Other Features

OnePlus 15 India Launch: Chinese smartphone manufacturer OnePlus has launched the OnePlus 15 smartphone in India today. It is the first smartphone in India to feature Qualcomm’s newest and fastest chipset. The smartphone comes loaded with several AI-powered features, including Plus Mind, Google’s Gemini AI, AI Recorder, AI Portrait Glow, AI Scan, and AI PlayLab.

The newly launched smartphone comes preloaded with OxygenOS 16 based on Android 16, featuring a redesigned “Liquid Glass”-inspired interface, enhanced customization options, and new AI capabilities. Compared to the OnePlus 13, the OnePlus 15 adopts a flat display and a revamped squarish camera island. It also introduces the new Plus button first seen on the OnePlus 13s.

OnePlus 15 India Launch: Specifications

The OnePlus 15 features a 6.78-inch LTPO AMOLED display with 1.5K resolution and a 165Hz refresh rate, delivering up to 1,800 nits of peak brightness. The screen also includes Eye Comfort for Gaming, Motion Cues, Eye Comfort Reminders, and Reduce White Point, all within ultra-thin 1.15mm bezels.

It runs on OxygenOS 16, which brings the AI Call Assistant for real-time language translation and Google Gemini integration that lets the AI chatbot access your screenshots and notes in OnePlus’ Mind Space app. The smartphone is powered by a massive 7,300mAh silicon-carbon battery with support for 120W SuperVOOC wired and 50W AirVOOC wireless fast charging.

On the photography front, the smartphone comes with a 50-megapixel triple rear camera capable of 8K video recording. For selfies and video chats, there is a 32-megapixel shooter at the front. On the connectivity front, the smartphone supports 5G, Wi-Fi 7, Bluetooth 6.0, USB 3.2 Gen 1 Type-C, and satellite navigation via GPS, GLONASS, Galileo, QZSS, and NavIC.
On the security front, the smartphone comes with an in-display ultrasonic fingerprint scanner, and the phone carries an IP66+IP68+IP69+IP69K rating for dust and water resistance. To manage thermals, it features a 5,731 sq mm 3D vapor chamber as part of its 360 Cryo-Velocity Cooling system.

OnePlus 15 India Launch: Price, Availability And Launch Offers

The base model with 12GB RAM and 256GB storage is priced at Rs 72,999, while the 16GB RAM and 512GB storage variant costs Rs 75,999. The smartphone will go on sale in India from November 13 at 8 PM IST.

For a limited time, buyers will get a free pair of OnePlus Nord Buds 3, a lifetime display warranty, and an upgrade bonus of up to Rs 4,000. In addition, OnePlus 15 customers will receive three free months of Google AI Pro with extra storage and features. HDFC Bank cardholders can also get a Rs 4,000 discount on both variants, bringing the prices down to Rs 68,999 and Rs 71,999, respectively.

Datasets for Training a Language Model

A language model is a mathematical model that describes a human language as a probability distribution over its vocabulary. To train a deep learning network to model a language, you need to identify the vocabulary and learn its probability distribution. You can’t create the model from nothing. You need a dataset for your model to learn from.

In this article, you’ll learn about datasets used to train language models and how to source common datasets from public repositories.

Let’s get started.

Photo by Dan V. Some rights reserved.

A Good Dataset for Training a Language Model

A good language model should learn correct language usage, free of biases and errors. Unlike programming languages, human languages lack formal grammar and syntax. They evolve continuously, making it impossible to catalog all language variations. Therefore, the model should be trained from a dataset instead of crafted from rules.

Setting up a dataset for language modeling is challenging. You need a large, diverse dataset that represents the language’s nuances. At the same time, it must be high quality, presenting correct language usage. Ideally, the dataset should be manually edited and cleaned to remove noise like typos, grammatical errors, and non-language content such as symbols or HTML tags.

Creating such a dataset from scratch is costly, but several high-quality datasets are freely available. Common datasets include:

- Common Crawl. A massive, continuously updated dataset of over 9.5 petabytes with diverse content. It’s used by leading models including GPT-3, Llama, and T5. However, since it’s sourced from the web, it contains low-quality and duplicate content, along with biases and offensive material. Rigorous cleaning and filtering are required to make it useful.
- C4 (Colossal Clean Crawled Corpus). A 750GB dataset scraped from the web. Unlike Common Crawl, this dataset is pre-cleaned and filtered, making it easier to use. Still, expect potential biases and errors. The T5 model was trained on this dataset.
- Wikipedia. English content alone is around 19GB. It is massive yet manageable. It’s well-curated, structured, and edited to Wikipedia standards. While it covers a broad range of general knowledge with high factual accuracy, its encyclopedic style and tone are very specific. Training on this dataset alone may cause models to overfit to this style.
- WikiText. A dataset derived from verified good and featured Wikipedia articles. Two versions exist: WikiText-2 (2 million words from hundreds of articles) and WikiText-103 (100 million words from 28,000 articles).
- BookCorpus. A few-GB dataset of long-form, content-rich, high-quality book texts. Useful for learning coherent storytelling and long-range dependencies. However, it has known copyright issues and social biases.
- The Pile. An 825GB curated dataset from multiple sources, including BookCorpus. It mixes different text genres (books, articles, source code, and academic papers), providing broad topical coverage designed for multidisciplinary reasoning. However, this diversity results in variable quality, duplicate content, and inconsistent writing styles.

Getting the Datasets

You can search for these datasets online and download them as compressed files. However, you’ll need to understand each dataset’s format and write custom code to read them.

Alternatively, search for datasets in the Hugging Face repository at https://huggingface.co/datasets. This repository provides a Python library that lets you download and read datasets in real time using a standardized format.
Hugging Face Datasets Repository

Let’s download the WikiText-2 dataset from Hugging Face, one of the smallest datasets suitable for building a language model:

```python
import random
from datasets import load_dataset

# Load the training split; without split="train", load_dataset() returns
# a dictionary of splits that cannot be indexed by row number
dataset = load_dataset("wikitext", "wikitext-2-raw-v1", split="train")
print(f"Size of the dataset: {len(dataset)}")

# print a few samples
n = 5
while n > 0:
    idx = random.randint(0, len(dataset)-1)
    text = dataset[idx]["text"].strip()
    # skip empty lines and section headings, which start with "="
    if text and not text.startswith("="):
        print(f"{idx}: {text}")
        n -= 1
```

The output may look like this:

```
Size of the dataset: 36718
31776: The Missouri 's headwaters above Three Forks extend much farther upstream than …
29504: Regional variants of the word Allah occur in both pagan and Christian pre @-@ …
19866: Pokiri ( English : Rogue ) is a 2006 Indian Telugu @-@ language action film , …
27397: The first flour mill in Minnesota was built in 1823 at Fort Snelling as a …
10523: The music industry took note of Carey 's success . She won two awards at the …
```

If you haven’t already, install the Hugging Face datasets library with pip install datasets.

When you run this code for the first time, load_dataset() downloads the dataset to your local machine. Ensure you have enough disk space, especially for large datasets. By default, datasets are downloaded to ~/.cache/huggingface/datasets.

All Hugging Face datasets follow a standard format. The dataset object is an iterable, with each item as a dictionary. For language model training, datasets typically contain text strings. In this dataset, text is stored under the “text” key.

The code above samples a few elements from the dataset. You’ll see plain text strings of varying lengths.

Post-Processing the Datasets

Before training a language model, you may want to post-process the dataset to clean the data. This includes reformatting text (clipping long strings, replacing multiple spaces with single spaces), removing non-language content (HTML tags, symbols), and removing unwanted characters (extra spaces around punctuation). The specific processing depends on the dataset and how you want to present text to the model. For example, if
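The cleaning steps listed in this section can be sketched with the standard library alone. The snippet below is only an illustration under stated assumptions: the clean_text name, the max_chars limit, and the specific regular expressions are hypothetical choices, and real pipelines usually need rules tuned to the dataset at hand.

```python
import re

def clean_text(text: str, max_chars: int = 200) -> str:
    # Drop HTML tags such as <p> or <br/>
    text = re.sub(r"<[^>]+>", " ", text)
    # Replace runs of whitespace with a single space
    text = re.sub(r"\s+", " ", text).strip()
    # Rejoin WikiText-style hyphens written as " @-@ "
    text = text.replace(" @-@ ", "-")
    # Remove the extra space before punctuation: "film , and" -> "film, and"
    text = re.sub(r"\s+([,.;:!?])", r"\1", text)
    # Clip long strings to a fixed number of characters
    return text[:max_chars]

sample = "<p>Pokiri is a 2006  Indian Telugu @-@ language action film , released in April .</p>"
cleaned = clean_text(sample)
print(cleaned)
```

Each rule is deliberately simple. The punctuation rule, for instance, would also change strings where the space is intentional, so it is worth checking a sample of the output before applying such a function to a whole dataset.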

VIRAL: Apple’s Limited-Edition ‘iPhone Pocket’ Accessory Brutally Mocked Over Rs 20,400 Price Tag

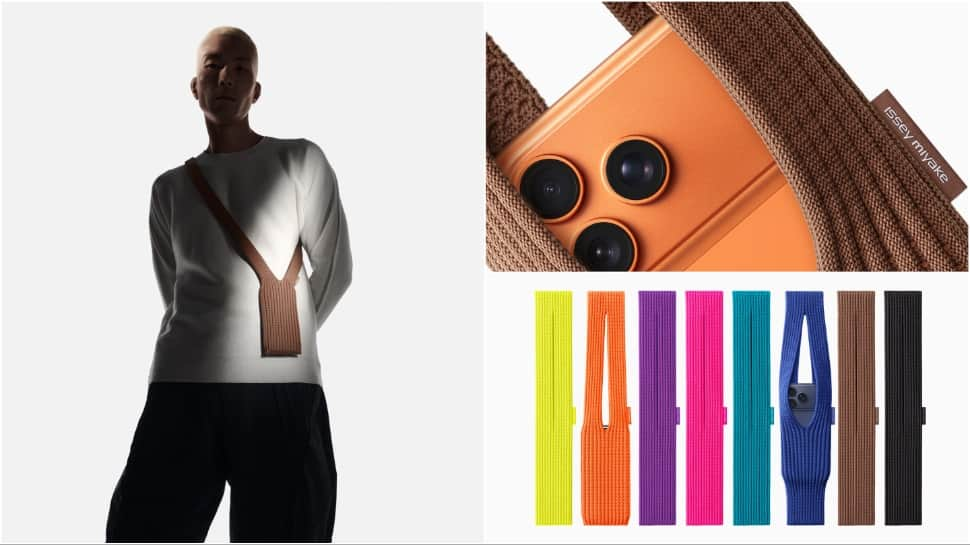

Apple recently announced the “iPhone Pocket,” a limited-edition accessory developed in cooperation with ISSEY MIYAKE, which quickly set off a storm of social media ridicule over its high price and unusual design. The accessory, described by the company as a “singular 3D-knitted construction,” comes in two variants priced between around Rs 13,310 and Rs 20,400. Many social media users compared the pricey accessory to a plain, cut-up sock or, at best, a fabric crossbody bag.

Social Media Backlash/Memes

The prices, varying from Rs 13,310 for a short strap to Rs 20,400 for a long strap, were the source of much ridicule online. One user exclaimed, “The iPhone pocket everybody. Rs 20,400 for a cut-up sock. Apple people will pay anything for anything as long as it’s Apple.” Another user shared a picture and wrote, “This sock or ‘iPhone Pocket’ is for Rs 20,400,” while another user commented wryly on the price: “iPhone Pocket: Rs 13,310. Your gym sock: Rs 12.”

Marques Brownlee (@MKBHD) posted on November 11, 2025: “TWO hundred and thirty dollars. This feels like a litmus test for people who will buy/defend anything Apple releases.”

The reaction was everywhere, with many memes popping up; many featured pictures of the iPhone Pocket photoshopped to include googly eyes.

Design And Pricing Details

The iPhone Pocket, in collaboration with ISSEY MIYAKE, takes its inspiration from “the concept of a piece of cloth” and is crafted in Japan.

- Construction: The accessory is described as a “singular 3D-knitted construction designed to fit any iPhone.”
- Versions and Pricing:
  - Short Strap: Costs Rs 13,310 (approx.) and comes in eight colours, including lemon, mandarin, purple, and black.
  - Long Strap: Costs Rs 20,400 and is available in three colours: sapphire, cinnamon, and black.
- Design Philosophy: Yoshiyuki Miyamae, design director of MIYAKE DESIGN STUDIO, described the concept: “iPhone Pocket explores the concept of ‘the joy of wearing iPhone in your own way.’ The simplicity of its design echoes what we practice at ISSEY MIYAKE: the idea of leaving things less defined to allow for possibilities and personal interpretation.”

Availability

The special-edition iPhone Pocket will be available to purchase from Friday, November 14, in select Apple Store locations in the USA, and globally on apple.com in France, Greater China, Italy, Japan, Singapore, South Korea, the UK, and the US.

The Complete Guide to Model Context Protocol

In this article, you will learn what the Model Context Protocol (MCP) is, why it exists, and how it standardizes connecting language models to external data and tools.

Topics we will cover include:

- The integration problem MCP is designed to solve.
- MCP’s client–server architecture and communication model.
- The core primitives (resources, prompts, and tools) and how they work together.

Let’s not waste any more time.

Image by Editor

Introducing Model Context Protocol

Language models can generate text and reason impressively, yet they remain isolated by default. Out of the box, they can’t access your files, query databases, or call APIs without additional integration work. Each new data source means more custom code, more maintenance burden, and more fragmentation.

Model Context Protocol (MCP) solves this by providing an open-source standard for connecting language models to external systems. Instead of building one-off integrations for every data source, MCP provides a shared protocol that lets models communicate with tools, APIs, and data.

This article takes a closer look at what MCP is, why it matters, and how it changes the way we connect language models to real-world systems. Here’s what we’ll cover:

- The core problem MCP is designed to solve
- An overview of MCP’s architecture
- The three core primitives: tools, prompts, and resources
- How the protocol flow works in practice
- When to use MCP (and when not to)

By the end, you’ll have a solid understanding of how MCP fits into the modern AI stack and how to decide if it’s right for your projects.

The Problem That Model Context Protocol Solves

Before MCP, integrating AI into enterprise systems was messy and inefficient, because tying language models to real systems quickly ran into a scalability problem. Each new model and each new data source needed custom integration code (connectors, adapters, and API bridges) that didn’t generalize.
If you have M models and N data sources, you end up maintaining M × N unique integrations. Every new model or data source multiplies the complexity, adding more maintenance overhead.

MCP solves this by introducing a shared standard for communication between models and external resources. Instead of each model integrating directly with every data source, both models and resources speak a common protocol. This turns an M × N problem into an M + N one. Each model implements MCP once, each resource implements MCP once, and everything can interoperate smoothly. For example, 5 models and 20 data sources would need 100 point-to-point integrations, but only 25 MCP implementations.

From M × N integrations to M + N with MCP (image by author)

In short, MCP decouples language models from the specifics of external integrations. In doing so, it enables scalable, maintainable, and reusable connections that link AI systems to real-world data and functionality.

Understanding MCP’s Architecture

MCP implements a client-server architecture with specific terminology that’s important to understand.

The Three Key Components

- MCP Hosts are applications that want to use MCP capabilities. These are typically LLM applications like Claude Desktop, IDEs with AI features, or custom applications you’ve built. Hosts contain or interface with language models and initiate connections to MCP servers.
- MCP Clients are the protocol clients created and managed by the host application. When a host wants to connect to an MCP server, it creates a client instance to handle that specific connection. A single host application can maintain multiple clients, each connecting to a different server. The client handles the protocol-level communication, managing requests and responses according to the MCP specification.
- MCP Servers expose specific capabilities to clients: database access, filesystem operations, API integrations, or computational tools. Servers implement the server side of the protocol, responding to client requests and providing resources, tools, and prompts.
MCP Architecture
Image by Author

This architecture provides a clean separation of concerns:
- Hosts focus on orchestrating AI workflows without concerning themselves with data source specifics
- Servers expose capabilities without knowing how models will use them
- The protocol handles communication details transparently

A single host can connect to multiple servers simultaneously through separate clients. For example, an AI assistant might maintain connections to filesystem, database, GitHub, and Slack servers concurrently. The host presents the model with a unified capability set, abstracting away whether data comes from local files or remote APIs.

Communication Protocol

MCP uses JSON-RPC 2.0 for message exchange. This lightweight remote procedure call protocol provides a structured request/response format and is simple to inspect and debug.

MCP supports two transport mechanisms:
- stdio (Standard Input/Output): For local server processes running on the same machine. The host spawns the server process and communicates through its standard streams.
- HTTP: For networked communication. Uses HTTP POST for requests and, optionally, Server-Sent Events for streaming.

This flexibility lets MCP servers run locally or remotely while keeping communication consistent.

The Three Core Primitives

MCP relies on three core primitives that servers expose. They provide enough structure to enable complex interactions without limiting flexibility.

Resources

Resources represent any data a model can read. This includes file contents, database records, API responses, live sensor data, or cached computations. Each resource uses a URI scheme, which makes it easy to identify and access different types of data.

Here are some examples:
- Filesystem: file:///home/user/projects/api/README.md
- Database: postgres://localhost/customers/table/users
- Weather API: weather://current/san-francisco

The URI scheme identifies the resource type. The rest of the path points to the specific data.
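To make the JSON-RPC framing and the URI convention concrete, here is a minimal sketch using only the Python standard library. The helper names `resource_scheme` and `build_read_request` are hypothetical, and the request `id` is an arbitrary value chosen for the example. The `resources/read` method name and its `uri` parameter reflect the MCP specification as I understand it, so check the current spec before relying on the exact message shape.

```python
import json
from urllib.parse import urlsplit


def resource_scheme(uri: str) -> str:
    """Return the URI scheme, which identifies the resource type."""
    return urlsplit(uri).scheme


def build_read_request(request_id: int, uri: str) -> str:
    """Serialize a JSON-RPC 2.0 request asking a server to read one resource."""
    message = {
        "jsonrpc": "2.0",            # required version marker in JSON-RPC 2.0
        "id": request_id,            # lets the caller match the response
        "method": "resources/read",  # assumed MCP method name (see lead-in)
        "params": {"uri": uri},
    }
    return json.dumps(message)


example_uris = [
    "file:///home/user/projects/api/README.md",
    "postgres://localhost/customers/table/users",
    "weather://current/san-francisco",
]

for uri in example_uris:
    print(resource_scheme(uri))
# file
# postgres
# weather

print(build_read_request(1, example_uris[2]))
```

Because the message is plain JSON, the same payload could travel over either transport; only the framing around it differs.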
Resources can be static, such as files with fixed URIs, or dynamic, like the latest entries in a continuously updating log. Servers list available resources through the resources/list endpoint, and hosts retrieve them via resources/read.

Each resource includes metadata, such as MIME type, which helps hosts handle content correctly (text/markdown is processed differently than application/json), and descriptions provide context that helps both users and models understand the resource.

Prompts

Prompts provide reusable templates for common tasks. They encode expert knowledge and simplify complex instructions. For example, a database MCP server can offer prompts like analyze-schema, debug-slow-query, or generate-migration. Each prompt includes the context necessary for the task.

Prompts accept arguments. An analyze-table prompt can take a table name and include schema details, indexes, foreign key relationships, and recent

Oppo Reno 15 Series Set To Launch In China On Nov 17: Expected Models, Specs, Features | Technology News

Oppo Reno 15 Series: Oppo has officially announced the launch date of its upcoming Reno 15 smartphone series in China. The lineup, which will include the Reno 15, Reno 15 Pro, and a new Reno 15 Mini, is set to debut on November 17 at 7pm local time (4:30pm IST). The launch will coincide with the brand’s Double Eleven (11.11) shopping festival celebrations in the country.

Three Models in Lineup

The Reno 15 series will include three models: the standard Reno 15, the Reno 15 Pro, and the smaller Reno 15 Mini. Oppo has already listed the Reno 15 and Reno 15 Pro on its official e-shop, and pre-orders are currently open ahead of the launch event.

Colour Options and Storage Variants

According to the official listing, the Oppo Reno 15 will come in three colour options: Starlight Bow, Aurora Blue, and Canele Brown. It will be available in five RAM and storage options:
- 12GB + 256GB
- 12GB + 512GB
- 16GB + 256GB
- 16GB + 512GB
- 16GB + 1TB

The Oppo Reno 15 Pro, on the other hand, will be offered in Starlight Bow, Canele Brown, and Honey Gold colour options. This model will have four RAM and storage options:
- 12GB + 256GB
- 12GB + 512GB
- 16GB + 512GB
- 16GB + 1TB

Expected Display Sizes

According to reports, the Reno 15 Pro will feature a 6.78-inch 1.5K flat display, while the compact Reno 15 Mini could come with a 6.32-inch 1.5K screen. The standard Reno 15 is expected to sit between the two, with a 6.59-inch display.

Camera Specifications

The Reno 15 Pro and Reno 15 Mini are rumoured to feature triple rear camera setups. Both models may include a 200-megapixel Samsung ISOCELL HP5 primary sensor, a 50-megapixel ultrawide camera, and a 50-megapixel periscope lens. On the front, all models are expected to sport 50-megapixel selfie cameras for high-quality front photography.
The Oppo Reno 15 series launch event will take place on November 17, and the devices are already listed for pre-order in China.

Apple iOS 26.1 Is Here: Major Fixes, New Features And More – Check What’s News | Technology News

Apple iOS 26.1 Details: Apple has rolled out iOS 26.1, the first major update since the launch of iOS 26 in September. The new update focuses on enhancing the overall experience with small yet meaningful upgrades, design improvements, and more intuitive controls. It’s now available for all iOS 26-compatible iPhones and aims to address several issues users have pointed out, particularly around the Liquid Glass interface and the Lock Screen camera shortcut.

Major New Features And Fixes

One of the biggest highlights is the new Liquid Glass Transparency Toggle. There’s a new option under Display and Brightness settings to switch between “Clear” and “Tinted” modes. The tinted option adds more opacity and contrast, making menus and buttons easier to read. It’s a much-needed fix, as many users complained about poor visibility in iOS 26.

Another useful change is the ability to turn off the Lock Screen camera gesture. This feature stops the camera from accidentally opening when the phone is in your pocket or bag. You can disable it in the Camera settings without turning off the camera entirely. Apple has also added a “Slide to Stop” gesture for alarms and timers.

The update adds more language support for Apple Intelligence, which now understands Danish, Dutch, Turkish and Vietnamese. AirPods Live Translation also supports new languages like Japanese, Korean, and Chinese, making conversations smoother for AirPods Pro 2, Pro 3 and AirPods 4 users.

Apple Music gets new gesture-based controls. You can now swipe left or right on the mini-player to skip tracks. The new AutoMix feature also works with AirPlay, allowing seamless transitions even on external speakers.

Visually, the interface looks neater. The Settings app and Home Screen folders now have left-aligned headers, and the Phone keypad uses the Liquid Glass effect for a modern look.
Safari gets a slightly wider tab bar, and the Photos app offers a redesigned video slider and editing tools. In terms of security, Apple has replaced the Rapid Security Response feature with a new automatic background security update toggle. This allows the devices to receive security patches without requiring a full system update.

Families Sue OpenAI Over Alleged Suicides, Psychological Harm Linked To ChatGPT: Report | Technology News

ChatGPT maker OpenAI is facing several new lawsuits from families who say the company released its GPT-4o model too early. They claim the model may have contributed to suicides and mental health problems, according to reports. OpenAI, based in the US, launched GPT-4o in May 2024, making it the default model for all users. In August, it introduced GPT-5 as its next version. According to TechCrunch, the model reportedly had issues with being “too agreeable” or “overly supportive,” even when users expressed harmful thoughts. The report said that four lawsuits blame ChatGPT for its alleged role in family members’ suicides, while three others claim the chatbot encouraged harmful delusions that led some people to require psychiatric treatment. According to the report, the lawsuits also claim that OpenAI rushed safety testing to beat Google’s Gemini to market. OpenAI has yet to comment on the report. Recent legal filings allege that ChatGPT can encourage suicidal people to act on their plans and inspire dangerous delusions. “OpenAI recently released data stating that over one million people talk to ChatGPT about suicide weekly,” the report mentioned. In a recent blog post, OpenAI said it worked with more than 170 mental health experts to help ChatGPT more reliably recognize signs of distress, respond with care, and guide people toward real-world support, reducing responses that fall short of its desired behavior by 65–80 percent. “We believe ChatGPT can provide a supportive space for people to process what they’re feeling and guide them to reach out to friends, family, or a mental health professional when appropriate,” it noted.
“Going forward, in addition to our longstanding baseline safety metrics for suicide and self-harm, we are adding emotional reliance and non-suicidal mental health emergencies to our standard set of baseline safety testing for future model releases,” OpenAI added. (With inputs of IANS).